Deep Learning Meets 3D Modeling

Machine learning and AI introduce predictive behavior in design software.

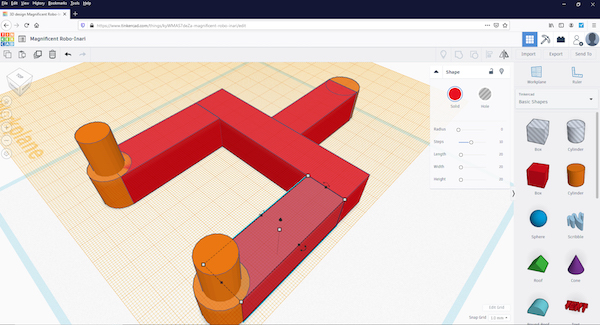

Autodesk studies TinkerCAD users’ modeling behavior using machine learning to develop predictive UI. Image courtesy of Autodesk.

January 10, 2020

The application of machine learning, including its deep learning subset, to computer-aided design (CAD) is gradually becoming commonplace. Almost all mainstream CAD programs, Autodesk Fusion and SOLIDWORKS among them, include some form of generative design or topology optimization tools. These tools let the software employ AI-like algorithms to identify the best shapes for the designer’s stated purpose, whether that is to reduce weight or to withstand the anticipated stress (usually it’s a combination of both).

But what’s far more subtle is the way machine learning is transforming the behavior of the CAD software itself.

Predictive Email to Predictive CAD

On May 16, 2018, in a blog post explaining how Google uses neural networks to speed up Gmail users’ email composition, Yonghui Wu, Principal Engineer of the Google Brain Team, wrote, “Smart Compose is a new feature in Gmail that uses machine learning to interactively offer sentence completion suggestions as you type, allowing you to draft emails faster. Building upon technology developed for Smart Reply, Smart Compose offers a new way to help you compose messages—whether you are responding to an incoming email or drafting a new one from scratch.”

Similar types of R&D efforts are ongoing in the CAD sector as well. In a 2019 talk titled “The Future of Design Powered by AI,” Mike Haley, Head of Machine Intelligence, Autodesk Research, revealed, “TinkerCAD [has] millions of users. People use it on the web. We’ve been mining what’s going on in there … What we did is, we started learning what kinds of models people had been creating with it, and how they created those models. After a while, the AI learns to predict what you are going to do. It looks at the steps; it knows what you have gone through; and it’s able to analyze and says, ‘I think you’re making one of these objects.’ And it understands the steps you need to take to get to that shape.”

TinkerCAD is a browser-based modeler from Autodesk targeting hobbyists, without the sophisticated surfacing and parametric features found in professional-class Autodesk products such as Autodesk Inventor or AutoCAD. But the way you build objects from primitive geometric shapes, or the way you adjust the width and height of objects in TinkerCAD, is similar to the operations in classic parametric CAD. Therefore, it’s conceivable to employ machine learning in the same fashion to develop a next-generation parametric CAD program that can, for example, predict what steps you might take after watching your first two or three modeling operations. That would lead to more dynamic, less cluttered CAD UIs: the type of UI that presents only the tools you are likely to need for the next step and hides the rest.
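To make the idea of predicting the next step from the first two or three operations concrete, here is a minimal sketch in Python. It is not Autodesk's method: it swaps the neural network for a plain frequency count over recorded sessions, and the operation names (`box`, `cylinder`, `hole`, and so on) and the sample sessions are hypothetical, invented purely for illustration.

```python
from collections import Counter, defaultdict


def train(sessions, context_len=2):
    """Count which operation follows each run of `context_len` operations."""
    counts = defaultdict(Counter)
    for ops in sessions:
        for i in range(context_len, len(ops)):
            context = tuple(ops[i - context_len:i])
            counts[context][ops[i]] += 1
    return counts


def suggest(counts, recent_ops, k=2, context_len=2):
    """Return up to k operations that most often followed the recent context."""
    context = tuple(recent_ops[-context_len:])
    # .get avoids adding an empty entry for a context never seen in training
    followers = counts.get(context, Counter())
    return [op for op, _ in followers.most_common(k)]


# Hypothetical modeling sessions (illustrative data, not real usage logs)
sessions = [
    ["box", "cylinder", "hole", "group"],
    ["box", "cylinder", "hole", "group"],
    ["box", "cylinder", "align", "group"],
    ["sphere", "box", "scale"],
]

model = train(sessions)
print(suggest(model, ["box", "cylinder"]))  # ['hole', 'align']
```

A UI built on such a predictor could surface only the suggested tools and tuck the rest away. A production system would presumably need a far richer model of context than the last two operations.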

A similar initiative at Siemens Digital Industries Software, makers of the NX CAD-CAM-CAE suite and Solid Edge CAD program, is also reshaping the CAD experience. In February 2019, the company revealed what it calls Adaptive UI for NX software users.

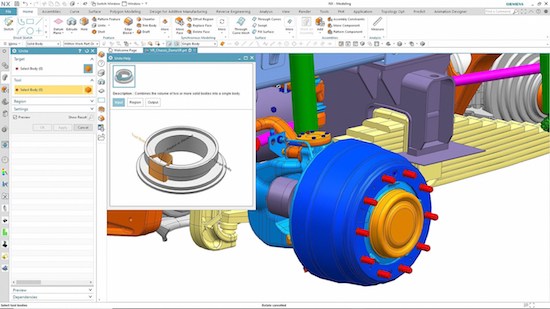

Siemens Digital Industries Software introduced Adaptive UI, which can predict user commands. Image courtesy of Siemens.

The outcome of machine learning and AI, “the adaptive UI will predict the commands that you will most likely want to use based upon the context of what you’re doing at the moment. It will put these recommended commands in a clean and compact panel to speed you through your workflow. The panel will reflect differently based upon each users unique style of usage in NX,” writes Siemens PLM.

“We can predict from previous behavior what the next set of behavior will be. We’re not relying on the customer to do the customization—we are learning and dynamically adjusting the UI on the fly,” said Paul Brown, Senior Product Marketing Director at Siemens PLM Software, in an interview with DE.

AI-Accelerated Raytracing

As hardware got better at harnessing the GPU’s processing power, CAD users’ attitude toward rendering changed with it. Once an infrequent operation for CAD users, rendering is now a push-button option in many standard CAD packages. Whereas rendering in the past meant waiting anywhere from a few minutes to several hours for the results, today the result is almost instantaneous.

The latest generation of GPUs, however, pushes the limits even further. With workstations equipped with NVIDIA RTX GPUs, you can work in real-time raytracing—a mode of working previously unthinkable due to the computing burden it entails.

When the first crop of NVIDIA RTX GPUs based on the Turing architecture was introduced at the NVIDIA GPU Technology Conference (GTC), Kim Libreri, CTO of Epic Games, said, “The availability of these technologies is making real-time ray tracing a reality. By making such powerful features available in Unreal Engine 4, we are shaping the next generation of game and movie graphics.”

“With Quadro RTX-accelerated ray tracing and AI denoising, [users] can interactively ray trace in the application viewport, transforming the creative design workflow,” writes NVIDIA. Incorporated into the NVIDIA OptiX raytracing engine, the AI-based denoising uses “GPU-accelerated artificial intelligence to dramatically reduce the time to render a high-fidelity image that is visually noiseless,” according to the company.

With a dramatic reduction in the computing cost of real-time raytracing, CAD users may consider working in raytraced mode most of the time rather than treating it as a precious one-time event for the final stage of design.

CAD applications supporting RTX-style denoising include SOLIDWORKS Visualize, the rendering application inside SOLIDWORKS CAD, and Luxion KeyShot 9, a CAD-friendly standalone rendering program.
