FLUENT Helps Determine Scalability on Multicore Clusters

InfiniBand interconnect helps sustain efficiency in cluster computing.

Latest News

November 1, 2008

By Gilad Shainer, Swati Kher, and Prasad Alavilli

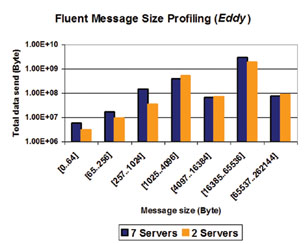

Figure 1: Eddy_417k benchmark results - InfiniBand versus GigE |

Increasing demand for computing power in scientific and engineering applications has spurred deployment of high-performance computing (HPC) clusters. As more engineers run CFD applications on HPC clusters to model sophisticated analysis, it becomes clear that these applications need to be highly parallel and scalable to make optimum use of cluster computing ability. Moreover, multicore-based clusters impose higher demands on cluster components, in particular the cluster interconnect. To determine the I/O requirements for achieving high scalability and productivity using cluster components, we decided to explore ANSYS FLUENT CFD software to demonstrate how interconnect hardware helps the efficiency of any cluster size.

Multicore Scalability Analysis

To achieve accurate results, CFD users rely on HPC clusters and InfiniBand-based multicore cluster environments provide such HPC systems for simulations. At the core of any CFD calculation is a computational grid, used to divide the solution domain into thousands or millions of elements where the problem variables are computed and stored.

For the performance and scalability analysis in this article, the newest set of benchmark cases from ANSYS was used:

- Eddy_417k: a reacting flow case with the eddy dissipation model. k-epsilon turbulence and segregated implicit solver are used. The case has around 417,000 hexahedral cells.

- Turbo_500k: single stage turbo machinery flow case using the Spallart-Allmaras turbulence model and the coupled implicit solver. The case has around 500,000 cells of mixed type.

- Aircraft_2m: external flow over an aircraft wing. The case has around 1.8 million hexahedral cells and uses the realizable k-epsilon model and the coupled implicit solver.

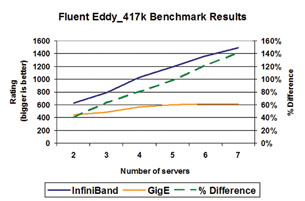

Figure 2: Turbo_500K benchmark results - InfiniBand versus GigE |

The scalability analysis was performed at Mellanox Cluster Center using a Maia cluster. A Maia cluster consists of eight server nodes, each with dual quad-core AMD Barcelona CPUs 2GHz and 16GB DDR2 memory. The servers were connected with gigabit Ethernet and Mellanox ConnectX InfiniBand adapters. Each of the three benchmarks were tested using gigabit Ethernet and then the InfiniBand networks, on cluster configurations from two server nodes to seven server nodes. ANSYS FLUENT software version 6.3 with HP MPI version 2.2.5.1 was used. The InfiniBand drivers were OFED 1.3 RC1 from OpenFabrics and the ConnectX InfiniBand FW version 2.3.0.

Eddy_417k, Turbo_500k, and Aircraft_2m Benchmark Results

Eddy_417k, Turbo_500k, and Aircraft_2m results are shown in Figures 1, 2, and 3. The InfiniBand connected cluster has demonstrated a higher performance (rating) than the GigE-based configuration, with 40 percent higher rating for only 2 server nodes, and up to a 140 percent higher rating with 7 cluster servers for Eddy_417k.

For four server nodes, InfiniBand provides 50 percent higher rating, which translated to 50 percent saving in power when switching to use InfiniBand instead of GigE for the same rating for Turbo_500k.

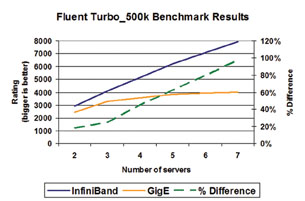

Figure 3: Aircraft_2m benchmark results - InfiniBand versus GigE |

According to the asymptotic curve of GigE rating, it takes only three InfiniBand connected servers to outperform any cluster size connected with GigE. For Aircraft_2m, GigE scalability is limited to four to five server nodes and is clearly the limiting factor for HPC clusters.

Furthermore, the benchmark results validate the massive increase of I/O load in the multicore platforms. In the past, single-core server clusters could use GigE for up to 16 to 32 servers before reaching the I/O bottleneck. In the Eddy_417k case, GigE limits the performance scaling from four to five servers and up. Any cluster size beyond four to five servers will show no performance gain.

Profiling of FLUENT Network Activity

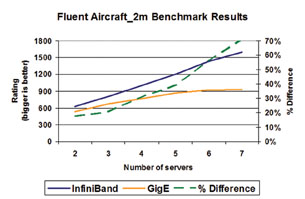

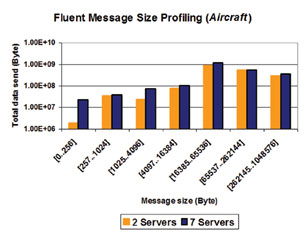

In order to understand the I/O requirements of FLUENT, we have analyzed the network activity during two different runs of Eddy_417k and Aircraft_2m benchmarks, one when testing two server nodes and the second when executing the benchmark in seven server nodes. The results of the total data that was sent for the corresponding MPI message size (or I/O message size) are shown in Figures 4 and 5.

For both benchmarks, as the number of nodes increases from two servers to seven servers, there are more control messages (small size) to synchronize more servers and more large data messages. For Eddy_417k, there are two peaks of I/O throughput, one at 1KB-4KB message size and the second at 16KB-64KB. In the Aircraft_2m case, the peaks of I/O throughput were in the large message size range, one at 16KB-64KB message size and the second at 64KB-256KB, which reflect the need for high bandwidth interconnect solution.

|

For Eddy_417k, higher number of small messages (mainly control type of messages), 2B-64B and 64B-128B in size, was also seen. These network profiling results show that the increase in cluster size and/or the increase in problem size (number of hexahedral cells), result in a higher number of control messages (small size), hence the need for low latency — and large data messages, hence the need for high throughput.

InfiniBand in Multicore Clusters

The way the cluster nodes are connected together has a great influence on the overall application performance, especially when multicore servers are used. The cluster interconnect is very critical to efficiency and scalability of the entire cluster, as it needs to handle the I/O requirements from each of the CPU cores, while not imposing any networking overhead on the same CPUs. In multicore setting, the performance and scalability bottleneck has shifted to the I/O and memory configuration.

In order to guarantee linear application scalability, it is essential to have I/O that provides the same low latency for each process/core, regardless of the number of cores that operate simultaneously. As each of the CPU cores generates and receives data, to and from other server nodes, the bandwidth capability needs to be able to handle all the data streams. Furthermore, the interconnect should handle all the communication and offload the CPU cores from I/O-related tasks in order to maximize the CPU cycles that are dedicated to the applications. The low-bandwidth and high-latency characteristics of GigE make it the performance bottleneck, limiting the overall cluster efficiency and preventing any performance gain beyond four to five servers.

Figure 5: Aircraft_2m network profiling in systems studied in Figure 4. |

InfiniBand Takes Advantage

ANSYS FLUENT CFD software and the new FLUENT benchmark cases were investigated. For all cases, FLUENT demonstrated high parallelism and scalability, enabling it to take full advantage of multicore HPC clusters. InfiniBand shows greater efficiency and scalability with cluster size, providing up to 40 percent higher performance than GigE when only two server nodes are being used, and the performance gap increases dramatically with cluster size. As a result, CFD users are likely to gain savings in cost, power, and maintenance.

We profiled the network activity of FLUENT software to determine the I/O requirements. From those results we have concluded that along with low-CPU overhead, a high-bandwidth and low-latency interconnect is required in order to maintain high scalability and efficiency. We can conclude that higher throughput (such as InfiniBand QDR 40Gbps) and lower latency interconnects will continue to improve FLUENT performance and productivity.

The authors would like to thank FLUENT Parallel/HPC Team at ANSYS Inc. and Scot Schultz at Advanced Micro Devices (AMD) for their support during the creation of this article.

More Info:

ANSYS, Inc.

Canonsburg, PA

Mellanox Technologies

Santa Clara, CA

Gilad Shainer is a senior technical marketing manager at Mellanox Technologies. He has an M.S. and B.S. in electrical engineering from the Technion Institute of Technology in Israel. Swati Kher has an M.S. in computer engineering from NorthCarolina State University and a B.S. in electrical engineering from the University of Texas at Austin. Prasad Alavilli, Ph.D., leads the FLUENT Parallel and High Performance Computing group at ANSYS Inc. You can send an e-mail about this article to [email protected].

Subscribe to our FREE magazine, FREE email newsletters or both!

Latest News

About the Author

DE’s editors contribute news and new product announcements to Digital Engineering.

Press releases may be sent to them via [email protected].