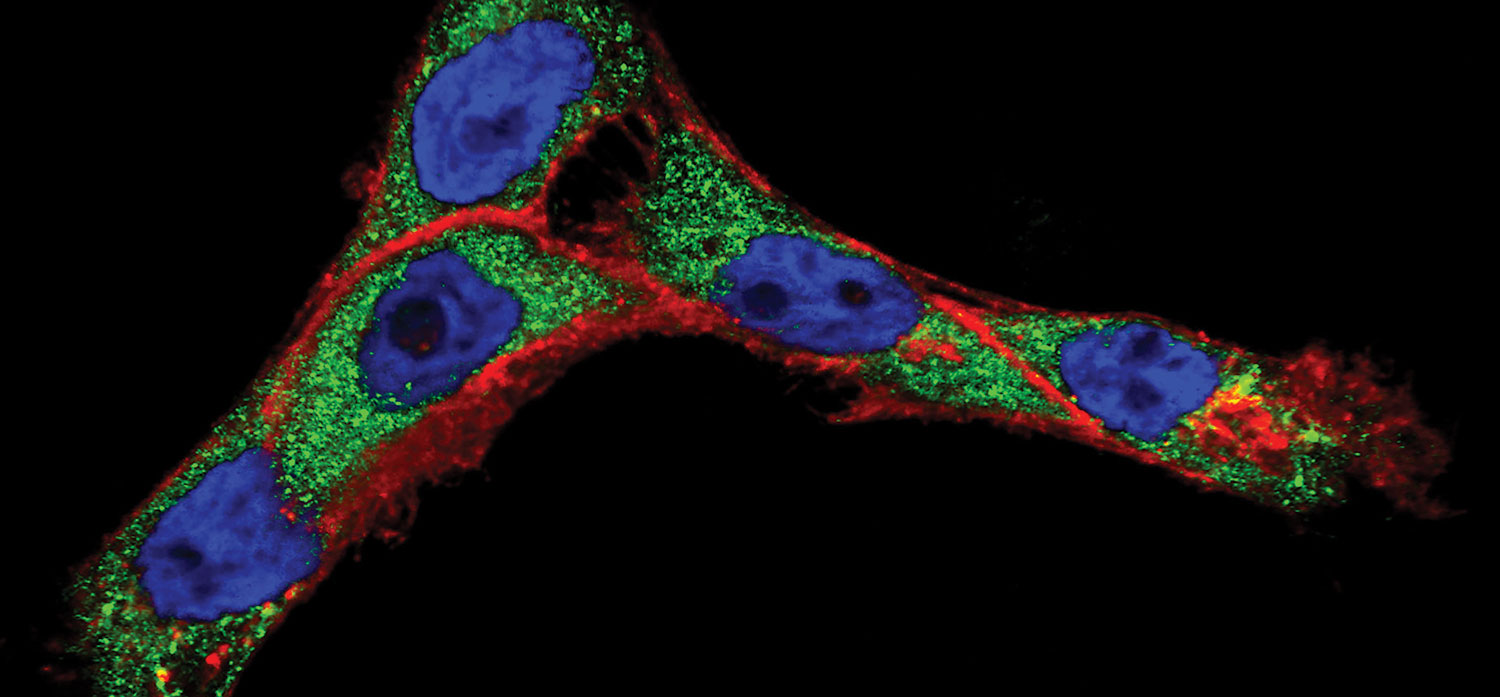

Progress in cancer nanotechnology requires a better understanding of how molecular-targeted nanoparticles interact with live cells. Here, when cancer cells (cell nuclei in blue) were treated with antibody-conjugated nanoparticles, the antibodies (red) and the nanoparticle cores (green) separated into different cellular compartments. Such knowledge may lead to improved methods of cancer detection in vivo as well as better nanoparticle-based treatments. Image by Sangheon Han, Konstantin Sokolov, Tomasz Zal and Anna Zal, Image courtesy of National Cancer Institute / M.D. Anderson Cancer Center.

From Big Data to Bioinformatics

The future of personalized medicine depends on data and the high performance computing resources needed to analyze it.

November 9, 2018

There was a time when healthcare computing was all about collecting data. While the need for data is ongoing, those challenges have largely been addressed. Now, healthcare computing is about computational power and algorithms. Turning healthcare data into actionable information is the job of pattern recognition, data mining, neural networks, machine learning and visualization — and they all need high performance computing (HPC).

“In 10 years, neural networks will represent about 40% of all compute cycles,” says Jeff Kirk, HPC and AI technology strategist for Dell EMC. Computational science has mostly been about simulation, Kirk notes. “Solving theoretical equations forward in time to make predictions, and comparing those simulation outputs to observations to test theories.” But the human body is a “far bigger model than any computer can solve directly,” he says, thus the need for HPC technologies.

The big four technologies healthcare needs are synergistic in their relationship to the data, Kirk notes. Artificial intelligence (AI) is the broader concept of machines being able to carry out tasks in a way we would consider “smart.” If AI is the box, inside we find neural networks, machine learning, and deep learning, entangled like so many cords. A neural network classifies information in a way similar to how the brain works. Neural nets can be taught to recognize images and classify them based on elements in the images. Machine learning enables computers to make decisions without explicit programming, using data to train a model that can then be used to make inferences and predictions and to establish probabilities. Deep learning is a subset of machine learning that uses neural net algorithms for training the model, taking advantage of the massive data already classified.
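As a minimal sketch of the train-then-infer pattern described above, the following Python uses a nearest-centroid classifier on made-up two-feature measurements. The data, labels, and function names are hypothetical and chosen only for illustration; a real project would rely on an established machine learning library and far more data.

```python
from math import dist
from statistics import mean

def train(samples):
    """Learn one centroid per label from (features, label) pairs."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    # The "model" is simply the mean feature vector of each class.
    return {label: tuple(mean(col) for col in zip(*rows))
            for label, rows in by_label.items()}

def predict(model, features):
    """Infer the label whose centroid is closest to the new sample."""
    return min(model, key=lambda label: dist(model[label], features))

# Hypothetical training data: (feature vector, label)
training = [
    ((1.0, 1.2), "benign"),
    ((0.8, 1.0), "benign"),
    ((3.1, 2.9), "suspicious"),
    ((2.9, 3.3), "suspicious"),
]
model = train(training)
print(predict(model, (3.0, 3.0)))
```

No rule for telling the two classes apart is written by hand: the decision comes entirely from the training data, which is the point Kirk makes about machine learning.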

Between the algorithmic action and the wide variety of input from data sources, the future is wide open for HPC in healthcare.

Overcoming Systemic Challenges

Traditionally, healthcare has been slow to use data and analytics due to multiple disconnected systems, poor data quality and behavior on the part of both patients and providers. In a review of the industry, the global business research firm Deloitte notes change has arrived.

“We are now at a tipping point in advanced technology adoption towards an outcomes-based, patient-centric care model,” the company announced in a study titled “The Future Awakens: Life Sciences and Health Care Predictions 2022.” In particular, Deloitte sees six significant changes:

1. The quantified self: “The ‘genome generation’ is more informed and engaged in managing their own health.”

2. Healthcare culture: “Smart healthcare is delivering more cost-effective, patient-centered care.”

3. Industrialization of life sciences: “Advanced cognitive technologies have improved productivity, speed, and compliance.”

4. Data availability: “Data is the new healthcare currency.”

5. Futuristic thinking: “Exponential advances in life-extending and precision therapies are improving outcomes.”

6. Changing roles: “The boundaries between stakeholders have become increasingly blurred.”

Despite progress, there are more than enough challenges to go around. The biggest names in HPC hardware, as well as leading software developers of all sizes, are working out how to handle this new healthcare data tsunami.

For example, a startup in Slovenia believes it is on the right track with an emphasis on decoupling healthcare data from the specifics of device, application, or ecosystem. Iryo.Network is bringing interoperability to healthcare so data can be combined from various sources (clinical, research, wellness) and used for broader purposes. Iryo believes a user-centric personal health data repository, stored on an open blockchain, can be the solution to both access to data and personal rights regarding sensitive data.

“The common layer that Iryo is providing assures medical device manufacturers several data management benefits,” says Iryo.Network CEO Vasja Bocko. “Security, legal compliance, transparency, interoperability and immutability [will make] data future-proof, enabling research based on big data analysis, machine learning, the development of predictive algorithms, and supporting tele-medical solutions.”

The European Union’s PRACE (Partnership for Advanced Computing in Europe) acknowledges the strong need for HPC in healthcare and life sciences to be widely distributed. The data infrastructure of healthcare “needs to deal with high volumes and velocity,” says Steven Newhouse, head of Technical Services, EMBL-EBI (European Bioinformatics Institute, a PRACE-associated agency). “HPC is not just PRACE; capacity is as important, perhaps more important, than capability.” From a resource allocation standpoint, Newhouse says it is important to manage data needs dynamically in a defined space.

HPC specialist Verne Global notes there are “obstacles” to HPC adoption in healthcare and life sciences. To name a few: too many applications are being developed only for the desktop, industry researchers lack the specific IT training or support for HPC operations, and there is a lack of raw computing availability. When it takes between 300 and 500GB of unstructured data to represent one human genome, processing genomes for a study group or the population at large is beyond the scope of many organizations.
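To put the 300 to 500GB figure in perspective, here is a rough back-of-the-envelope calculation in Python. The cohort sizes are hypothetical, the conversion assumes 1TB = 1,000GB, and the estimate covers raw genome data only.

```python
GB_PER_GENOME_LOW = 300   # lower bound quoted above
GB_PER_GENOME_HIGH = 500  # upper bound quoted above

def cohort_storage_tb(genomes):
    """Return (low, high) raw storage in terabytes, using 1 TB = 1,000 GB."""
    return (genomes * GB_PER_GENOME_LOW / 1000,
            genomes * GB_PER_GENOME_HIGH / 1000)

for cohort in (100, 10_000, 1_000_000):  # hypothetical study sizes
    low, high = cohort_storage_tb(cohort)
    print(f"{cohort:>9,} genomes: {low:,.0f} to {high:,.0f} TB")
```

Under these assumptions, a 10,000-person study would need roughly 3,000 to 5,000TB (3 to 5 petabytes) before any analysis output is stored.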

Computing at the Cellular Level

The challenges and obstacles are real, but so are the solutions and recent breakthroughs. Studying tumor tissue has been a common approach to cancer research. Some research groups are now using HPC to take a single-cell approach to studying tumor development. Researchers use mathematical models to simulate cells and then manipulate variables such as hormone levels. By observing the changes in the cell, researchers can model more precisely the specific probabilities of the tumor’s development. This method requires dissection of hundreds of cell mutations, which is impossible without the advanced modeling capabilities of HPC.

Artificial intelligence routines running on HPC are being used for mammogram studies, significantly shortening the diagnosis phase. Other modeling in healthcare allows rapid creation and analysis of pandemic models. Modeling of neural circuits in the brain is another promising research area where data collection methods have created a rich body of data with which to work.

The use of HPC in genome sequencing has made the process 10 million times cheaper than when the technology was first developed. A human genome can now be sequenced for as little as $1,000 in some high-volume laboratories. McKinsey Global Institute predicts the price will drop by another factor of 10 within the next decade.

Deep learning is another area where the combination of HPC and healthcare data is becoming valuable. A group of researchers at Johns Hopkins University developed a novel approach applying deep neural networks (DNNs) to predict pharmacologic properties of drugs. In this study, scientists trained DNNs to predict the therapeutic use of a large number of drugs using gene expression data obtained from high-throughput experiments on human cell lines. The authors computed differential signaling pathway activation scores to reduce the dimensionality of the data, then used those scores to train the deep neural networks.
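The general shape of that workflow (collapse many gene-level values into a few pathway-level scores, then train a classifier on the scores) can be sketched in a few lines of Python. This is an illustration under loud assumptions, not the researchers' method: the gene names, pathway groupings, and expression values are synthetic, a simple per-pathway average stands in for their pathway activation scoring, and a small logistic-regression model stands in for the deep neural network.

```python
import math
from statistics import mean

# Hypothetical pathway definitions: pathway name -> member genes.
PATHWAYS = {"pathway_A": ["g1", "g2", "g3"], "pathway_B": ["g4", "g5", "g6"]}

def pathway_scores(expression):
    """Collapse per-gene values into one score per pathway (6 features -> 2)."""
    return [mean(expression[g] for g in genes) for genes in PATHWAYS.values()]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(features, labels, epochs=500, lr=0.5):
    """Fit a logistic-regression classifier with plain gradient descent."""
    weights, bias = [0.0] * len(features[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias

def predict(model, x):
    weights, bias = model
    return int(sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5)

# Synthetic expression changes for four samples; label 1 = drug class X, 0 = class Y.
samples = [
    ({"g1": 2.0, "g2": 1.8, "g3": 2.2, "g4": -1.0, "g5": -1.2, "g6": -0.8}, 1),
    ({"g1": 1.5, "g2": 2.1, "g3": 1.9, "g4": -0.9, "g5": -1.1, "g6": -1.3}, 1),
    ({"g1": -1.1, "g2": -0.9, "g3": -1.0, "g4": 2.0, "g5": 1.7, "g6": 2.3}, 0),
    ({"g1": -0.8, "g2": -1.2, "g3": -1.3, "g4": 1.9, "g5": 2.2, "g6": 1.6}, 0),
]
X = [pathway_scores(expr) for expr, _ in samples]
y = [label for _, label in samples]
model = train(X, y)
print([predict(model, x) for x in X])
```

Reducing six gene-level inputs to two pathway-level inputs is trivial here, but the same idea matters at scale, where thousands of genes per sample would otherwise swamp a model trained on a limited number of experiments.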

In another deep learning project, scientists at Insilico Medicine, Inc. recently published the first deep-learned biomarker of human age, aiming to predict the health status of the patient.

Hadoop Possibilities

The set of open source utilities for HPC known collectively as Hadoop is designed to simplify the use of large data sets, and no industry has a greater need to process large data sets than healthcare.

Hadoop is an example of a technology that allows healthcare to store data in its native form. If Hadoop didn’t exist, decisions would have to be made about what can be incorporated into the data warehouse or the electronic medical record (and what cannot). Now, everything can be brought into Hadoop, regardless of data format or speed of ingest. If a new data source is found, it can be stored immediately.
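The idea of storing data in its native form and applying structure only when it is read can be illustrated with a small plain-Python analogy. This sketch does not use any Hadoop API: the in-memory list stands in for a distributed file system, and the sample payloads, field names, and function names are all hypothetical.

```python
import csv
import io
import json

raw_store = []  # stand-in for a distributed file system such as HDFS

def ingest(payload, fmt):
    """Store the payload untouched; no schema is enforced at write time."""
    raw_store.append({"format": fmt, "payload": payload})

def read_records():
    """Apply structure only when reading (schema-on-read)."""
    for item in raw_store:
        if item["format"] == "json":
            yield json.loads(item["payload"])
        elif item["format"] == "csv":
            yield from csv.DictReader(io.StringIO(item["payload"]))
        else:
            # Unknown formats are still kept and surfaced as raw text.
            yield {"raw": item["payload"]}

# Hypothetical inputs from three different sources.
ingest('{"patient_id": "p1", "heart_rate": 72}', "json")
ingest("patient_id,steps\np1,8500\np2,4300\n", "csv")
ingest("Free-text clinical note for p2", "text")

records = list(read_records())
print(len(records))
```

Because nothing is rejected at ingest time, a new data source can be stored the moment it appears, and the decision about how to interpret it can be deferred until someone needs to analyze it.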

A “no data left behind” policy based on Hadoop use is crucial. By the end of 2018, the number of health records is likely to reach the tens of billions (because one person can have multiple health records). The computing technology and infrastructure must be able to inexpensively process billions of unstructured data sets with top-notch fault tolerance.

For More Info

Deloitte

EMBL-EBI

Insilico Medicine

Iryo.Network

Johns Hopkins University

McKinsey Global Institute

PRACE

Verne Global

About the Author

Randall S. Newton is principal analyst at Consilia Vektor, covering engineering technology. He has been part of the computer graphics industry in a variety of roles since 1985.
