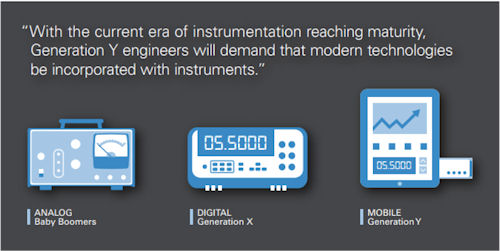

Each generation of engineers has seen a new generation of instrumentation. Baby Boomers (born in the 1940s to 1960s) used cathode-ray oscilloscopes and multimeters with needle displays, now commonly referred to as “analog” instruments. Generation X (born in the 1960s to 1980s) ushered in a new generation of “digital” instruments that used analog-to-digital converters and graphical displays. Generation Y (born in the 1980s to 2000s) is now entering the workforce with a new mindset that will drive the next generation of instrumentation.

Generation Y has grown up in a world surrounded by technology. From computers to the internet and now mobile devices, this technology has evolved at a faster rate than ever before. A recent report from Cisco delved into the nature of Generation Y and their relationship with technology.

With the current era of instrumentation reaching maturity, Generation Y engineers will demand that modern technologies be incorporated into instruments. Instrumentation in the era of Generation Y will incorporate touchscreens, mobile devices, cloud connectivity and predictive intelligence to provide significant advantages over previous generations.

According to Frost & Sullivan, “engineers will increasingly associate the concept of a user interface with the one they use on their consumer electronics devices.” The touchscreen-based user interfaces found in today’s mobile devices provide a drastically different experience from the physical knobs and buttons on today’s instruments, and Generation Y will find those knobs and buttons unsatisfactory.

As instruments have added new features, they’ve also added new knobs and buttons to support them. However, this approach is not scalable. At some point, the number of knobs and buttons becomes inefficient and overwhelming. Some instruments have resorted to multi-layered menu systems and “soft buttons” that correspond to variable actions, but the complexity of these systems has created other usability issues. Most Generation Y engineers would describe today’s instruments as cumbersome.

An instrument that completely ditches physical knobs and buttons, and instead uses a touchscreen as the user interface, could solve these challenges. Rather than presenting all of the controls at once, the touchscreen could simplify the interface by dynamically delivering only the content and controls relevant to the current task. Users could also interact directly with the data on the screen rather than with a disjointed knob or button. They could use gesture-based interactions, such as performing a pinch directly on the oscilloscope graph to change the time/div or volts/div. Touchscreen-based interfaces provide a more efficient and intuitive replacement for physical knobs and buttons.
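As a rough illustration of the pinch gesture described above, the sketch below maps a pinch scale factor to a new time/div setting. It assumes the instrument snaps to the familiar 1-2-5 sequence of scale steps and that the touch framework reports a scale factor greater than 1 when the fingers spread apart. The function names `snap_to_1_2_5` and `time_per_div_after_pinch` are hypothetical and do not come from any particular instrument or toolkit.

```python
import math

# Mantissas of the 1-2-5 step sequence (an assumption of this sketch).
STEPS = (1.0, 2.0, 5.0)


def snap_to_1_2_5(value: float) -> float:
    """Snap a positive value to the nearest 1-2-5 step, measured on a log scale."""
    if value <= 0:
        raise ValueError("value must be positive")
    exponent = math.floor(math.log10(value))
    # Consider steps in the value's own decade and in the next one up.
    candidates = [m * 10.0 ** e for e in (exponent, exponent + 1) for m in STEPS]
    return min(candidates, key=lambda c: abs(math.log10(c) - math.log10(value)))


def time_per_div_after_pinch(current_s_per_div: float, pinch_scale: float) -> float:
    """Return the new time/div after a pinch on the graph.

    pinch_scale > 1 (fingers spreading) zooms in, so each division
    covers less time; pinch_scale < 1 zooms out.
    """
    if pinch_scale <= 0:
        raise ValueError("pinch_scale must be positive")
    return snap_to_1_2_5(current_s_per_div / pinch_scale)
```

Under these assumptions, spreading the fingers to twice their starting distance at 1 ms/div lands on 500 µs/div, and pinching them to half the distance lands on 2 ms/div. The same function could serve volts/div for a vertical pinch.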

By leveraging the hardware resources provided by mobile devices, instruments can take advantage of better components and newer technology.

This approach would look very different from today’s instruments. The processing and user interface would be handled by an app that runs on a mobile device. Since no physical knobs, buttons, or display would be required, the instrument hardware would be reduced to only the measurement and timing systems, resulting in a smaller size and lower cost. Users wouldn’t be limited by tiny built-in displays, small onboard storage and slow operation. They could instead take advantage of large, crisp displays, gigabytes of data storage and multi-core processors. Built-in cameras, microphones and accelerometers could also open up new possibilities, such as capturing a picture of a test setup or recording audio annotations for inclusion with data. Users could even develop custom apps to meet specialized needs.

While it’s entirely possible for traditional instruments to integrate better components, the pace at which this can happen will lag behind that of mobile devices. Consumer electronics have faster innovation cycles and economies of scale, and instruments that leverage them will always have better technology and lower costs.

Engineers commonly transfer data between their instruments and computers with USB thumb drives or with software for downloading data over an Ethernet or USB cable. While this process is fairly trivial, Generation Y has come to expect instantaneous access to data with cloud technologies. Services like Dropbox and iCloud store documents in the cloud and automatically synchronize them across multiple devices. Combined with Wi-Fi and cellular networks that keep users continuously connected, these services let users access and edit their documents from anywhere at any time. In addition to storing files in the cloud, some services host full applications there. With services like Google Drive, users can collaborate remotely and edit the same document simultaneously.

Instrumentation that incorporates network and cloud connectivity could provide engineers these same benefits. Both the data and user interface could be accessed by multiple engineers from anywhere in the world. When debugging an issue with a colleague who is off site, rather than only sharing a static screenshot, engineers could interact with the instrument in real time to better understand the issue. Cloud technologies could greatly improve an engineering team’s efficiency and productivity.

Context-aware computing is beginning to emerge and could fundamentally change how we interact with devices. This technology uses situational and environmental information to anticipate users’ needs and deliver situation-aware content, features, and experiences. A popular example of this is Siri, a feature in recent Apple iOS devices. Users speak commands or ask questions to Siri, and it responds by performing actions or giving recommendations. Google Now provides similar functionality to Siri, but also passively delivers information that it thinks the user will want based on geolocation and search data: weather information and traffic recommendations appear in the mornings; meeting reminders are displayed with estimated time to arrive at the location; and flight information and boarding passes are surfaced automatically.

Similar intelligence, when combined with instrumentation, could be game-changing. A common challenge engineers face is attempting to make configuration changes to an instrument while their hands are tied up with probes. Voice control could provide not only hands-free interaction but also easier access to features. In addition, predictive intelligence could be used to highlight relevant or interesting data. An oscilloscope could automatically zoom and configure itself based on an interesting part of a signal, or it could add relevant measurements based on signal shape. An instrument that leverages mobile devices could integrate and take advantage of context-aware computing as the technology is developed.
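To make the idea of automatically zooming to an interesting part of a signal concrete, here is a deliberately simple sketch. It assumes that “interesting” means the steepest change between neighbouring samples, which is only one of many possible heuristics, and the function name `auto_zoom_window` is hypothetical. A real instrument would likely use far richer signal analysis.

```python
def auto_zoom_window(samples, width):
    """Return (start, stop) sample indices of a window to zoom into.

    The window is `width` samples wide and centred, as far as the record
    allows, on the largest sample-to-sample change. That change is a crude
    stand-in for "interesting" in this sketch.
    """
    if width <= 0:
        raise ValueError("width must be positive")
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    width = min(width, len(samples))
    # Index of the sample that follows the steepest step.
    steepest = max(
        range(1, len(samples)),
        key=lambda i: abs(samples[i] - samples[i - 1]),
    )
    # Centre the window on that step, clamped to the ends of the record.
    start = max(0, min(steepest - width // 2, len(samples) - width))
    return start, start + width
```

For a record of 50 samples at 0 followed by 50 samples at 1, asking for a 10-sample window returns `(45, 55)`, which frames the rising edge. The display could then set its time/div and horizontal offset to show just that span.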

Technology in consumer electronic devices is evolving rapidly and influencing the expectations of Generation Y. As more and more Generation Y engineers enter the workforce, it is only a matter of time before they apply those expectations to the instrumentation they use in their jobs. Not only will this evolving technology provide significant benefits to instrumentation, but the technically savvy Generation Y engineer will also leverage it to solve engineering challenges faster than previous generations ever thought possible.
