Integrating Legacy Data: A Perennial PLM Pain Point

Support for multi-CAD was among the many reasons Gentherm upgraded its 15-year-old PLM system to PTC Windchill despite the difficulty of migrating legacy data. Image courtesy of Gentherm.


January 2, 2018

Last January, Marinko Lazanja, director of engineering systems at Gentherm, pulled the trigger on the first of many dress rehearsals for an important milestone for the manufacturer of climate control and thermal management systems: migrating from a 15-year-old legacy product lifecycle management (PLM) platform to PTC’s Windchill.

The $1 billion Gentherm, a key player in the automotive supply chain, had long outgrown its MatrixOne system. However, because the platform was ground zero for critical product and engineering-related material (nearly 40,000 documents comprising roughly 2 to 3 terabytes of data) and because its functionality had mostly held up over the years, Gentherm kept postponing an upgrade to modern-day PLM. That is, until the company’s rapid growth (nearly doubling in size over the last five years) and the complexity of its product portfolio made further postponement unsustainable.

“Our old system served its job well, but it couldn’t support our current processes—it lacked support for a multi-CAD environment and it didn’t adequately enable reuse,” Lazanja explains. “We couldn’t keep using old tools. It was becoming more work to use the tool than to look for something else.”

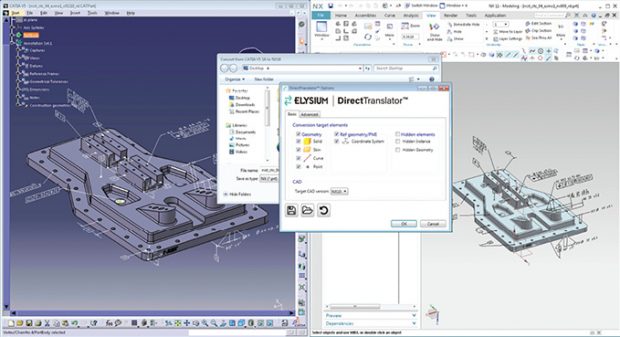

Elysium DirectTranslator ensures 3D data exchange through all current and many legacy CAD systems. Image courtesy of Elysium.

Gentherm, like hundreds of early adopters of PLM, kept its first-generation PLM platform active far beyond its shelf life because of the cost and complexity associated with replacing a core system on an enterprise scale. “Lots of customers are stuck with 15- to 20-year-old PLM technology and they can’t upgrade,” says Kevin Power, business development manager for Tata Technologies. “They look at the data migration mountain and the huge effort to move into a new system and there’s not enough pain (tolerance) to go through with that.”

In addition to expensive software, protracted implementation services and the general upheaval to engineering culture and broader core business processes, one of the biggest inhibitors to moving between PLM platforms is the laborious task of migrating legacy data to the new system. The process hasn’t gotten much easier despite significant technology advances in current-day PLM, in part because of the sheer size and scope of the data now managed by the platform. In addition, many first-generation PLM deployments are highly customized, which makes it difficult to translate data cleanly between systems.

“The tools are getting better at the same time the problem is getting harder and more complex,” says Tom Makoski, executive vice president, PLM & Migration for ITI Global, a consultancy specializing in product data interoperability problems. “There’s been an improvement in import and export capabilities, but there’s now much more complexity to the data going into these systems and much larger amounts of data migrating.”

PLM Data Migration Best Practices

Gentherm’s migration plan involves shifting 15 years’ worth of product-related data out of its long-time MatrixOne PLM platform and over to PTC Windchill. Rather than taking a big-bang approach, the company has staged its migration over the course of two years: initially moving its quality management and documentation systems, implementing ProjectLink for project management in September, conducting a range of migration dress rehearsals and data verification tests, and targeting a full migration and retirement of MatrixOne by 2018.


“We’re building it out phase by phase—Windchill is a powerful tool, but there’s a lot of cultural change that comes with it and we’ve been using [MatrixOne] for many years,” Lazanja says. “This is something you have to plan carefully.”

One of the first steps in migration planning is to understand your data and determine exactly what data, and how much of it, to move to a new PLM system, according to Annalise Suzuki, director of technology and engagement for Elysium, a provider of multi-CAD interoperability tools. In the case of CAD data and 3D models, much of the complexity can be removed from the equation because it’s not always necessary to transfer 100% of existing data, depending on how that data is expected to be used, she explains.

“The percentage of legacy data expected to undergo engineering changes, which truly require feature history, can be very small,” she explains. “It’s a lot of work if you expect you need everything and really don’t.” Elysium’s tools can be tapped to repair quality issues on CAD models and to facilitate CAD-to-CAD translation.
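Suzuki’s point about scoping can be made concrete with a small triage rule. The Python sketch below is illustrative only: the `LegacyModel` record, its fields, and the three buckets (including an archive bucket for models left behind in the legacy system) are hypothetical and are not part of Elysium’s tools or any PLM product’s API. It assumes each model has already been tagged with whether it belongs to an active program and whether engineering changes are expected.

```python
from dataclasses import dataclass


# Hypothetical record for one legacy CAD model; field names are illustrative only.
@dataclass
class LegacyModel:
    part_number: str
    active_program: bool   # still used in a current product program
    change_expected: bool  # engineering changes anticipated after migration


def plan_cad_migration(models):
    """Split legacy CAD models by how much fidelity the new system needs.

    Assumed rule (not from any vendor): only models expected to undergo
    engineering change keep native, feature-based CAD; other active models
    move as lightweight or neutral files for viewing; inactive models stay
    archived in the legacy system.
    """
    plan = {"native_with_history": [], "lightweight_only": [], "archive": []}
    for m in models:
        if m.change_expected:
            plan["native_with_history"].append(m.part_number)
        elif m.active_program:
            plan["lightweight_only"].append(m.part_number)
        else:
            plan["archive"].append(m.part_number)
    return plan


models = [
    LegacyModel("A-100", active_program=True, change_expected=True),
    LegacyModel("A-200", active_program=True, change_expected=False),
    LegacyModel("B-300", active_program=False, change_expected=False),
]
print(plan_cad_migration(models))
```

If, as Suzuki suggests, the first bucket is small, most of the heavy CAD-to-CAD translation work drops out of scope.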

Once the data has been identified, transformation of that data is critical to ensure a smooth migration. Over the years, data in legacy PLM systems can be corrupted for a variety of reasons, including system updates and upgrades or changes to process and business rules. Removal of relations, missing mandatory attributes, incomplete revisions and orphan data sets are common causes of data integrity failures, says Troy Banitt, director, Teamcenter Product Management, Platform and Product Engineering at Siemens PLM Software.
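The failure types Banitt lists can be screened for before any data is loaded. The Python sketch below assumes a hypothetical, simplified data model (items with attributes, revisions that reference datasets, and relations to other items) plus an assumed set of mandatory attributes; real PLM schemas are far richer, and this is not a Teamcenter API. It treats a relation pointing at a missing item as a removed relation, a revision with no attached datasets as incomplete, and a dataset whose owning item no longer exists as an orphan.

```python
from dataclasses import dataclass, field

# Assumed business rule: every item must carry these attributes (hypothetical names).
MANDATORY_ATTRIBUTES = ("name", "owner", "lifecycle_state")


@dataclass
class Item:
    item_id: str
    attributes: dict
    revisions: dict = field(default_factory=dict)   # revision id -> list of dataset ids
    related_to: list = field(default_factory=list)  # ids of related items (e.g., BOM children)


@dataclass
class Dataset:
    dataset_id: str
    owner_item_id: str


def find_integrity_issues(items, datasets):
    """Return (issue_type, subject_id, detail) tuples for records that would fail migration."""
    issues = []
    by_id = {i.item_id: i for i in items}
    for item in items:
        # Missing or empty mandatory attributes.
        for attr in MANDATORY_ATTRIBUTES:
            if not item.attributes.get(attr):
                issues.append(("missing_attribute", item.item_id, attr))
        # Revisions with no attached datasets are treated as incomplete.
        for rev, dataset_ids in item.revisions.items():
            if not dataset_ids:
                issues.append(("incomplete_revision", item.item_id, rev))
        # Relations whose target item has been removed.
        for target in item.related_to:
            if target not in by_id:
                issues.append(("broken_relation", item.item_id, target))
    # Datasets whose owning item no longer exists.
    for d in datasets:
        if d.owner_item_id not in by_id:
            issues.append(("orphan_dataset", d.dataset_id, d.owner_item_id))
    return issues
```

Running a report like this during dress rehearsals lets teams cleanse the flagged records in the legacy system, or decide to leave them behind, before the production migration window opens.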

“When you want to move from an older to a newer PLM solution and migrate data, the big expense is cleansing and validating the legacy data,” says Tom Gill, senior consultant and PLM Enterprise Value & Integration Practice Manager for CIMdata, a PLM strategic management consulting firm. “The reality is data atrophy can set in, and data needs to be maintained to stay valid. People don’t follow standards and rules so data is never perfect.”

PLM migrations can also be compromised by legacy data that doesn’t conform to current business rules. In addition, because companies are increasingly operating at a 24/7 pace, migration performance is critical, as there is a limited window to accommodate what is typically a lengthy schedule, Siemens’ Banitt adds. To help its customers with PLM migration, Siemens released the Deployment Center, a web-based installer designed to make it easier to install, patch, and upgrade Teamcenter software, including development and test environments. The company also has a number of loading tools to convert data from CSV format to native Teamcenter format along with frameworks designed to streamline the integration of Teamcenter with legacy applications.


One of the ongoing questions in a PLM migration project is what data, and how much of it, to migrate. Some, like CIMdata’s Gill, advocate migrating all relevant data, because maintaining separate processes and paying the infrastructure costs of keeping siloed systems live creates too much complexity. “If you think data has value then it should be migrated—scope what needs to be done and retire the old solutions to reduce complexity and costs,” he says.

Another school of thought is to make some data accessible in the new PLM system (as PDFs for viewing, for example) without migrating complex native data such as CAD to the new platform. A manufacturer building a highly engineered product like a satellite might not want to retire its legacy system and may instead build an integration between that platform and its new PLM system. On the other hand, a supplier that builds small assemblies might decide it’s easier to bite the bullet, drop its legacy platform, and migrate everything to the new PLM platform.

After the merger of car giants Chrysler and Fiat, the new Fiat Chrysler Automobiles (FCA) embarked on a full-scale integration effort to replace Dassault Systèmes’ CATIA V5 and VPM with the Siemens suite of Teamcenter, TcVis, and NX as the key PLM backbone for FCA Engineering. Considerable effort went into preparing CAD data structures, and a set of dedicated tools was implemented to extract data from the previous solution for ingestion into Teamcenter, says Gilberto Ceresa, senior vice president and CIO for FCA. To streamline the effort, FCA staged the migrations based on vehicle program timing and on which carryover parts needed to be made available from the legacy solutions. “This approach allowed us to split the migration in different waves of smaller complexity than a single big bang approach and allowed the team to gain experience on the migration procedures applied along the process to improve the overall quality,” Ceresa says.

From the get-go, Ceresa says it’s important to have a clear understanding of the business benefits to be gained, starting with a clear picture of the existing processes and with a clear vision about how the new PLM solution will interact with the existing application landscape. “Internal customer involvement in the solution design, a timely communication plan for change management, and tailored training are then crucial to mitigate the risks of activities with this level of complexity,” he explains.

Honda, a long-time Dassault customer, is taking a similar tack of careful planning and moving only the data it needs as part of its ongoing transition toward increased virtualization and digital manufacturing using Dassault Systèmes’ 3DEXPERIENCE platform. For example, when implementing a new model process planning tool to help engineers create an efficient workflow for assembly, the company realized it only needed some of the CAD data in the new tool.

“We had a legacy tool that was only text-based. It was really a valuable tool for our process engineers for what we had at the time, but we couldn’t take advantage of the 3D data that was being generated by R&D,” said Ron Emerson, associate chief engineer, Honda North America, during his presentation at the Dassault Systèmes 3DEXPERIENCE Forum in October last year. “We couldn’t take advantage of all the data that came along with that. It was really hard for the process planners to visualize what they were assembling.”

The requirements for the more visual tool included fast and simple part visualization. Honda worked with Dassault to develop the new model planning structure application, which helps engineers quickly see when they’ve dragged and dropped all the required parts into an assembly sequence. The new tool provides Honda with the ability to load an entire vehicle into a session 80% faster using lightweight data, rather than all of the heavy mathematics of the full CAD data.

“Both strategies are valid and you make the decision on a customer-by-customer, application-by-application basis,” says ITI Global’s Makoski, whose firm promotes a multistep PLM migration process that includes exporting, transforming, aggregating and loading data.
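The four stages Makoski names can be sketched as a simple pipeline. The Python example below is a hypothetical illustration, not ITI Global’s tooling: the legacy field names in `FIELD_MAP`, the use of CSV as the exchange format, and the rule that rejects parts without a description are all assumptions chosen to keep the example small.

```python
import csv
import io
from collections import defaultdict

# Hypothetical mapping from legacy attribute names to the target schema.
FIELD_MAP = {"PartNo": "part_number", "Rev": "revision", "Desc": "description"}


def export_legacy(rows):
    """Export: dump legacy records to CSV text, a common neutral exchange format."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(FIELD_MAP))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def transform(csv_text):
    """Transform: rename fields to the target schema and normalize values."""
    out = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        rec = {FIELD_MAP[k]: v.strip() for k, v in row.items()}
        rec["revision"] = rec["revision"].upper()
        out.append(rec)
    return out


def aggregate(records):
    """Aggregate: group revisions under one part so each loads as a single object."""
    parts = defaultdict(lambda: {"description": "", "revisions": []})
    for r in records:
        part = parts[r["part_number"]]
        part["description"] = r["description"] or part["description"]
        part["revisions"].append(r["revision"])
    for part in parts.values():
        part["revisions"].sort()  # simple lexicographic order; real revision schemes vary
    return dict(parts)


def load(parts, target):
    """Load: insert into the target store, rejecting parts that fail a simple rule."""
    rejected = []
    for part_number, part in parts.items():
        if not part["description"]:
            rejected.append(part_number)
        else:
            target[part_number] = part
    return rejected


legacy = [
    {"PartNo": "100", "Rev": "a", "Desc": "Bracket"},
    {"PartNo": "100", "Rev": "B", "Desc": "Bracket"},
    {"PartNo": "200", "Rev": "A", "Desc": " "},
]
target = {}
rejected = load(aggregate(transform(export_legacy(legacy))), target)
print(target, rejected)
```

Keeping each stage as a separate function makes it possible to rerun and verify one stage at a time, which suits the kind of repeated dress rehearsals Gentherm describes.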

Evolving Technologies

PTC offers a range of choices to ease the migration burden, promoting multiple levels of migration and integration and the idea that data should live where it works best. For lightweight, spontaneous access to data in PLM or SAP platforms, mainstream users can tap the new ThingWorx Navigate to aggregate data, enabling them to view a part structure as part of a bill of materials (BOM), for example, without going through the pain of a big migration project, says Mark Taber, vice president of marketing for PTC’s PLM group.

Support for standards such as Open Services for Lifecycle Collaboration (OSLC) enables a higher level of integration, allowing data to stay in an existing system while still synchronizing traceability and compliance between platforms, Taber explains. PTC also offers integration through partner middleware programs, along with a solutions and partner program to help customers with traditional full-scale PLM migrations.

With ThingWorx Navigate, companies can deliver role-based access to accurate product information without going through the pain of a full PLM migration, according to the company. Image courtesy of PTC.

The idea, Taber says, is to offer choices. “The fact that product data lives in so many different systems of record, the idea that you have a single system that has everything isn’t practical anymore,” he says. “But you still need a single view of that information.”

For companies like Autodesk and Arena Solutions, the nature of their typical customer profile (companies new to PLM) and the fact that they offer cloud-based PLM solutions make migration less of a pain point for customers, although it remains an issue, says Steve Chalgren, Arena Solutions’ executive vice president of product management and strategy, and its chief strategy officer. Most Arena customers don’t have PLM already in place, he explains, and are instead migrating data stored in Access databases or spreadsheets to the new platform.

In fact, Chalgren contends Arena’s support for the cloud actually serves as a catalyst for many customers to bite the bullet on PLM migration. “We are seeing a regular cadence of people migrating to us from legacy systems because we are modern technology and they want to move to a more efficient enterprise software strategy in the cloud,” he says. “Enterprise software is relatively sticky—it’s expensive and disruptive to move so you need a reason to do it.”

Cloud-based PLM also provides an opportunity to approach what is typically a significant business project in digestible pieces, notes Charlie Candy, Autodesk’s senior manager, Global Business Strategy for Enterprise Cloud Platform, which includes the Fusion Lifecycle PLM platform. “Starting with quick wins, supporting processes to larger use cases like NPI (new product introduction) is a good way to get users on board early and show the product potential,” he explains. “This approach delivers incremental value and improvement.”

Standards help alleviate some of the pain associated with legacy PLM data migration, but the problem will remain a heavy lift for the foreseeable future. “The newer PLM solutions have more capabilities and support integration better and there are standards that make overall interconnection easier, but it’s still a complex task and you still need governance,” CIMdata’s Gill explains. “Otherwise, you paint yourself into a corner and make the solution unsustainable.”

About the Author

Beth Stackpole is a contributing editor to Digital Engineering. Send e-mail about this article to [email protected].
