Mathematics Software Solves Big Data Problems

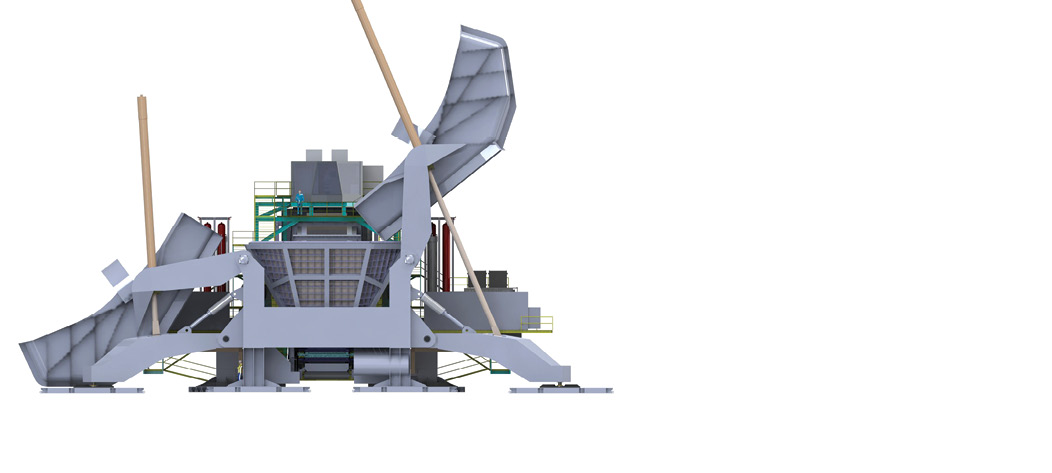

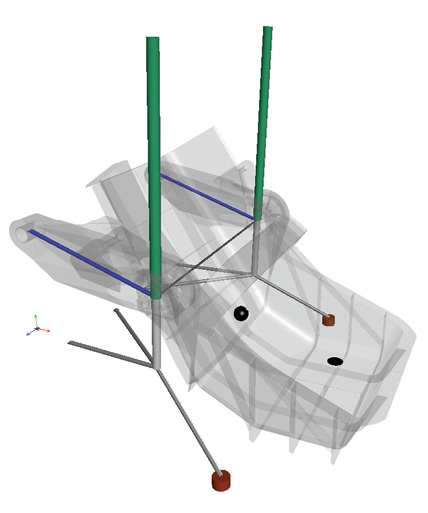

FLSmidth used Maplesoft tools to determine the vertical displacement of mining equipment under different soil conditions. Image courtesy of Maplesoft.

August 1, 2017

Huge amounts of detailed data are being collected from scientific instruments, manufacturing systems, connected cars, aircraft and consumer devices. But how can that data be mined to make it useful? One way is to employ mathematics software to develop algorithms to find the useful needles in the data haystack.

As firms collect larger amounts of data, they are recognizing its value and putting it into specialized systems such as Hadoop. Engineers need to be able to retrieve data from those platforms and run their analyses on it.

“It’s clear that more and more data is being collected and is available for engineers to make the right decision. It is also very clear that it’s a problem to easily make sense of all of that data,” says Laurent Bernardin, executive vice president of research and development, and chief scientist at Maplesoft.

Engineers now have access to high performance computing (HPC) clusters, cloud-based compute resources and more powerful workstations that can handle advanced mathematics, analysis and other functions. That’s given them the ability to leverage advanced mathematics tools to develop algorithms to manage Big Data.

Part of the data glut is being fed by the Internet of Things (IoT). In the past, the product lifecycle was limited to design, manufacturing and putting a product in service. With connected devices and the IoT, engineers now have the ability to understand how an item is performing and how it affects the performance of an entire operation.

That’s opened up a whole new way to use analytics and specially developed, predictive algorithms. “We now have real physical data from an inductive model to get insight for future designs,” says Chris MacDonald, director of analytics at PTC. “We can create this virtuous cycle that can be leveraged for the benefit of the product, both as designed and as operated.”

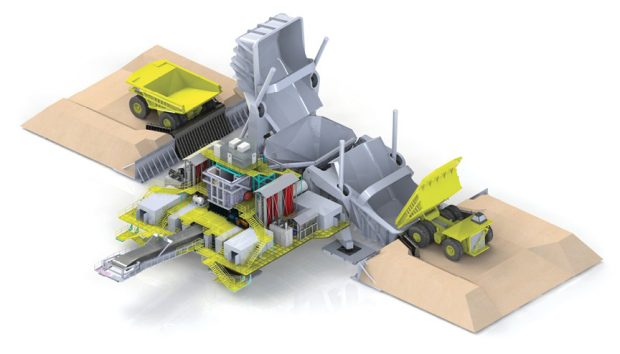

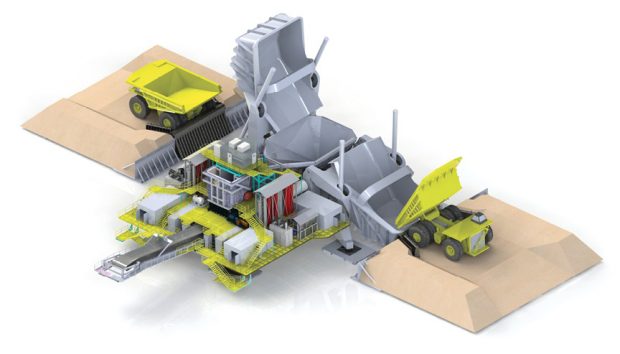

FLSmidth used Maplesoft’s products to create a fully functional model of its Dual Truck Mobile Sizer equipment for mining operations. Image courtesy of Maplesoft.

In the past, traditional analysis focused on diagnoses and understanding how designs were done. “What we’re seeing now is a move toward creating models to predict behaviors,” says Dave Oswill, product marketing manager at MathWorks. “Companies want to optimize the uptime of their machines for their customers.”

Big Data, Complex Modeling

As data sets grow and the number of inputs expands, mathematical solutions are more important than ever. “Just trying to find what is in the data you are collecting is a challenge,” Oswill says. “We have visualization capabilities in our MATLAB product to help customers identify what they are looking for. For example, with the sensors coming off an aircraft, there are thousands of signals. Statistical methods can be used to see which signals the engineers want to use.”

According to Jan Brugard, CEO of Wolfram MathCore, drawing conclusions from these models relies on increasing cross-sectional integration between mathematical models and advanced statistical methods, machine learning analysis, Bayesian models and other approaches.

In some of Wolfram’s key markets like medical devices and pharmaceuticals, Big Data usage is rapidly increasing but still not widely adopted. “However, when these new technologies are used widely, it is predicted to yield significant gains both in terms of time spent as well as financial gains,” Brugard says. “[T]he ability to combine different types of modeling methods will be a key factor to fully benefit from Big Data.”

As an example, Brugard points to mathematical models that can be used as sophisticated “virtual patients.”

“These sophisticated, high-fidelity models could be used to simulate the function of a medical device in patients, which would drastically cut cost and time spent with clinical trials as well as in early development,” Brugard says. “In order to reach this goal companies have to work together and also with regulatory agencies since it requires significant resources to develop and validate these tools.”

Putting Algorithms to Work

Companies are at very different levels of sophistication and adoption depending on their industry and their culture. “Whether a company has that data-driven culture really depends on how important data and analytics is within an organization,” MacDonald says. “We’re already seeing companies taking information and driving predictions of failure to get ahead of problems that exist. We’re just starting to see the beginning of using that information in the design phase.”

This is where math tools make it easier for companies to engage in more advanced modeling and analysis. “The data doesn’t mean a whole lot on its own,” Bernardin says. “You can have the ability to build a mathematical model that allows you to drill down and get insights, and map that data down into subsystems to get a deeper understanding of how the system works and doesn’t work. You can see the ways it will fail and what its uses are going to be, and how to go about fixing it.”

Math tools also help determine what data is important. MATLAB allows users to bring in large sets of data and create scatterplots to help identify where there may be patterns to explore further, Oswill says. “They can use correlations and other features to identify the right signals and identify what data to use for their models,” he continues.
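As a rough illustration of the correlation-based screening Oswill describes, the following Python sketch ranks candidate signals by how strongly each correlates with a quantity of interest. It is not MATLAB code and not MathWorks' method; the sensor names (`vibration`, `temperature`, `cabin_noise`), the `wear` target and the synthetic data are all assumptions made up for the example.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant signal carries no linear information
    return cov / math.sqrt(var_x * var_y)


def rank_signals(signals, target):
    """Return (name, correlation) pairs, strongest absolute correlation first."""
    scores = [(name, pearson(values, target)) for name, values in signals.items()]
    return sorted(scores, key=lambda item: abs(item[1]), reverse=True)


# Synthetic data with hypothetical sensor names (assumption, not from the article).
rng = random.Random(0)
n = 500
vibration = [rng.gauss(0, 1) for _ in range(n)]
temperature = [rng.gauss(0, 1) for _ in range(n)]
cabin_noise = [rng.gauss(0, 1) for _ in range(n)]

# The target depends strongly on vibration, weakly on temperature, not on cabin noise.
wear = [2.0 * v + 0.3 * t + rng.gauss(0, 0.5) for v, t in zip(vibration, temperature)]

signals = {"vibration": vibration, "temperature": temperature, "cabin_noise": cabin_noise}
ranking = rank_signals(signals, wear)
for name, r in ranking:
    print(f"{name:12s} r = {r:+.2f}")
```

Pearson correlation only captures linear relationships, so in practice a ranking like this would be a first filter before plotting the shortlisted signals and looking for nonlinear patterns.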

According to Bernardin, one common scenario is that companies will use mathematics tools to create a model or hypothesis of what the data should look like. Once enough data has been gathered, the tools can be used to improve the model.

“At the next stage, once you have a model that is validated, you can use a continuous data stream to compare the data from the actual system in use to the mathematical model,” Bernardin says. “This goes into the concept of digital twins. By comparing the incoming data stream from the actual system to the mathematical model, you can detect anomalies, predict failure modes and react to that information in real time.”
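The comparison Bernardin describes can be sketched in a few lines of Python: a validated model predicts what a sensor should read, and any sample that strays too far from the prediction is flagged. The linear `twin_model`, the bearing-temperature scenario, the sample values and the three-sigma threshold are all assumptions chosen for illustration, not anything taken from Maplesoft's tools.

```python
from statistics import stdev


def twin_model(load_kw):
    """Hypothetical validated model: expected bearing temperature (deg C) at a given load (kW)."""
    return 40.0 + 0.5 * load_kw


def calibrate_threshold(history, k=3.0):
    """Set the alarm threshold to k standard deviations of the residuals seen in normal operation."""
    residuals = [measured - twin_model(load) for load, measured in history]
    return k * stdev(residuals)


def detect_anomalies(stream, threshold):
    """Yield (index, residual) for each sample that deviates from the model by more than the threshold."""
    for i, (load, measured) in enumerate(stream):
        residual = measured - twin_model(load)
        if abs(residual) > threshold:
            yield i, residual


# (load, measured temperature) pairs recorded while the system was known to be healthy.
history = [(10, 45.2), (20, 49.8), (30, 55.1), (40, 60.3), (50, 64.7), (60, 70.2)]
threshold = calibrate_threshold(history)

# Incoming samples; the fourth one runs several degrees hotter than the model expects.
stream = [(25, 52.4), (35, 57.6), (45, 62.3), (45, 68.9), (55, 67.4)]
alerts = list(detect_anomalies(stream, threshold))
print(f"threshold = {threshold:.2f} deg C, alerts = {alerts}")
```

Because `detect_anomalies` is a generator, it could in principle consume a live feed one sample at a time rather than a stored list, which is closer to the real-time use Bernardin has in mind.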

Predictive data analysis will be an important application for these capabilities for manufacturers moving forward. Data can be analyzed and used to minimize maintenance costs and improve future designs.

For example, Baker Hughes collects data from expensive pumps that extract oil out of field wells. To monitor the pumps for potentially catastrophic wear and predict failures in advance, the company analyzes pump sensor data with MATLAB and applies MATLAB machine learning algorithms.

“They can put a model together that will indicate when a pump is nearing the point where it can be taken offline, repaired and put back online quickly,” Oswill says. “Being able to manage those activities better via a predictive machine health system can help both the company and its customers maintain service.”

That type of data could conceivably then be fed back to engineering teams to help improve product design and operations.
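To make the idea of predicting when a pump should be serviced concrete, here is a deliberately simple Python sketch. It swaps in a plain least-squares trend line for the MATLAB machine learning algorithms the article mentions, and the vibration readings, the alarm limit and the function names are hypothetical.

```python
def fit_line(ts, ys):
    """Ordinary least-squares fit; returns (slope, intercept) of y = slope * t + intercept."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in ts)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_t


def hours_until_limit(ts, ys, limit):
    """Extrapolate the fitted trend and return the hours left before it reaches the limit.

    Returns None when the indicator is not trending upward.
    """
    slope, intercept = fit_line(ts, ys)
    if slope <= 0:
        return None
    t_cross = (limit - intercept) / slope
    return max(0.0, t_cross - ts[-1])


# Hypothetical health indicator: vibration amplitude (mm/s) logged every 100 operating hours.
hours = [0, 100, 200, 300, 400]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]

remaining = hours_until_limit(hours, vibration, limit=6.0)
print(f"Estimated hours until the vibration limit is reached: {remaining:.0f}")
```

A straight-line extrapolation assumes wear progresses at a constant rate, which real equipment rarely does; it is shown here only because it makes the predict-then-schedule logic easy to follow.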

FLSmidth used Maplesoft’s products to create a fully functional model of its Dual Truck Mobile Sizer equipment for mining operations. The company created a fully parameterized model of the machine in MapleSim. The company’s Geometric Design Evaluation system, which uses the Maple symbolic computation tool, then performed a parameter sweep. The computational abilities of the Maplesoft tools were also used to evaluate joint flexibility, center of mass variations and soil modeling to determine the vertical displacement of the equipment under different soil conditions.
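A parameter sweep of this kind can be illustrated with a toy model in Python. The sketch below is not FLSmidth's model or MapleSim output: it assumes a basic Winkler (spring-bed) foundation, where settlement equals contact pressure divided by the soil's subgrade modulus, and the soil stiffness values, loads and footprint area are made-up illustrative numbers.

```python
from itertools import product


def vertical_displacement(load_kn, area_m2, k_subgrade):
    """Winkler foundation: settlement (m) = contact pressure (kN/m^2) / subgrade modulus (kN/m^3)."""
    return load_kn / (area_m2 * k_subgrade)


# Illustrative subgrade moduli in kN/m^3 (assumed values, not from the article or a design code).
soils = {"soft clay": 12_000, "loose sand": 16_000, "dense sand": 64_000}
loads_kn = [5_000, 10_000, 15_000]  # hypothetical machine loads
footprint_m2 = 50.0                 # hypothetical contact area

# Sweep every combination of soil type and load.
results = {
    (soil, load): vertical_displacement(load, footprint_m2, k)
    for (soil, k), load in product(soils.items(), loads_kn)
}

worst_case = max(results, key=results.get)
for (soil, load), settlement in sorted(results.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{soil:10s} {load:6d} kN -> {settlement * 1000:5.1f} mm")
print("Worst case:", worst_case)
```

The value of a sweep is that every combination is evaluated the same way, so the worst case falls out of the results rather than having to be guessed in advance.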

Automaker Renault similarly used Maple to help reduce the mass of a rotor for its electric vehicle design. After creating first-order approximations of the rotors, they developed mathematical models based on physical equations to further test and refine the design. For example, they were able to use the models to select the appropriate thickness and material for a slot wedge to hold the rotor wire in place. That modeling exercise uncovered a way to further reduce the mass of the rotor and they validated the complete design via finite element analysis (FEA).

The company was also able to use Maple to model nonlinear features such as wire stiffness that were difficult to determine and would have required a time-consuming trial-and-error approach via FEA.

Ongoing Challenges

Companies may face cultural or operational challenges to fully leveraging mathematics tools, depending on how experienced they are with this type of data analysis. “Is there a culture there that is motivated to try out innovative projects and find ways to rapidly develop?” MacDonald asks. “On the other side is the personnel issue. Do you have the right kind of resources in place? Your people have to trust the data rather than their instincts.”

Engineers also need education on how they can use these analytics and mathematics systems most effectively. “They ask us if they can do the same things with their data if it’s sitting on Hadoop, for example, so we have to educate our customers so that they can still do everything they used to do,” Oswill says.

MacDonald says that companies need to understand that this is a more complex endeavor than bolting machine learning on top of an existing process. “There are a lot of steps in the value chain to make this work,” MacDonald says. “They have to determine what problem they are trying to solve and if they have sourced and contextualized the data in the right way. This is a journey rather than just an innovation.”

Identifying the business problem is a critical first step. Companies have to determine where the right data resides and how to bring it together in a unified view to tell a descriptive story. “If it cannot be consumed in a way that is seamless, no one can use it,” MacDonald adds.

Brugard sees opportunities for precompetitive collaboration in many industries to develop analytical models that can be shared. In life sciences, he says that he expects more modeling and simulation for the development and approval of medical devices (and pharmaceuticals). “The Food and Drug Administration is currently working on guidelines for how to use, validate and report mathematical models, so we are expecting these areas to grow,” Brugard says. “Similar things can be seen in other fields, like social sciences, of course.”

Software companies are also working together to make it easier to pull these functions into the design process. PTC and ANSYS, for example, are integrating their solutions so that ANSYS simulation technology can be rapidly added to applications built with PTC’s ThingWorx IoT platform.

“That connectivity and contextualization and analytics will give you an understanding of failure, even when you can’t necessarily get specific data from a sensor,” MacDonald says. “We create a model zero with physics-based, raw simulation data. It may not be the most accurate model, but we can automatically tune a supervised machine learning model and use insights from deductive or the simulative model in ANSYS. Then as the data from the physical world comes in, we can understand its performance and then swap out the model for one that is based on that operational data.”

Those capabilities are only going to expand as designers gain access to more advanced tools. “As compute power increases and mathematical and system-level modeling evolve, we are able to model engineering systems in more detail and more holistically,” Bernardin says. “We are able to look at the entire system and consider complicated interactions between aspects of a machine and really optimize across all the different domains that are contained within a system.”

About the Author

Brian Albright is the editorial director of Digital Engineering. Contact him at [email protected].
