Redefining What’s Possible with High-Fidelity GPUs

What are you missing with good enough graphics processing vs. top-of-the-line graphics? It depends on how much you simulate and render complex models and scenes.

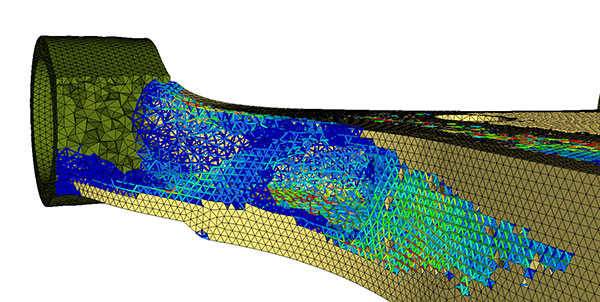

Simulation software vendor Altair reports its OptiStruct structural analysis solver runs 10x faster on the NVIDIA GV100 compared to the previous generation K6000. Image courtesy of Altair Inc.

June 6, 2019

A revolution is taking place today in high-fidelity graphics for product development technology, thanks to increased performance in graphics processing units (GPUs). Computer-aided design and engineering software vendors are updating their products from being CPU-only for many tasks to some form of shared CPU-GPU computation. To take advantage of the speed increases requires investing in a GPU, but which one?

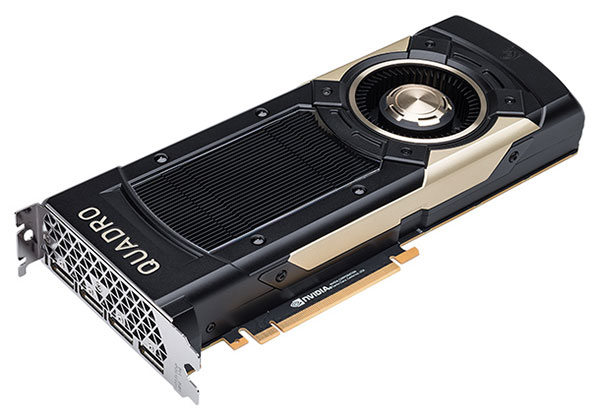

Do you need something like the NVIDIA Quadro GV100, an extremely powerful GPU based on the company’s Volta microarchitecture? It uses a 14nm chip manufacturing process, allowing it to pack 21.1 billion transistors onto a processor die only 815 mm2. Two aspects of the GPU architecture that make a big difference in some applications are double-precision floating point processing and memory bandwidth.

Floating Point Processing Facts

Double-precision floating point processing is more accurate, but that accuracy comes at a throughput price and isn’t needed for every application. For engineering, one reason to consider the GV100 is the speed increase in double-precision floating point processing, which is required by many CAD and CAE products. At 64-bit floating point processing — abbreviated at FP64 — the GV100 records an average 4.35x speed improvement for a 2x increase in GPU price, compared to the NVIDIA Kepler-generation Quadro K6000.

CAD and simulation/analysis products vary on exactly what GPU specs are most important. For example, some electromagnetic simulation applications use single-precision calculations, so wouldn’t get the full benefit of the GV100’s double-precision floating point processing. However, those users would still benefit from increased dataset throughput speed from the GV100’s high bandwidth memory.

Memory Matters

The GV100 has 32GB of 2nd Generation High Bandwidth Memory (HBM2). This is different from the more common DDR5 RAM, says NVIDIA’s Baskar Rajagopalan, who heads simulation strategy for the company.

“HBM is different [from DDR5] because because it can be stacked and so consumes less power,” he says. Stacking the memory means it can be located closer to the GPU processor. The difference is an average 3x improvement in moving data between the GPU processor and its memory. “Most applications are memory-bandwidth bound,” notes Rajagopalan, who says the increase in memory throughput is most noticeable with the largest datasets for simulation.

He adds that the GV100 is “3x faster for some applications; 5x to 7.5x for others. Applications like particle acceleration and discrete element modeling will be up to 7.5x faster than before.” HBM2 also includes the error-correcting code found in DDR5, which detects and then corrects single-bit memory errors.

Simulation Speeds

“If we tell people their application will run 5x faster, generally they take a close look right away,” says Rajagopalan. “If we tell them of a 1.5x speed increase, then it is ‘OK, we might look.’”

But with large, complex simulations, the key is how long a simulation will run. The speed increase may “only” be 1.5x, but if that means a rendering job goes from all-day to half-day, “that’s a big deal. Model size is the differentiator,” notes Rajagopalan.

Altair reports the GV100 provides a 10x speed increase to its OptiStruct structural analysis solver. “This breakthrough represents a significant opportunity for our customers to increase productivity and improve ROI with a high level of accuracy, much faster than was previously possible,” says Uwe Schramm, Altair CTO for solvers and optimization. Altair says this match of solver and GPU enables scaling for large models — particularly noise, vibration and harshness models — that may run poorly or not at all on CPU-based workstations.

Some CAE applications that can benefit from the GV100 are capable of supporting one or two GPUs, while others—such as the Altair FluidX line of particle-based fluid dynamics simulation tools—can run eight or more GPUs at the same time. To take advantage of multiple NVIDIA Quadro GV100 GPUs, you’ll need a workstation like the Dell Precision 7920 Tower, which can support three GV100s via NVIDIA NVLink high-speed GPU interconnect. The NVIDIA Quadro GV100 is supported in Dell Precision 7920, 7820 and 5820 Towers.

Rendering Rate of Return

For some users, it will not be the double-precision calculations or the fast memory throughput that makes a board like the GV100 worth the upgrade. Instead it will be NVIDIA’s new AI Denoiser, which uses artificial intelligence to hunt out the image noise that occurs during the ray tracing process. Scenes that might render in 12 hours can be rendered in as little as 35 minutes.

“SolidWorks Visualize can create 10x as much content in the same amount of time,” says Brian Hillner, senior product portfolio manager for Dassault Systemès SolidWorks Visualize. Such speed increases mean more options, including complex rendering of scenes for virtual reality. “Users are shaving months off design because they can see photoreal views in real time,” continues Hillner.

As noted above, 3x, 5x, 7.5x and 10x gains in performance may seem like investing in a new GPU is a no-brainer, but determining whether you need the GV100’s high bandwidth memory and double-precision floating point processing is fundamentally determined by your workflow. If you are routinely working with complex models using software that can support double-precision floating point processing, those speed gains will quickly return a GV100 and new workstation investment.

Subscribe to our FREE magazine, FREE email newsletters or both!