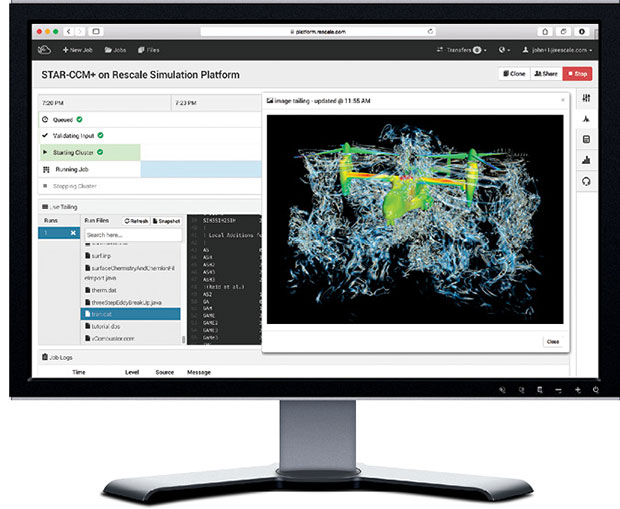

Rescale is a cloud simulation platform designed to make simulation easy through an integrated, optimized, workflow-driven solution. Shown on the screen is a CD-adapco STAR-CCM+ simulation of an Osprey tilt-rotor aircraft running on Rescale’s simulation platform. Image courtesy of Rescale and CD-adapco.

March 23, 2015

Innovations in the design and deployment of high-performance computing (HPC) hardware and supporting middleware are paving the way to new services and opportunities to improve engineering simulation and analysis. Vendors and customers alike are finding ways to increase the number of simulations, spend less money and still get results faster than with traditional methods.

At the heart of this revolution is not one innovation but several, creating what could be called a simulation stack. You may be familiar with the software term “stack,” popularized by the rise of the open source software solutions that have become foundational in computing today. The best-known stack is LAMP (Linux, Apache, MySQL, PHP): four separate technologies that, when used in tandem, become a synergistic force that makes today’s connected world possible.

The emerging simulation stack is a trifecta of faster, cheaper and better, moving simulation earlier in the design cycle and bringing to reality the often-prophesied “democratization of simulation.”

The simulation stack is still a fluid concept. The software and hardware vary from vendor to vendor. Elements include cloud storage and computation, HPC clusters, virtualization technology and the use of GPUs (graphics processing units), either specifically for graphics computation or for general-purpose computation.

Eating the Dog Food

There is a saying in the software industry: “eating our own dog food.” It started at Microsoft in the 1980s, when a manager told employees they needed to be using Microsoft products whenever possible.

Altair is no stranger to using its own engineering simulation solutions, having launched as a software developer in 1985. But Altair also offers consulting services, taking on complex CAE projects for customers in a variety of industries. “We produce software, but we are also a big consumer of it,” says Ravi Kunju, head of strategy and marketing for Altair’s enterprise business unit. Over the years Altair has built several HPC data centers strategically placed around the globe. Creating each data center was a six-to-eight-month process, Kunju says, of specifying hardware components, choosing vendors, selecting CAE applications (its own or from competitors), modifying software for cluster management and scheduling, and much more. In software industry terms, Altair was eating its own dog food.

As computer technology advanced, Altair realized it could replace all the work of creating each custom installation with a simulation appliance. Now known as HyperWorks Unlimited, the appliance packages all the required software and hardware in one physical unit. Altair built the appliance for its own use, but also produced a commercial model. “It gives the customer a Web portal, not a Linux command line,” says Kunju. “Our customers could connect, power up and run their first project in one hour.”

Because Rescale’s simulation platform is cloud-based, it can be accessed via lightweight computing devices, such as a tablet. Image courtesy of Rescale.

The HyperWorks Unlimited appliance eliminated the need to source every part of an HPC cluster and its associated storage and software individually. Altair leases HyperWorks Unlimited, turning a capital expense into a recurring operational expense. Today each appliance is custom built in a six-to-eight-week process of determining customer needs and building the appliance to match.

Kunju says the 5,000+ customers of HyperWorks Unlimited include boutique consulting firms, Fortune 100 manufacturers and everyone in between. But for some customers the appliance was still too expensive or more powerful than required. When cloud computing and virtualization arrived on the scene, Altair realized they could be used to take the appliance concept to another level. “We have replicated the physical appliance as a virtual product,” says Kunju. “It offers all the access, all the applications, and the same portal, but we don’t move a physical appliance to the premises; they get it on the cloud.” Kunju says Altair can spec and deliver a physical copy of HyperWorks Unlimited in six to eight weeks, but it can deliver a virtualized cloud version “in minutes.”

A virtual HyperWorks Unlimited brings high-performance simulation to a larger customer base, but there is still a problem: bandwidth. A single simulation from a 1MB input file can generate 10GB of data. On a 10 Mbps connection, downloading the results can take hours longer than generating them. Altair solves the problem with another part of the emerging simulation stack: remote graphics virtualization. “One customer calls it the best thing they’ve introduced into HPC,” says Kunju. Customers can run their simulation jobs and immediately process the data where it resides, viewing and working with the graphical results as if they were being generated on a local computer.
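The bandwidth problem is easy to quantify. The sketch below is a back-of-the-envelope calculation, not anything Altair publishes: it assumes decimal units (1 GB = 8,000 megabits), and the hypothetical `efficiency` parameter (with its illustrative 70% value) stands in for protocol overhead and congestion.

```python
def transfer_hours(size_gb: float, link_mbps: float, efficiency: float = 1.0) -> float:
    """Hours needed to move size_gb gigabytes over a link_mbps connection.

    Uses decimal units (1 GB = 8,000 megabits). `efficiency` is an assumed
    fraction of the nominal line rate actually achieved (1.0 = ideal).
    """
    megabits = size_gb * 8_000
    seconds = megabits / (link_mbps * efficiency)
    return seconds / 3_600


# The article's example: 10GB of results over a 10 Mbps connection.
print(f"{transfer_hours(10, 10):.1f} h at the full line rate")   # 2.2 h
print(f"{transfer_hours(10, 10, 0.7):.1f} h at 70% efficiency")  # 3.2 h
```

Even at the ideal line rate, the download alone takes more than two hours. Remote visualization is meant to eliminate exactly this gap by leaving the data on the server.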

Changing the Workflow

Access to high-performance simulation on demand turns the traditional system of running CAE jobs on its head. When all simulation and analysis work had to be submitted to an in-house computing center, engineers prepared jobs and handed them off to specialists, and the entire workflow was shaped by the capabilities of the data center. An engineer might say “I can only run my job on 20 cores, because if I do more people will be standing in line.” To run a simulation every day, engineers had to optimize models to complete a run in eight to 10 hours. Now engineers are adapting to a new reality: a practically unlimited number of cores, available on demand without scheduling issues. To get a faster result, it is now possible to throw more cores at the problem instead of reducing the model’s complexity. “Use the cloud for dynamic provisioning of resources,” says Altair’s Kunju. “No more worries about fixed resources and budget and time constraints.” Altair refers to this process as design exploration; others call it the democratization of simulation.
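How much “throwing more cores at the problem” helps depends on how well the solver scales. A common first-order model is Amdahl’s law. The sketch below uses it as a generic illustration: the 200 core-hour job and the 99% parallel fraction are hypothetical values, not measurements of any product named here.

```python
def amdahl_speedup(cores: int, parallel_fraction: float) -> float:
    """Ideal speedup on `cores` cores under Amdahl's law.

    parallel_fraction is the share of the run that parallelizes perfectly;
    the remainder runs serially no matter how many cores are added.
    """
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


def wall_hours(single_core_hours: float, cores: int, parallel_fraction: float) -> float:
    """Wall-clock hours for a job that would take single_core_hours on one core."""
    return single_core_hours / amdahl_speedup(cores, parallel_fraction)


# Hypothetical job: 200 hours on one core, 99% parallelizable.
for n in (20, 100, 1000):
    print(f"{n} cores: {wall_hours(200, n, 0.99):.1f} h")
# 20 cores: 11.9 h
# 100 cores: 4.0 h
# 1000 cores: 2.2 h
```

Under these assumptions, the 20-core run misses the overnight window, while 100 on-demand cores finish it in about four hours. The serial 1% sets a floor of 2 hours, however many cores are added, so the payoff from extra cores depends on how well the solver scales.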

Rescale is an engineering simulation service provider with a different approach. It does not write CAE software but offers products from all vendors as a cloud-based service. Rescale CEO Joris Poort says on-demand access to as many as 10,000 HPC computing cores beats on-premises HPC in two key ways that radically change engineering workflow.

First is the accessibility of services. “Our customers upload a model and kick off the job in a few clicks,” says Poort. “It does not require knowledge of how to access the hardware. Many who are traditionally trained as designers are now doing simulations, and others are running more simulations because of the ease.” Barriers to entry are removed, Poort says, and feedback comes more quickly, creating a virtuous cycle of more information to move to the next design iteration faster.

The second big workflow change is related to cost. The cost of running a purchased copy of NX Nastran for a year might work out to between $10 and $20 per hour. Rescale might cost double that on an hourly basis, but a customer may need it for only a few hours a year. And that covers just the one software product; ownership also (generally) requires a dedicated workstation or HPC cluster to run the software, and at least one person dedicated to its use. The savings add up rapidly, and as the cost advantages sink in, Rescale users generally run more simulations and run them more often.
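The owned-versus-on-demand comparison can be sketched as simple arithmetic. Every input here is an assumption for illustration. The $15 owned rate is the midpoint of the article’s $10 to $20 range, and the $30 on-demand rate reflects its “double.” The 40 hours of use is hypothetical. We also assume the owned rate is spread over all 8,760 hours in a year. The model ignores the hardware and staff costs of ownership.

```python
def break_even_utilization(owned_rate: float, on_demand_rate: float) -> float:
    """Fraction of amortization hours above which owning beats renting.

    owned_rate: annual license cost divided by the hours it is amortized over.
    on_demand_rate: pay-per-use price per hour.
    Ignores the workstation/cluster and staff costs of ownership.
    """
    return owned_rate / on_demand_rate


def annual_costs(owned_rate: float, on_demand_rate: float,
                 hours_used: float, amortized_hours: float = 8_760):
    """Return (owned, on_demand) annual cost under the assumptions above."""
    owned = owned_rate * amortized_hours  # paid whether or not it is used
    rented = on_demand_rate * hours_used  # paid only for hours actually used
    return owned, rented


owned, rented = annual_costs(owned_rate=15.0, on_demand_rate=30.0, hours_used=40)
print(f"owned ${owned:,.0f} vs on-demand ${rented:,.0f}")  # owned $131,400 vs on-demand $1,200
print(break_even_utilization(15.0, 30.0))                  # 0.5
```

When the on-demand rate is twice the amortized rate, owning pays off only if the license is busy more than half the hours it is amortized over. That result holds whether the amortization base is every hour of the year or only working hours.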

Rescale provides common-use clusters and custom clusters. “We tailor the hardware to the simulation,” says Poort. An acoustics simulation may require terabytes of memory and storage, a hardware investment out of reach for all but the largest users. Part of the Rescale offering is matching each customer with the right service. Rescale works with the leading cloud vendors, including Microsoft Azure and Amazon Web Services. Customers do not have to modify their IT stack for a particular cloud service; Rescale acts as the middleman.

Visual computing hardware specialist NVIDIA is also a player in the new simulation stack. Baskar Rajagopalan, senior marketing and alliances manager at NVIDIA, sees big changes coming to the traditional three-step CAE workflow. The three steps—pre-processing, solving, and post-processing—have been done on three different computers or a mix of workstations and HPC clusters for years.

Pre-processing prepares a simulation model from the original CAD design, usually on a local workstation. The model is then passed along to an HPC cluster or a single high-performance computer for solving. The results then move to post-processing (generally on a single workstation) for design review and possible submission of another simulation run. Typically, if the data is 1x at pre-processing, solving generates 10x to 100x as much data. Moving this data to another computer for post-processing can become a significant bottleneck; it might take longer to move the solution data set than to generate it.
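The 10x to 100x growth figures explain where the bottleneck sits. The sketch below is a minimal accounting of data crossing the network in the three-step workflow. The 1 GB model size is hypothetical, and the function name is illustrative. It assumes that post-processing on the cluster sends back only rendered frames, and it does not count those frames.

```python
def network_traffic_gb(model_gb: float, solve_multiplier: float,
                       post_on_cluster: bool) -> float:
    """Data moved between machines in the three-step CAE workflow.

    The pre-processed model (model_gb) always travels from the workstation
    to the solver. If post-processing runs on a separate workstation, the
    solver output (model_gb * solve_multiplier) must travel back as well;
    if it runs where the data was generated, only rendered frames leave the
    cluster, and those are not counted here.
    """
    to_solver = model_gb
    back_to_post = 0.0 if post_on_cluster else model_gb * solve_multiplier
    return to_solver + back_to_post


# Hypothetical 1 GB model at the low and high ends of the growth range.
for mult in (10, 100):
    separate = network_traffic_gb(1.0, mult, post_on_cluster=False)
    on_cluster = network_traffic_gb(1.0, mult, post_on_cluster=True)
    print(f"{mult}x: {separate:.0f} GB moved vs {on_cluster:.0f} GB")
# 10x: 11 GB moved vs 1 GB
# 100x: 101 GB moved vs 1 GB
```

The return trip of solver output dominates the traffic, which is why keeping post-processing next to the solver addresses the bottleneck.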

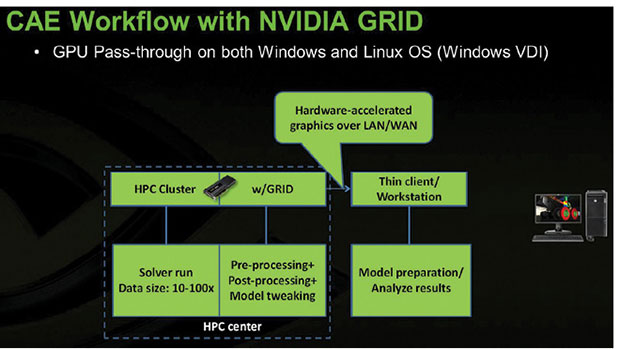

NVIDIA says its GRID technology, which delivers GPU computing as a cloud-based service, can eliminate these bottlenecks by taking over the solving and post-processing stages. “Move the model tweaking back to the cluster and save all the transfer time,” says Rajagopalan. This “GPU pass-through” approach avoids moving the results while offering a state-of-the-art computing environment for the solving stage. “Any engineer can now use the data set when the system is idle,” he says. NVIDIA’s GRID technology is available in both public cloud services and private cloud clusters.

GRID technology can eliminate the bottlenecks by taking over the solving and post-processing stages, NVIDIA states. Image courtesy of NVIDIA.

Rajagopalan agrees this new technology is giving engineers reason to change their workflows, but he notes the change is not coming at breakneck speed. “CAE users are conservative; adoption is just the start.” Few engineering companies use the latest versions of their CAE products, which matters because GRID certification comes with current releases; the current version of ANSYS Workbench, for example, is certified for use on NVIDIA GRID. “We foresee more use [of this technology] as customers upgrade their software, then they will also do a hardware refresh” and consider new options.

About the Author

Randall S. Newton is principal analyst at Consilia Vektor, covering engineering technology. He has been part of the computer graphics industry in a variety of roles since 1985.