Support for GPU computing has accelerated rendering performance in KeyShot 10. Image courtesy of Luxion.

Latest News

March 5, 2021

In 2019, Luxion raised the bar on rendering when it announced its KeyShot product would support GPU-accelerated ray tracing via NVIDIA technology. Since then, the software’s real-time rendering performance has only become more impressive. With the recent release of both KeyShot 10 and the NVIDIA RTX A6000 GPU, designers have access to previously unheard-of capabilities.

We spoke with Luxion Co-founder and Chief Scientist Henrik Wann Jensen about these enhancements and the value of real-time rendering for engineering organizations.

What were the key driving factors that led KeyShot to support GPU acceleration?

We originally stuck with the CPU because the algorithms we had that were doing really well did not map well to the GPU. In 2018 I attended SIGGRAPH in Vancouver and was invited to a keynote by Jensen Huang, CEO of NVIDIA, where he announced the company’s RTX hardware.

For the first time, you could have an output of a billion rays per second, which is a fantastic number for anyone doing ray tracing. I spoke to a lot of people from NVIDIA at that conference and asked if this was really possible. They said yes, it was really that good, and it gave me confidence.

We had been dipping our toes in to see what we could get with the GPU, and we had found that it did not give us enough benefits before. It was not able to compete with the CPU at the time. In 2018, the graphics cards came out with 8GB of memory and performance was fantastic. NVIDIA released some programming models to support it, and we were ready for it. We had been preparing internally the algorithms that could map to the GPU. We were up and running in less than a year.

The latest version of KeyShot shows additional improvements related to GPU acceleration. Can you describe some of the features and benefits available in the new release?

In 2018 we started out with the OptiX 6 library, and that presented some challenges for us, but NVIDIA then released OptiX 7, which was a better fit for our needs. We re-implemented everything for KeyShot 9. In the meantime NVIDIA released improvements in OptiX 7.2, and the software keeps improving. In our first release, we had to make certain shortcuts that let us just do brute force on a GPU. For some of the more advanced algorithms, we just leveraged brute force to do that. For KeyShot 10, we optimized some of those algorithms.

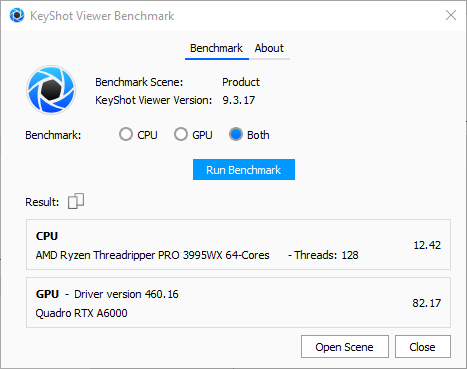

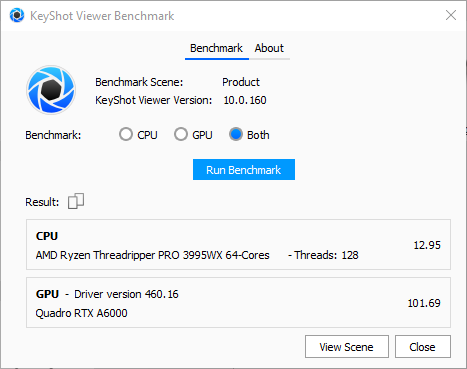

One in particular is the rendering of caustics. We have a really sophisticated algorithm that is super fast on the GPU. We have done things to more closely map to and leverage the fact that the GPU can run with thousands of threads in parallel. We have been able to get some good performance out of the GPUs. The Ampere GPUs that came out are beasts. We found the RTX A6000 card is 100X faster than the 8-core i7 processor.

Benchmark data showing the improvements in KeyShot 10 vs. KeyShot 9, using the NVIDIA RTX A6000 GPU.

The new release, in combination with the latest GPU from NVIDIA, has enabled some pretty amazing productivity improvements, according to users we have spoken to. Can you talk about how the software/hardware combination affects performance, and why users might want to upgrade?

Rendering is all about speed. As you get more speed you can do more. The customers we work with that have gotten faster computers and hardware, they do well. We have a lot of customers that do animations. If you do animations, and have 1,000 frames, it takes that much longer. If it took a minute to render a frame, now it is 1,000 minutes. If you can accelerate that, it is helpful. Performance is just getting so good it enables a really smooth workflow. In KeyShot we have always had a really smooth workflow, but you can really take it up a notch when you have complex scenes and have these super fast GPUs.
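The animation arithmetic in that answer can be sketched in a few lines of Python. This is only an illustration: the function name and the 10X speedup are hypothetical, chosen to show how total render time scales, and are not measured KeyShot figures.

```python
def animation_render_minutes(frames: int, minutes_per_frame: float,
                             speedup: float = 1.0) -> float:
    """Total render time in minutes for an animation.

    `speedup` is a hypothetical acceleration factor (1.0 means no change).
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return frames * minutes_per_frame / speedup


# The example from the interview: 1,000 frames at one minute per frame.
baseline = animation_render_minutes(1000, 1.0)

# Illustrative assumption: hardware that renders each frame 10X faster.
accelerated = animation_render_minutes(1000, 1.0, speedup=10.0)

print(f"baseline: {baseline:.0f} min (about {baseline / 60:.1f} hours)")
print(f"with a 10X speedup: {accelerated:.0f} min")
```

Because total time grows linearly with frame count, any per-frame speedup carries over directly to the whole animation.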

Can you explain the role of both the CPU and GPU in terms of the types of tasks within KeyShot where one would be more useful than the other? Are there workflows in which the CPU is preferable?

We still have some advanced algorithms that do run on CPUs. There are certain lighting scenarios where CPU might still outperform GPU because of those algorithms. Operations like importing and processing geometry, the whole flow to set up a scene, those are controlled by the CPU. Rendering is done on the GPU, which receives the data and sends back images very quickly. The CPU still has a role to control all of that. In some cases the GPU may not have enough memory, so with the CPU you can tap into hundreds of gigabytes or terabytes of memory.

Has the ability to access the power now available in both newer CPUs and GPUs changed the way your customers use KeyShot? How are workflows evolving?

The hardware has allowed us to push the envelope. So in KeyShot 10, for example, we released a much more sophisticated version of RealCloth compared to KeyShot 9. In KeyShot 10, we took that to the next level and allowed RealCloth material to represent cloth, but to make cloth out of curved threads, so you can render individual threads in clothing. That is incredibly heavy computational work, but you get this detail that is ridiculous. You get detail you cannot model in a CAD system. You have that compute power available and you can put it to good use.

We have users that do these close-ups of textiles, and they now have a new way of doing that. Without that compute power, it would be too slow. Now you can still do this and have this amazing performance. What is so great about the new GPUs and faster CPUs is they allow us to push the envelope all the time and push for even more realism and more detail. We have continued to push those boundaries, and it is something our users appreciate.

Where do you see the next frontier in rendering performance? Given what is possible now, what other types of improvements or features are customers going to look for or need in the future?

Right now we are funding research looking at the use of ray tracing for virtual reality, which is still beyond the capability of current graphics cards.

In VR you have 2 or 4 million pixels and then you have to ray trace that at 90 or 120 frames per second. That is a ridiculous number of rays. We want to look at that and examine what you can do there that can go beyond classic rasterization techniques. Can we get this hyperrealistic ray tracing rendering to run in real time, in a set of VR goggles? And when you move your head around, it follows you? That will be quite fantastic.

Imagine if you could have a photorealistic experience in VR, but it looks and behaves very natural. That would be groundbreaking and help VR take off to the next level. It is still a niche, but if you add that element, that could elevate the experience.
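To put rough numbers on the VR figures quoted above, the following Python sketch multiplies pixel count by frame rate. The function name is hypothetical, and the 16 rays per pixel is an illustrative assumption (realistic lighting generally needs more than one ray per pixel); it is not a figure from Luxion or NVIDIA.

```python
def rays_per_second(pixels: int, fps: int, rays_per_pixel: int = 1) -> int:
    """Rays that must be traced each second for a given display and frame rate."""
    return pixels * fps * rays_per_pixel


# Pixel counts and frame rates quoted in the interview, at one ray per pixel.
low = rays_per_second(2_000_000, 90)    # 180,000,000
high = rays_per_second(4_000_000, 120)  # 480,000,000

# Illustrative assumption: 16 rays per pixel for bounces and sampling.
low_16 = rays_per_second(2_000_000, 90, rays_per_pixel=16)    # 2,880,000,000
high_16 = rays_per_second(4_000_000, 120, rays_per_pixel=16)  # 7,680,000,000

for label, value in [("low, 1 ray/pixel", low), ("high, 1 ray/pixel", high),
                     ("low, 16 rays/pixel", low_16), ("high, 16 rays/pixel", high_16)]:
    print(f"{label}: {value / 1e9:.2f} billion rays per second")
```

At a single ray per pixel the totals stay under the billion rays per second mentioned earlier in the interview, but under the assumed 16 rays per pixel both cases exceed it several times over.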
