Making Connections for Digital Thread

APIs and data standards help PLM move forward and break down information silos.

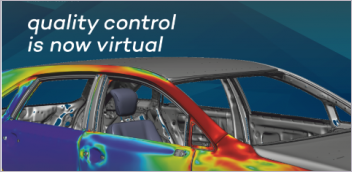

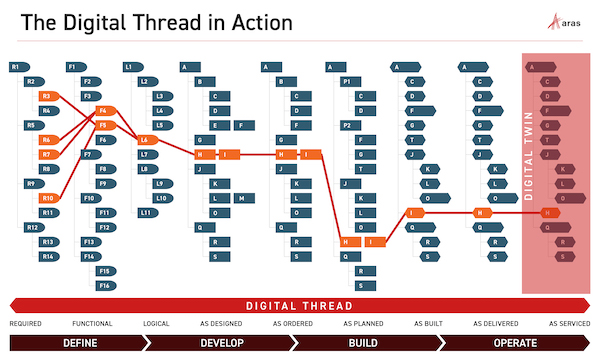

Fig. 1: A digital thread does not create value from data alone. The basis of its value stems in part from the connections made throughout the asset’s lifecycle, combining information on software, electronics, mechanical design, manufacturing requirements and service histories to create a complete representation of the asset over its operating life. Image courtesy of Aras.


January 4, 2021

The ability of product lifecycle management (PLM) to deliver on the promise of digital thread depends on its capacity to integrate data of diverse types, from divergent sources (Fig. 1).

Unfortunately, there’s a problem.

The changing data landscape and the inherent nature of a digital thread have made it clear to thread users that traditional PLM software cannot meet all digital thread requirements.

This realization has triggered a search for new ways of accessing and processing distributed data, causing PLM platform makers to embrace web-based architectures, standards and software strategies. That said, the challenges that PLM platform developers and users face are daunting.

Software’s Tower of Babel

The application environment has always been fragmented, and despite the rise of open software, segmentation persists. Software’s heterogeneous nature stems from a number of debilitating factors.

For one, organizations rely on all sorts of software systems, some provided by different vendors, others created by in-house development teams. To complicate matters further, some software is tailored to meet the unique needs of engineering, manufacturing, aftermarket and service interests, such as enterprise resource planning (ERP), customer relationship management (CRM) and supply chain management.

The result of this specialization has been a patchwork of often incompatible applications: data silos insulated to varying degrees from other data repositories.

“A digital thread is not a single-vendor offer,” says Dave Duncan, vice president of product management for PTC’s PLM segment. “No vendor can cover the waterfront effectively, and manufacturers will always have varying data sources implemented in varying ways.”

Another factor is the increasing number of stakeholders in a digital thread. The more companies or users that become involved, the greater the diversity and complexity, all of which hinders effective interaction.

“Product lifecycles span design partners, suppliers, OEMs [original equipment manufacturers], sales dealers, service partners and operators,” says Marc-Thomas Schmidt, senior vice president of architecture at PTC. “Old-school document export packages don’t cut it here anymore. Secure real-time data access is needed.”

Furthermore, software is not static. Systems emerge and evolve over time. Standards change. This means that integration requires not only linking two systems, but often linking different versions of a software program.

These software programs use various languages, semantic data models, interfacing mechanisms and mapping strategies. This diversity precludes the kind of plug-and-play integration that a digital thread requires, making integrations complex and difficult and rendering many traditional PLM platforms unsuitable for the new demands.

Beyond Original Intent

Knowing everything that doesn’t work is one thing, but identifying the root cause of the shortcomings is quite another. Eventually, developers arrive at the question: Exactly why can’t traditional PLM platforms deliver the level of cross-platform integration required to create a complete digital thread?

The answer to this question lies in the structural limitations of traditional PLM systems and those of the other applications that come into play.

“PLM data must play a key role in structuring the digital thread, and this goes well beyond typical PLM activities,” says Garth Coleman, vice president, ENOVIA advocacy marketing, at Dassault Systèmes. “Because other platforms—such as ERP, CRM and EAM [enterprise asset management]—have often been developed based on the needs and constraints of real-life transactions, enabling a broader set of activities with digital threads will not only require a new level of experience but also will demand bringing the underlying data of these platforms to a new level.”

In addition to these issues, some PLM systems stumble when dealing with the sheer volume of data currently required to create a complete digital thread. Managing data in transit and data at rest in massive loads often proves to be impossible for PLM platforms.

Plus, traditional PLM platforms can run into difficulties when they attempt to map data onto their referentials.

“Vendor-to-vendor mapping and translation of data formats can be challenging,” says Brian Schouten, director of technical presales for Prostep. “CAD translation is not an exact science. In addition, data fields—while mostly familiar—have different system behaviors and do not always map correctly.”

These structural and functional shortcomings have spurred developers to set aside some established software conventions and turn to architectures and tools previously untapped by PLM platform designers. The aim here is to provide the means for greater interaction and integration among a broader range of data sources, breaking down data silos in the process.

APIs Past and Present

One area where this shift is evident is the application programming interface (API). Traditionally a key enabler of interapplication interaction and data exchange, APIs maintain a large presence in the PLM developer’s toolkit. That said, new demands made by digital thread and the Internet of Things, the rise of cloud-based systems and the discovery of new uses of web technologies have put API shortcomings in the spotlight and begun a process of redefining the capabilities and roles of these interfaces.

By definition, an API consists of rules, commands and permissions that allow users and applications to interact with, and access data from, other applications. A web-based API, for example, publishes a list of URLs and query parameters, specifying how to make a request for data or services and defining the response to expect for each query. Using these specifications, APIs carry out interactions via mechanisms called function calls, which are language statements that request software to perform particular actions and services.
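To make the request-and-response idea concrete, here is a minimal Python sketch. The endpoint URL, the parameter names and the response fields are all hypothetical, and a canned string stands in for the server so that the example runs offline.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint; not a real PLM system.
BASE_URL = "https://plm.example.com/api/parts"


def build_request_url(base_url, **params):
    """Combine an endpoint URL with query parameters into one request URL."""
    return f"{base_url}?{urlencode(params)}"


def parse_response(body):
    """Decode the JSON body that the API's documentation says to expect."""
    return json.loads(body)


url = build_request_url(BASE_URL, part_number="PN-1001", revision="B")

# Canned response standing in for what a server might return (assumed shape).
canned_body = '{"part_number": "PN-1001", "revision": "B", "status": "released"}'
part = parse_response(canned_body)

print(url)
print(part["status"])
```

The API specification is what tells the caller which parameters are legal and which fields the response will contain; the code only follows that contract.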

These software interfaces come in three classes. Some are public APIs, others are restricted to private use and a third type is designed to support transactions only among partners.

Despite these variations, API architectures typically serve one of three purposes:

- System APIs manage configurations within a system, such as whether data from a specific database is locked or unlocked.

- Process APIs synthesize accessed data, enabling it to be viewed or acted upon across various systems.

- Experience APIs add context to system and process APIs, making their information more understandable to a target audience.
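The three purposes listed above can be pictured as layers that call one another. The following Python sketch is only an illustration: the in-memory dictionaries stand in for two back-end systems, and every function and field name is an assumption made for the example.

```python
# Hypothetical in-memory records standing in for two back-end systems.
PLM_PARTS = {"PN-1001": {"name": "Pump housing", "locked": False}}
SERVICE_LOG = {"PN-1001": ["2020-03-02 seal replaced", "2020-09-14 inspected"]}


def system_get_part(part_number):
    """System API: direct access to one system's record, including lock state."""
    return PLM_PARTS[part_number]


def system_get_service_history(part_number):
    """System API: direct access to the service system's log."""
    return SERVICE_LOG.get(part_number, [])


def process_get_asset(part_number):
    """Process API: combine data from several systems into one view."""
    part = system_get_part(part_number)
    return {
        "part_number": part_number,
        "name": part["name"],
        "locked": part["locked"],
        "service_events": system_get_service_history(part_number),
    }


def experience_summary(part_number):
    """Experience API: present the combined data for a specific audience."""
    asset = process_get_asset(part_number)
    state = "locked" if asset["locked"] else "editable"
    events = len(asset["service_events"])
    return f'{asset["name"]} ({asset["part_number"]}): {state}, {events} service events'


print(experience_summary("PN-1001"))
```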

This interface technology has proven to be an elegant answer to interoperability between enterprise applications. APIs also have a long track record of working as designed, and despite a number of major and minor upgrades and improvements, they still ensure backward compatibility.

As impressive as its strengths are, however, API technology has some significant shortcomings when it must handle large volumes of requests and responses.

“APIs are usually created to support the transaction at a client level, and the use cases for bulk transactions—not so common at client level—rarely get implemented,” says Anthony John, senior director of product management at Arena Solutions. “This leads to a ‘chatty’ custom client. For example, a data set of 10,000 records would result in 10,000-plus API calls.”
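The arithmetic behind the “chatty” client is easy to reproduce. The Python sketch below uses a hypothetical client that only counts the calls it would make; the bulk method `get_records` and the batch size of 500 are assumptions, since many APIs offer no such endpoint.

```python
class CountingClient:
    """Hypothetical API client that counts calls instead of using a network."""

    def __init__(self):
        self.calls = 0

    def get_record(self, record_id):
        # One call per record: the only option when no bulk endpoint exists.
        self.calls += 1
        return {"id": record_id}

    def get_records(self, record_ids):
        # Assumed bulk endpoint: one call returns many records.
        self.calls += 1
        return [{"id": record_id} for record_id in record_ids]


def fetch_one_by_one(client, ids):
    return [client.get_record(record_id) for record_id in ids]


def fetch_in_batches(client, ids, batch_size):
    records = []
    for start in range(0, len(ids), batch_size):
        records.extend(client.get_records(ids[start:start + batch_size]))
    return records


ids = list(range(10_000))

chatty = CountingClient()
fetch_one_by_one(chatty, ids)

batched = CountingClient()
fetch_in_batches(batched, ids, batch_size=500)

print(chatty.calls, batched.calls)  # 10000 20
```

Both clients retrieve the same 10,000 records; only the number of round trips differs.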

Another area where the technology falls short is in executing certain integration strategies, such as federation of data, which involves reading live data from one system into another. Many legacy APIs do not have this capability.
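The difference between federating data and exporting a copy can be sketched in a few lines of Python. The classes and values below are hypothetical; the point is only that a federated reference is resolved against the source system each time it is read.

```python
class ServiceSystem:
    """Hypothetical source system that owns the service records."""

    def __init__(self):
        self._hours = {"PN-1001": 1200}

    def operating_hours(self, part_number):
        return self._hours[part_number]

    def log_hours(self, part_number, hours):
        self._hours[part_number] += hours


class FederatedPartView:
    """Reads live values from the source system instead of storing a copy."""

    def __init__(self, part_number, source):
        self.part_number = part_number
        self._source = source

    @property
    def operating_hours(self):
        # Resolved on every access, so the value reflects the source's state.
        return self._source.operating_hours(self.part_number)


service = ServiceSystem()
copied_hours = service.operating_hours("PN-1001")  # one-time export (a copy)
view = FederatedPartView("PN-1001", service)       # federated reference

service.log_hours("PN-1001", 50)

print(copied_hours, view.operating_hours)  # 1200 1250
```

The exported copy is stale as soon as the source changes, while the federated view stays current at the cost of a call to the source on every read.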

To address these shortcomings and to meet the demands of digital thread, PLM developers have turned to a technology called web APIs, which exposes an application’s data and functionality over the internet, using established protocols.

Moving to the Web

Adoption of web APIs has grown steadily over the past few years, as organizations and software engineers have come to realize the opportunities associated with running an open platform. This trend has gained momentum as the number of open source tools has increased, providing more sophisticated search and discovery tools.

The APIs in this class of interfaces fall into two categories: representational state transfer (REST) and simple object access protocol (SOAP) APIs. Both provide a key ingredient for digital thread’s success.

“Technologies like REST and SOAP APIs facilitate the ability to federate access to important information across systems,” says Coleman. “Because digital threads cover the full end-to-end lifecycle of an asset, regardless of the many platforms that host information about the asset, using technologies like REST and SOAP can tap into this ‘dark data’ to give additional context for the asset through its extended lifecycle” (Fig. 2).

These APIs offer other significant benefits. For example, REST and SOAP interfaces allow for convenient, well-known technologies to be used.

“HTTP-based integrations are typically more performance efficient and well known to developers,” says Prostep’s Schouten. “They also allow better transport across enterprise networks because they are set up to do this with conventional web traffic. REST and SOAP web services also have smaller, more granular queries that are more flexible in what they can accomplish when put together for different business-driven use cases.”

Fig. 2: To create a complete digital thread, PLM platforms must be able to construct an end-to-end information chain, covering the asset’s entire lifecycle. This requires the software to be able to collect and manage data from a broad assortment of engineering tools, as shown in this diagram. Image courtesy of Prostep.

REST vs. SOAP APIs

There are, however, differences between these two types of interface, which lie in their operating principles, communication tools and level of performance. These differences have helped REST gain more traction than its competitor, SOAP.

A key factor driving REST’s popularity is the set of established web standards and data formats that it works with, including HTTP, URLs, JSON (which generally offers better performance than XML parsing) and XML. In comparison, most other types of APIs require the developer to define a protocol to enable the functioning of the APIs.

Because REST APIs use HTTP, there is no need to install additional libraries to create and run interactions. Also, many software engineers perceive REST APIs as offering great flexibility because they can handle different types of calls and return data in different formats.

Generally speaking, REST promises better performance than SOAP. “REST uses less bandwidth, better caching mechanisms and is generally the faster of the two,” says John.
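One visible reason for the bandwidth difference is the message format. The Python sketch below carries the same two fields in a JSON body, as a REST service might return it, and in a SOAP 1.1 envelope. The envelope namespace is the standard SOAP 1.1 one; the `urn:example:plm` namespace, the element names and the field values are hypothetical. It illustrates payload size and parsing effort and is not a benchmark.

```python
import json
import xml.etree.ElementTree as ET

# The same record in a REST-style JSON body ...
json_body = '{"partNumber": "PN-1001", "revision": "B"}'

# ... and wrapped in a SOAP 1.1 envelope (payload namespace is hypothetical).
soap_body = """<soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/">
  <soap:Body>
    <GetPartResponse xmlns="urn:example:plm">
      <partNumber>PN-1001</partNumber>
      <revision>B</revision>
    </GetPartResponse>
  </soap:Body>
</soap:Envelope>"""

NS = {
    "soap": "http://schemas.xmlsoap.org/soap/envelope/",
    "plm": "urn:example:plm",
}


def part_from_json(body):
    data = json.loads(body)
    return {"partNumber": data["partNumber"], "revision": data["revision"]}


def part_from_soap(body):
    root = ET.fromstring(body)
    # Namespace-qualified lookups are needed to reach the payload.
    response = root.find("soap:Body/plm:GetPartResponse", NS)
    return {
        "partNumber": response.findtext("plm:partNumber", namespaces=NS),
        "revision": response.findtext("plm:revision", namespaces=NS),
    }


same_content = part_from_json(json_body) == part_from_soap(soap_body)
print(same_content, len(json_body), len(soap_body))
```

Both messages decode to the same record, but the envelope and namespaces make the SOAP message several times longer than the JSON one.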

As valuable as API technology is, it’s important to remember that it is only part of the connective tissue that digital thread requires.

“APIs only get you so far in a digital thread program,” says Schmidt. “Programs benefit from adding higher levels of prepackaged data connections, using standards and API gateways, semantic organization via IoT Platforms, and componentized UI [user interface] widgets via mashup or other low-code offers.”

A Clearinghouse for API Communications

The next software component in the chain, the API gateway, resides between API clients and other application services. Acting as a central interface, the gateway routes data and service requests to the appropriate application service and then passes the service’s responses back to the requesting clients. In addition to these routing functions, API gateways perform numerous management functions, such as authentication, input validation and metrics collection.
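A toy gateway shows how routing, authentication, input validation and metrics collection fit together. This Python sketch is an in-process illustration only: the class, the token check, the routes and the status codes chosen are assumptions, and a production gateway would be a separate network service.

```python
from collections import Counter


class ApiGateway:
    """Toy gateway: authenticates, validates, routes and counts requests."""

    def __init__(self, valid_tokens):
        self._routes = {}
        self._valid_tokens = set(valid_tokens)
        self.metrics = Counter()

    def register(self, prefix, handler):
        """Map a URL path prefix to a back-end service handler."""
        self._routes[prefix] = handler

    def handle(self, path, token, params):
        self.metrics["requests"] += 1
        if token not in self._valid_tokens:          # authentication
            self.metrics["rejected"] += 1
            return 401, {"error": "unauthorized"}
        if not isinstance(params, dict):             # input validation
            self.metrics["rejected"] += 1
            return 400, {"error": "params must be an object"}
        for prefix, handler in self._routes.items():  # routing
            if path.startswith(prefix):
                return 200, handler(path, params)
        return 404, {"error": "no route"}


# Hypothetical back-end services behind the gateway.
gateway = ApiGateway(valid_tokens=["secret-token"])
gateway.register("/plm/", lambda path, params: {"source": "PLM", "path": path})
gateway.register("/erp/", lambda path, params: {"source": "ERP", "path": path})

ok = gateway.handle("/plm/parts/PN-1001", "secret-token", {})
denied = gateway.handle("/erp/orders", "wrong-token", {})

print(ok)
print(denied)
print(dict(gateway.metrics))
```

Clients see one entry point and one set of rules, while the services behind the gateway can be added or replaced by changing the route table.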

Data mapping is another significant management function that gateways perform. Here, the software bridges the differences between two systems’ data models, matching fields from one data set to another.

Data mapping ensures that data remains accurate when it moves from a source to its destination, and makes it possible to merge the information from one or multiple data sets into a single schema that users can query and from which they can glean insights.
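In its simplest form, data mapping is a lookup table from each system’s field names to a shared schema, followed by a merge on a common key. The Python sketch below assumes two hypothetical record layouts; real mappings also have to reconcile units, data types and system behaviors, which this example leaves out.

```python
# Hypothetical field names for two source systems and one shared schema.
PLM_TO_COMMON = {"item_no": "part_number", "rev": "revision", "desc": "description"}
ERP_TO_COMMON = {"material_id": "part_number", "unit_cost": "cost", "plant": "site"}


def map_record(record, field_map):
    """Rename mapped fields to the shared schema and drop unmapped ones."""
    return {field_map[key]: value for key, value in record.items() if key in field_map}


def merge_by_key(records, key):
    """Merge records that share the same key value into a single record."""
    merged = {}
    for record in records:
        merged.setdefault(record[key], {}).update(record)
    return merged


plm_record = {"item_no": "PN-1001", "rev": "B", "desc": "Pump housing", "internal_flag": 1}
erp_record = {"material_id": "PN-1001", "unit_cost": 42.5, "plant": "Plant 1"}

unified = merge_by_key(
    [map_record(plm_record, PLM_TO_COMMON), map_record(erp_record, ERP_TO_COMMON)],
    key="part_number",
)

print(unified["PN-1001"])
```

The merged record can then be queried through one schema, regardless of which system originally held each field.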

In performing these tasks, gateways help digital threads streamline interapplication interactions.

“Digital thread information is stored across multiple platforms,” says Coleman. “An API gateway acts as the centralized access point to information residing among these cross-platforms. Because the multiple systems hosting information on an asset will not converge on a single standard or technology, the API gateway can act as a front-facing interface that masks more complex underlying or structural code. In doing so, the gateway can simulate this type of standardized representation of asset information across platforms.”

Another element of the streamlining process takes the form of prepackaged functions and processes residing on the gateway.

“For operational technologies, API gateways are prepackaged to support decades of machine protocols,” says Schmidt. “For information technologies, API gateways offer prepackaged and extensible connectors to RESTful business systems. Additionally, both IT and OT API gateways may interface with a semantic layer in an IoT platform, where IoT serves both connected products and connected business systems, which in turn also may provide UI building blocks for digital thread applications.”

Mini Programs Complete the Thread

Closely associated with API gateways and REST APIs, microservices provide the mechanism that completes the cross-platform integration process.

Based on the service-oriented architecture style, these self-contained mini-programs deal with a small subset of information and perform narrowly defined functions.

Because microservices are loosely coupled with the rest of the application, an individual service can often be added, modified or removed without requiring application-wide changes.
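Loose coupling can be illustrated with a registry that callers use to reach services by name. In this Python sketch the registry, the service names and the returned fields are hypothetical, and plain functions stand in for independently deployed services.

```python
class ServiceRegistry:
    """Callers look services up by name, so each one can change independently."""

    def __init__(self):
        self._services = {}

    def add(self, name, service):
        self._services[name] = service

    def remove(self, name):
        self._services.pop(name, None)

    def call(self, name, request):
        service = self._services.get(name)
        if service is None:
            return {"error": f"service '{name}' unavailable"}
        return service(request)


# Hypothetical services, each handling one narrow function.
def bom_service(request):
    return {"parent": request["part_number"], "children": ["PN-2001", "PN-2002"]}


def change_service_v1(request):
    return {"open_changes": 1}


def change_service_v2(request):
    return {"open_changes": 1, "pending_approvals": 0}


registry = ServiceRegistry()
registry.add("bom", bom_service)
registry.add("changes", change_service_v1)

before = registry.call("changes", {"part_number": "PN-1001"})
registry.add("changes", change_service_v2)  # replace one service in place
after = registry.call("changes", {"part_number": "PN-1001"})
bom = registry.call("bom", {"part_number": "PN-1001"})  # unaffected by the swap

registry.remove("changes")
missing = registry.call("changes", {"part_number": "PN-1001"})

print(before, after, bom, missing)
```

Swapping or removing the change service requires no edits to the bill-of-materials service or to the code that calls it.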

The nature and structure of microservices open the door to a number of advantages for digital thread developers. For example, because of their autonomous nature, microservices allow small teams of developers to work in parallel, which shortens development cycles. In addition, because microservices are small, they fit more easily into continuous delivery/continuous integration pipelines, and they require fewer resources.

Combined, these features translate into greater flexibility.

“Microservices can improve the flexibility and scalability of cross-platform interactions and data exchange,” says Christine Longwell, product marketing manager at Aras. “The autonomous and streamlined nature of microservices allows developers from different application areas to work independently, increasing productivity and creating more easily accessible data for the digital thread.”

What’s Next?

The success of digital thread implementations depends on PLM software vendors providing integration technologies capable of pulling together data from widely distributed applications, based on diverse standards, formats and data models.

Until now, many traditional PLM technologies have fallen short in this area.

“‘Typical’ PLM systems, as they stand today, are still missing the foundation necessary to support the digital thread,” says Coleman.

As a result, PLM platform developers have turned to technologies cultivated in other areas.

“Integration technologies and approaches are continually improving,” says Longwell. “Many products are providing publicly available web-based interfaces, either through OData, REST or SOAP. These open interfaces allow us to integrate to various products easily without worrying about complex, product-specific programming interfaces. We see integrations being built using web services to most applications.”

Industry watchers expect the next five years will usher in continued adoption of web APIs and enhancements of standard data formats within the PLM space. Standards-based connectivity between systems, such as REST API orchestration, promises to be the default connectivity pattern.

There is, however, one more factor in play here. Focusing solely on technology overlooks a key component necessary to make cross-platform integration efficient and digital thread successful. Organizations should also evaluate the capabilities of their staff and their software tools.

Tom Kevan is a freelance writer/editor specializing in engineering and communications technology. Contact him via [email protected].
