A fluid simulation in Dassault Systèmes SIMULIA showing a high-speed train’s aerodynamics. Image courtesy of Dassault Systèmes.

June 21, 2021

In theory there is no difference between theory and practice, while in practice there is.

This quote has been attributed to Yogi Berra, Albert Einstein and Richard Feynman, but credible sources linking any of them to it are rare. Its unverifiable origin aside, the quote is a good reminder for the engineering industry, which increasingly relies on digital simulation to make design decisions.

How important is the peculiar bending mode that a new type of plastic exhibits in heat? Is it wise to dismiss it in the simulation setup? Is it overkill to spend time and money to scrutinize the phenomenon in a testing lab to quantify it? These decisions all come down to a simple act of balance, the experts say.

A U.S. $6.1 Billion Market

Jakob Hartl, a Ph.D. student and graduate research assistant at Purdue University, spoke on “Application of a Verification and Validation Framework for Establishing Trust in Model Predictions” at the CAASE21 virtual conference (June 16, 2021), co-produced by Digital Engineering and NAFEMS.

“Computer simulation is a more cost-efficient and faster way to acquire information than traditional methods of experimentation, so it’s gaining adoption,” he says. “But compared to real-world tests, computer-based simulation is a relatively new field of science.”

That new field of science has spawned a robust computer-aided engineering (CAE) software industry, which generated $6.1 billion in 2020, according to a Cambashi analyst. The market offers digital solutions for simulating everything from acoustic behavior, thermal events and manufacturing processes to failure modes. The top three vendors (Ansys, Siemens and Dassault Systèmes) accounted for 47% of the total 2020 CAE software market, according to Cambashi.

During the 2017 NAFEMS conference, Patrick Safarian, the Federal Aviation Administration’s Fatigue and Damage Tolerance senior technical specialist, explained that “Certain regulatory requirements allow analytical approaches to be used for compliance purposes as an option to testing. In all cases these regulations require validation of the analysis before the results can be accepted.”

But he also noted “the reliability of these solutions [analytical approaches] is always a major concern.”

Automotive and aerospace, the two industries that rely most heavily on digital simulation, are dominated by a handful of titans racing one another on similar projects, such as autonomous vehicles.

“A bunch of organizations are probably doing the same type of work and studies in validating their simulations. It would move faster if everyone were willing to share, but of course, they cannot because of competition,” says Hartl.

Relatively Reliable

The consequences of design failure vary across products and across design phases. In finite element analysis (FEA) terms, damage to a smartphone’s outer shell and internal components and the failure of an airplane’s landing gear are quite similar: both can be represented and simulated with a blend of structural and electromechanical solvers. But the consequences of those failures are vastly different, so the required simulation reliability, or the desired level of accuracy in the digital model, should be judged accordingly.

“If you are using the model to make high-consequence decisions, involving human lives or financial loss, then you obviously need to be more rigorous,” warns Hartl.

Contrary to what people might deduce from his technical talk about using uncertainty quantification and numerical methods to boost trust in simulation, Hartl favors simpler digital models that can serve a wider range of applications.

“I prefer simpler models to complex, expensive models, as long as you take the time to understand what your predictions mean, and the errors in them,” he notes.

Material Matters

Tim Whitwell, VP of Engineering at Tectonic Audio Labs, cares a lot about what he hears. He and his team use COMSOL Multiphysics to build simulation models that analyze the inner workings of Tectonic’s transducer devices.

“We conduct dedicated motor, suspension and diaphragm bending studies in addition to fully coupled analyses of the whole transducer,” he says.

For him, the choice between digital simulation and physical testing is also an act of balance. “There are times when we can get the answer we’re looking for more easily with a physical test,” he says. “I can spend two weeks building a simulation model and validating it, but with modern rapid prototyping tools, like 3D printers, I can build a prototype and test it in three days.”

On the other hand, for components like the spider, a corrugated suspension element, the digital model is the only choice. “These components in the drive unit require tooling, so you can’t use rapid prototyping,” he explains.

For Tectonic’s simulation needs, standard material definitions are not always enough. “Rubber under dynamic conditions behaves very differently than it does under static conditions,” he explains. “You can get the data for rubber from a lot of public sources. That may be OK if you’re using it for refrigerator doors, but in our speakers, it’s oscillating 10,000 times a second, so the tensile stiffness is very different.”
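One common way to describe why rubber stiffens under fast oscillation is a viscoelastic Prony series (generalized Maxwell model), whose storage modulus rises with loading frequency. The sketch below is illustrative only: the Prony parameters are hypothetical values, not Tectonic data, and this is not claimed to be the material model Tectonic or COMSOL uses.

```python
import math

# Hypothetical generalized Maxwell (Prony series) parameters for a rubber
# compound; illustrative values only, not measured data.
E_INF = 2.0e6            # long-term (relaxed) modulus, Pa
PRONY_TERMS = [          # (E_i in Pa, tau_i in s)
    (1.5e6, 1.0e-3),
    (3.0e6, 1.0e-5),
]


def storage_modulus(freq_hz: float) -> float:
    """Storage modulus E'(omega) of a Prony-series viscoelastic model."""
    omega = 2.0 * math.pi * freq_hz
    e_prime = E_INF
    for e_i, tau_i in PRONY_TERMS:
        wt2 = (omega * tau_i) ** 2
        # Each Maxwell branch contributes fully only when omega * tau >> 1.
        e_prime += e_i * wt2 / (1.0 + wt2)
    return e_prime


static = storage_modulus(0.0)          # quasi-static loading
dynamic = storage_modulus(10_000.0)    # oscillating 10,000 times a second
print(f"static {static / 1e6:.2f} MPa, 10 kHz {dynamic / 1e6:.2f} MPa")
```

With these made-up parameters, the effective stiffness at 10 kHz is more than twice the static value, which is the kind of gap that static data from public sources would miss.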

Tectonic runs its own testing lab to recreate the operating conditions of its products and measure the materials’ stretching and bending behaviors.

“A digital model is good for preliminary assessments, but if we want to put more trust in the model, we have to measure the sample materials under the conditions they will be used,” he says.

Material calibration is also part of the simulation software from Dassault Systèmes’ SIMULIA brand, where it has served the tire industry as a critical tool for modeling the nonlinear elasticity, stiffness damage and viscoelastic behaviors found in tires.

“In short, the material calibration app lets you take experimental data and use it to replicate any material’s behavior,” says Anders Winkler, portfolio technical director, R&D Strategy, Dassault Systèmes. “So in your simulation, the material models describing the material behavior produce more accurate results.”
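As a rough illustration of what "taking experimental data and using it to replicate a material's behavior" can mean, the sketch below fits the two constants of a Mooney-Rivlin hyperelastic model to uniaxial tension data by linear least squares. It assumes an incompressible material and uses synthetic data generated from known constants, so the fit should recover them; it is a generic textbook calibration, not the algorithm inside the SIMULIA app.

```python
def mooney_rivlin_uniaxial(stretch, c10, c01):
    """Nominal stress for incompressible Mooney-Rivlin in uniaxial tension."""
    return 2.0 * (stretch - stretch ** -2) * (c10 + c01 / stretch)


def calibrate_mooney_rivlin(stretches, stresses):
    """Least-squares fit of C10 and C01 (the model is linear in both)."""
    # Each point satisfies: stress = a * C10 + b * C01
    saa = sab = sbb = say = sby = 0.0
    for lam, p in zip(stretches, stresses):
        a = 2.0 * (lam - lam ** -2)
        b = a / lam
        saa += a * a
        sab += a * b
        sbb += b * b
        say += a * p
        sby += b * p
    # Solve the 2x2 normal equations with Cramer's rule.
    det = saa * sbb - sab * sab
    c10 = (say * sbb - sab * sby) / det
    c01 = (saa * sby - sab * say) / det
    return c10, c01


# Synthetic "test data" from hypothetical constants (MPa).
stretches = [1.1 + 0.2 * i for i in range(8)]
stresses = [mooney_rivlin_uniaxial(lam, 0.30, 0.05) for lam in stretches]
print(calibrate_mooney_rivlin(stretches, stresses))
```

With real measurements, the residual of the fit is itself useful: a poor fit signals that a richer material model, or data from the actual operating conditions, is needed.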

High Fidelity versus Low Fidelity

In general, CAE users tend to equate a higher mesh count with high-fidelity simulation, but that’s not necessarily the case, says Rachel Fu, portfolio technical director, R&D Strategy, Dassault Systèmes.

“High fidelity means taking the approximations out of the simulation,” she says. “For simplicity, you might assume certain parts behave linearly, as opposed to nonlinearly. You might assume certain parts are clamped down rather than fastened with fasteners. High fidelity means removing these simplifications.”

Simplified, or low-fidelity, simulation has a purpose too, especially in the conceptual phase.

“You can conduct lower fidelity simulation with faster run times, then have the simulation results dictate what your design should look like. Simulation-based optimization at the conceptual phase will become the norm,” Fu says.

With on-demand computing power, small and midsized firms can now afford to run the kind of high-fidelity simulation that was once accessible only to industry titans with proprietary high-performance computing (HPC) servers. But Winkler worries about a side effect.

“That goes against the fiber of green software,” he points out. “If you run more simulation on bigger clusters, it generates a lot of heat, so it needs more cooling, which in turn increases power consumption. Converting server halls into heat-power-generating units is certainly one of the more rewarding engineering challenges of our time.”

Winkler thinks the complexity and sizes of the digital simulation models are beginning to test the limits of FEA itself. “On a 10- to 15-year horizon this puts healthy pressure on us [software developers] to collaborate even tighter with academia to see how different types of numerical methods evolve to increase accuracy and speed in simulation,” he reasons.

High-fidelity simulation powered by on-premises or on-demand HPC costs money, but it’s not the only cost. “It costs time,” says Todd Kraft, CAD product manager, PTC. “If you run the simulation at 50,000 elements, you might get the answer in 5 minutes. But with 2 million [or more] cells with advanced connections and physics, you may have to wait a day or two. It’s common to run coarse-mesh simulations early on.”
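Coarse meshes are cheap, but as Hartl notes, you need to understand the errors in your predictions. One standard verification technique for this is Richardson extrapolation with Roache's grid convergence index (GCI), which estimates discretization error from results on three systematically refined meshes. The sketch assumes a constant refinement ratio and monotonic convergence; the three results are hypothetical numbers, not output from any vendor's tool.

```python
import math


def grid_convergence(fine, medium, coarse, ratio):
    """Observed order, extrapolated value and fine-mesh GCI from three meshes.

    Assumes a constant refinement ratio and monotonic convergence.
    """
    order = math.log((coarse - medium) / (medium - fine)) / math.log(ratio)
    extrapolated = fine + (fine - medium) / (ratio ** order - 1.0)
    rel_change = abs((fine - medium) / fine)
    gci_fine = 1.25 * rel_change / (ratio ** order - 1.0)  # safety factor 1.25
    return order, extrapolated, gci_fine


# Hypothetical peak-stress results (MPa) on meshes refined by 2x each time.
order, best_estimate, gci = grid_convergence(99.5, 98.0, 92.0, ratio=2.0)
print(f"order {order:.2f}, extrapolated {best_estimate:.2f}, GCI {gci:.2%}")
```

Here the observed order is about 2 and the fine-mesh result carries an estimated discretization uncertainty of roughly 0.6%, which tells you whether a further day-long refinement is worth the wait.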

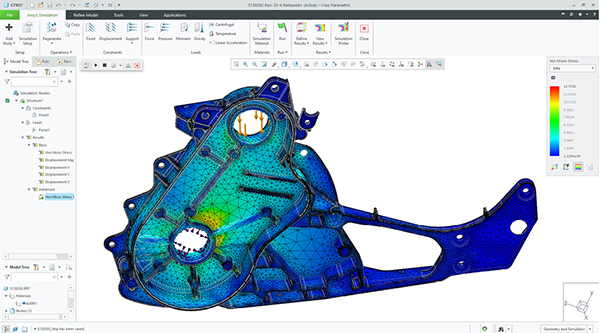

In 2018, at PTC’s LiveWorx Conference in Boston, the company announced a partnership with Ansys to deliver Ansys Discovery Live real-time simulation within PTC Creo software. Ansys had developed faster, easier simulation aimed at designers, a departure from the classic simulation paradigm. Its graphics processing unit (GPU)-accelerated code, combined with direct modeling, delivered near-real-time interactive response and encouraged designers to use simulation more freely.

The partnership allows PTC to offer Creo Simulation Live, a tool that targets the conceptual design phase, to complement the analyst-oriented simulation products in its Creo portfolio.

A Historical Record

What are the chances that, 20 years later, someone might want to consult a simulation run you conducted today? Perhaps not in the use-and-toss electronics industry, but in the infrastructure, automotive and aerospace sectors where product lifespans are much longer, this is more common than you might imagine.

“Keeping track of the loads, requirements and specifications for the case, perhaps even the specific digital machine involved or the regulatory compliance demand, is paramount. It has to do with traceability,” says Winkler. “Storing all that information in a file system is a nightmare. It has to be a data model that can carry all that information with complete interoperability. That way, you can take the results from one analysis and continue working on them in a different scenario without adjusting or simplifying it.”

Combining Simulation and Testing

With the advent of the internet of things (IoT), embedded sensors are becoming the norm, finding their way not only into vehicles and planes but also into washing machines and refrigerators. This is a boon for simulation professionals who want to recreate a product’s behaviors more accurately.

“Instead of just using a standard test, the question is, how do you better capture lifecycle loads?” asks S. Ravi Shankar, director of simulation product marketing, Siemens Digital Industries Software. “You need to think about the different types of load cases for your washing machine. Some based on routine usage, some based on possible misuse, but you cannot wait to capture 10 years’ worth of data before you do the simulation. With our Simcenter software, you can perform mission synthesis to create an accelerated test profile that represents what a washing machine experiences over its lifecycle.

“On the other hand, simulation models can also augment test data for better engineering insights. Our software includes technology to create virtual sensors, so you get data in regions where a real sensor is difficult to place,” he explains.
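The idea behind an accelerated test profile can be illustrated with fatigue damage equivalence: replace a long, variable-amplitude lifetime load history with a shorter constant-amplitude test that causes the same Miner's-rule damage. The sketch assumes a Basquin S-N curve with a hypothetical slope exponent and a made-up washing machine load spectrum. It is a simplified constant-amplitude illustration, not the mission synthesis algorithm in Simcenter, which works with richer spectral descriptions.

```python
def equivalent_amplitude(spectrum, test_cycles, m):
    """Constant amplitude giving the same Miner damage as `spectrum` in `test_cycles`.

    spectrum: list of (cycles, amplitude) pairs over the product's life.
    m: Basquin S-N slope exponent (N * S**m = constant).
    """
    # Miner damage is proportional to sum(n_i * S_i**m) under Basquin's law.
    damage_sum = sum(n * s ** m for n, s in spectrum)
    return (damage_sum / test_cycles) ** (1.0 / m)


# Hypothetical 10-year spectrum: (cycles, stress amplitude in MPa).
lifetime = [
    (1.0e7, 10.0),   # routine spin cycles
    (1.0e5, 30.0),   # unbalanced loads
    (1.0e3, 60.0),   # occasional misuse
]
s_eq = equivalent_amplitude(lifetime, test_cycles=1.0e5, m=5.0)
print(f"{s_eq:.1f} MPa for 1e5 cycles")
```

Roughly ten million lifetime cycles collapse into a hundred thousand test cycles at a higher amplitude (about 33 MPa here), which is why no one has to wait ten years for the data.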

For Siemens customers, Teamcenter, the company’s data management software, is where test and simulation data converge. “By bringing together the design, simulation and test data to create a validation sequence [in Teamcenter], you can be confident that they all represent the same version of the design,” Shankar says.
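The virtual sensors Shankar mentions are one place where test and simulation data meet. A common textbook way to build one is modal expansion: mode shapes from a simulation model are used to fit modal coordinates to the physical sensor readings, and the fitted modes then predict the response at an uninstrumented location. The sketch below assumes two modes, three physical sensors and hypothetical mode-shape values; it is not a description of Siemens' implementation.

```python
# Hypothetical mode-shape values from a simulation model (two modes).
PHI_SENSORS = [      # rows: physical sensors, columns: modes
    [0.20, 0.60],
    [0.50, 0.10],
    [0.80, -0.40],
]
PHI_VIRTUAL = [1.00, -0.70]   # the same modes at a hard-to-instrument location


def estimate_virtual(measured, phi_sensors=PHI_SENSORS, phi_virtual=PHI_VIRTUAL):
    """Least-squares modal expansion from physical sensors to a virtual sensor."""
    # Normal equations (Phi^T Phi) q = Phi^T y for two modal coordinates q.
    a11 = sum(r[0] * r[0] for r in phi_sensors)
    a12 = sum(r[0] * r[1] for r in phi_sensors)
    a22 = sum(r[1] * r[1] for r in phi_sensors)
    b1 = sum(r[0] * y for r, y in zip(phi_sensors, measured))
    b2 = sum(r[1] * y for r, y in zip(phi_sensors, measured))
    det = a11 * a22 - a12 * a12
    q1 = (b1 * a22 - a12 * b2) / det
    q2 = (a11 * b2 - a12 * b1) / det
    # Predicted response at the virtual location.
    return phi_virtual[0] * q1 + phi_virtual[1] * q2


print(estimate_virtual([0.10, 0.95, 1.80]))
```

Using more physical sensors than modes keeps the fit overdetermined, so measurement noise is averaged out rather than amplified.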

Measuring Uncertainty

Physical testing remains the gold standard for full-system validation, but in some scenarios the capacity for physical testing is severely limited.

“In the fields of nuclear or satellite, for example, you can’t do all the physical experiments you may want to do, so simulation and uncertainty quantification is extremely important,” notes Shankar.

All simulation-based predictions involve uncertainty, but “you can create an environment where you can say with numerical certainty that, these results, in this context, have ‘x’ percentage of reliability,” says Winkler.
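A simple way to attach a reliability percentage to a prediction is Monte Carlo uncertainty propagation: sample the uncertain inputs, run the model for each sample and count how often the output meets its requirement. The sketch uses a cantilever-beam deflection formula as a stand-in for a real simulation; the load and stiffness scatter, the beam section and the 2.5 mm limit are all hypothetical.

```python
import math
import random


def tip_deflection(force, modulus, length=1.0, inertia=8.333e-6):
    """Cantilever tip deflection F L^3 / (3 E I), SI units."""
    return force * length ** 3 / (3.0 * modulus * inertia)


def reliability(limit, samples=20_000, seed=1):
    """Fraction of sampled designs whose deflection stays within `limit`."""
    rng = random.Random(seed)
    ok = 0
    for _ in range(samples):
        force = rng.gauss(10e3, 1.5e3)     # N, hypothetical load scatter
        modulus = rng.gauss(200e9, 10e9)   # Pa, hypothetical material scatter
        if tip_deflection(force, modulus) <= limit:
            ok += 1
    r = ok / samples
    # Normal-approximation 95% confidence half-width on the estimate.
    half_width = 1.96 * math.sqrt(r * (1.0 - r) / samples)
    return r, half_width


r, hw = reliability(2.5e-3)
print(f"reliability {r:.1%} +/- {hw:.1%}")
```

The confidence half-width matters as much as the headline number: it shrinks only with the square root of the sample count, which is one reason expensive models are often replaced by cheaper surrogates for this step.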

Uncertainty quantification is a discipline unto itself, supported by a niche of specialized software makers. SmartUQ, for example, offers solutions to address data sampling, design of experiments, statistical calibration and sensitivity analysis.
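Data sampling and design of experiments often start with Latin hypercube sampling, which spreads a small number of simulation runs evenly across every input dimension. The sketch below is a generic implementation on the unit cube (scale each column to the physical input ranges before use); it is not SmartUQ's method, and the function name is illustrative.

```python
import random


def latin_hypercube(n_samples, n_dims, seed=0):
    """Points in [0, 1)^n_dims with exactly one point per stratum in each dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One random point inside each of the n equal-width strata...
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        # ...then shuffle so strata are paired randomly across dimensions.
        rng.shuffle(strata)
        columns.append(strata)
    return [list(point) for point in zip(*columns)]


for point in latin_hypercube(5, 2):
    print([round(x, 3) for x in point])
```

Compared with plain random sampling, this guarantees that no region of any single input range is left unexplored, which matters when each run is an expensive simulation.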

Don’t Forget the Human-in-the-Loop

The one surefire way to increase simulation reliability without increasing model mesh count or server cost is to choose the CAE user carefully, many suggest.

“It’s important to understand the event you’re modeling, the physical phenomenon you are trying to capture,” Hartl says.

Arnaud van de Veerdonk, product manager at PTC, echoes this sentiment.

“When validating the final design, it’s important to have a simulation professional correctly apply the real-world loads and interpret the results. With the wrong assumptions, the model still gives you an answer, but that doesn’t necessarily match what’s happening in the real world,” he says.


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
