Fig. 1: When combined with physics-based simulation, AI may be the active ingredient to help design engineers overcome hurdles that to date have hindered or precluded the simulation of some challenging applications facing product development teams. Image courtesy of Siemens Digital Industries Software.

Latest News

November 16, 2020

Product development teams have only just begun to use artificial intelligence (AI) in combination with physics-based simulation platforms, moving beyond advanced automotive applications to more consumer-oriented designs.

The use of AI technologies such as machine learning (ML) and deep learning seems like a logical progression, as engineers take on increasingly complex designs and contend with shorter and shorter development cycles.

But exactly where does AI fit into the design and development landscape? Does it replace physics-based simulations, or are the two complementary toolsets?

A Paradigm Shift

Conventional wisdom contends the use of physics-based simulations will continue to increase, at least nominally. The application of AI-based methods, however, shows signs of rapid and continuous growth, opening the door for new design methodologies (Fig. 1).

“AI will allow us to move from the traditional paradigm to a brand new one, where CAE simulation is used for design of experiments (DOEs) to feed AI models with data that will then be reused for much faster runs, improving productivity and allowing for more optimization of products,” says Bruce Engelmann, chief technology officer at MSC Software. “This is a paradigm shift from simulation validated by test to DOE-fed AI models validated by simulation and testing.”
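The DOE-fed workflow Engelmann describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not MSC Software's method: `run_simulation` is a hypothetical stand-in for an expensive CAE solve, and the Latin hypercube sampler, the design variables and the quadratic surrogate are all choices made here for brevity.

```python
import random
import numpy as np

def latin_hypercube(n_samples, bounds, seed=0):
    """Simple DOE: one stratified sample per bin in every dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One random point inside each of n_samples equal-width bins.
        points = [low + (high - low) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        rng.shuffle(points)  # decouple the bin order across dimensions
        columns.append(points)
    return np.array(columns).T  # shape (n_samples, n_dims)

def run_simulation(x):
    """Hypothetical stand-in for an expensive CAE run."""
    thickness, load = x
    return load / thickness**2  # toy stress-like response

def quadratic_features(X):
    t, l = X[:, 0], X[:, 1]
    return np.column_stack([np.ones(len(X)), t, l, t * l, t**2, l**2])

# 1. DOE: choose where to run the expensive simulation.
bounds = [(1.0, 2.0), (10.0, 20.0)]  # (thickness, load) ranges, assumed
X_train = latin_hypercube(40, bounds)
y_train = np.array([run_simulation(x) for x in X_train])

# 2. Fit a cheap data-driven model to the simulation results.
coeffs, *_ = np.linalg.lstsq(quadratic_features(X_train), y_train, rcond=None)

def surrogate(X):
    """Fast approximation that can be reused for many later runs."""
    return quadratic_features(np.atleast_2d(X)) @ coeffs

# 3. Validate the surrogate against fresh simulation runs.
X_check = latin_hypercube(10, bounds, seed=1)
y_true = np.array([run_simulation(x) for x in X_check])
max_rel_error = np.max(np.abs(surrogate(X_check) - y_true) / y_true)
```

Inspecting `max_rel_error` on held-out runs mirrors the "validated by simulation and testing" step in the quote. Whether a quadratic fit is accurate enough depends entirely on the response being modeled.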

Using this observation as a starting point, a sound rule of thumb for design teams is to use conventional tools for any application that can be done well with physics-based simulation. An advantage of these modeling tools is that they are generally less biased than data-driven models, because physics-based tools are governed by the laws of nature.

That said, there are cases where AI offers the only way to successfully take on an application. The technology has also proven useful where it is essential to reduce the time and resources required to solve complex problems.

Manufacturers have also begun to recognize that they can no longer afford the “build it and tweak it” approach that has long characterized many design projects. Recent experience shows that CAE must evolve to meet growing expectations across many disciplines, because systems-oriented approaches make reliance on conventional engineering judgment less feasible and less scalable.

When There Isn’t Enough Data

One area where AI can pick up where physics-based simulations leave off centers around data limitations. For example, until recently, engineers have been precluded from modeling and simulating certain aspects of designs, either because they simply didn’t have enough physical data, such as the part geometry early in the design process or a well-defined behavior, or because generating the data would prove too expensive or time-consuming.

AI provides a way around this obstacle via a deep learning technique called generative adversarial networks (GANs). This approach automatically identifies features or patterns in available input data and then uses the information to generate synthetic data with characteristics similar to physical data from the original dataset.

GANs produce realistic data by training a generator network that outputs synthetic data. A discriminator network then tries to classify this output as either genuine physical data or synthetic data. The two networks essentially train each other until the discriminator network is fooled about half the time, indicating that the generator network is producing plausible data.

The GAN approach offers a number of advantages. For example, it does not require intimate knowledge of the physical system. Furthermore, it can use historically observed data to generate synthetic data, which can then be used to train other AI models. An example of this is to use GANs to produce synthetic images to train an object detector or image classifier.
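The generator-versus-discriminator loop described above can be shown at toy scale. The sketch below rests on several assumptions: the "physical data" is a made-up one-dimensional sample, the generator is a single linear map, the discriminator is a logistic classifier, and the gradients are derived by hand. Production GANs use deep networks in a framework, and even this toy is not guaranteed to converge, so no particular result is claimed.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(s):
    # Clip the logit so exp() cannot overflow.
    return 1.0 / (1.0 + np.exp(-np.clip(s, -50.0, 50.0)))

def sample_real(n):
    """Stand-in for measured data: a 1-D quantity centered on 4.0."""
    return rng.normal(4.0, 0.5, size=n)

# Generator G(z) = a*z + b; discriminator D(x) = sigmoid(w*x + c).
a, b = 1.0, 0.0
w, c = 0.0, 0.0
lr, batch = 0.02, 64

for step in range(500):
    # Discriminator step: push D(real) toward 1 and D(fake) toward 0.
    x_real = sample_real(batch)
    x_fake = a * rng.normal(size=batch) + b
    d_real = sigmoid(w * x_real + c)
    d_fake = sigmoid(w * x_fake + c)
    grad_w = np.mean((d_real - 1.0) * x_real) + np.mean(d_fake * x_fake)
    grad_c = np.mean(d_real - 1.0) + np.mean(d_fake)
    w -= lr * grad_w
    c -= lr * grad_c

    # Generator step: push D(fake) toward 1 (non-saturating loss).
    z = rng.normal(size=batch)
    x_fake = a * z + b
    d_fake = sigmoid(w * x_fake + c)
    dloss_dx = (d_fake - 1.0) * w  # gradient of -log D(x) w.r.t. x
    a -= lr * np.mean(dloss_dx * z)
    b -= lr * np.mean(dloss_dx)

# Draw synthetic samples and see how often the discriminator accepts them.
synthetic = a * rng.normal(size=1000) + b
fooled_rate = np.mean(sigmoid(w * synthetic + c) > 0.5)
```

Here `fooled_rate` is the fraction of synthetic samples the discriminator labels as genuine. In a well-trained GAN it would hover near one half, but a toy like this may oscillate rather than settle.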

Confronting Complexity

Ironically, while too little data can hinder the use of physics-based simulations, so can too much data, and that has become a major obstacle for design engineers. The reason for this is found in the evolution of finite element simulation.

This form of simulation has experienced continuous growth in the complexity of the problems it has been asked to simulate and the fidelity required to represent real-life behavior. To put this into perspective, in the 1990s, typical design simulations consisted of a few thousand elements.

Today, simulations involve several million elements. The driving force behind the data explosion lies in the demand for more authentic representations of real-life problems. This shift has triggered a dramatic increase in the computing resources needed to perform finite element simulations and a notable rise in design costs.

To make matters worse, nearly every industry has ramped up demands on design engineers to turn out higher quality products in shorter design cycles.

One AI-based answer to these challenges is for development teams to use ML to build reduced order models (ROMs) of finite element simulations. With this method, engineers construct ROMs of large, complex systems from small datasets derived from previous simulations and testing, gleaning insight into system characteristics along the way. This allows them to quickly study a system’s dominant features using minimal computational resources.
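To make the idea of a ROM concrete, here is a minimal sketch that swaps in a classical technique, proper orthogonal decomposition (POD) with a polynomial regression on the modal coefficients, in place of the ML methods the vendors discuss. The function `full_model` is a hypothetical stand-in for a finite element solve, and the parameter range, mode count and polynomial degree are assumptions.

```python
import numpy as np

def full_model(k, x):
    """Hypothetical stand-in for a finite element solve: a field along a bar."""
    return np.exp(-k * x)

x = np.linspace(0.0, 1.0, 200)       # 200 "nodes"
k_train = np.linspace(1.0, 3.0, 20)  # 20 previously computed simulations

# Snapshot matrix: one column per stored simulation result.
snapshots = np.column_stack([full_model(k, x) for k in k_train])

# POD: the leading left singular vectors are the dominant spatial modes.
U, s, _ = np.linalg.svd(snapshots, full_matrices=False)
n_modes = 4
modes = U[:, :n_modes]                        # shape (200, 4)
energy = np.sum(s[:n_modes] ** 2) / np.sum(s ** 2)

# Learn how each modal coefficient varies with the design parameter k.
coeffs = modes.T @ snapshots                  # shape (4, 20)
poly = np.polyfit(k_train, coeffs.T, deg=3)   # rows: k^3, k^2, k, 1

def rom(k):
    """Reduced order model: 4 coefficients instead of a 200-node solve."""
    powers = k ** np.arange(3, -1, -1)        # [k^3, k^2, k, 1]
    return modes @ (powers @ poly)

# Compare against the full model at a parameter value not in the training set.
k_new = 2.2
reference = full_model(k_new, x)
rel_error = np.linalg.norm(rom(k_new) - reference) / np.linalg.norm(reference)
```

The value `energy` reports how much of the snapshot data the retained modes capture, which is one common way to decide how many modes to keep.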

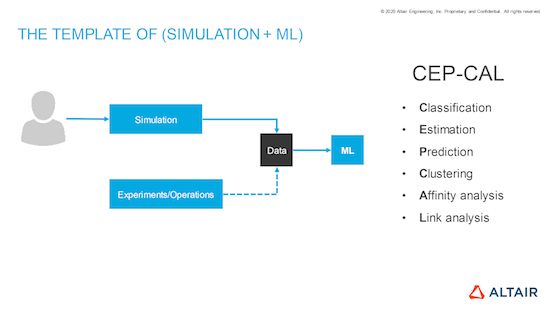

Starting with a problem that the engineer defines, ML can help streamline optimization of a high number of variables. The workflow begins by using a co-simulation model to create datasets to train a ROM, providing sufficiently accurate results across all of the physics required (Fig. 2).

“ROM can improve product design in numerous ways,” says Jenmy Zhang, post-doctoral researcher at Autodesk. “A few examples include reducing overall time to generate many design possibilities, predicting part performance on the fly during interactive design and product performance probabilistic sensitivity or uncertainty analyses, which require repeating a simulation many times.”
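The uncertainty analyses Zhang mentions are a natural fit for a fast model, because they repeat the same evaluation thousands of times. The following sketch assumes a hypothetical `fast_model` standing in for a trained ROM, along with made-up input distributions for thickness and load.

```python
import random
import statistics

def fast_model(thickness_mm, load_n):
    """Hypothetical ROM stand-in: returns a stress-like response instantly."""
    return load_n / thickness_mm ** 2

def monte_carlo(n_runs, seed=0):
    """Propagate input scatter through the fast model by repeated sampling."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_runs):
        thickness = rng.gauss(2.0, 0.05)  # assumed manufacturing tolerance
        load = rng.gauss(100.0, 5.0)      # assumed load variability
        results.append(fast_model(thickness, load))
    return results

stress = monte_carlo(10_000)
mean_stress = statistics.fmean(stress)
stdev_stress = statistics.stdev(stress)
p95_stress = statistics.quantiles(stress, n=20)[-1]  # 95th percentile
```

Ten thousand evaluations are trivial for a reduced model but would rarely be affordable with a full finite element run per sample.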

The Accuracy Question

AI can handle large, complex applications. It has shown that it can work around data shortages. Furthermore, these technologies have proven that they can quickly resolve time-critical applications. The big question is: Are AI-enabled simulations as accurate as conventional physics-based systems? Sometimes the answer is “yes,” and sometimes “it doesn’t matter.”

The characteristic of AI technology that comes into play here is its ability to “learn” the physics of an application. Development teams can combine physics-based simulation models with an ML model to improve overall model accuracy. The underlying physics can be described with known physics-based formulations, and the uncertainty or unmodeled dynamics can be captured using a neural network. In this way, AI allows engineers to solve problems through simulation that in the past could not be described by physics.
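A small example shows the hybrid pattern of known physics plus a learned correction. Everything here is an assumption for illustration: the spring model, the synthetic "test data" with a cubic stiffening term, and the use of a polynomial regression as a lightweight stand-in for the neural network that would capture the unmodeled behavior.

```python
import numpy as np

rng = np.random.default_rng(0)

def physics_model(x, k=100.0):
    """Known physics: linear spring force F = k * x."""
    return k * x

def measured_force(x):
    """Hypothetical test data: linear spring plus unmodeled stiffening and noise."""
    return 100.0 * x + 400.0 * x ** 3 + rng.normal(0.0, 0.2, size=x.shape)

x_train = np.linspace(-0.5, 0.5, 50)
f_train = measured_force(x_train)

# Learn only what the physics misses: the residual between data and model.
residual = f_train - physics_model(x_train)
residual_fit = np.polynomial.Polynomial.fit(x_train, residual, deg=3)

def hybrid_model(x):
    """Physics-based prediction plus the data-driven correction."""
    return physics_model(x) + residual_fit(x)

# Compare both models against the noise-free behavior at new points.
x_test = np.linspace(-0.45, 0.45, 25)
truth = 100.0 * x_test + 400.0 * x_test ** 3
physics_rmse = np.sqrt(np.mean((physics_model(x_test) - truth) ** 2))
hybrid_rmse = np.sqrt(np.mean((hybrid_model(x_test) - truth) ** 2))
```

Because the data-driven part only has to learn the residual, it needs far less data than a model that must rediscover the linear spring behavior from scratch.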

However, there are limitations that development teams should keep in mind.

“Machine learning typically produces results as accurate as the data it has been learning from,” says Peter Mas, director of Simcenter engineering and consulting services for Siemens Digital Industries Software. “If it just makes relationships without any physical meaning, the machine learning model will not be able to generalize this to new problems. If, on the other hand, the machine learning algorithm also learns the physics behind the data, it will be able to replace a pure physics-based simulation while being as accurate as the data that it learned from.”

One advantage, though, is that ML models can improve over time. When new data becomes available, neural networks can be formulated in such a way that they can improve themselves without forgetting the physics that they have already learned. One method that can be used to accomplish this is the GAN approach, where the learning network is constantly reviewed by the discriminator network to evaluate the quality of the output versus currently available information.

In some applications, the standard for accuracy is measured on a sliding scale. Here, ML-enabled simulations excel.

“The level of accuracy of ROM models used for wide design space exploration and optimization does not need to be at the level of a high-fidelity physics-based model, but rather just requires the capture of the essential behavior and relationships,” says Engelmann. “While classical surrogate models, based on algebraic fitting, work in some applications, they do not capture the essential behavior and relationships for many problems of current interest. The state-of-the-art of machine learning-based ROM models can do just that even for highly nonlinear and transient responses across multiple physics.”

A Fresh Look at Data Management

The fact that AI technologies use data differently than physics-based simulations raises questions around data management demands.

If product development teams are to rely on virtual data, then CAE users must become adept at routinely collecting data and managing it effectively. The volumes required by ML mandate efficient storage and careful management of data as a strategic asset to the organization. This can only be achieved if data management becomes a routine part of design and engineering workflows.

Unfortunately, current practices often don’t conform to these protocols. Too many product development teams simply don’t adhere to good data management practices. To rectify the situation, companies will have to make fundamental changes.

“Most engineering teams delete data from failed simulations,” says Fatma Kocer, vice president of engineering data science at Altair. “This needs to change. All data that has been simulated and generated—whether it was a failure or a success—needs to be kept. Of course, this creates large files, so a data management team within the organization needs to compress these files to extract the relevant metadata so there is relevant information to apply to future simulations.”
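One way to act on Kocer's advice is to reduce every run, failed or not, to a compact metadata record and store the records compressed. The sketch below is an assumption about what such a record might hold. The schema, field names and file name are hypothetical, and gzip-compressed JSON Lines is just one convenient format.

```python
import gzip
import json
import os
import tempfile

def summarize_run(run_id, inputs, status, outputs=None, error=None):
    """Reduce one simulation run to a small metadata record (hypothetical schema)."""
    return {
        "run_id": run_id,
        "inputs": inputs,
        "status": status,  # "converged" or "failed"
        "outputs": outputs or {},
        "error": error,
    }

def save_archive(records, path):
    """Write the records as gzip-compressed JSON Lines, one run per line."""
    with gzip.open(path, "wt", encoding="utf-8") as fh:
        for record in records:
            fh.write(json.dumps(record) + "\n")

def load_archive(path):
    with gzip.open(path, "rt", encoding="utf-8") as fh:
        return [json.loads(line) for line in fh]

records = [
    summarize_run("run-001", {"thickness_mm": 2.0, "load_n": 100.0},
                  "converged", outputs={"max_stress_mpa": 25.0}),
    # The failed run is kept too: it marks a region the solver could not handle.
    summarize_run("run-002", {"thickness_mm": 0.4, "load_n": 100.0},
                  "failed", error="solver diverged"),
]

with tempfile.TemporaryDirectory() as tmp:
    archive_path = os.path.join(tmp, "runs.jsonl.gz")
    save_archive(records, archive_path)
    restored = load_archive(archive_path)

failed_inputs = [r["inputs"] for r in restored if r["status"] == "failed"]
```

Keeping `failed_inputs` alongside the successes gives a later ML model examples of where the design space breaks down, not just where it works.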

This means that the data management community will have to come up with compressed file formats, ensure that all engineers follow the prescribed practices and make sure that IT departments store company data safely and securely.

The call to action, however, goes beyond this. Data management needs to become more widespread and pervasive so that materials, design and engineering, and manufacturing and quality data is automatically captured within companies and surfaced on-demand to those who need it.

As AI gains a firmer foothold in the simulation arena, companies will be able to more clearly define data management requirements. “As the use of machine learning expands, new demands on data management will emerge so that one can determine what data was used to train a neural network and how that data can be augmented in the future,” says Mas.
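The traceability Mas describes, knowing which data trained a network and how it was later augmented, can be approximated with a content hash recorded next to each model. This is a sketch under assumptions: the manifest fields and model names are hypothetical, and a real system would likely live inside a data management platform rather than a script.

```python
import hashlib
import json

def dataset_fingerprint(records):
    """Stable SHA-256 hash of a list of JSON-serializable records."""
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def training_manifest(model_name, records, parent=None):
    """Record which data trained a model and which dataset it extends."""
    return {
        "model": model_name,
        "n_records": len(records),
        "dataset_sha256": dataset_fingerprint(records),
        "parent_dataset_sha256": parent,
    }

base = [{"thickness_mm": 2.0, "max_stress_mpa": 25.0}]
v1 = training_manifest("bracket-rom-v1", base)

# Later, new results augment the original training set.
augmented = base + [{"thickness_mm": 1.5, "max_stress_mpa": 44.4}]
v2 = training_manifest("bracket-rom-v2", augmented,
                       parent=v1["dataset_sha256"])
```

Following the `parent_dataset_sha256` links from one manifest to the next reconstructs how the training data grew over time.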

Chances of Success

If you brush aside the enthusiasm and the hype, what are the odds of successfully incorporating AI into the design simulation process? While AI has been effectively harnessed in some areas, it is still a relative newcomer to the simulation arena.

“Today we are only in the early stages of AI design tools that guide the engineer toward solving his or her complex problem,” says Siemens’ Mas.

As a result, important infrastructure elements have yet to be developed. The challenge, however, requires not only introducing AI development frameworks and tools, but developing these elements in such a way that ensures they are accessible to existing development teams.

“To embed AI into design engineering processes, I think it is essential that frameworks and tools are presented in the prism of CAE so that they help today’s users effectively apply complex machine learning algorithms,” says Engelmann. “Doing so means not only abstracting the data science and machine learning part, but also giving users the full control of the areas that they require. Expecting a design engineer or even a methods developer to become an AI expert is not a likely proposition, but helping them understand the benefits and limitations of AI is a must.”

This raises the question of automating the development tools and processes. Consider that the success of incorporating AI into product design requires more than training an AI model or relying on specific libraries. It’s imperative to consider the entire workflow when implementing AI.

“A key factor for successful AI projects is the incorporation of automation in AI workflows, which can significantly speed up the time to production,” says David Willingham, senior product manager, deep learning at MathWorks. “Automation can mean automating part or all of the AI workflow, such as automating labeling data, training of AI models, and automatically generating the production code that will run on the final AI system.”

Some companies offer tools that they contend are suited to automate parts of the workflow. Other companies working in this area, however, find developers’ options limiting.

“The current generation of AI design tools does not provide guided and automated workflows to easily train AI models,” says Altair’s Kocer. “Although there are countless algorithm libraries, there are no proven, comprehensive, guided and automated workflows.”

What This Means for the Design Engineer

In sizing up AI’s effect on the simulation process, awareness and understanding play equally important roles. Design engineers will use AI only if they are satisfied with the results. For engineers to trust a data-driven model that uses ML, they must have a basic understanding of how the algorithms work. This means understanding where and how AI benefits them and how to combine it with physics-based simulation (Fig. 3).

Make no mistake, this will be no easy task. Typically, mathematicians and statisticians are the main users of data science and ML technologies. Simulation-driven development is typically part of physics-based science, and engineers are often the users of these technologies. Today, only a handful of people have mastered both skills. As work in this area continues, the combination of physics-based and data-driven models promises to transform the mechatronic product development process.

“While AI won’t completely transform design practices, it will amplify and make them better,” says Kocer. “With ML models, you will get quick decisions, and to come up with these models, you need good data discipline and simulation.”
