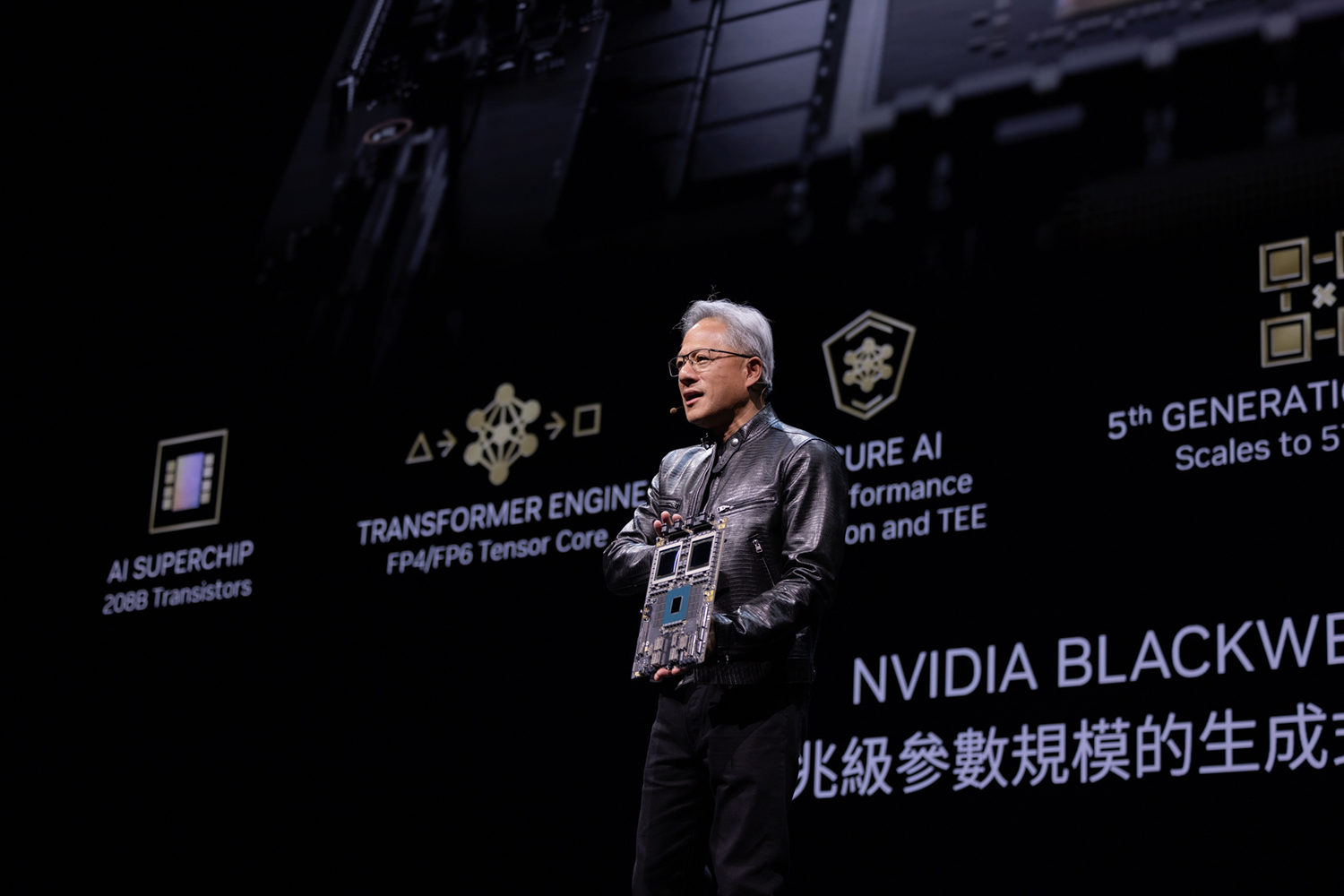

This week, NVIDIA CEO Jensen Huang flew to Taiwan, his birthplace, to unveil plans to expand the company's AI-targeted data center products. Speaking at COMPUTEX Taipei, Huang said, “The future of computing is accelerated. With our innovations in AI and accelerated computing, we’re pushing the boundaries of what’s possible and driving the next wave of technological advancement.”

Going forward, expect NVIDIA to refresh its AI processor lines on a one-year cadence. “Our basic philosophy is very simple: build the entire data center scale, disaggregate and sell to you parts on a one-year rhythm, and push everything to technology limits,” Huang explained.

At the event, Huang also revealed Rubin, the successor to the current Blackwell processor architecture, which is set to debut in 2026. According to the company, the Rubin platform features new GPUs, a new Arm-based CPU called Vera, and advanced networking with NVLink 6, the CX9 SuperNIC, and the X1600 converged InfiniBand/Ethernet switch.

Also speaking at the conference was Taiwan-born Dr. Lisa Su, CEO of AMD. Opening the show with her keynote, she said, “AI transforms virtually every business, improves our quality of life, and reshapes every part of the computing market.” She unveiled AMD's new AI-targeted processors, the AMD Ryzen AI 300 series, the company's third generation of AI-enabled mobile processors. Intel CEO Pat Gelsinger is scheduled to speak there on June 4.

For NVIDIA, data centers are where AI workloads will inevitably end up, because of their compute-intensive nature. “Companies and countries are partnering with NVIDIA to shift the trillion-dollar traditional data centers to accelerated computing and build a new type of data center, AI factories, to produce a new commodity: artificial intelligence,” said Huang. “From server, networking and infrastructure manufacturers to software developers, the whole industry is gearing up for Blackwell to accelerate AI-powered innovation for every field.”

To standardize server setup and configurations, NVIDIA is releasing the NVIDIA MGX modular reference design. In a rare instance of coopetition for common interest, NVIDIA's rivals Intel and AMD also came on board. "AMD and Intel are supporting the MGX architecture with plans to deliver, for the first time, their own CPU host processor module designs. This includes the next-generation AMD Turin platform and the Intel Xeon 6 processor with P-cores," according to NVIDIA.

With the growing use of large-scale computing in AI projects, processor makers face questions about their commitment to sustainable, or green, computing. Huang pointed out that combining GPUs with CPUs could deliver a 100x speedup while increasing power consumption only by a factor of three. Because the job finishes so much sooner, it consumes less total energy, which is his case for adding GPUs to the mix rather than running on CPUs alone.
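Taking Huang's figures at face value, the efficiency argument comes down to one line of arithmetic: energy is power multiplied by time. A system that draws 3x the power but finishes 100x faster uses 3/100 of the energy for the same job, or roughly 33x better performance per watt. The sketch below assumes both figures describe the same workload and that power draw stays constant over the run; these are simplifications for illustration, not NVIDIA's methodology, and the function names are hypothetical.

```python
def energy_ratio(speedup: float, power_ratio: float) -> float:
    """Energy for the same job relative to a CPU-only baseline.

    Energy = power x time. If the accelerated system draws `power_ratio`
    times the baseline power but finishes `speedup` times faster, the job
    consumes power_ratio / speedup of the baseline energy.
    """
    return power_ratio / speedup


def perf_per_watt_gain(speedup: float, power_ratio: float) -> float:
    """Performance-per-watt improvement over the baseline."""
    return speedup / power_ratio


# Figures quoted by Huang: 100x speedup at 3x the power (assumed constant).
speedup, power_ratio = 100.0, 3.0
print(f"Energy per job vs. CPU-only: {energy_ratio(speedup, power_ratio):.0%}")
print(f"Performance-per-watt gain: {perf_per_watt_gain(speedup, power_ratio):.1f}x")
```

Run as-is, this prints an energy ratio of 3% and a performance-per-watt gain of 33.3x. Real-world savings would depend on how much of a workload actually benefits from GPU acceleration.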

Others see liquid cooling as the greener alternative. Anson Chiu, president of LITEON Technology, said, “With the launch of the NVIDIA Blackwell platform, LITEON is releasing multiple liquid-cooling solutions that enable NVIDIA partners to unlock the future of highly efficient, environmentally friendly data centers.”

NVIDIA is not only supplying hardware to the AI market but also cultivating it. At NVIDIA GTC in March, Huang announced plans to empower AI application developers with NVIDIA Inference Microservices (NIM). At COMPUTEX, NVIDIA revealed that nearly 200 software partners, including Cadence, Cloudera, Cohesity, DataStax, NetApp, Scale AI, and Synopsys, are integrating NIM into their platforms to speed up the development of copilots, code assistants, and digital human avatars.

Huang said, “Every enterprise is looking to add generative AI to its operations, but not every enterprise has a dedicated team of AI researchers. Integrated into platforms everywhere, accessible to developers everywhere, running everywhere, NVIDIA NIM is helping the technology industry put generative AI in reach for every organization.”

Kenneth Wong is Digital Engineering's resident blogger and senior editor. Share your thoughts or suggestions at digitaleng.news/facebook.