Sponsored

As more and more design and engineering applications increase their graphics performance and take advantage of GPU acceleration, configuring a workstation that is optimized for those applications has become more complex.

“You need to buy a system that is the right balance in its configuration,” says Scott Hamilton, technical marketing operations manager for Dell’s specialty product group. “If you buy a big CPU or GPU and don’t put enough memory in the computer to supply data to the other components, then you are not going to have a great experience overall.”

While there is usually plenty of information available online gauging computer performance when it comes to standard consumer or office applications, engineers have unique workloads that are sensitive to that balance in compute power. With the wrong hardware, simulation or rendering tasks could slow down significantly, and erode productivity.

Benchmark data can help companies make apples-to-apples comparisons between workstations, CPUs and GPUs. While hardware and software vendors usually provide some of this information, there are independent, third-party resources as well. When it comes to graphics performance, the SPECviewperf 13 benchmark can be a helpful guide in determining which workstation is right for a given engineering application.

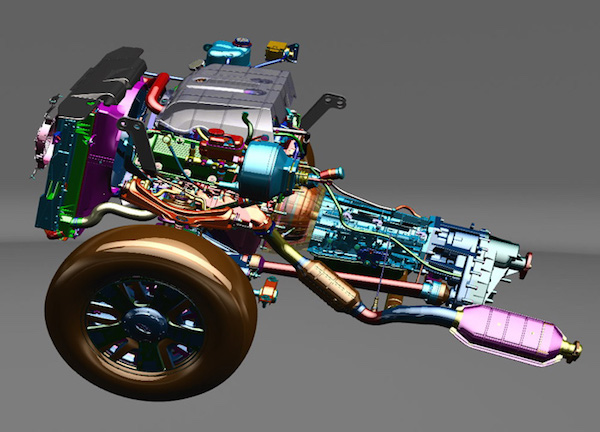

The SPECviewperf 13 benchmark is a global standard for measuring graphics performance for professional applications. Data for both Windows and Linux machines is available for a variety of applications, including 3ds Max, Siemens NX, SolidWorks, Creo, and Maya.

The benchmark is managed by the Standard Performance Evaluation Corporation (SPEC), a non-profit that was created to maintain standardized benchmarks and tools. Its Graphics and Workstation Performance Group (GWPG) includes major hardware providers like Dell and NVIDIA.

While results aren’t available for every application, Hamilton says the SPECviewperf data is still a good measuring stick. “The benefit to the workstation user is an understanding of how the graphics subsystem is going to perform relative to the software,” Hamilton says. “Ideally you would have a report specific to the software you are using, but in some cases you can use that as a guide to evaluate a similar piece of software.”

Because the benchmark provides a range of results based on a given application, users can see if a given test matches the type of workflow they are likely to encounter during normal use of the workstation. “That allows you to correlate whether that view set is the appropriate one to use to help make your decision,” Hamilton says.

The benchmark also provides insight into relative performance, because in most of the benchmarks there is a predictable scaling of performance as you move up the graphics card stack from a given vendor. For certain applications and use cases, the benchmark can also illustrate where there may be a diminishing return on a given investment.

“If you are comparing two GPUs, you may discover that a lower-cost card gives you 90% of the performance you need, and with the best price-performance ratio,” Hamilton says. Moving up to a more powerful GPU will accelerate performance, but in some cases not enough to justify the additional expense.
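The arithmetic behind that comparison is simple enough to sketch. The Python below is a minimal illustration, not a method taken from SPEC or Dell: the card names, scores, and prices are hypothetical placeholders, and the helper functions are names made up for this example. In practice you would substitute the published composite score for the viewset that best matches your workload, along with current street prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuOption:
    name: str         # hypothetical label, not a real product
    score: float      # hypothetical composite score for one viewset
    price_usd: float  # hypothetical price in US dollars


def score_per_dollar(gpu: GpuOption) -> float:
    """Price-performance ratio: benchmark points per dollar spent."""
    return gpu.score / gpu.price_usd


def relative_performance(gpu: GpuOption, baseline: GpuOption) -> float:
    """Fraction of the baseline card's score that this card delivers."""
    return gpu.score / baseline.score


def marginal_cost_per_point(lower: GpuOption, higher: GpuOption) -> float:
    """Extra dollars paid for each extra benchmark point when upgrading."""
    if higher.score <= lower.score:
        raise ValueError("the higher option must have a higher score")
    return (higher.price_usd - lower.price_usd) / (higher.score - lower.score)


# Illustrative numbers only; these are assumptions, not benchmark results.
mid = GpuOption("mid-range card", score=90.0, price_usd=900.0)
top = GpuOption("top-end card", score=100.0, price_usd=1500.0)

print(f"{mid.name}: {relative_performance(mid, top):.0%} of top-end score")
print(f"{mid.name}: {score_per_dollar(mid):.3f} points per dollar")
print(f"{top.name}: {score_per_dollar(top):.3f} points per dollar")
print(f"upgrade cost: ${marginal_cost_per_point(mid, top):.0f} per extra point")
```

With these made-up figures, the mid-range card costs about $10 per benchmark point on average, while each additional point gained by moving to the top-end card costs $60. A gap of that kind is what a diminishing return looks like in the numbers.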

While the SPECviewperf benchmarks are a valuable source of information, they need to be used in a broader context. Whenever possible, users should test their actual application on the hardware to gauge performance, and consult additional materials that might have applicable test data.

Hardware vendors like Dell and NVIDIA also publish their own benchmark data. For example, NVIDIA provides benchmark data that makes it easier to compare the relative performance of its Quadro GPUs for SolidWorks. Dell also provides guidance on its Precision workstations via certification with a wide range of design and engineering software systems.

There are other independent benchmarks that can be helpful as well. FurMark, for example, is another OpenGL benchmark that uses fur rendering algorithms to measure GPU performance.

“If you take into account benchmarks that are as close as possible to representing a typical workload, then I think you’ll come up with a better end result,” Hamilton says.

It’s also important to have a balanced system. Graphics performance isn’t the only spec that will drive hardware selection. Underpowering the workstation when it comes to memory or CPU will degrade performance, while overpowering in any of those areas might be a waste of money.

How the workstation is used will also affect these decisions. You can’t just consider the performance of one application. “An engineer may do design work in SolidWorks, and then run finite element analysis in Ansys,” Hamilton says. “How do they use the system during the design lifecycle? You may design a component in a CAD application, then close that down and move to Ansys Mechanical to do stress analysis. If you are doing those independently, you are only putting one demand on the workstation at a time. In other models, you may configure the workstation so you can perform both of those tasks at the same time.”

It’s also important to buy a workstation that can meet your future needs. A lot can change over the typical three-year refresh cycle for an engineering workstation. Consider a workstation that allows you to upgrade memory or move up to the next class of GPU down the road. “You might want to buy a higher-end system so you have that extra headroom for future usage,” Hamilton says.



Brian Albright is the editorial director of Digital Engineering.

