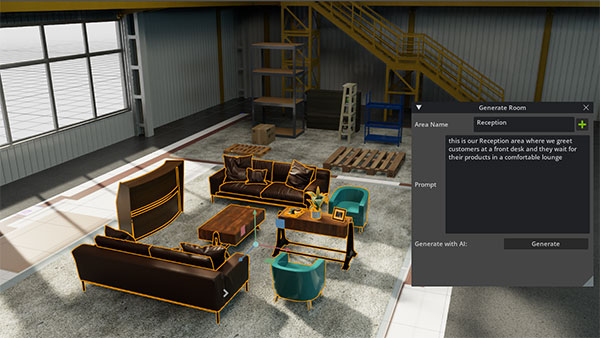

NVIDIA incorporated natural language input to make content creation easier in its AI Room Generator Extension for Omniverse. Image courtesy of NVIDIA.

Latest News

July 14, 2023

In March of this year, Ansys Chief Technical Officer Dr. Prith Banerjee took the stage as the keynote speaker at OzenCon, a simulation conference hosted by Ansys and engineering consultancy Ozen Engineering. In the weeks leading up to the event, the artificial intelligence (AI) chatbot ChatGPT, developed by OpenAI, was causing a buzz and showing off its robust natural language processing skills.

For highly technical disciplines such as finite element analysis (FEA), Banerjee believes ChatGPT offers tantalizing possibilities to drastically lower the learning curve and broaden the reach of simulation tools.

“ChatGPT will completely transform the way simulation tools are used in the future,” says Banerjee. “Today, when you use a simulation tool such as Ansys HFSS for electromagnetic simulation or Ansys Fluent for fluid simulation, you must set up a hundred different parameters.

“You almost have to have a Ph.D. in aerospace engineering to know which parameters to set,” Banerjee adds. “In the future, with technology like ChatGPT, you can actually say, ‘Run an external aerodynamic simulation over a Boeing 747 plane,’ and it will figure out for that particular case [and] the fluid settings.”

Why Was It Designed This Way?

“ChatGPT has really lit a fire under everyone in research,” Alex Tessier, director of simulation optimization and systems research at Autodesk, says. “It has hastened a lot of our early work leveraging these large language models from companies like OpenAI.”

CAD programs allow users to construct sophisticated geometry by giving them granular control (for example, extruding a 2D profile or creating surfaces based on spline curves), but they have never been able to capture design intent: the reasons the engineer felt a certain shape or profile was the best option. With the dialog-like interaction seen in ChatGPT, this may become possible, Tessier says.

“We see huge potential with these large language models. They can help the users work through logical iterations of concepts, high level designs, sort of like walking into the Holodeck in Star Trek, where you’re having a conversation with the AI to explore your design options,” he says.

Early users of algorithm-driven generative design were surprised to discover that the software often came up with ingenious lightweighting options that had escaped human engineers. Tessier thinks engineers should expect similar surprises from chatbot-driven design.

“If you ask the chatbot for a certain device that satisfies your driving requirements, you might have been thinking of a combustion engine, but the chatbot might propose an electric motor or a pneumatic mechanism instead,” he says.

The chatbot-based design workflow will eliminate many of the tedious tasks, such as searching and replacing commonly used items or placing bolts in specific places, Tessier predicts. “We view this as an augmentation, an assistance. It’s not meant to be a replacement,” he cautions. “It’s up to the human user to verify the answers.”

Going forward, Tessier thinks CAD UIs will evolve to accommodate granular design methods (the existing geometry sculpting tools) as well as high-level design methods (the conversation-style method). As he sees it, “AI may get you 80 percent of the way. But that final 20 percent will be up to you. You’re going to have to make sure that your i’s are dotted and the t’s are crossed.”

Automatically Generating a Program

Bjorn Sjodin, vice president of product development at COMSOL, has watched COMSOL users’ interactions with ChatGPT with great interest. Though COMSOL hasn’t integrated ChatGPT—or similar chatbots—into its UI, that didn’t stop ChatGPT from learning a thing or two about COMSOL. In fact, ChatGPT seems to have learned to write COMSOL simulation apps in Java.

“ChatGPT can give our users advice on modeling tasks. If they ask, say, what types of physics they should consider for certain models, or what steps to follow when setting up certain kinds of simulation, we found that ChatGPT can give you a pretty good answer. It’s just like having a copilot or a personal assistant with expertise in modeling,” says Sjodin. “It’s probably because we have been quite open with our information, like our manual.”

Sjodin has seen some users ask ChatGPT to write Java programs to execute certain simulation tasks. “It still needs some guidance from the users, but that means users don’t need to know Java or MATLAB programming to produce these programs,” he says.

But users shouldn’t blindly put their trust in AI-generated code, Sjodin warns. “You can ask the same question, but you don’t always get the same answer. AI tends to introduce certain randomness. Sometimes that randomization can introduce errors,” he says.

This suggests chatbots like ChatGPT could become an effective way to cut down on mundane programming and repetitive tasks, but the user needs domain knowledge to assess the quality of the AI-generated output. For example, if you’re asking ChatGPT to write a program to simulate heat transfer in a battery pack, you must know enough about the phenomenon to tell whether the output code makes sense.
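The run-to-run variation Sjodin describes is typical of language models that choose each next word by sampling from a probability distribution instead of always taking the top choice. The sketch below is a toy illustration, not ChatGPT's actual implementation: the scores in `logits` are made up, and `sample_token` is a hypothetical helper. It shows that a "temperature" of zero returns the same answer every time, while a higher temperature can return different answers to the same question.

```python
import math
import random

# Hypothetical scores a model might assign to the next word of an answer.
logits = {"conduction": 2.0, "convection": 1.5, "radiation": 0.5}


def sample_token(logits, temperature, rng):
    """Pick one token; temperature 0 always takes the top-scoring token."""
    if temperature == 0:
        return max(logits, key=logits.get)
    # Softmax over temperature-scaled scores (shifted by the max for stability).
    top = max(logits.values())
    weights = [math.exp((score - top) / temperature) for score in logits.values()]
    return rng.choices(list(logits), weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so the demo itself is repeatable
greedy = {sample_token(logits, 0, rng) for _ in range(200)}
sampled = {sample_token(logits, 1.0, rng) for _ in range(200)}

print("temperature 0:", greedy)
print("temperature 1:", sampled)
```

When the same sampling is applied word after word in a generated program, small differences early on can lead to noticeably different code, which is why reviewing the output matters.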

Sjodin believes there’s a desire among simulation users to interact with their software through chatbots. After all, typing in a command or request is far more natural than fiddling with menu buttons and dialog boxes. “We will probably do something in the future, but we have barely started thinking about it,” he says.

Technically, AI-based chatbots can easily be integrated into FEA programs, but Sjodin wants users to remember one thing: “Chatbots are good at chatting, but they’re not particularly good at math.”

Reduced-Order Models on the Rise

Solve time usually determines the cost of a simulation job: The longer it takes to solve, the costlier it is. This has led some to employ reduced-order models (ROMs) and uncertainty quantification (UQ) to drastically cut down solve time. ROMs and UQ allow you to bypass the need to run every simulation on the full physics model. Instead, you can focus on a small number of parameters that make a huge difference in simulation outcomes. Both methods often draw on AI or machine learning (ML).

“[Using physics solvers] is very accurate, but it takes a long time to solve,” Banerjee says. “Simulation problems could have 200 million unknowns, a billion unknowns, and so on. With ROMs, you can take a problem with a billion unknowns and automatically reduce it to maybe 1,000 unknowns.

“At Ansys we have a suite of technologies with static ROMs, dynamic ROMs, linear ROMs, and nonlinear ROMs. They’re built into our Twin Builder, our digital twin tool,” he adds.
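To make the idea of shrinking the number of unknowns concrete, here is a minimal sketch of one common approach, a projection-based ROM built with proper orthogonal decomposition (POD). It is an assumption chosen for illustration, not necessarily the method used in Ansys Twin Builder. The toy problem (1D steady heat conduction with two made-up heat-source shapes, `f1` and `f2`) is deliberately constructed so that two modes are enough; real problems need more modes and lose some accuracy.

```python
import numpy as np

n = 200  # full-order unknowns (toy size; production models have millions)

# Full-order operator: 1D steady heat conduction, fixed temperature at both ends.
A = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)

grid = np.linspace(0.0, 1.0, n)
f1 = np.sin(np.pi * grid)               # hypothetical heat-source shape 1
f2 = np.exp(-50.0 * (grid - 0.3) ** 2)  # hypothetical heat-source shape 2


def load(mu1, mu2):
    """Right-hand side for a given pair of source strengths."""
    return mu1 * f1 + mu2 * f2


def solve_full(mu1, mu2):
    """Expensive path: solve all n unknowns."""
    return np.linalg.solve(A, load(mu1, mu2))


# Offline stage: run a few full solves and collect them as snapshot columns.
training = [(1.0, 0.0), (0.0, 1.0), (0.5, 2.0), (2.0, 0.5)]
snapshots = np.column_stack([solve_full(*mu) for mu in training])

# POD: the leading left singular vectors form the reduced basis.
U, _singular_values, _ = np.linalg.svd(snapshots, full_matrices=False)
basis = U[:, :2]               # n x 2
A_r = basis.T @ A @ basis      # reduced operator, only 2 x 2


def solve_reduced(mu1, mu2):
    """Cheap path: solve 2 unknowns, then map back to the full grid."""
    q = np.linalg.solve(A_r, basis.T @ load(mu1, mu2))
    return basis @ q


# Online stage: compare both paths at a parameter pair not used in training.
x_full = solve_full(1.7, 0.9)
x_rom = solve_reduced(1.7, 0.9)
rel_error = np.linalg.norm(x_full - x_rom) / np.linalg.norm(x_full)
print(f"unknowns: {n} -> {A_r.shape[0]}, relative error: {rel_error:.2e}")
```

The expensive full solves happen once, up front. After that, each new query only needs the small reduced system, which is where the time savings come from.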

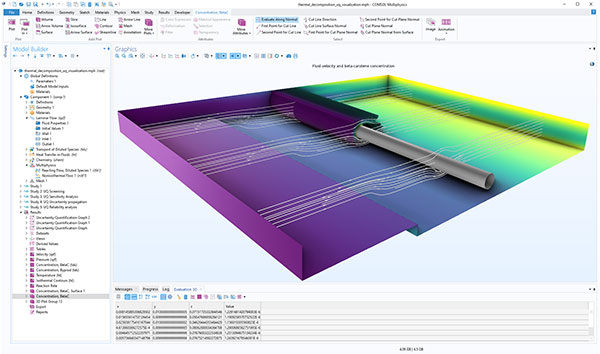

In 2021, COMSOL added a new UQ Module to its Multiphysics software. The company describes it as a tool for “understanding the impact of model uncertainty—how the quantities of interest depend on variations in the inputs of a model. It provides a general interface for screening, sensitivity analysis, uncertainty propagation, and reliability analysis.”

The UQ Module gives COMSOL users a way to use machine learning to build ROMs or surrogate models based on the users’ own historical data, which can speed up simulation by bypassing the need to run full physics simulation.
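As a rough illustration of the surrogate idea, the sketch below fits a cheap model to a handful of stored results and then uses it to propagate input uncertainty by Monte Carlo sampling. It does not use COMSOL's API. The data, the quadratic relationship between current and peak temperature, the input distribution, and the 120 °C limit are all hypothetical values chosen for the example.

```python
import numpy as np

# Hypothetical historical runs: drive current (A) vs. simulated peak temperature (deg C).
current = np.array([6.0, 7.0, 8.0, 9.0, 10.0, 11.0, 12.0, 13.0, 14.0])
peak_temp = 25.0 + 0.8 * current**2  # stand-in for stored full-physics results

# Surrogate: a quadratic least-squares fit to the historical data.
coeffs = np.polyfit(current, peak_temp, deg=2)


def surrogate(i):
    """Predict peak temperature without running the full simulation."""
    return np.polyval(coeffs, i)


# Uncertainty propagation: the input current is assumed to be normally
# distributed, and the surrogate is evaluated at many random samples.
rng = np.random.default_rng(42)
samples = rng.normal(loc=10.0, scale=0.5, size=100_000)
predicted = surrogate(samples)

mean_temp = predicted.mean()
std_temp = predicted.std()
p_exceed = np.mean(predicted > 120.0)  # fraction of samples above the limit

print(f"mean {mean_temp:.1f} C, std {std_temp:.1f} C, P(T > 120 C) = {p_exceed:.3f}")
```

Running 100,000 full physics simulations would be impractical, but 100,000 evaluations of a fitted polynomial take a fraction of a second. The trade-off is that the surrogate is only trustworthy inside the range covered by the data it was fitted to.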

“This is fairly new technology. Some of the algorithms we have in the UQ Module didn't exist 10 years ago,” says Sjodin.

Learning Machine Speak

NVIDIA has been pitching its immersive 3D simulation environment, Omniverse, as a virtual place to house digital twins. In March, Mario Viviani, developer relationship manager for Omniverse, stirred up excitement with a video demonstration showing how to use ChatGPT-style natural language input for scene creation.

The AI Room Generator Extension (available from GitHub) is powered by NVIDIA DeepSearch and GPT-4. When creating environments such as warehouses and reception areas, instead of dragging and dropping items from a library, users can use prompts, such as “add common items found in a warehouse/reception area.”

The default layout can be further customized and configured by the user, but the extension speeds up the creation of a basic environment. The method points to a fresh new usage paradigm in 3D content creation software, making it more accessible to a wider audience.

Whereas a programming language requires highly specific input in a predefined syntax, ChatGPT-style input is far more forgiving. Its robust natural language processing offers greater freedom in how the user phrases the command.

“The extension combines the prompt provided by the user (for example, ‘fill the defined space with classic art deco furniture’) with a system prompt provided to GPT-4 that provides more context to the large language model on how to process the request,” Viviani explains. “So the system prompt may be as complex as, for example, ‘You are an area generator expert. Given an area of a certain size, generate a list of items that are appropriate to that area, in the right place, and with representative material.’”
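The pairing Viviani describes can be sketched in a few lines. The system prompt text is the one quoted above; everything else is an assumption for illustration, including the `build_messages` helper, the way the area size is passed in, and the role/content message layout (a convention used by chat-style model APIs). The extension's real code may be organized quite differently, and no model is actually called here.

```python
# System prompt as quoted in the article; the surrounding code is a hypothetical sketch.
SYSTEM_PROMPT = (
    "You are an area generator expert. Given an area of a certain size, "
    "generate a list of items that are appropriate to that area, "
    "in the right place, and with representative material."
)


def build_messages(user_prompt, width_m, depth_m):
    """Combine the fixed system prompt with the user's request and area size."""
    user_content = f"Area size: {width_m} m x {depth_m} m. Request: {user_prompt}"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]


messages = build_messages(
    "fill the defined space with classic art deco furniture", 6.0, 4.0
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The user only ever types the short request. The system prompt stays fixed behind the scenes and steers the model toward output the application can turn into scene objects.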

“The AI Room Generator is one of our experiments using large language models for accelerating 3D workflows,” says Viviani. “We’ve run another small experiment called Camera Studio, which is an extension that allows users to generate virtual cameras in Omniverse by creating the camera settings via ChatGPT.”

Early users of the AI-based image-making program Midjourney discovered it’s not so easy to ask the software to produce exactly the type of image desired. Quite often, there’s a mismatch between what the user is attempting to describe with his or her prompt, and what the software produces. This is the double-edged nature of natural language input: It’s not a precise language like mathematics; it’s open to interpretation.

Editor’s Note: Some parts of this article previously appeared in “How ChatGPT and Other AI Bots Could Make Simulation Easier, Faster,” published June 2023.


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
