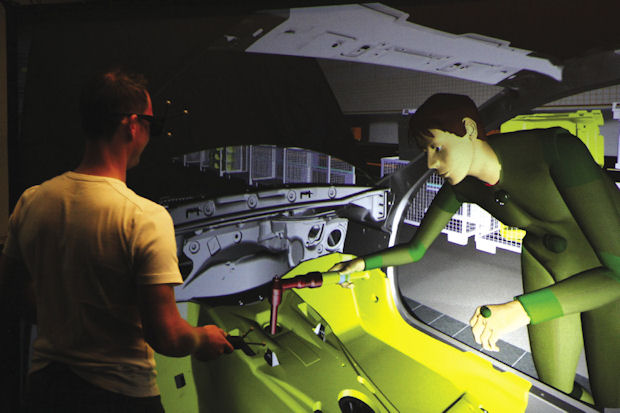

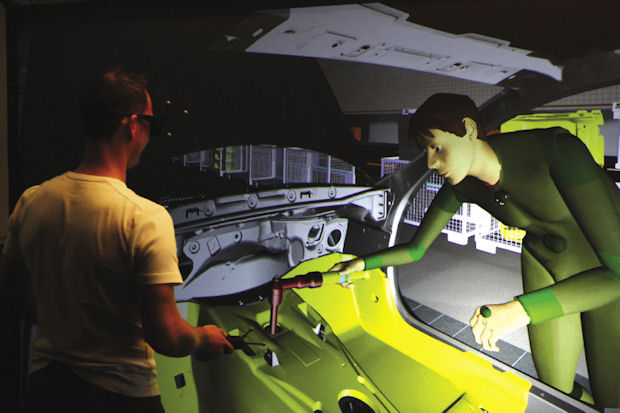

IC.IDO’s Virtual Reality (VR) technology enables customers to present, manipulate and exchange product information virtually. Image courtesy of ESI Group.

July 1, 2014

Add a little bit of Iron Man, a little Star Trek and a lot of Matrix, and the combination will give you some idea of working in a perfect virtual reality (VR) environment. Though we’re not quite there, hardware and software developers have worked the details for decades and gotten us surprisingly close (fortunately minus the red and blue pills). Imagine a world that isn’t physical, yet you can walk through it, observe the walls or scenery go by, reach out and touch a button, chair or wall, and see everything react in real time. Immersive VR spaces are here today, created through a combination of high-definition projectors, head-mounted displays, polarized glasses, wireless tracking technology, computer graphics and more, all coordinated to work in unison and present realistic, user-interactive experiences.

Across the board, it’s clear that the people involved in VR systems are passionate about the technology and are building on achievements in gaming, entertainment and simulator training. Automotive, architectural and healthcare programs use immersion tools to uncover problems and verify solutions. The benefits are thoroughly tangible: for example, in 2013 Ford Motor Co. used its Immersion Laboratory to verify more than 135,000 details on 193 virtual vehicle prototypes before building physical prototypes, and that was in one year alone.

Although describing 3D virtual environments on a flat piece of paper seems incongruent, here are some personal perspectives (and 2D images) that might convince you of VR’s possibilities.

Creating Virtual Reality

The Sensics XSight panoramic HMD for single-viewer virtual-reality immersion with 1920x1200 pixels per eye, tiled OLEDs and 123° field of view. Image courtesy of Sensics.

Starting in the 1960s, the technology behind flight simulators, displays, video games, computer input devices (the mouse, tracking gloves and cameras) and Hollywood effects cross-pollinated to support the emergence of VR environments. More recently, developments in high-performance computing (HPC) added the processing power that makes real-time responses possible.

Jason Jerald, founder and president of NextGen Interactions, has the big picture on VR immersion technology, having worked in computer graphics and 3D interfaces for more than 20 years.

“Virtual reality is getting a lot of press and the media has really latched onto it, but it’s been around a long time,” he says. “It’s primarily been for visualization purposes—such as for design reviews, to make sure things look right or are in reach.”

But Jerald admits that for engineering applications, such as putting together components to make sure they fit, “we have a ways to go.”

Currently, VR configurations, whether for gamers or engineers, take two basic approaches, each with its own appeal. On one side are permanent or portable computer-assisted virtual environment (CAVE) systems, where one or more users, immersed in a 180° or 360° display, wear polarized glasses and stand, sit or walk within a partially or fully enclosed space. Tracking technology on the lead person governs the changing views and interactions.

On the other side are head-mounted display (HMD) systems worn by a single individual, who sees perhaps an 80° 3D view delivered as separate near-to-the-eye images. Again, the user interacts with the scene by wearing infrared or electromagnetic devices that work with a tracking system to capture head and hand motions.

Top-of-the-Line Immersion Experiences

Tom DeFanti is the director of visualization at the Qualcomm Institute (QI), a facility that houses several generations of CAVEs at the University of California San Diego (UCSD). His name appears in just about any description of these systems, which is no surprise since he created the first such VR environments in the early 1990s while founding the Electronic Visualization Lab (EVL) at the University of Illinois-Chicago. Officially, the name CAVE is a trademark of the University of Illinois, but its variations are used everywhere.

Qualcomm Institute (QI) Director of Visualization Tom DeFanti, one of the pioneers of CAVE VR environments, immersed in a simulation of the QI building at the University of California San Diego. Image courtesy of UCSD.

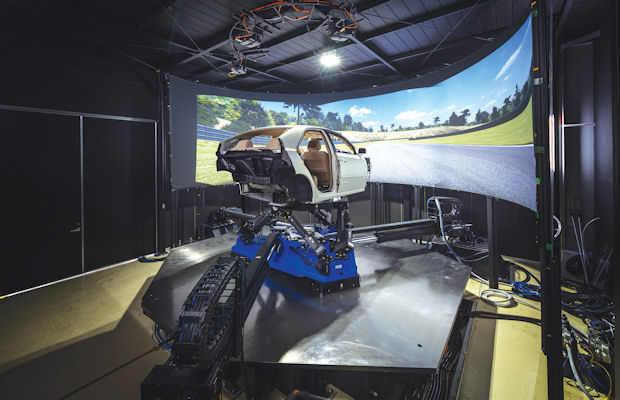

Nine degree-of-freedom VI-DriveSim immersive virtual-reality vehicle test platform. It is based on MSC Adams multibody dynamics simulation software from MSC Software. Image courtesy of VI-grade.

Researchers Eduardo Macagno and Jurgen Schulze inside the virtual reality simulation of a hospital clinic, in the CAVE at the Qualcomm Institute at the University of California San Diego. Image courtesy of UCSD.

A CAVE’s visuals are rear-projected onto multiple large screens or displayed on groups of LCD panels. When the configuration includes three or more walls, floor and/or ceiling, even a small space offers the illusion of much greater volume. UCSD’s StarCAVE is pentagon-shaped, with upper screens that tilt inward; 34 projectors combine to create the stereo visuals.

In listing what comprises a useful and affordable do-it-yourself VR system, DeFanti says, “It should involve a tracked real-time 3D stereo image with a screen large enough that you feel immersed and not see the edges as a dominant part of the image.” One can do this with single units or arrays of HDTVs and UHDTVs you can buy at an electronics retailer, he adds, noting that while good head/hand tracking can run about $10,000, “cheaper means are coming along. Kinect systems and their clones are becoming useful for controls, but not so much for head-tracking.” (Editor’s Note: See calit2.net/newsroom/article.php?id=2273 for a view of the newest UCSD CAVE incarnation called WAVE, comprising 35 LCD panels lining a horizontal semi-cylinder.)

One of the primary users of the UCSD StarCAVE for design evaluation purposes has been Eve Edelstein, a research professor recently appointed to the University of Arizona. Edelstein brings a diverse background in architecture, anthropology and neuroscience to programs that observe how humans respond to their environment, whether natural or man-made.

In one of Edelstein’s projects, the StarCAVE was set up to visualize part of a hospital under construction, specifically a nurse’s station with views to two patient rooms: “We brought in actual sound recordings of ambient noises plus a doctor reciting a medical order.” She says that participants in the VR scenario could not distinguish the spoken words, proving the need to change the layout and even to use building materials with different acoustical properties (which were then simulated and approved).

High-fidelity VR systems already exist in such corporations as Caterpillar, General Motors and Ford Motor Co. At Ford, a CAVE Immersion Laboratory contains several VR setups with different goals. Elizabeth Baron, a VR and Advanced Visualization specialist, says the goal of their lab is to give designers and engineers a cross-disciplinary approach to what customers would see if they sat in the vehicle.

“Instead of doing engineering by PowerPoint or spreadsheets, we’re all looking at the same thing,” Baron adds. “It’s a great tool to get designers, engineers and ergonomics people to understand the same thing at the same time.”

Ford Immersive Vehicle Laboratory, with Elizabeth Baron, Virtual Reality and Advanced Visualization technical specialist. Image courtesy of Ford Motor Co.

Begun in 2006, Ford’s Dearborn, MI, Immersion Lab now contains three types of VR experiences: the CAVE that helps the team perform studies on driver visibility and ergonomics; a Virtual Space “powerwall” recently upgraded to a 4K (4096x2160 resolution) display for 3D model review; and a Programmable Vehicle Model viewed using HMD equipment. The company has just opened a second such lab in Australia, and has satellite viewing centers in five other countries, supporting international design collaboration.

Head-mount VR Systems

The second VR interaction mode is through HMD technology. Far less expensive than CAVE systems, HMDs combine stereo, near-to-eye displays with real-time tracking systems. Important factors include resolution, color depth, field of view and brightness. The closed-loop visual and audio functions immerse wearers in their own highly interactive spaces: moving the physical controllers in one’s hands triggers a corresponding action in the virtual world. However, it’s a single-user system, meaning no one else in the room sees any part of the VR view.

Virtual reality headset in use at the Ford Immersive Vehicle Laboratory. Image courtesy of Ford Motor Co.

Ford’s Immersion Lab uses HMDs in its Programmable Vehicle Models (PVM). These comprise a physical seat and steering wheel mock-up set to the dimensions of a given design. IR tracking spheres on the HMD and gloves let users touch and feel the wheel, shift, seat and other interior elements for ergonomic evaluation.

User view inside the virtual reality 3D CAVE at the Ford Immersive Vehicle Laboratory. Tracking spheres on polarized glasses and hand controllers allow closed-loop positioning feedback. Note the virtual “hand” being used to verify the position of the door handle. Image courtesy of Ford Motor Co.

Until recently, the PVM field of view (FOV) was 50° to 80°. Since October, Ford has been evaluating one of the hottest items in the VR world, the 105° FOV Oculus Rift, an HMD unit plus developer kit first offered on Kickstarter. Its maker, Oculus VR, was recently acquired by Facebook, unleashing hundreds of online speculations about adding VR to social media interactions. But for the engineering world, a favorable point is its operation with PCs.

Sony’s Project Morpheus HMD (in development for PlayStation use) may also be a contender. It has a polished visor-style look and feel compared to the current ski-goggle appearance of Oculus Rift. It’s also slimmer, since much of the processing is done in a separate box that sits between the headset and the PS4. Its dependence on the PS4 makes it unavailable for engineering VR applications, but perhaps demand will drive expansion to a more open platform.

Two high-end, highly experienced HMD manufacturers are NVIS and Sensics. NVIS is a 30-year-old company whose clients include Honda, BMW, Boeing, MIT and Walt Disney. Its HMDs support such advanced options as eye-tracking (knowing where you are looking) and motion-tracking (with three or six degree-of-freedom configurations). Sensics, a panoramic HMD company, has a broad range of products that offer ultra-wide FOV and high resolution based on flat-panel, OLED or tiled OLED displays.

Bringing in the Experts

A number of commercial companies can help you create a VR visualization solution. ESI Group markets the IC.IDO solution specifically for the engineering market.

“For a VR system, there are three components: first, the hardware, from projectors to computers to tracking systems; second, the software (that we make) that tells all the hardware what to do so everything is correctly scaled and behaving in unison; and third, the operators who build up the scenarios to study different engineering problems,” says Ryan Bruce, ESI Group’s business development manager.

Close-ups of mounting system hardware for VI-grade’s VI-DriveSim nine-degree-of-freedom vehicle-handling simulator. Image courtesy of VI-grade.

IC.IDO software scales from use on desktop PCs to CAVE immersion systems. It can handle massive data sets and collaborate among multiple locations, but a major strength is that it is based on a real-time physics engine to respond to your actions. For example, Bruce offers, “If I’m (virtually) trying to replace an alternator, I can pull the bolts out, grab and pull it. If there’s an electrical cable connected to it, the (virtual) cable will show you’re stretching it too much.”
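To make the cable example concrete, here is a minimal Python sketch of the kind of check a real-time physics engine might run each frame. It is not IC.IDO's actual method: the function names, the straight-line cable model and the 5% strain limit are all assumptions chosen for illustration.

```python
import math

def cable_strain(anchor_a, anchor_b, rest_length):
    """Fractional stretch of a cable between two 3D points.

    Hypothetical model: the cable is treated as a straight line
    between its endpoints, and a slack cable has zero strain.
    """
    distance = math.dist(anchor_a, anchor_b)
    return max(0.0, (distance - rest_length) / rest_length)

def is_overstretched(anchor_a, anchor_b, rest_length, max_strain=0.05):
    """True when the strain exceeds the allowed limit (5% is an assumed value)."""
    return cable_strain(anchor_a, anchor_b, rest_length) > max_strain

# One end stays on the engine block; the other moves with the alternator
# as the user pulls it away (positions in meters, illustrative values).
engine_end = (0.0, 0.0, 0.0)
alternator_end = (0.0, 0.0, 1.1)
print(is_overstretched(engine_end, alternator_end, rest_length=1.0))
```

In a full system this test would be re-evaluated as the tracked hand position updates, so the warning appears while the user is still pulling.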

Other companies offer both specialized and broad options to create various VR systems. Mechdyne, an international consulting firm in the visual information business, licensed the original CAVE technology and name from DeFanti’s EVL facility. It built upon that to develop flat, curved, CAVE and portable immersion systems with multiple interaction options. Virtalis is another VR system developer; its ActiveCUBE, ActiveWALL, ActiveMOVE and ActiveSPACE variously include options for portability, full immersion, HMD use and remote participation.

Always Room for Improvement

NextGen Interactions’ Jerald says HMD systems are getting better at dealing with “simulator sickness”; CAVEs tend to have fewer problems with this since latency is less of an issue. Another challenge is using curved, seamless display walls. These improve realism (in fact, people tend to walk into them), but the images are harder to align.

There’s no question about the demand for such technology to enhance today’s design and visualization tools, and developments in MEMS sensors and computational algorithms will continue to expand VR capabilities. As QI’s DeFanti concludes, “Design engineers would love to see their designs from every angle, in super detail, and in stereo 3D from their precise perspective. It just makes a simulation so much better.”

Michael Abrash, Oculus’ chief scientist, explains “What VR Could, Should, and Almost Certainly Will be within Two Years” in a talk given during Steam Dev Days 2014.

About the Author

Pamela Waterman worked as Digital Engineering’s contributing editor for two decades.
