Hype around the use of artificial intelligence (AI) has always been high but, in the past few years, the coverage around AI in healthcare applications has seemed to reach a peak. AI could automate the process of diagnosing patients and selecting optimal treatments, while saving millions of dollars.

On the surface, it seems logical that AI could potentially augment or even replace some diagnostic processes, but there is a lot of potential for things to go wrong.

Consider the experience of the MD Anderson Cancer Center’s work with IBM’s Watson platform, which was expected to revolutionize clinical decision-making. Not only were there technical failures—Watson couldn’t work with the electronic medical records system—but the system was never fully functional, and the entire project wound up being a multi-million-dollar boondoggle.

Outside reports on Watson-based work at the Memorial Sloan Kettering Cancer Center indicate that the technology also produced inaccurate and even unsafe recommendations, in part because of poor training the AI system received from engineers and clinical staff.

The high-profile failures of Watson point to a key weakness in AI when it comes to healthcare applications: Despite the glut of medical information available, much of the data isn’t very good. In the case of treating cancer, for example, oncologists are selecting among treatments that don’t necessarily have comparable clinical trial data available. There is also much about how certain types of cancer operate in the body that isn’t well understood.

In other words, it’s easy to train an AI engine to recognize a dog from a selection of thousands of pictures because we already know what a dog is. Applying that technology to cancer diagnoses is challenging, not because AI doesn’t work, but because of the limits of the human knowledge required to train the system in the first place.

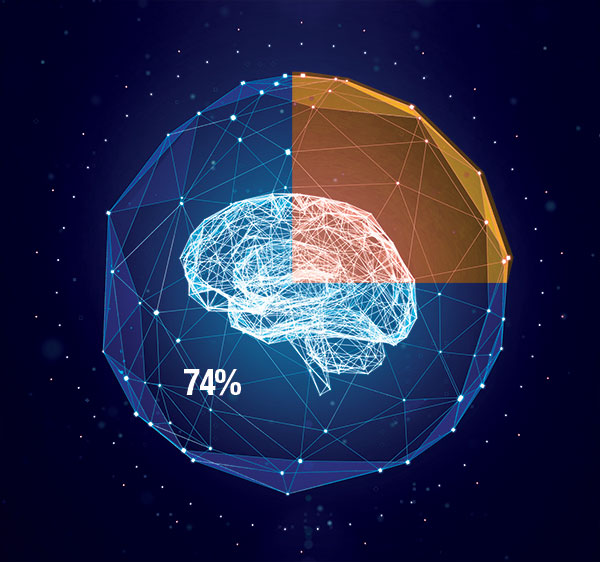

Still, there have been successes. At the University of Iowa Hospitals & Clinics, staff report that machine learning has led to a 74% reduction in surgical site infections in three years, potentially saving millions of dollars. The solution, developed with DASH Analytics, monitors electronic health record (EHR) data until it spots an opportunity where decision support can improve outcomes.

The clinician is then presented with decision support information during the regular EHR workflow. They are able to see the risk for a specific patient, and are given suggested actions that can reduce or mitigate the risk. In the case of the surgical site infection model, real-time data from the EHR is combined with patient history and the World Health Organization surgical safety checklist so that, during the closing checks after surgery, the nurse is given information that can help reduce infection risk during wound closure.

Surgeons are able to use costlier interventions on a selective basis with those patients most at risk of post-surgery infection. The DASH technology has also been chosen for the Centers for Disease Control and Prevention (CDC) Epicenters Program, where it will be evaluated in a multi-center clinical trial. There are other projects underway as well, and the National Institutes of Health (NIH) is even part of a project that hopes to successfully deploy AI and supercomputers to help improve cancer treatment.

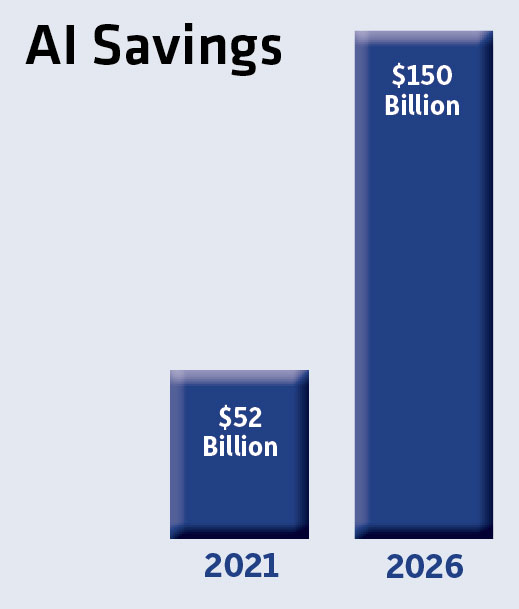

According to ABI Research, AI-based predictive analytics could save hospitals $52 billion by 2021. Last year, there were 53,000 patient monitoring devices using data to train AI models for these applications. According to ABI, that number could reach 3.1 million in the next five years.
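To put the ABI device projection in perspective, the short calculation below derives the yearly growth rate implied by going from 53,000 to 3.1 million devices in five years. It assumes smooth compound growth, which is a simplification for illustration and not ABI's forecasting model; the variable names are arbitrary.

```python
# Figures quoted from ABI Research in the paragraph above.
devices_now = 53_000
devices_projected = 3_100_000
years = 5

# Overall multiple between the two figures.
overall_multiple = devices_projected / devices_now

# Implied compound annual growth factor, assuming the same
# percentage growth every year (an assumption, not ABI's method).
growth_factor = overall_multiple ** (1 / years)

print(f"Overall increase: {overall_multiple:.1f}x")
print(f"Implied annual growth factor: {growth_factor:.2f}x")
```

In other words, the installed base would have to more than double every year for five years running to hit that number.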

Accenture estimates the potential savings could be as much as $150 billion annually in the U.S. healthcare system by 2026.

Getting to that point will require an investment in high performance computing resources, research, and data collection at all levels. “If AI vendors hope to fulfill the potential of their applications in hospitals and medical institutions, they must help implement the communications, network and IT infrastructure necessary to deliver actionable analytics,” says Pierce Owen, principal analyst at ABI. “Unfortunately, clinicians in most hospitals often must work with pen and paper or pagers from 20 years ago, and have limited access to secure, networked devices. These institutions need help to collect data in a secure manner and deliver actionable analytics while staying compliant with all regulations.”

Availability of data directly affects the training of an AI system, which has an enormous impact on how successful these systems are in medical applications.

That was a key element in a recent study of how accurately a neural network could diagnose skin cancer. Researchers at the University of Heidelberg in Germany pitted AI against a group of 48 dermatologists to see which could better distinguish cancerous skin lesions from benign ones. The dermatologists achieved 86.6% accuracy; the computer performed with 95% accuracy.

To train the system (a convolutional neural network, or CNN), the team presented it with more than 100,000 images of malignant melanomas along with benign moles and nevi. After the training, the team tested it on 300 images the CNN had not seen before. The AI system was not only able to better identify melanomas, but was less likely to misdiagnose benign moles.
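The two outcomes described here, catching more melanomas and mislabeling fewer benign moles, correspond to what statisticians call sensitivity and specificity. The sketch below shows how those two figures are computed from a set of predictions. The labels are made up for illustration and are not the Heidelberg study's data, and the function name is hypothetical.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels.

    1 = melanoma, 0 = benign. Assumes both classes appear in y_true.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # melanomas caught
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # melanomas missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign, correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # benign, wrongly flagged
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical ground truth and predictions for ten lesions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]

sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Reporting both numbers matters because a system that flags every lesion as cancer would catch every melanoma while sending every benign mole to biopsy.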

“This CNN may serve physicians involved in skin cancer screening as an aid in their decision whether to biopsy a lesion or not,” says the study’s author, Professor Holger Haenssle, senior managing physician at the Department of Dermatology, University of Heidelberg, Germany. “Most dermatologists already use digital dermoscopy systems to image and store lesions for documentation and follow-up. The CNN can then easily and rapidly evaluate the stored image for an ‘expert opinion’ on the probability of melanoma.”

Image- or pattern-recognition systems appear to be where AI shows the earliest promise when it comes to diagnostic solutions. Solutions that require analysis of other types of data are highly dependent on the ability to successfully integrate with proprietary electronic medical records and to parse noisy data streams.

Armed with enough data, AI systems could do even more good. At Mt. Sinai in New York, for example, a team of researchers created a system called Deep Patient to evaluate the hospital’s health data using unsupervised learning. Looking at data from hundreds of thousands of patients, the solution was able to improve disease prediction across a range of conditions.

Electronic health records are “noisy” when it comes to data quality, with inconsistent and difficult-to-characterize information. The Deep Patient approach used a deep neural network composed of a stack of denoising autoencoders in an unsupervised manner, and appears to be able to analyze that type of data more effectively than other approaches.
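For readers unfamiliar with the term, a denoising autoencoder is a network trained to rebuild a clean input from a deliberately corrupted copy, and a "stack" trains several of them in sequence, each on the output of the one before. The toy sketch below illustrates the idea in plain Python. It is not the Deep Patient implementation: the tied weights, layer sizes, noise level, learning rate and the four-feature "records" are all assumptions chosen to keep the example small.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def corrupt(x, drop_prob, rng):
    """Masking noise: zero each feature independently with probability drop_prob."""
    return [0.0 if rng.random() < drop_prob else v for v in x]


class DenoisingAutoencoder:
    """One layer; encoder and decoder share weights (a simplifying assumption)."""

    def __init__(self, n_in, n_hidden, rng):
        self.W = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)]
                  for _ in range(n_hidden)]
        self.b_hid = [0.0] * n_hidden
        self.b_out = [0.0] * n_in

    def encode(self, x):
        return [sigmoid(sum(w * v for w, v in zip(row, x)) + b)
                for row, b in zip(self.W, self.b_hid)]

    def decode(self, h):
        return [sigmoid(sum(self.W[j][i] * h[j] for j in range(len(h))) + b)
                for i, b in enumerate(self.b_out)]

    def train_step(self, x_clean, x_noisy, lr):
        """One gradient step: reconstruct the CLEAN input from the noisy one."""
        h = self.encode(x_noisy)
        y = self.decode(h)
        # Gradients of the squared reconstruction error at each layer.
        d_out = [(yi - xi) * yi * (1.0 - yi) for yi, xi in zip(y, x_clean)]
        d_hid = [sum(self.W[j][i] * d_out[i] for i in range(len(y)))
                 * h[j] * (1.0 - h[j]) for j in range(len(h))]
        for j in range(len(h)):
            for i in range(len(y)):
                # Shared weights get gradient from decoder and encoder paths.
                self.W[j][i] -= lr * (d_out[i] * h[j] + d_hid[j] * x_noisy[i])
            self.b_hid[j] -= lr * d_hid[j]
        for i in range(len(y)):
            self.b_out[i] -= lr * d_out[i]
        return 0.5 * sum((yi - xi) ** 2 for yi, xi in zip(y, x_clean))


def train_stack(data, hidden_sizes, drop_prob=0.3, lr=0.5, epochs=50, seed=0):
    """Greedy layer-wise training: each layer denoises the previous layer's codes."""
    rng = random.Random(seed)
    layers, inputs = [], data
    for n_hidden in hidden_sizes:
        layer = DenoisingAutoencoder(len(inputs[0]), n_hidden, rng)
        for _ in range(epochs):
            for x in inputs:
                layer.train_step(x, corrupt(x, drop_prob, rng), lr)
        layers.append(layer)
        inputs = [layer.encode(x) for x in inputs]
    return layers


# Hypothetical records: presence (1) or absence (0) of four clinical codes.
records = [[1.0, 1.0, 0.0, 0.0], [1.0, 1.0, 0.0, 1.0],
           [0.0, 0.0, 1.0, 1.0], [0.0, 1.0, 1.0, 1.0]]
stack = train_stack(records, hidden_sizes=[3, 2])

# Pass one record through every layer to get its compact representation.
features = records[0]
for layer in stack:
    features = layer.encode(features)
print(features)
```

No labels are used anywhere in the training loop, which is what makes the approach unsupervised: the network's only task is to recover the original record from a damaged one, and the compressed representation it learns along the way can then feed a separate prediction model.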

“My main goal was to show that it was possible to apply deep learning to health records more efficiently than other techniques and get more insight from the electronic health records,” says Dr. Riccardo Miotto, data scientist at the Icahn School of Medicine at Mount Sinai, and lead author of the study.

These neural network-based AI systems “learn” the way a human does: not through questions and answers, but by gradually testing themselves against data that has been classified according to whatever task the researchers want them to perform.

That’s why image-based identification of, say, skin cancer or anomalies in mammograms is so well suited to this approach. The key, then, is utilizing AI systems that are backed by sufficient HPC resources, and then providing them with as much good data as possible.
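The "testing themselves against classified data" loop can be shown with the simplest possible case: a single artificial neuron learning to separate two labeled groups. The sketch below is an illustration only. The one-feature data, the learning rate and the function names are assumptions, and real diagnostic networks have millions of parameters rather than two.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_classifier(examples, labels, lr=1.0, epochs=2000):
    """Fit a one-feature logistic model by full-batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(examples)
    for _ in range(epochs):
        # Test the current model against every labeled example...
        errors = [sigmoid(w * x + b) - y for x, y in zip(examples, labels)]
        # ...then nudge the parameters to shrink the average error.
        w -= lr * sum(e * x for e, x in zip(errors, examples)) / n
        b -= lr * sum(errors) / n
    return w, b


def predict(w, b, x):
    """Return 1 if the model's estimated probability is at least 0.5, else 0."""
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


# Hypothetical feature values (e.g. a lesion score) with expert labels.
scores = [0.0, 0.2, 0.8, 1.0]
labels = [0, 0, 1, 1]

w, b = train_classifier(scores, labels)
print(predict(w, b, 0.1), predict(w, b, 0.9))
```

The quality of the labels sets the ceiling here: if the experts who classified the training data were wrong or inconsistent, the loop will faithfully learn their mistakes.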

According to Miotto, there are other obstacles that AI needs to overcome before it is adopted more broadly in healthcare.

“The biggest limitation is that AI mostly captures correlation, not causation,” Miotto says. “The interpretability of the model is also tricky, because it is very complicated. It is difficult to tell a doctor why the model is predicting these results. And it is also difficult to convince doctors to use automated systems.”

HPC Leading Edge, November 2018

Focus on the use of high performance computing in healthcare and life sciences.

Brian Albright is the editorial director of Digital Engineering.
