Simulation Powers Energy Saving Analysis

Digital twins and supercomputing resources drive large-scale, multi-building simulation to promote energy conservation measures.

August 29, 2021

With sustainability and energy conservation a core imperative for the coming decades, widespread research and development efforts are focused on reducing the carbon footprint of the nation’s building infrastructure, now estimated at around 129 million edifices with a total annual energy bill of an eye-popping $403 billion.

One such effort, conducted by the Department of Energy’s (DOE) Oak Ridge National Laboratory (ORNL) leveraging the supercomputing horsepower of the DOE’s Argonne National Laboratory, is employing simulation and digital twins to assess energy use across more than 178,000 buildings in Chattanooga, Tennessee.

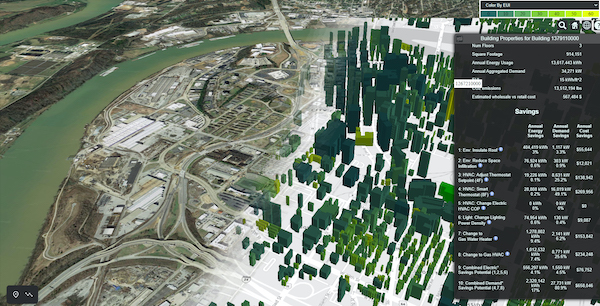

A team of researchers developed the Automatic Building Energy Modeling (AutoBEM) software used to detect buildings, generate models, and simulate building energy use for very large areas. Rather than modeling one building or even several hundred, the effort aims to create an energy picture of a large network of buildings as part of a strategy to plan the most effective energy-saving measures.

A multi-building simulation view is critical in certain scenarios like district systems, urban heat islands, and climate change impacts to the nation’s energy flows, according to Dr. Joshua New, senior R&D scientist in Grid-Interactive Controls at ORNL. “Simulation is important because it allows us to get output from the model for things that most people care about for buildings—energy, demand, emissions, or cost reductions from different energy efficient technologies,” New explains. “The advantage of a digital twin is that it allows performance improvement more quickly without the risk or expense of modifying objects and systems in the real world.” There is also a subsequent validation phase of digital predictions prior to rolling out any changes to the energy system, he said.

New’s team partnered with a municipal utility to create the digital twin of Chattanooga’s building landscape, augmenting the more traditional approach of using prototypes of common commercial buildings like offices and warehouses. Relying on prototypes alone would leave gaps in what the computer model can predict, so the team opted to validate the model against actual energy usage as part of their study and collaboration. To do so, they integrated the utility’s data on energy use for every building, down to 15-minute intervals, along with satellite images, tax assessments, and other relevant data sources. They also came up with eight energy conservation measures (among them roof insulation, lighting changes, and improvements to heating and cooling efficiency), which they projected on the model to gauge impact on energy use, demand, cost, and emissions.

A central player in the study was the Theta supercomputer at the Argonne Leadership Computing Facility (ALCF), which relies on CPU horsepower, unlike the more common HPC platform architecture that is based on GPUs. A building energy model has an average of 3,000 inputs, and a single one of them, such as the hourly lighting schedule of one room, can amount to more than 8,000 values. Scaling that exercise across eight different energy-saving measures and upwards of 100,000 buildings showcases the size of the simulation job while illustrating the need for a CPU architecture, officials said.
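To put those numbers in perspective, the short Python sketch below works through the arithmetic. It is only an illustration: the function names are hypothetical, the one-year schedule length is an assumption consistent with the "more than 8,000 values" figure, and the extra baseline run per building is an assumption rather than something the researchers state.

```python
def hourly_schedule_values(days: int = 365) -> int:
    """Number of values in an hourly schedule covering `days` days (assumed: one year)."""
    return 24 * days


def simulation_count(buildings: int, measures: int, include_baseline: bool = True) -> int:
    """Rough number of simulation runs for a campaign.

    Assumption: each building is simulated once per measure, plus one
    unmodified baseline run so that savings can be compared against it.
    """
    runs_per_building = measures + (1 if include_baseline else 0)
    return buildings * runs_per_building


# One room's hourly lighting schedule over a non-leap year
print(hourly_schedule_values())

# 100,000 buildings and eight measures, with and without a baseline run
print(simulation_count(100_000, 8))
print(simulation_count(100_000, 8, include_baseline=False))
```

Even under these simplified assumptions, the campaign lands in the range of hundreds of thousands of independent runs, which is why the job needs to be spread across many cores.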

“Modeling energy use across all of the nation’s buildings is an incredibly data-intensive undertaking that requires conducting thousands of simulations with different parameters,” explains Katherine Riley, ALCF director of science at Argonne. “Our Theta supercomputer is built to support complex, large-scale computing campaigns. Its computational and scaling capabilities are perfectly suited to handle AutoBEM’s dynamic workflow for creating nation-scale building energy models.”

As part of the process, the team encountered challenges related to compilation, load balancing, and packaging the simulation engine so it could be distributed and executed in parallel on each node’s cores. The team also needed to figure out how to execute independent simulations so that parallel runs didn’t overwrite one another’s temporary files. The ORNL research team successfully worked through these and other issues and is sharing lessons learned to help others avoid reinventing the wheel, New says.
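The temporary-file problem is a common one when many copies of the same program run side by side. The Python sketch below shows one general way to avoid it, by giving every run its own scratch directory. It is not the ORNL team's implementation: `run_simulation` is a hypothetical stand-in for a simulation engine that always writes a scratch file with the same name, and a thread pool is used only to keep the example small, where a real campaign would spread runs across processes and nodes.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def run_simulation(building_id: int, workdir: Path) -> int:
    """Hypothetical stand-in for an engine that writes a fixed-name scratch file."""
    scratch = workdir / "scratch.tmp"  # same file name in every run
    scratch.write_text(str(building_id))
    # The value read back is only trustworthy if no other run shares workdir.
    return int(scratch.read_text())


def run_isolated(building_id: int) -> int:
    """Run one simulation in its own temporary directory, removed afterwards."""
    with tempfile.TemporaryDirectory(prefix=f"sim_{building_id}_") as tmp:
        return run_simulation(building_id, Path(tmp))


def run_all(building_ids: list[int], workers: int = 4) -> dict[int, int]:
    """Run all simulations concurrently and map each building ID to its result."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(run_isolated, building_ids)  # preserves input order
        return dict(zip(building_ids, results))


results = run_all(list(range(20)))
print(len(results))
```

Because each run sees only its own directory, the fixed scratch file name no longer matters, and cleanup happens automatically when the run finishes.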

The AutoBEM simulation found that nearly all Chattanooga buildings saw energy savings when implementing the proposed efficiency measures. Just one conservation measure, such as improved HVAC efficiency, new insulation, or new lighting, has the potential to offset 500 to 3,000 pounds of carbon dioxide per building, the researchers concluded.

About the Author

Beth Stackpole is a contributing editor to Digital Engineering.
