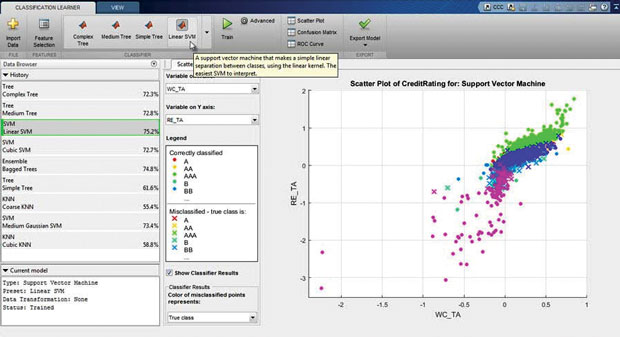

The Classification Learner app lets you interactively train models to classify data using supervised machine learning. Image courtesy of MathWorks Inc.

August 3, 2015

Internet of Things (IoT) data is the new currency driving strategic decision making, including helping engineers gain insight into what to build and how to build it more effectively. Yet to reap the full benefit, organizations need a strategy for managing the data deluge so it doesn’t swamp efforts to optimize product designs.

Big Data’s role is to deliver insights that can steer higher-quality product designs and be leveraged for predictive maintenance to service products more effectively once they are out in the field. The concept works by equipping products of all types with an assortment of sensors that collect and transmit critical data in real time over the Internet to a central repository. From there, the data (recorded temperature, stress points and speed, among other variables) is massaged, potentially mingled with external data, and ultimately mined for insights that could direct any number of product development decisions.

This scenario, while still nascent, has huge potential to transform the engineering and design process. Yet the problem, according to experts, is that the data coming off of smart connected products is so vast that it’s next to impossible for engineering teams to come up with any of these killer insights without help from an additional technology platform that will facilitate data collection and analysis.

“The data has gotten so big that the ability for the human mind to find points of value within the data set is extremely difficult,” says Rob Patterson, vice president, Corporate Strategy for ColdLight, a provider of an automated, advanced and predictive analytics platform recently acquired by PTC. “There needs to be some kind of handshake between man and machine in order to find value in IoT data.”

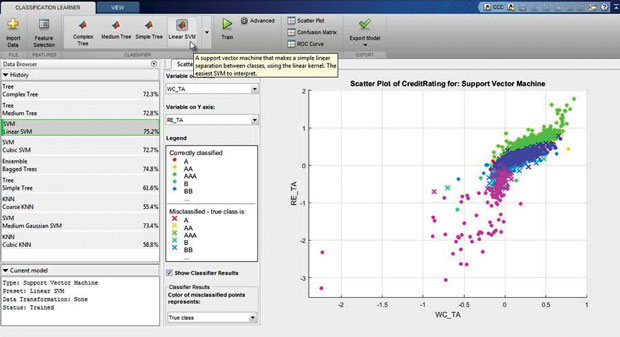

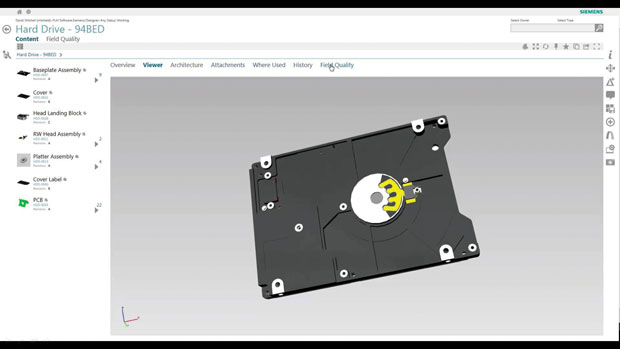

Omneo runs billions of variable combinations to uncover hidden data trends that can be used to mitigate product quality issues in future designs. Image courtesy of Siemens PLM Software.

In recognition of both the IoT’s potential and the Big Data problem, traditional design tool vendors are taking aggressive measures to augment their product portfolios with new capabilities that address emerging requirements. Some, like PTC and Siemens PLM Software, have made acquisitions in the Big Data analytics space, planning to meld what has historically been an unrelated enterprise information silo into the traditional engineering software workflow. Others, like MathWorks, are positioning their machine learning capabilities to help solve this challenge, and non-traditional players such as analytics companies are also jumping into the fray and tuning Big Data solutions to address the needs of engineers.

“I don’t know any great answer to the problem out there today,” says Mike Campbell, PTC’s executive vice president of CAD products. “All I know is that design engineers trying to develop the next great products are starved for real-world information. They recognize that they’ve been making assumptions about requirements and real-world conditions … and they’re very excited about gaining insights about how products are used or whether they are under or over designed.”

Closing the Information Gap

Bill Boswell at Siemens PLM Software agrees that the traditional process often leaves engineers making critical design decisions in a vacuum. While there is plenty of data—in PLM (product lifecycle management), ERP (enterprise resource planning) and voice of the customer systems—he says the problem of fragmented silos creates information chaos in companies, and traditional business intelligence tools don’t help span the gap. “Today, companies are making decisions on a pretty short set of data,” says Boswell, senior director of Cloud Services Marketing and Business Strategy. “Traditional business intelligence isn’t taking advantage of Big Data.”

To see how Big Data can change the equation, Boswell uses the example of an electronics manufacturer trying to identify a quality problem causing recalls on a hard disk drive. By combining engineering data, PLM data, field quality data, returns data, customer experience data and what Boswell dubs “call home” IoT data, engineers can ask a different set of questions they may never have considered before to get to answers they never would have found, he explains.

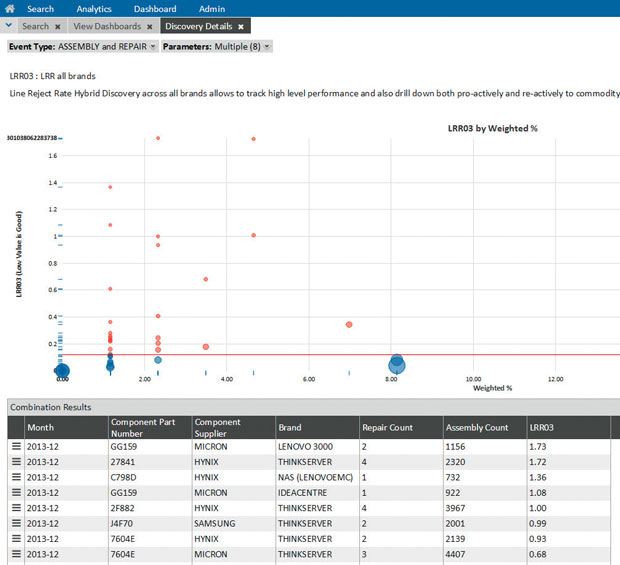

The monitoring tool in Omneo Performance Analytics can be used to track and observe trends for all Big Data sources in a single place for more comprehensive analysis. Image courtesy of Siemens PLM Software.

Cutting-edge organizations might be doing some of this kind of analysis on a very rudimentary scale, but it’s next to impossible to see patterns between data stored in siloed systems without some sort of automated analysis tool, Boswell says. To that end, Siemens recently released Omneo Performance Analytics, part of the Omneo quality management platform that came through its acquisition of Camstar, best known for its manufacturing execution system (MES). Omneo Performance Analytics, available as a Software-as-a-Service (SaaS) offering, monitors data across the entire supply chain and customer experience, analyzing billions of data combinations to uncover hidden intelligence that can proactively pinpoint the source of product issues well after a product has been released to the field.

Having this 360° view of the supply chain, coupled with the ability to churn through billions of data combinations in seconds, was a critical asset for Dell, an Omneo user, to proactively identify and service a problem for one of its major customers, according to Rami Lokas, senior director, R&D for Omneo. The customer was experiencing ongoing issues related to laptop memory. By using the Omneo platform to connect the dots between the various data silos, a Dell quality engineer was able to determine the source of the problem in minutes, not weeks, and resolve it.

“All of a sudden, the light switch went on and the engineer realized it wasn’t the processor or the motherboard, but the integration between the two,” Lokas says. “The number of permutations you’d have to go through to uncover that would be humanly infeasible.” Instead, Omneo’s ability to automate that discovery process was essential to finding the patterns in Big Data and jumpstarting the root cause analysis for the service engineer, he explains.
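The kind of combination search Lokas describes can be illustrated with a small sketch. This is not Omneo's algorithm, which the article does not detail. It is a brute-force toy that assumes a hypothetical list of unit records (the `cpu`, `board` and `mem` fields, their values and the `failed` flag are all invented for illustration) and ranks every pair of attribute values by observed failure rate.

```python
from collections import defaultdict
from itertools import combinations


def rank_pairs(records, attributes, min_count=2):
    """Rank pairs of attribute values by the failure rate seen in the records."""
    stats = defaultdict(lambda: [0, 0])  # pair -> [failures, total units]
    for rec in records:
        for a, b in combinations(attributes, 2):
            key = ((a, rec[a]), (b, rec[b]))
            stats[key][0] += rec["failed"]
            stats[key][1] += 1
    ranked = [
        (fails / total, total, key)
        for key, (fails, total) in stats.items()
        if total >= min_count  # ignore pairs seen too rarely to judge
    ]
    # Highest failure rate first; larger sample size breaks ties.
    ranked.sort(key=lambda item: (-item[0], -item[1]))
    return ranked


# Hypothetical field records: failures occur only when cpu C2 meets board B1.
records = [
    {"cpu": "C1", "board": "B1", "mem": "M1", "failed": 0},
    {"cpu": "C1", "board": "B2", "mem": "M1", "failed": 0},
    {"cpu": "C2", "board": "B1", "mem": "M1", "failed": 1},
    {"cpu": "C2", "board": "B1", "mem": "M2", "failed": 1},
    {"cpu": "C2", "board": "B2", "mem": "M1", "failed": 0},
    {"cpu": "C2", "board": "B2", "mem": "M2", "failed": 0},
    {"cpu": "C1", "board": "B1", "mem": "M2", "failed": 0},
    {"cpu": "C1", "board": "B2", "mem": "M2", "failed": 0},
]

ranked = rank_pairs(records, ["cpu", "board", "mem"])
top_rate, top_count, top_pair = ranked[0]
print(top_pair, top_rate, top_count)
```

With three attributes there are only three pairings to check. With hundreds of attributes drawn from several silos, and with triples or larger groups in play, the count grows combinatorially, which is why the search has to be automated.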

MathWorks customers facing similar challenges are leveraging a variety of tools to find patterns and gain insights from an ever-expanding lineup of data sources, including data acquisition hardware, data warehouses, live and historical industrial plant data, and of course, IoT. Using its family of Toolbox offerings (the Data Acquisition Toolbox, the Database Toolbox, the Image Acquisition Toolbox and the Neural Network Toolbox, to name a few), MATLAB users are attacking diverse and dynamic Big Data sets to gain insights that can be leveraged to improve existing designs or build new products and services, says Seth DeLand, MathWorks’ product manager for Data Analytics.

DeLand uses the example of a large off-highway equipment manufacturer that had 40 years of stored data, collected continuously from machines in the field. Using the MATLAB Toolbox offerings, the manufacturer could develop more accurate test models to understand how its equipment is actually performing in the field and whether it can hold up under a full range of operating conditions. Rather than use subjective tests that an engineer devised years ago based on assumptions, engineers can leverage the treasure trove of historical data to create models for hardware-in-the-loop (HIL) testing and test beds that are more reflective of real-world conditions. “They might find that a subassembly was never designed for the use case that it’s under in the field and although it’s still working, they can deliver feedback to the design team they never would have gotten because there were no field failures,” he says.
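As a rough illustration of replacing an assumed test limit with one derived from field history, the sketch below computes a load envelope from recorded values using nearest-rank percentiles. It is written in Python rather than MATLAB, and the load values, the 5th/95th percentile choice and the `legacy_test_max` figure are all hypothetical; the manufacturer's actual method is not described in the article.

```python
def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100 avoids floating-point rounding surprises.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def load_envelope(field_loads, low_pct=5, high_pct=95):
    """Return the (low, high) load range that covers most field operation."""
    return percentile(field_loads, low_pct), percentile(field_loads, high_pct)


# Hypothetical field history: 20 recorded peak loads in arbitrary units.
field_loads = list(range(1, 21))
legacy_test_max = 15  # assumed limit from an older, hand-devised test

low, high = load_envelope(field_loads)
# True when real-world use exceeds what the legacy test exercised.
underdesigned_test = high > legacy_test_max
print(low, high, underdesigned_test)
```

In this toy data set the field envelope reaches beyond the legacy limit, the kind of gap that can be fed back to the design team even when no field failures have been reported.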

MathWorks’ machine learning capabilities also have potential for helping engineers get a handle on Big Data. Engineers need to be able to explore and visualize data, eliminate noise and outliers, and develop predictive models, but the problem is they are engineers—not statisticians, says Paul Pilotte, MathWorks’ technical marketing manager for Data Analytics. “When you have a lot of data, you need a more efficient way of developing models and getting data to surface to create accurate predictive models—machine learning is a powerful approach for that,” Pilotte says. “It gives you the ability to build highly accurate predictive models that a human person couldn’t do.”
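To make the idea of a model fitted from data concrete, here is a minimal sketch of a nearest-centroid classifier, one of the simplest supervised methods. It is written in Python for illustration and is not the MathWorks implementation. The sensor readings (temperature and vibration), the labels and the function names are hypothetical.

```python
import math
from collections import defaultdict


def fit_centroids(samples, labels):
    """Learn one mean feature vector (centroid) per class from labeled data."""
    sums = {}
    counts = defaultdict(int)
    for features, label in zip(samples, labels):
        if label not in sums:
            sums[label] = [0.0] * len(features)
        for i, value in enumerate(features):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }


def predict(centroids, features):
    """Assign the class whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# Hypothetical training data: (temperature, vibration) readings per unit.
samples = [(60, 0.2), (62, 0.3), (58, 0.25), (85, 0.9), (88, 1.1), (90, 1.0)]
labels = ["healthy", "healthy", "healthy", "faulty", "faulty", "faulty"]

model = fit_centroids(samples, labels)
print(predict(model, (61, 0.3)))
print(predict(model, (86, 0.95)))
```

No one wrote a rule such as "faulty above 80 degrees"; the decision boundary comes from the labeled examples. Because the features are unscaled here, temperature dominates the distance, so a practical model would normalize the inputs first.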

Instead of requiring the user to define a formula or equation, machine learning algorithms automatically fit widely applicable models to data. Image courtesy of MathWorks Inc.

Helping human engineers make sense of Big Data is where ColdLight fits into PTC’s product portfolio. The software, which has a machine-learning component among other data analysis capabilities, parses incoming data and finds patterns within data sets to support predictive analytics, ColdLight’s Patterson says. “The amount of data available is very large, but it’s not all relevant for actual use or the goal an engineer is trying to achieve,” Patterson says. Manually detecting patterns in such a large data set is next to impossible for highly trained statisticians, let alone engineers. ColdLight automates that process, using machine learning or neural networks to detect patterns and build predictions so that engineers receive only the data they need.

That’s when the true value of IoT comes into play, says Patterson. “For the first time from a design engineer standpoint, we have enough data out there … to provide an unbiased view of the world,” he says. “The ability to process that data and build predictions with no predisposition to the business will open possibilities for people to do things in a different manner than has been done in the past.”

About the Author

Beth Stackpole is a contributing editor to Digital Engineering.
