New Memory Solutions Could Boost Engineering Computing Speed

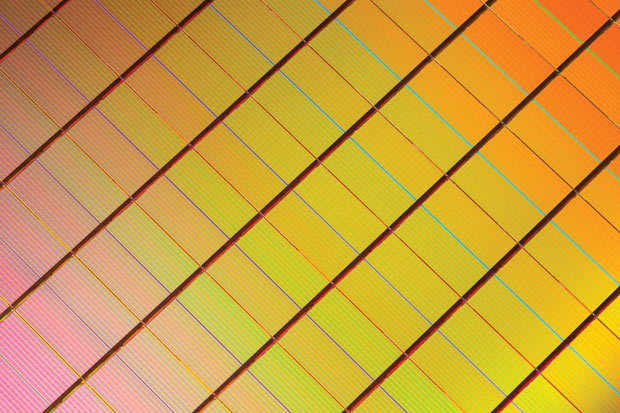

The 3D XPoint is 10 times denser than DRAM offerings. Image courtesy of Micron.

June 1, 2016

As models and data sets continue to grow, so does the demand for larger memory capacity and faster access. Engineers need more space and faster performance to keep up.

“For engineers, scientists and people creating new technology, it’s important to make those people as productive as possible,” says Scott Hamilton, Precision specialist at Dell. “When we look at this from a workstation perspective, memory and storage are a big part of that equation.”

Now, new memory architectures (referred to as storage-class memory, or SCM) are emerging that could fundamentally change computing speeds. Last year, Intel and Micron unveiled 3D XPoint, memory technology that the companies claim will provide 1,000 times the performance of current solid-state drives (SSDs).

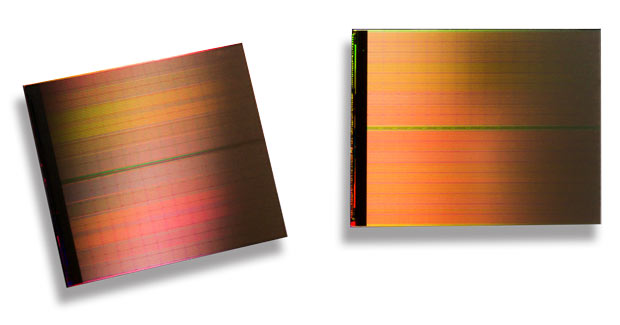

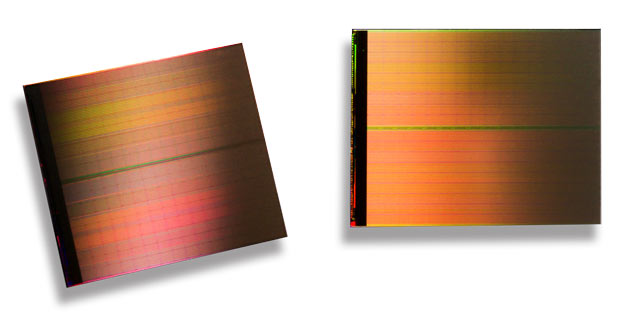

The 3D XPoint is 10 times denser than DRAM offerings, addressing challenges with memory limitations. Image courtesy of Micron.

Also last year, HP and SanDisk (now a Western Digital brand) announced their own SCM collaboration, which will combine HP’s memristor efforts and Western Digital’s non-volatile ReRAM memory technology. The companies claim that their version of SCM will also be 1,000 times faster than flash storage with higher endurance, lower costs and lower power requirements than DRAM. The solution is intended to allow systems to employ tens of terabytes of SCM per server node for in-memory databases, data analytics and high-performance computing.

It could be another year or more before these solutions turn up in the data center, and longer still before they reach workstations. In the meantime, computer OEMs (original equipment manufacturers) are addressing the need for faster access and more storage in a variety of ways. Dell’s Precision tower workstations, for example, now offer Broadwell EP processors, up to a terabyte of RAM, and Ultra-Speed PCIe drives that the company says are up to four times faster than SATA SSD storage. Later this year, Dell will offer a new SuperSpeed feature on its mobile workstations. The company worked with Intel to support 2667MHz memory on its mobile units, a significant increase in clock speed.

Memory, Storage or More?

What the Intel/Micron and HP/SanDisk projects represent, though, is a fundamental change in the way memory operates. Storage-class memory is neither quite memory nor quite storage, although it will reside in the memory slots on the motherboard. This type of memory is faster and more resilient than flash, and is also persistent.

Traditionally, applications store data temporarily in volatile DRAM memory. Persistence is achieved by writing the data and metadata to the disk. With SCM solutions, content remains in memory.
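The difference can be loosely illustrated in software. The sketch below is only an analogy: it assumes an ordinary temporary file can stand in for persistent memory, and the file name and the `bump_counter` helper are hypothetical. The program updates a value in place through a memory mapping rather than issuing explicit file writes, and a later mapping still sees the update.

```python
import mmap
import os
import struct
import tempfile

# Assumption: a regular file stands in for a region of persistent memory.
path = os.path.join(tempfile.mkdtemp(), "counter.bin")
with open(path, "wb") as f:
    f.write(b"\x00" * mmap.PAGESIZE)  # reserve one zero-filled page


def bump_counter(path):
    """Increment a 64-bit counter in place through a memory mapping."""
    with open(path, "r+b") as f, mmap.mmap(f.fileno(), 0) as region:
        (value,) = struct.unpack_from("<Q", region, 0)
        struct.pack_into("<Q", region, 0, value + 1)
        region.flush()  # ask the OS to push the change to the backing file
        return value + 1


first = bump_counter(path)   # the mapping is closed when the call returns
second = bump_counter(path)  # a fresh mapping sees the earlier update
```

On real storage-class memory the mapped region would be the memory device itself, so no separate save step to disk would be needed.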

New SSDs have provided significant benefits to engineers when loading models and storing them, and can have a huge impact on productivity. That has helped relieve some of the latency that bogged down operations in the past. “The storage device was the bottleneck, because it can’t load the data fast enough,” Hamilton says. “With the new SSDs, that bottleneck is not as significant as it used to be.”

New NVMe (Non-Volatile Memory Express)-based systems use flash memory in the form of an SSD to provide lower latency. “These go on the PCIe bus rather than the data bus, so the bandwidth is greater. In internal tests, we’ve seen three to four times performance improvements with NVMe drives,” Hamilton says. “For example, when you are doing simulations, those data sets are often more than you can fit in RAM, so you are relegated to this situation where the CPU is waiting for data off of the storage device. Since that’s always slower than RAM, you pay a latency penalty. But if you can increase the performance of the storage device, you increase your overall performance.”
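A rough back-of-the-envelope model shows how storage speed feeds into overall run time. Every number below is an illustrative assumption rather than a measurement from Dell or anyone else, and the model simply adds compute time to the time spent waiting on storage.

```python
def run_time_s(compute_s, data_gb, storage_gb_per_s):
    """Total run time when the CPU waits for all data to stream from storage."""
    return compute_s + data_gb / storage_gb_per_s


# Illustrative assumptions only, not vendor figures.
COMPUTE_S = 60.0   # time spent on pure computation
DATA_GB = 200.0    # data that does not fit in RAM and is read from storage
SATA_GB_S = 0.5    # assumed SATA SSD throughput
NVME_GB_S = 2.0    # assumed NVMe throughput, four times the SATA figure

sata_total = run_time_s(COMPUTE_S, DATA_GB, SATA_GB_S)  # 60 + 400 seconds
nvme_total = run_time_s(COMPUTE_S, DATA_GB, NVME_GB_S)  # 60 + 100 seconds
speedup = sata_total / nvme_total
```

Under these assumptions a drive that is four times faster shortens the whole job by a factor of a little under three, because the compute portion is unchanged.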

This storage bottleneck is what Intel’s 3D XPoint-based Optane SSDs are meant to address. Optane combines 3D XPoint with Intel’s system memory controller, interface hardware and software IP. The Optane SSDs use the NVMe interface, will be mounted on PCIe cards, and are expected to further reduce latency.

Intel and Micron describe the 3D XPoint (pronounced crosspoint) architecture as a “transistor-less cross point architecture” that creates a “three-dimensional checkerboard where memory cells sit at the intersection of word lines and bit lines, allowing the cells to be addressed individually. As a result, data can be written and read in small sizes, leading to fast and efficient read/write processes.”
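The checkerboard idea can be pictured with a toy software model. This is a sketch of the addressing scheme only, not of the hardware: the `CrossPointArray` class, its dimensions, and the 0/1 encoding of the cell state are all assumptions made for illustration.

```python
class CrossPointArray:
    """Toy model: one cell at every word-line / bit-line crossing, per layer."""

    def __init__(self, layers, word_lines, bit_lines):
        self.shape = (layers, word_lines, bit_lines)
        # Assumed encoding: 0 = high resistance, 1 = low resistance.
        self.cells = [
            [[0] * bit_lines for _ in range(word_lines)]
            for _ in range(layers)
        ]

    def write(self, layer, word, bit, state):
        """Set a single cell selected by its layer, word line and bit line."""
        self.cells[layer][word][bit] = state

    def read(self, layer, word, bit):
        """Return the state of a single cell without touching its neighbors."""
        return self.cells[layer][word][bit]


array = CrossPointArray(layers=2, word_lines=4, bit_lines=4)
array.write(layer=1, word=2, bit=3, state=1)
```

Each read or write selects exactly one cell, which is the property the companies credit for small, efficient accesses.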

DRAM and NAND Flash use electrons to store cell state, and the cell requires sufficient volume to hold those electrons. DRAM loses the electrons when power is removed (which makes it volatile), while NAND Flash retains the electrons (making it non-volatile).

“3D XPoint memory does not use electrons to store cell state, but instead relies on the transition of a bulk material,” says Michael Abraham, 3D XPoint business line manager at Micron. “The material’s resistance can be either high or low. Because electrons are not required, 3D XPoint memory can scale to smaller lithographies than NAND Flash and DRAM.”

NAND Flash has higher capacity per physical area than 3D XPoint, but the Intel/Micron solution is faster to read and write, and can do so in smaller units; it is also 10 times denser than DRAM.

The resulting Optane SSD is really just a faster version of existing solutions that pair an SSD with a spinning drive to boost the spinning drive’s performance, says Mano Gialusis, product manager for Dell’s mobile workstation line.

“What Optane does is provide faster throughput or performance,” Gialusis says. “But there is always going to be a bottleneck if you are working with extremely large files. Gigabytes of files would have to be transferred to the Optane device, and that is where it (being non-volatile) could store those or pin those to the device, which would allow you to have faster access.”

According to Paolo Faraboschi, fellow and vice president, Systems Research, at Hewlett Packard Labs, the HP/SanDisk version of SCM has a capacity and cost profile similar to storage, with performance characteristics (bandwidth, latency, access methods) similar to memory. Faraboschi says it can be thought of as “byte-addressable non-volatile memory” in that users can access it directly (like memory) without going through a block interface.

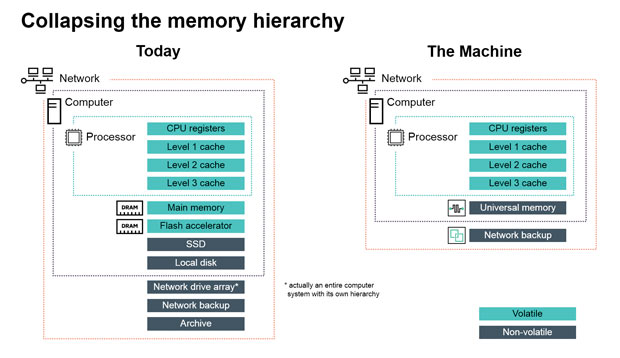

“This ‘fast-and-cheap’ combination allows us to discard today’s memory hierarchy, which translates data in small chunks between slow-but-cheap flash and HDDs and fast-but-expensive DRAM,” he says. “This busywork of today’s memory hierarchy represents something like 90% of everything a computer does today.”

Accelerating Applications

So how does this help engineers and other end users? Initially, the payoff will be in the data center for large simulation and data analysis applications.

In the case of 3D XPoint, the technology can provide better SSD performance. It could also be placed on a memory module and attached to a memory controller on the CPU. This is potentially more disruptive and allows faster non-volatile accesses.

“Any engineering application that requires more memory per CPU will benefit,” Abraham says. “But Micron sees the most immediate benefits for enterprise applications that handle Big Data sets or real-time analytics. Those applications are in lots of different fields, but you see them often in finance and high frequency trading, security/fraud detection and even on-demand content platforms.”

“CAD-type applications are both compute- and memory-intensive,” adds HP’s Faraboschi. “Most people can relate to having to turn off parts of an assembly or disable shading in order to get a decent response time. With enough memory, however, instead of constantly re-computing ray tracing, cross-sections and similar tasks, the application can just look up previous scenarios. The end result is a much more responsive user experience and the ability to work on much larger datasets that are impractical today.”
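The look-up-instead-of-recompute pattern Faraboschi describes is commonly called memoization. The sketch below is a minimal illustration using Python’s standard `functools.lru_cache`; the `cross_section_area` function and its arguments are hypothetical stand-ins for an expensive geometry calculation, not part of any CAD package.

```python
from functools import lru_cache


@lru_cache(maxsize=None)  # unbounded cache: trades memory for repeat speed
def cross_section_area(model_id, plane_mm):
    """Hypothetical stand-in for an expensive geometry computation."""
    return sum((i * plane_mm) % 7 for i in range(100_000))


a = cross_section_area("bracket-rev3", 12)  # computed on the first request
b = cross_section_area("bracket-rev3", 12)  # served from the cache
stats = cross_section_area.cache_info()
```

The more memory is available, the more previous results can be kept around, which is why a larger memory pool can translate into a more responsive application.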

For software developers, these new solutions can provide a faster way to access non-volatile memory. According to Abraham, many of the “hooks” for 3D XPoint and NVDIMM are being built into a set of libraries defined in PMEM.

“These libraries will make faster block-based access and non-volatile variable access easier to use while bypassing much of the overhead of file systems and drivers,” Abraham says. “Much of the plumbing work is happening now to allow middleware applications—like databases, data analytics, etc.—to optimally access 3D XPoint technology, and then end user applications will be able to take advantage of it, too.”

HP is looking to address fabric limitations with The Machine, its new research project that changes the current memory hierarchy. Image courtesy of HP.

That will eventually require a re-architecting of applications to derive the most benefit from the lower latency. As Faraboschi points out, applications are written with existing memory limitations in mind. In order to access a storage device today, programmers have to use storage abstractions that move data in large chunks between the I/O subsystem and DRAM memory.

“This causes serialization and deserialization overheads, and makes in-memory programming very difficult, often impossible,” Faraboschi says. “With SCM, you can completely rethink the compute/space balance that the application requires. We understand that in order to achieve maximum performance gains, some recoding is inevitable. However, to ease the transition, companies like HPE (Hewlett Packard Enterprise) are working on new programming tools and models that use familiar languages and constructs as much as possible.”
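The overhead Faraboschi mentions can be sketched by comparing two ways of changing a single value. This is an illustration under stated assumptions: Python’s `pickle` stands in for a storage-style serialization step, a `bytearray` with a fixed record layout stands in for byte-addressable memory, and the record format is invented for the example.

```python
import pickle
import struct

RECORD = struct.Struct("<d")  # assumed layout: one 8-byte float per record
values = [float(i) for i in range(10_000)]

# Storage-style path: the whole object is deserialized, changed, then
# serialized again, even though only one value is different.
blob = pickle.dumps(values)
loaded = pickle.loads(blob)
loaded[42] = -1.0
blob = pickle.dumps(loaded)
bytes_rewritten_blob = len(blob)

# Memory-style path: records sit at fixed offsets in a byte-addressable
# buffer, so one record can be overwritten in place.
buffer = bytearray(RECORD.size * len(values))
for i, v in enumerate(values):
    RECORD.pack_into(buffer, i * RECORD.size, v)
RECORD.pack_into(buffer, 42 * RECORD.size, -1.0)
bytes_rewritten_inplace = RECORD.size
```

In the first path every byte of the data set is rewritten to change one number; in the second only eight bytes are touched. Real applications would need the kind of recoding and tooling described above to move from one model to the other.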

The Impact on the Data Center

Data centers will be the first beneficiaries of storage-class memory. In fact, enterprise data centers are the initial target market for 3D XPoint, because the technology provides new ways to handle data-intensive workload streams.

“The initial enablement work requires careful collaboration between hardware OEMs, software companies and the media experts in our development groups, however—which is why you haven’t seen end products yet,” Abraham says. “You’ll begin to see new architectures developed around 3D XPoint technology emerge in data center environments in 2017 and 2018.”

According to Faraboschi at HP, data center performance is limited both by the memory hierarchy and by the low bandwidth and high latency of the fabric connecting servers.

“SCM, on its own, can’t address the fabric limitations,” he says. “For that, you need a new fabric architecture such as the one we plan to enable in The Machine, HPE’s research project to reinvent computer architecture from the ground up.”

The Machine leverages SCM and other technologies to create terabyte- and petabyte-sized pools of universal, non-volatile memory. One key piece of that solution is the memristor, a resistor that stores data after losing power, but HP’s commercialization of that technology has been slower than expected.

Coming to a Desktop Near You?

Neither of the first two types of SCM is expected to be available for at least a year. Adoption at the workstation level will be even further off.

These new memory solutions create fast storage that could benefit professional workstation applications with high storage demands. Abraham, however, thinks workstation users will get better results in the short term with flash-based SSDs because of the lower price point. That will change as the technologies mature and prices come down.

Dell plans to adopt the technology as soon as it’s available and proven, and the company expects that CAD and simulation users will clamor for it. In the meantime, workstation manufacturers continue to load up on the number of cores available on their machines via Intel’s Xeon products.

“CAD applications are memory hungry, and products are just getting more complicated,” Dell’s Gialusis says. “The amount of analysis work is increasing, and more products are integrating both mechanical and electrical components. Products are more complex. We’re all trying to make engineers as productive as possible, and these memory developments are another piece of the puzzle that help us enable that.”

About the Author

Brian Albright is the editorial director of Digital Engineering. Contact him at [email protected].