Product design relies on digital tools more than ever. To meet tighter project deadlines, firms use simulation to rapidly iterate through potential designs and test the limits of their offerings. But as products become more complex and models grow larger, simulation can become a bottleneck without the right hardware supporting it.

“Simulation is about how rapidly you can explore the design space, and compute power is a part of that,” says Doug Neill, vice president, Product Development at MSC Software, part of Hexagon.

If an engineer’s toolset isn’t balanced to the workload, they risk missing deadlines or, worse, creating a product that is tough to manufacture or fails in the hands of end users.

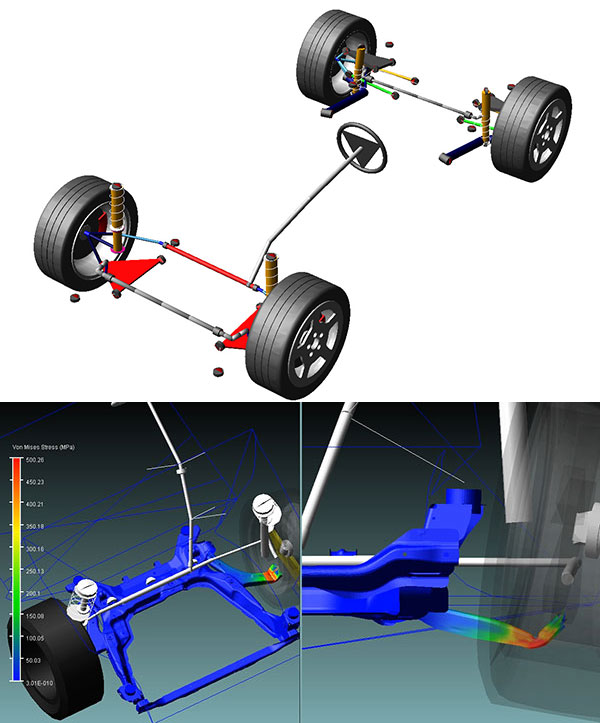

Simulation has traditionally been used to eliminate concepts that aren’t viable. Engineers settle on some things they aren’t quite sure about and perform testing to validate the simulation, explains Neill. Over the years, simulation has changed to accommodate models that combine much more information. Coupled physics models aren’t new, but they are increasingly used to integrate all the necessary systems into one model.

“The biggest evolution by far is complexity and size from the simulation models,” Neill says. “The idea was to keep the model as small as possible and have many instances of models.” Now, simulation models are much more realistic and have many more degrees of freedom. Instead of being a mathematical representation of an object, CAD models are now recreations, sometimes literal digital twins, of actual products and systems.

One obvious hardware issue created by modern simulation workflows relates to data storage. Historically, engineers would take a product design’s metadata and spawn math models; any design changes would then require a completely new simulation run. Now engineers are able to isolate individual parts and make changes faster and on a more granular basis. Engineers often want to reuse some parts as they make design iterations to others. With so many available versions, engineers must be able to effectively store and sort through the possibilities.

Storage is relatively inexpensive and simple to add onto an existing computing platform. The more pressing hardware concern for engineers running larger, more complex models on a workstation is the time it takes to perform high-fidelity simulations. Ultimately, simulation run times are affected by processing speeds. Should you invest in multiple CPUs, higher core counts or faster cores?

“When weighing whether to maximize CPU core count, CPU frequency or some blend of them, one should always seek the latest CPU micro-architecture and generation,” according to the white paper "Selecting the Right Workstation for Simulation." “Newer CPU generations typically come with either a process shrink—smaller transistors—or a higher-performance architecture. For example, newer architectures often provide more performance at the same frequency, and this extends beyond just CPU performance. Because many applications spend time waiting on a single core to feed the GPU instructions and data, as the CPU’s integer performance increases, so does the graphics performance. What this means is that the same frequency CPU on newer-generation architectures can provide higher frames per second with the same graphics card.”
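One rough way to reason about the core-count versus frequency trade-off is Amdahl's law, which splits a job into a serial share and a share that scales across cores. The white paper does not cite this model; the Python sketch below is an illustration under that assumption, and the function name, the `clock_ratio` parameter and the example figures are all hypothetical rather than measured. It also assumes run time scales inversely with clock speed, which ignores memory bandwidth, I/O and architectural differences between CPU generations.

```python
def estimated_runtime(baseline_seconds, parallel_fraction, cores, clock_ratio=1.0):
    """Estimate run time using Amdahl's law (an assumed, simplified model).

    baseline_seconds:  run time on one core at the reference clock speed
    parallel_fraction: share of the work that scales across cores (0.0 to 1.0)
    cores:             number of cores the solver can use
    clock_ratio:       clock speed relative to the reference (1.3 = 30% faster)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1 or clock_ratio <= 0:
        raise ValueError("cores must be >= 1 and clock_ratio must be > 0")

    serial_fraction = 1.0 - parallel_fraction
    # The serial share runs on one core; the parallel share is divided among cores.
    scaled_work = serial_fraction + parallel_fraction / cores
    # Assumption: a faster clock shortens serial and parallel work equally.
    return baseline_seconds * scaled_work / clock_ratio


if __name__ == "__main__":
    baseline = 3600.0  # hypothetical one-hour, single-core job
    for fraction in (0.50, 0.95):
        many_cores = estimated_runtime(baseline, fraction, cores=16)
        fast_cores = estimated_runtime(baseline, fraction, cores=8, clock_ratio=1.3)
        print(f"parallel share {fraction:.0%}: "
              f"16 cores = {many_cores:.0f} s, 8 faster cores = {fast_cores:.0f} s")
```

Under these assumed numbers, the 16-core configuration comes out ahead when 95% of the work is parallel, while eight cores with a 30% faster clock come out ahead when only half of it is. A real decision would rest on how a given solver actually scales, not on this model.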

Of course, as simulation software evolves to take advantage of the higher core counts available in GPUs, there are more benefits to using GPUs in workstations. “It’s one of those last bastions of software for the GPU to come in and accelerate the software,” says Chris Ramirez, strategic alliances manager at Dell.

For example, engineers using ANSYS Discovery Live or Creo Simulation Live can experience real-time 3D simulation. Designed for rapid iteration of concepts, the simulation software makes use of NVIDIA GPUs to enable interactive design exploration and rapid product innovation. “These innovative, new real-time engineering simulation tools actually only run on NVIDIA GPUs,” points out Andrew Rink, head of marketing strategy for manufacturing industries at NVIDIA. “A minimum of 4GB of GPU memory is required for interactive simulation, although 8GB is recommended for higher performance, such as what is offered in the new Quadro RTX 4000.” Hundreds of applications are being accelerated by NVIDIA GPUs, including design and simulation software from Altair, ANSYS, Autodesk, Dassault Systemes, MSC Software, Siemens PLM Software and more.
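The memory guidance Rink gives can be written down as a simple check. The Python sketch below is a minimal illustration only: the 4GB and 8GB thresholds come from his quote, while the function name and tier labels are hypothetical, and the code does not query any hardware or vendor API.

```python
MIN_GPU_MEMORY_GB = 4          # minimum for interactive simulation, per the quote
RECOMMENDED_GPU_MEMORY_GB = 8  # recommended for higher performance, per the quote


def gpu_memory_tier(memory_gb):
    """Classify a GPU's memory against the quoted guidance (labels are hypothetical)."""
    if memory_gb < 0:
        raise ValueError("memory_gb cannot be negative")
    if memory_gb < MIN_GPU_MEMORY_GB:
        return "below minimum"
    if memory_gb < RECOMMENDED_GPU_MEMORY_GB:
        return "meets minimum"
    return "meets recommendation"


if __name__ == "__main__":
    # Example memory sizes chosen for illustration, not tied to specific cards.
    for size_gb in (2, 4, 8):
        print(f"{size_gb} GB: {gpu_memory_tier(size_gb)}")
```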

In the end, engineers should consider how their hardware supports their individual use cases. Tools like the Dell Performance Optimizer allow administrators to see how much storage, memory and processing power engineers are using to run simulations, Ramirez says. That data can help determine not only what level of hardware to invest in, but also what type of resources engineers need.

As a general rule of thumb, companies should assess their engineering workstation hardware whenever new software investments are made, or at least once every three years. According to the paper "Making the Case for a Workstation-Centered Workflow," a new engineering workstation investment will pay for itself in about six weeks.

“The foundation of simulation is being done on a workstation,” Ramirez explains. “The one-on-one nature of workstations makes it easy for engineers to securely access data and simulation models. Data is the lifeblood of engineering. Having the ability to make sure your data is on-premise is important.”

DE's editors contribute news and new product announcements to Digital Engineering.
