NVIDIA GTC 2021 begins this week with keynotes, guest speakers, and recorded talks. Image courtesy of NVIDIA.

GTC 2021: NVIDIA Launches Omniverse, Develops CPU for Data Centers

GPU Maker Continues to Focus on AI-Accelerated Markets


April 13, 2021

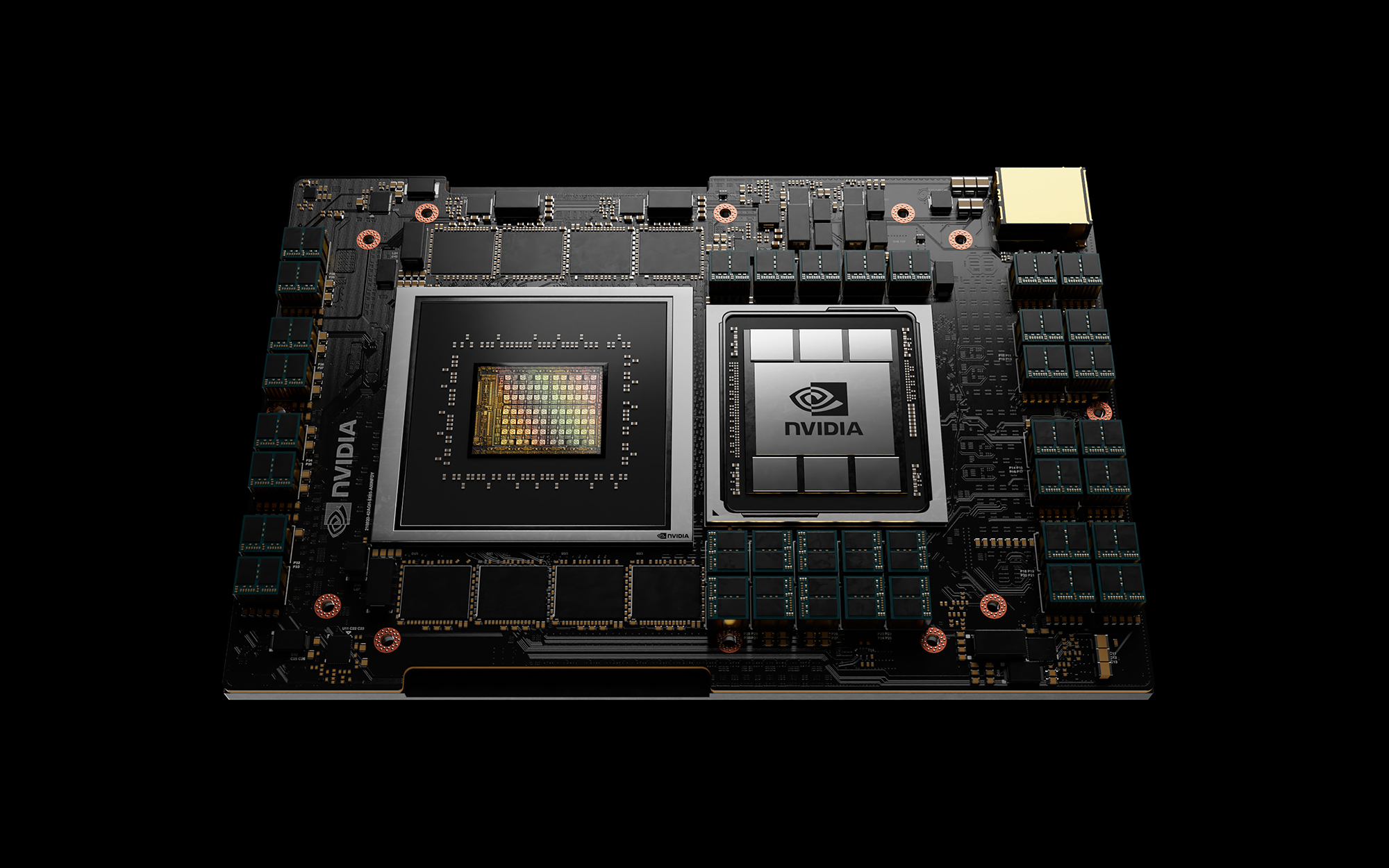

NVIDIA's next big product for the data center is not a GPU but a CPU, codenamed Grace. The news was announced by NVIDIA CEO Jensen Huang at the company's annual GPU Technology Conference (GTC), held virtually this week.

He described Grace as “a new kind of computer, the basic building block of a modern data center, the world’s first CPU designed for Terabyte-scale accelerated computing.”

NVIDIA chose the Arm architecture as the foundation of Grace because “it's super energy-efficient, has an open licensing model, and is used broadly in the mobile and embedded segments,” Jensen said.

With the birth of Grace, Jensen pointed out, NVIDIA becomes a three-chip company, producing CPUs, GPUs, and DPUs (data processing units).

“While the vast majority of data centers are expected to be served by existing CPUs, Grace—named for Grace Hopper, the U.S. computer-programming pioneer—will serve a niche segment of computing,” the company writes.

NVIDIA has given no sign that it plans to offer Grace CPUs to the workstation market, which is dominated by Intel and AMD. Still, Grace is bound to cut into the part of the data center market that is growing with AI workloads, and that should make rival processor makers nervous.

Omniverse Emerges from Beta

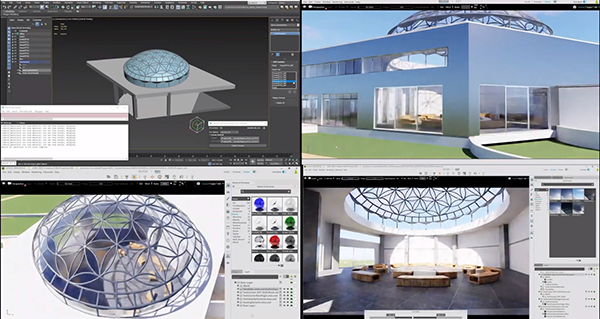

Since its preview at GTC 2019, Omniverse has been available in beta to select customers. Two years later, the cloud-hosted collaborative design platform is emerging from beta, ready to be offered through NVIDIA partners.

Jensen described Omniverse as “a composition, animation, and rendering engine.” With hooks into VR hardware, Omniverse lets users in different locations meet in a virtual space to collaborate on designs or solve engineering problems using ray-traced 3D objects.

“One of the most important things about Omniverse—it obeys the law of physics. Particles, springs, and cables behave as they would in real life,” said Jensen.

In Omniverse, rigid- and soft-body interactions are simulated with NVIDIA PhysX, the physics engine used in video and computer games to create realistic motion. Omniverse builds its environments on USD (Universal Scene Description), the open scene description format developed by Pixar, and renders graphics with NVIDIA RTX.


Digital Twins in the Omniverse

One of the featured customers at GTC was the telecommunications firm Ericsson, an early Omniverse user that adopted the platform to simulate 5G network performance.

“NVIDIA Omniverse Create integrates a state-of-the-art ray tracer with the interactive tools to manipulate and explore complex scenes. This means we can experiment with placement of Ericsson products and explore their impact in real time,” writes Ericsson on its partnership with NVIDIA. “We have a vision where 5G can be explored in our virtual worlds together with our design teams, business leaders, industry partners, and customers. After all, we are in the age of digital twins!”

BMW uses Omniverse to build digital twins of its automotive plants. Dr. Milan Nedeljkovic, a member of the BMW board of management, joined Jensen in the GTC keynote.

“For the first time, we are able to have the entire factory in simulation. Global teams can collaborate using different software packages, using Revit, CATIA, and point clouds,” he noted. “BMW regularly reconfigures its factory to accommodate new vehicle launches ... An expert can 'wormhole' into the Omniverse using a motion capture suit, record task movements, and another expert can adjust the line design in real time. Two planning experts can work together to optimize the line in Omniverse. We want to be able to do it at scale in simulation.”

Infrastructure software maker Bentley Systems' iTwin platform offers clues to how NVIDIA partners might repackage Omniverse to host digital twins for specific disciplines.

Morpheus: AI-Trained to Watch Over the Network

To fans of the Matrix film trilogy, the name Morpheus immediately brings to mind Laurence Fishburne, perpetually draped in a long coat. It's fitting, then, that NVIDIA has named its cybersecurity product Morpheus.

“The CPU workload of monitoring data-center traffic is simply too great,” said Jensen. “Today we are announcing Morpheus, a data center security platform for all packet inspection. It's AI-trained to identify suspicious traffic activities, such as leaked credentials.”

Jensen's keynote ran for one hour and 45 minutes, most of it devoted to AI-accelerated data center workloads, autonomous driving, digital twins, and natural language processing. A good chunk also covered cloud gaming, filmmaking, and computer graphics, but proportionally, data center workloads took center stage, suggesting a significant shift in NVIDIA's core business.

As Jensen looked back on the company's growing footprint in scientific computing, he recalled an encounter.

“A scientist once told me that because of NVIDIA’s work, he could do his life’s work in his lifetime. I can’t think of a greater purpose,” said Jensen. “With just a GeForce, every student can have a supercomputer ... With GPUs in supercomputers, we give the scientists a time machine.”


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
