Normally, NVIDIA GTC (GPU Technology Conference) attendees pile into the hall of a conference center in Silicon Valley to hear NVIDIA CEO Jensen (Jen-Hsun) Huang's roadmap, predictions, and promises, but not this year.

Appearing in a recorded video going live today, Huang said, "Our first kitchen keynote -- I'm coming to you from our home in California. I hope all of you are sheltering safely at home."

It was the keynote he would have delivered in person at the live event originally scheduled for March 22, but the coronavirus and the resulting lockdowns prevented it from taking place onsite.

In the video keynote, Huang thanked the frontline workers in the fight against COVID-19 -- "the nurses, doctors, truck drivers, retail clerks, warehouse workers, all the people who are keeping the world going while we shelter at home."

In the use of HPC (high performance computing) to analyze the new virus, the GPU has become a powerful ally. "Oxford Nanopore was able to sequence the virus's genome in just seven hours using our technology," Huang said.

Even before the virus outbreak, GPU-accelerated HPC workloads had become more commonplace. "Data processing and the movement of data around data centers is more important than ever. The applications we're using now are so large they don't fit in any computer," Huang observed. "In fact, the server is no longer the computing unit. The data center is the computing unit."

Huang believes, in the next decade, data center-scale computing will become the norm. The vision led to NVIDIA's recent $7 billion acquisition of Mellanox, a leading interconnectivity solution provider to the data center sector.

Mellanox's hardware works with specialized processors such as the Mellanox BlueField 2, an I/O (input/output) processing unit. "BlueField 2 accelerates security and packet processing at line speed, in this case, up to 200 Gb per sec," said Huang in his video keynote.

Devices like BlueField 2, Huang speculates, will become one of the three main pillars of modern computing -- "the CPU, for general purpose computing; the GPU, for accelerated computing; and the DPU (data processing unit), which moves the data around the data center."

Increasingly, GPU-centric workloads are becoming part of AI and machine-learning applications. As a result, the GPU is gradually evolving from a graphics processor into a data-crunching engine. The latest NVIDIA architecture Huang unveiled this week reflects this.

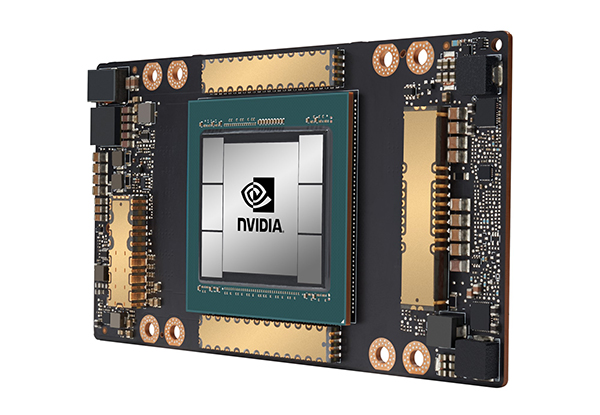

"The NVIDIA A100 is based on an architecture we call Ampere," explained Huang. "First, we are using TSMC's 7 nanometer process that's been optimized for NVIDIA, using a technology called CoWoS, chips on wafer on substrate. It puts the memory on the substrate, allowing us to interoperate incredibly fast. It provides up to 1.5 Terabytes frame buffer bandwidth. This is the first processor in the history that comfortably provides over a Terabyte per second of bandwidth."

The Ampere architecture has a new tensor numerical format, called Tensor Float 32. "You input in FP32, it processes in Tensor Float 32, it accumulates in FP32 (FP for floating point). As a result, no code change is necessary when you train," said Huang. "We can now effectively accelerate [neural network] processing by a factor of two."
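For readers who want a feel for what "FP32 in, Tensor Float 32 inside, FP32 out" means numerically, here is a rough sketch in plain Python. It relies on the published TF32 layout (the same 8 exponent bits as FP32, but only 10 mantissa bits instead of 23). The function names `to_fp32`, `to_tf32`, and `tf32_dot` are hypothetical, the round-to-nearest-even step is an assumption about rounding behavior, and NaN and overflow cases are ignored. It is an emulation for illustration only, not NVIDIA's implementation.

```python
import struct


def to_fp32(x: float) -> float:
    """Round a Python float (a double) to the nearest FP32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def to_tf32(x: float) -> float:
    """Emulate TF32 precision: FP32 with the mantissa cut from 23 to 10 bits.

    Assumption: round to nearest, ties to even, on the 13 dropped bits.
    Special values (NaN, overflow) are not handled in this sketch.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    lsb = (bits >> 13) & 1                      # lowest mantissa bit that is kept
    bits = (bits + 0x0FFF + lsb) & 0xFFFFE000   # round, then clear the low 13 bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def tf32_dot(a, b) -> float:
    """Dot product with TF32-rounded inputs and an FP32 accumulator."""
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two TF32 values fits exactly in FP32;
        # the running sum is rounded back to FP32 after every step.
        acc = to_fp32(acc + to_tf32(x) * to_tf32(y))
    return acc


if __name__ == "__main__":
    x = 1.0 + 2.0 ** -12      # representable in FP32, too fine for TF32
    print(to_fp32(x) == x)    # FP32 keeps the small increment
    print(to_tf32(x) == 1.0)  # TF32 rounds it away
    print(tf32_dot([1.0, 2.0], [3.0, 4.0]))
```

The caller only ever sees FP32 values going in and coming out, which is why, as Huang notes, existing training code does not have to change; the reduced precision applies only to the multiplication inputs.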

By NVIDIA's own benchmark tests, the Ampere-based A100 performs at nearly 20 times the performance of Volta GPUs. The A100 is "the world's first processor to achieve over 1 Petaops -- 1,250 Teraops per sec," Huang boasted.

The third generation of NVIDIA's DGX hardware, targeting AI researchers, will be made available as DGX A100, outfitted with Ampere GPUs. "The previous generation of DGX is mainly for training. With this one, you can use it for training, analytics, and inferencing," said Huang. "You can also split up this DGX and share it among 50 different users at one time."

With much of its GPU-powered hardware catering to autonomous vehicle developers, NVIDIA has established a firm foothold in the automotive sector. This week, the company announced that its robotic training system, Isaac, has been selected by BMW Group to implement autonomous factory logistics.

"BMW Group’s supply chain takes millions of parts flowing into a factory from more than 4,500 supplier sites, involving 230,000 unique part numbers, and in growing volumes as BMW Group’s car sales have doubled over the past 10 years to 2.5 million vehicles ... To optimize the enormous complexity of this material flow, autonomous AI-powered logistics robots now assist the current production process in order to assemble highly customized vehicles on the same production line," wrote NVIDIA in its announcement.

AI-driven Isaac can generate object variations and random orientations for image-based system training. Using Isaac, a carmaker can train factory logistics systems to recognize different objects and take appropriate actions with little human intervention -- an approach that will likely become the norm in the era of social distancing.

COVID-19's impact on the automotive sector is mixed, according to Danny Shapiro, Senior Director of Automotive, NVIDIA. "The ride-sharing sector is seeing a huge drop. We don't yet know what the effect will be," he said during a briefing call with reporters. "We might see a shift from certain types of vehicles -- like a shift from those transporting people to transporting goods, due to people being under lockdown. Automotive business is long-term, not like the transactional sales in gaming. We have amazing commitment from our customers, like Volvo, that say now more than ever, they believe self-driving vehicles are the future."

Kenneth Wong is Digital Engineering's resident blogger and senior editor. Share your thoughts or suggestions at digitaleng.news/facebook.
