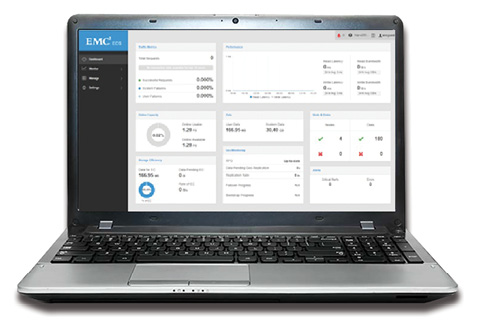

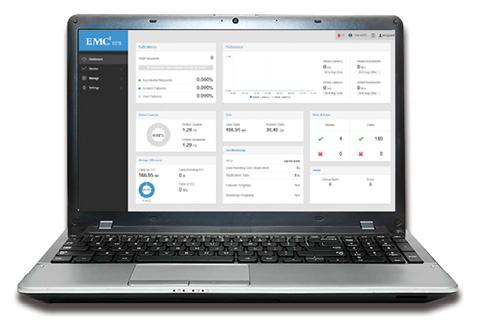

Dell EMC Elastic Cloud Storage has a new Heads Up Display with an integrated management dashboard across off- and on-premises storage. Image: Dell EMC

Latest News

November 1, 2017

Much of the trading volume on Wall Street now comes from “quant-run” hedge funds. Their traders and analysts deploy algorithms and high-performance computing (HPC) to trade securities efficiently. A high volume of big data feeds into these models, which apply trading rules and execute trades with lightning speed. The trades are calculated more carefully than any human could manage.

HPC is emerging as a dominant force to be reckoned with in financial trading. Sophisticated software, hardware and a small army of quantitative professionals are behind the movement, which may change the operations of capital markets forever.

Speed for Profit

“While it’s true that high-frequency trading is about volume, it’s much more about latency,” says Ed Turkel, HPC strategist at Dell EMC. “The key to high-frequency trading is how quickly a system can respond to a change in the stock feed with an appropriate trading response. With multiple high-frequency trading companies all seeing the same feed, the one who can respond to it the fastest does the transaction first, and makes the most profit.”

Latency is the time it takes for data to be stored and retrieved. Low latency means the data is as close to real time as possible, which can be the difference between a profitable and an unprofitable trade. HPC is a boon to low latency on the trading floor, where trade frequency and volume are high and real-time transactions are paramount.

Turkel says high-frequency systems are designed for minimal latency, using the highest-performance processors and lowest-latency networks. “The volume part then is a combination of how many low-latency transactions can a single server support, combined with how many servers are used,” he adds. “We call this a scale-out computing problem, as the volume scales with the number of servers.”
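Turkel's scale-out description comes down to simple arithmetic: total volume is roughly the volume one server can handle multiplied by the number of servers. The Python sketch below illustrates that relationship. It assumes ideal linear scaling, and the function names and figures are hypothetical, chosen only for illustration rather than taken from Dell EMC.

```python
def cluster_capacity(per_server_tps: int, servers: int) -> int:
    """Aggregate transactions per second, assuming ideal linear scale-out."""
    return per_server_tps * servers


def servers_needed(target_tps: int, per_server_tps: int) -> int:
    """Smallest server count whose combined capacity meets the target."""
    # Integer ceiling division: round up so the target is always covered.
    return (target_tps + per_server_tps - 1) // per_server_tps


# Hypothetical figures for illustration only.
print(cluster_capacity(50_000, 8))         # 8 servers at 50,000 tps each
print(servers_needed(1_000_000, 50_000))   # servers required for 1M tps
```

In practice, shared components such as the network and market data feed are likely to add overhead, so a linear estimate like this is best read as an upper bound.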

New Dimension in Trading?

HPC has introduced a new dimension to trading in financial markets. Transactions are filled in microseconds. Split-second decisions are made by machine. HPC is a game changer that all stakeholders in the trading cycle—including analysts, traders, regulators and customers—are getting their arms around.

“It is a vicious circle if you think of the influence of high-performance trading on high-frequency trading,” says Oleg Solodukhin, global head of Market Data at Devexperts, a provider of financial software and services based in Munich, Germany. “High-performance computing advances produce essential assets for high-performance trading, which in turn is a major factor in accelerating development in HPC. This process leads to the dominance in terms of volume of high-frequency trading over human-based trading. Acceleration allows the latency to be brought down to single-digit microseconds. The speed of decision-making in high-frequency trading is already far beyond human capabilities, and development of high-performance trading continues to widen the gap between algo-trading robots and humans. The volume also continues to grow since high-performance trading capabilities increase, and, in our vision, requires additional regulation or introduction of high-frequency trading specific fees.”

Traders range from big banks to small boutique firms. Some have their own proprietary equipment and technology. Many use a scalable, cloud-based configuration that a vendor hosts off premises. This gives smaller firms that don’t want or need a sophisticated IT staff and on-site equipment more access to the merits of HPC. Cloud-computing vendors that offer HPC data services provide the flexibility that makes real-time, high-performance and high-frequency trading a reality for many in the financial services industry.

Infinite Flexibility

“Research with hundreds of thousands or millions of observations is now commonplace, and researchers are able to conduct many of these large-scale statistical analyses with an ordinary laptop, operated from the comfort of the corner coffee shop,” said Scott W. Bauguess, acting director of the Securities and Exchange Commission’s Division of Economic and Risk Analysis, in a 2016 address to the Midwest Region Meeting of the American Accounting Association in Chicago. “For more intensive and complicated computing needs, the institutions we work for are no longer required to invest in expensive computing environments that run on premises. Access to high-performance cloud computing environments is ubiquitous. You can rent CPU time by the cycle, or by the hour, and you can ramp up or down your storage space with seemingly infinite flexibility.”

Despite the convenience and flexibility that cloud services offer, many firms are weighing an investment in the assets and infrastructure needed for their own HPC capability. Owning that capability could reduce overall cost and provide a return on investment for years to come.

“For the broader environment, the regulatory environment is driving much increased demands for risk analysis and data analytics,” Bauguess says. “This is causing financial service industry companies to want to increase the amount of computing they do, and the volume of data they collect and analyze, while inevitably they’re also being driven to lower costs. This conflict is driving a great many questions about outsourcing parts of their workloads to the public cloud.”

Few firms are willing to move their full computing and data management environments to the public cloud, so the real interest is in hybrid cloud environments. “This raises the question of how much to keep on premise vs. off-premise in the cloud, which will inevitably be different for every firm,” Bauguess says.

Built to Scale for Growing Demand

Concurrent with the investment in hybrid cloud solutions is an explosion in data that is putting an increased focus on storage and computation, Turkel says. Traditional scale-out storage models and Elastic Cloud Storage (ECS) are growing exponentially, along with the tools to analyze that data. He says this is leading to a growing interest in machine learning technology and solutions as a way of finding trends in the data, detecting fraud and other uses.

So where will we be in the next three to five years?

“We see several short-term trends in high-performance trading in the equities and financial market environment,” Solodukhin says. “First, we see a continuous growing demand to host high-frequency trading solutions on public clouds from Amazon Web Services, Google Cloud and Azure. Second, financial market participants continue to invest in machine learning and AI algorithms that run both on common and specialized hardware. Third, and maybe the most significant one, we should expect a major boost and new end-to-end computing solutions from collaborative effort of open source frameworks, driven by community and niche proprietary hardware vendors.”

Moore’s Law, the observation that the number of transistors on a chip doubles roughly every two years, continues to drive increases in compute performance, and specialized processors are accelerating that in some cases, which allows for more compute or lower cost. “Some firms are having great success using application accelerators for risk applications,” Turkel says. “On the storage side, scale-out storage is where the growth is, while cloud/object storage is dropping the cost down dramatically, even as the volume of storage is increasing. Between the two, solid-state memory and storage technologies are enabling tiered memory [and] storage models that allow for more data to be held in faster memory or storage closer to the processing elements, significantly increasing performance of data analytics applications.”

Turkel says that machine learning technologies, as they become more mature, are likely to have enormous impact in a variety of areas of financial services.

“All risk models require that the model builder decide upfront what parameters will be used as the input to the models,” he adds. “If a relevant parameter is missed, the model’s accuracy can be lowered. But machine learning can learn from vast sources of structured and unstructured data to develop models that can evolve naturally over time as more learning takes place.”

More Info

Securities and Exchange Commission’s Division of Economic and Risk Analysis

Sponsored Content: Partner Profile

Dell EMC and Nallatech: OpenCL Integrated Platforms with FPGA PCIe Accelerators

Nallatech provides a family of FPGA PCIe hardware accelerators that are fully configurable using OpenCL. This software-based programming model has democratized the use of FPGA technology and multiplied the opportunities to deploy FPGA compute across the datacenter. FPGA experts, as well as newcomers, can now develop and deploy their FPGA-based products in record time.

The need for FPGA compute across multiple markets has become more apparent in recent years. Various industries have used FPGA technology over the last two decades, but the spread of high-level programming tools, like the Intel OpenCL SDK, and low-level improvements to the FPGA architecture, like hardened floating-point fabric, have opened new doors for the technology. Leading the trend, Nallatech is providing solutions to facilitate the integration of FPGA compute in the datacenter and the transition from prototyping to production.

The Solution

Nallatech FPGA Accelerators integrated in Dell EMC servers provide a production-ready solution, for FPGA experts and newcomers, to develop and deploy FPGA algorithms in the datacenter. With this ready-to-use solution, FPGA programmers can focus completely on developing their own massively parallel and compute intensive applications while reducing power consumption and total cost of ownership.

About the Author

Jim Romeo is a freelance writer based in Chesapeake, VA. Send e-mail about this article to [email protected].