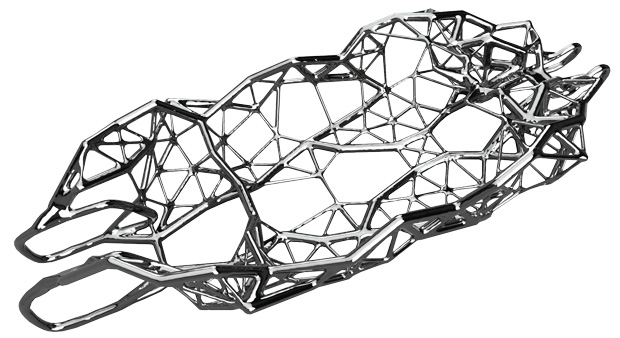

Hack Rod and Autodesk used data from sensors in a custom car (and on its driver) to measure strains and stresses. They then fed that data into Dreamcatcher, which used the real-world information to create a new body design that improved the vehicle’s ability to withstand those stresses. Images courtesy of Autodesk.


December 1, 2016


Drones that can inspect cell phone towers and identify maintenance issues. Self-driving cars that learn how to react to unexpected obstacles in the road. Virtual phone assistants that can respond to your hastily spoken questions. Breakthroughs in artificial intelligence (AI) technology are enabling everything from autonomous vehicles to more accurate speech and image recognition.

AI also presents an opportunity for engineers to improve design optimization and increase their efficiency via machine learning systems that can automate a number of tedious design tasks.

AI sprang from science fiction and then made the leap to reality via early computing experiments in the 1950s and ’60s. Over the last several years, the technology has rapidly advanced, in part due to the development of complex neural networks whose algorithms allow these systems to teach themselves how to do things and to keep getting better at those tasks as they are exposed to more data. Decades-old AI technology has taken a huge leap forward thanks to vast Big Data stores, high performance computing resources and powerful processing.

“The training of the system is a heavy process, but one reason that AI is resurgent now is because using GPUs (graphics processing units), the calculations can be run in hours,” says Andrew Cresci, general manager of the Industrial Sector at NVIDIA. “It’s become a very practical operational tool.”

These neural nets learn the way children do, improving their performance by performing actions or analysis thousands of times until they master a skill, just at a highly accelerated rate. This deep learning approach mimics the function of the neocortex, learning to recognize patterns in digital data.
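The idea of learning by repetition can be shown with a deliberately tiny sketch. This is not how any of the systems named in this article are built; it assumes a single adjustable weight, an invented data set that follows y = 2x, and a hypothetical `train` function. Each pass over the data nudges the weight so the next guess is slightly less wrong.

```python
# Toy illustration: a one-weight "network" learns y = 2x by repetition.
# The data, learning rate and function name are assumptions for this sketch.
def train(samples, epochs, lr=0.01):
    w = 0.0  # start with no knowledge of the relationship
    for _ in range(epochs):
        for x, y in samples:
            error = w * x - y    # how wrong the current guess is
            w -= lr * error * x  # nudge the weight to shrink that error
    return w

samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
few = train(samples, epochs=1)      # one pass: still far from 2
many = train(samples, epochs=1000)  # many passes: very close to 2
print(few, many)
```

Real deep learning systems adjust millions of weights rather than one, which is why the GPU acceleration Cresci describes matters so much.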

“Modern products are becoming increasingly complicated, and AI accelerates the design process through faster, more efficient engineering,” says Nidhi Chappell, director of Machine Learning, Data Center Group, Intel. “Machine learning, a leading technique for AI, is being used to optimize engineering through more efficient design and quality control. AI can be used to reduce the design cycle and detect more bugs during development, and quickly browse through many thousands of historical test records to uncover hidden patterns—a task which would otherwise take a human hundreds to thousands of hours and would be impractical to perform manually. There are countless examples of how this technology is transforming business today.”

Some of those examples include:

• A Google deep learning system that studied millions of YouTube videos was shown to be twice as effective at image recognition as any previous system.

• A reduction in the error rate in Android’s speech recognition capabilities.

• Google’s AI system teaching itself to encrypt data.

• Microsoft has demonstrated a speech recognition system that can transcribe speech and then translate it into multiple languages.

• IBM, which was a pioneer in the space with its Watson platform, is using that technology in a number of applications. That includes health care, where the cognitive computing system is being used to help physicians make medical decisions.

• Design co-op Local Motors is using Watson to add cognitive computing to its Olli self-driving vehicle, which can analyze and learn from transportation data generated by sensors on the vehicle.

Meanwhile, NVIDIA, Intel and others are pushing the envelope on compute power, making it possible for these systems to advance even faster. For example, in August it was announced that Intel had acquired Nervana, a startup focused on AI software and hardware, to advance Intel’s AI portfolio and enhance the deep learning performance of Intel Xeon and Intel Xeon Phi processors.

“Today, it still takes far too long to develop and deploy intelligent systems,” Chappell says. “We must shrink the time that it takes to ingest data, build models and especially to train complex neural networks—which can take days or weeks depending on the level of complexity.”

For designers working on future products, AI will be an increasingly important product component in many sectors as more “smart” machines are created. AI can also have a hand in the design process itself—considerably shortening development cycles.

“Machine learning ties together the entire design-make cycle,” says Mike Haley, senior director of Machine Intelligence at Autodesk. “This will impact the entire design process. Eventually, data from products in the field will be aggregated and used as a predictor of what worked in the design and what didn’t, and you have this intelligent simulator that gets better and better because of what it is learning. It will make design easier.”

Autodesk recently partnered with machine intelligence specialist Nutonian to embed the Eureqa artificial intelligence modeling engine into its IoT cloud platform Fusion Connect. The combined platform will be used to create predictive models for product failures or design flaws, by using data to create equations that represent what is actually happening with a device in the real world. The company’s Design Graph product is another machine learning system that helps users manage 3D content.

Autodesk has even extended its use of AI into customer support, teaming with IBM to create Otto, a digital concierge that uses IBM Watson technology to manage customer and partner inquiries.

One of the most well-known AI-related projects at Autodesk is the Dreamcatcher generative CAD software that uses machine learning techniques to generate designs based on designer-designated objectives related to function, materials, performance criteria, cost constraints and other data.

Ultimately, AI could even make manufacturing easier. “For example, setting up a CNC (computer numerically controlled) molder is a complex, difficult task,” Haley says. “But that is a task that could be machine learned to a fairly large extent. It could make that technology much easier to use in the future.”

Other software companies may follow suit. Bricsys’ BricsCAD solution may also integrate AI at some point. At the company’s recent user conference, executives mentioned the possibility of using AI to automate walls, floors, stories and other elements in the conversion to a full building information model.

“What AI can do is predict design intent if you have some idea of where you are headed and what you are trying to build based on what the system has seen in the past,” NVIDIA’s Cresci says. “That’s one approach. There is also predictive design, where you tell the system the type of thing you want and it will iterate around that design to help predict the best combination of parameters.”
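A rough sketch of the "iterate around that design" idea follows. It is a plain brute-force search over candidate dimensions, not machine learning and not any vendor's method. It assumes a rectangular cantilever beam with the standard tip deflection formula (F·L³ / 3·E·I, with I = b·h³ / 12), and the load, length, material stiffness and deflection limit are all invented for illustration.

```python
# Illustrative values only: load, span, material stiffness and deflection limit.
FORCE_N = 1000.0
LENGTH_M = 1.0
YOUNGS_MODULUS_PA = 200e9  # roughly steel
MAX_DEFLECTION_M = 0.005

def tip_deflection(width_m, height_m):
    """Tip deflection of a rectangular cantilever under an end load."""
    second_moment = width_m * height_m ** 3 / 12.0
    return FORCE_N * LENGTH_M ** 3 / (3.0 * YOUNGS_MODULUS_PA * second_moment)

def best_section(widths_mm, heights_mm):
    """Return the (width, height) in mm with the smallest area that is stiff enough."""
    best = None
    for w in widths_mm:
        for h in heights_mm:
            if tip_deflection(w / 1000.0, h / 1000.0) > MAX_DEFLECTION_M:
                continue  # too flexible, reject this combination
            if best is None or w * h < best[0] * best[1]:
                best = (w, h)
    return best

best = best_section(range(10, 55, 5), range(10, 105, 5))
print(best)
```

A learning-based tool would aim to reach a good combination without evaluating every candidate, but the goal is the same: the designer states the constraint and the software explores the parameter space.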

Merging Artificial Intelligence and Design Software

Combining AI and CAD will require design companies to have access to large amounts of data for the systems to “learn” how to perform whatever function is required. Unlike deep learning approaches, logic-based machine learning approaches would require product components and structures to be stored in hierarchical form.

Autodesk’s first foray into machine learning was Design Graph, a system that uses algorithms to manage large stores of 3D design data. The solution creates what the company calls a “living catalog” that categorizes every component and design created by a company.

Designers can search for a part type and view hundreds of potential options. The system can identify designs based on their shape, structure and other characteristics without the need for labeling or metadata. A360 users can search Design Graph for existing design files that fit their parameters.
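One common way to search by shape rather than by label is to describe each part as a list of numbers and rank parts by how close those numbers are. The sketch below illustrates that general technique only. Autodesk has not described Design Graph's internals here, and the part names, the three descriptor values and the `most_similar` function are all hypothetical.

```python
import math

# Hypothetical catalog: part name -> shape descriptor
# (length-to-width ratio, hole count, ridges per cm). All values are invented.
catalog = {
    "part_a": (6.0, 0.0, 8.0),
    "part_b": (5.5, 0.0, 7.5),
    "part_c": (1.0, 4.0, 0.0),
    "part_d": (1.2, 1.0, 0.0),
}

def most_similar(query, parts, k=2):
    """Return the k part names whose descriptors are closest to the query."""
    ranked = sorted(parts, key=lambda name: math.dist(parts[name], query))
    return ranked[:k]

# A long, ridged, hole-free query shape matches the two bolt-like parts.
result = most_similar((5.8, 0.0, 8.0), catalog)
print(result)
```

No part in this catalog carries a text label such as "bolt"; the match comes entirely from geometry-derived numbers, which is what lets such a search work without manual metadata.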

“This was a great place to start with AI, because people produce a lot of 3D data, but the limiting factor was having humans curate the content,” Haley says. “It was a much better idea to have a machine learning system collate the data, learn your own unique taxonomy and deliver it in just-in-time fashion. The tool can predict what you need and give you a custom catalog. It can prevent duplication of components.”

AI can also be used to create designs from scratch using specific constraints and other data. Or it could be used to help quickly iterate and optimize existing designs.

What’s more, design software can use data generated by smart products to help improve next-generation designs of the same item. This combines the concept of the IoT with AI. Autodesk worked with the Bandito Brothers on the Hack Rod project, for instance, which used data from sensors in a custom car (and on its driver) to measure strains and stresses. They then fed that data into Dreamcatcher, which used real-world information to create a new body design that improved the vehicle’s ability to withstand those stresses.

Dreamcatcher isn’t an AI product in and of itself, but it does use machine learning to generate multiple design options by rapidly evaluating design trade-offs. This generative design solution can help designers concentrate on the creative aspects of a design, instead of more repetitive design tasks.

Artificial Intelligence Challenges

Integrating AI technology into design and engineering processes will require both an investment in data collection and gaining the trust of end users. “You want the system to be trusted by the user, otherwise they will not be able to take full advantage of capabilities of the AI system,” says Francesca Rossi, AI ethics researcher at IBM Research. “We need to build systems that create trust between the human and the system.”

Intel’s Chappell says trust in AI will depend on making society aware of the potential AI has to transform the world and solve previously intractable problems, and then bringing together government, business and society’s thought leaders to address the potential adverse effects of AI. “The promise of AI goes beyond automating dangerous or tedious tasks, such as driving, to accelerating large scale problem solving, unleashing new scientific discovery, and extending our human senses and capabilities,” Chappell says. “This new symbiosis between humans and machines will expand our capacity and lead to unprecedented productivity gains.”

Trust can be built through verification of the solution using test data and letting end users see how the solution can potentially function. “The trust is based on your confidence in the test data and the ability for the system to deliver very high accuracy,” says Jim McHugh, vice president and general manager at NVIDIA.

“If the pain is great enough, they will trust it,” Haley says. “Even if the system gets things wrong, it can still generate insight or find designs and correlations that you didn’t know existed. Even a system that has error in it is still often better than the manual systems people have today.”

Another challenge is making sure you have reliable data to train the system. “People do need to make that leap of faith in believing the system is accurate,” McHugh says. “The prep work necessary to get you there is the big challenge.”

Having enough of the right data is critical. In the case of Design Graph, Autodesk lets the product learn from its entire customer base, not just the data in place for a specific customer. “It’s not learning your specific designs, but it can learn to identify components like bolts. We are going to mine everybody’s data at a certain level. By allowing the system to learn using data about bolts, we aren’t giving any design secrets away. But the solution can identify any bolt in the world.”

Data is going to be the key for firms preparing to leverage this type of technology. Companies should identify the type of data they might need to train an AI solution (energy simulations for buildings, or the effect of certain stresses on an aircraft part, for example), and make sure they can accumulate the data.

However, that level of data sharing still creates unease for many firms that are sensitive about their data. The use of AI is also going to cause additional disruption because it will fundamentally alter the way a designer (and everyone else) works.

“You also have to get educated really quickly,” Haley says. “Look around and see if you can create worthwhile data sharing arrangements or data partnerships across your industry.”

“In my entire career I’ve never witnessed the speed of the technology explosion like we’ve seen with machine learning,” Haley adds. “How you are going to work is going to change quickly—at a frightening pace for a lot of people.”

A lot of people still don’t quite grasp what AI is or how it might work for them. For example, one common misperception is that you can simply turn the AI system on and it will work. Machine learning or deep learning systems have to go through an actual learning process. The software itself is always fundamentally shifting and changing, which is a difficult concept to grasp.

Earlier this year, Amazon, DeepMind/Google, Facebook, IBM and Microsoft formed the non-profit Partnership on Artificial Intelligence to Benefit People and Society (also called the Partnership on AI) to conduct AI research, develop best practices and encourage the adoption of AI systems that humans are comfortable working with.

The group is also working on educational efforts so that industry users are able to fully comprehend how the technology works and understand its potential.

“The role of the partnership is to make everybody understand what the real capabilities of AI are, and where it can be useful in our professional and private lives,” IBM’s Rossi says. “We can also help improve AI to make it not just effective for our goals, but also improve it so it is more aligned with humans.”

That will be important as AI continues to evolve. Haley expects there will be three eras of AI software: 1. the use of intelligent tools, like design optimization software; 2. truly intelligent assistants that can help engineers complete tasks—finishing parts of a common design or layout, for example; and 3. an AI system that functions as a trusted collaborator. “This is 10 years out, but AI will be anthropomorphized,” Haley says. “It will be akin to working with a colleague.”

Designing Smarter Products

Designers will also be challenged to integrate AI into an increasing array of products and smart machines. That will require designing in some level of flexibility, because the products themselves will learn to perform tasks in better ways over time. In some cases, they’ll even learn new tasks.

For example, factory robots that in the past were created to perform specific tasks (completing a single weld in an automotive assembly, for instance) will be replaced with general purpose robots that can be trained to perform multiple tasks under varying conditions. “By incorporating AI, you can make the robot responsive to its environment,” Haley says. “You can then make the technology more horizontal. Instead of a robot that is dedicated to spot welding, you have a bunch of robots who can figure out what they need to do based on what’s in front of them.”

Low-power system on a chip (SoC) processing capabilities can be incorporated into very small items. There will be heavier sensorization of machines, along with intelligence added at the edge so that machines can learn from and respond to their environment. Those machines can be connected to nearby servers that can aggregate data from multiple devices in a single location. In turn, that information can be fed into cloud systems that provide a higher level of machine learning.

Engineers should ask themselves whether they have four key things, says Chappell: 1. a deep understanding of problems they’re trying to solve; 2. access to data; 3. organizational support to pursue a non-deterministic timeline; and 4. access to AI technology.

“If the answers to the first three questions are yes, or yes maybe, then it makes sense to ask questions to determine the optimal AI technology,” Chappell says. “Does the solution provide compelling price-performance? Does the solution simplify development? Does the solution scale efficiently and seamlessly? Is the solution narrow or broad? Is the solution future-proof?”

Data used to train these systems also has to be accurate and free from data bias. The types of potential bias will vary depending on the application, but the training should take into account all of the likely operational conditions and end users. For example, if a system is supposed to respond to speech input, it should be trained to respond to end users with different accents or speaking different languages.
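A simple first check for this kind of bias is to count how much of the training data comes from each group the product is expected to serve. The sketch below assumes each speech sample is tagged with an accent label; the labels, the 10% threshold and the `underrepresented` function are illustrative choices, not an established standard.

```python
from collections import Counter

def underrepresented(labels, expected_groups, min_share=0.10):
    """List expected groups that make up less than min_share of the samples."""
    counts = Counter(labels)
    total = len(labels)
    if total == 0:
        return sorted(expected_groups)  # no data at all: every group is missing
    return sorted(g for g in expected_groups if counts[g] / total < min_share)

# Invented training set: accent label attached to each speech sample.
accents = ["us"] * 70 + ["uk"] * 25 + ["in"] * 5
gaps = underrepresented(accents, {"us", "uk", "in", "au"})
print(gaps)
```

A check like this only catches missing coverage. It says nothing about whether the samples within each group are accurate or representative, so it complements rather than replaces testing under real operating conditions.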

Design tools will need to be able to effectively incorporate machine or IoT data in a meaningful way. “Everything is going to be automated,” Cresci says. “There will be non-stop monitoring of products and constant engagement with the machine so there are never issues of failure.”

Haley says to expect more AI and machine learning-based products in the design space. He also says that exactly how the technology will be used is still going to be in flux. “As we pivot as a company into doing AI, we have to figure out what is valuable and what is not valuable, and there are going to be some AI solutions that aren’t that great,” Haley says. “The only way we can discover what is going to work is by doing a lot of experiments.”


About the Author

Brian Albright is the editorial director of Digital Engineering. Contact him at [email protected].
