HPC Handbook: Simulation Successes

Latest News

February 2, 2016

Editor’s Note: This is an excerpt of Chapter 8 from The Design Engineer’s High-Performance Computing Handbook. Download the complete version here.

Throughout The Design Engineer’s High-Performance Computing Handbook, we’ve covered the basic concepts, theories, industry statistics and hardware that support HPC. However, the best way to show the impact and versatility of these systems is through real-world applications. As HPC matures and technologies such as the cloud make it more accessible, engineers will be able to apply more processing and simulation power throughout the design process.

In this chapter, we’ve compiled five use cases so you can see how engineers benefit from simulation-led design:

1. BLOCK Transformatoren-Elektronik

A manufacturer of coiled products that are used in a variety of industries, especially for electronics applications, BLOCK expanded its simulation resources with COMSOL Multiphysics and HPC. The company can run its simulations on a multicore workstation with no limit to the number of cores, as well as on a cluster with no limit to the number of compute nodes. This improves efficiency whether a simulation runs on a workstation or a cluster, so the R&D team can now quickly deliver the best products to customers.

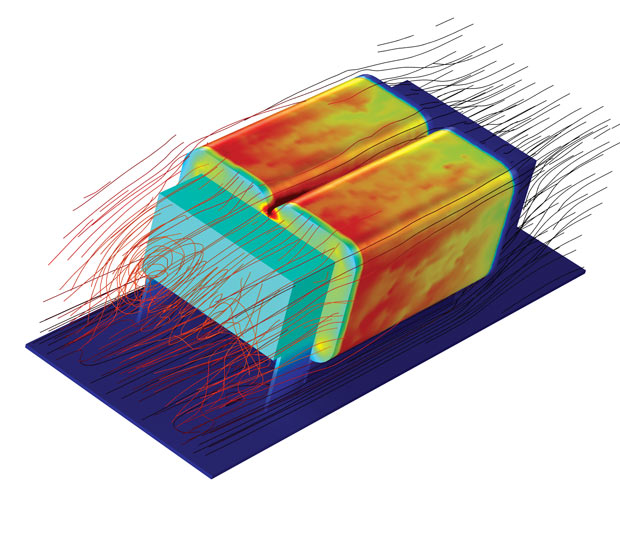

Simulation of an air-cooled DC choke where temperature distribution and velocity streamlines are shown. Image courtesy of BLOCK Transformatoren-Elektronik.

“I’m currently using a cluster with 22 cores and 272GB of RAM and I can easily run my simulations remotely on it,” says Marek Siatkowski, who is responsible for all of BLOCK’s simulation activities. “COMSOL supports distributed memory computing where each node of a cluster can also benefit from local shared memory parallelism; this means that I’m getting the most out of the hardware available.”

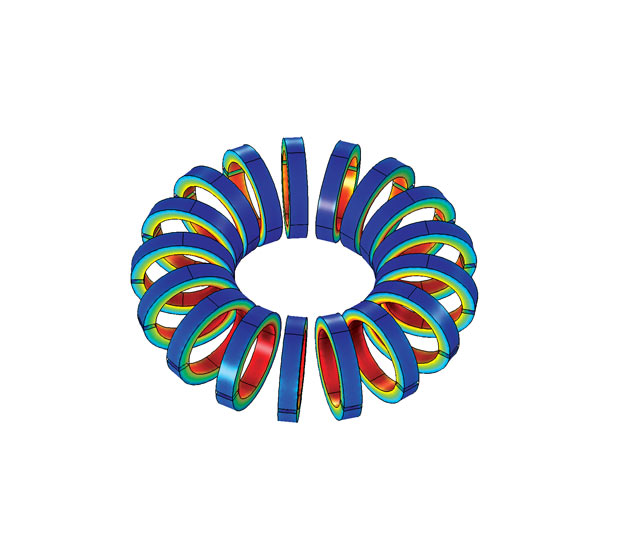

Magnetic flux density in a toroidal choke is pictured. Image courtesy of BLOCK Transformatoren-Elektronik.

2. Pinnacle Engines Looks Into the Flames

Tony Willcox, director of simulation and controls at Pinnacle Engines, can gauge an engine’s average performance characteristics such as torque, power, fuel flow and emissions via testing, but he and the simulation team are also interested in what they cannot see or measure.

“There are things we can’t measure in a test, but can mimic in simulation,” Willcox says. “For instance, we could turn off heat transfer to see what the engine’s efficiency would be without heat loss in the cylinder. Or we could turn off the leakages through the piston rings. With these options, we can better understand the impact of different factors on our design.”

Pinnacle Engines’ choice of software is CONVERGE CFD from Convergent Science. “When we need an answer, we need it in a matter of days,” says Willcox. “We can’t wait for weeks. There are too many parameters to sweep, so even if we had the hardware, we wouldn’t be able to investigate all of them.” That is, unless the design parameters can be evaluated in parallel.

The answer is on-demand computing infrastructure designed specifically for Design of Experiments (DOE)-type simulation. In this case, the answer also turned out to be just 40 miles away from Pinnacle Engines’ San Carlos, CA, headquarters. San Francisco-based Rescale, a cloud simulation supplier, offers a scalable on-demand platform, a combination of software and hardware, for those seeking to do precisely what Pinnacle Engines wants to do. The company makes its solution available directly in the Web browser. It also has a partnership with Convergent Science, which simplifies acquiring additional licenses for parallel simulation runs.
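To make the idea of a parallel DOE sweep concrete, here is a minimal Python sketch. It is an illustration only, not Pinnacle Engines’ or Rescale’s workflow: `run_case`, its two parameters and its scoring formula are hypothetical placeholders standing in for a real solver run, and the thread pool stands in for whatever on-demand hardware would execute each case.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def run_case(compression_ratio, spark_advance_deg):
    """Hypothetical stand-in for one simulation run.

    A real sweep would launch a solver job here and wait for its result.
    The formula below is a toy score, not engine physics.
    """
    return -(compression_ratio - 12.0) ** 2 - 0.1 * (spark_advance_deg - 20.0) ** 2


def sweep(ratios, advances, max_workers=4):
    """Evaluate every combination of the two parameters concurrently."""
    cases = list(product(ratios, advances))  # full-factorial design
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Cases are independent, so they can be dispatched in any order.
        scores = list(pool.map(lambda case: run_case(*case), cases))
    return dict(zip(cases, scores))


results = sweep([10.0, 12.0, 14.0], [10.0, 20.0, 30.0])
best = max(results, key=results.get)
print(f"{len(results)} cases evaluated, best case: {best}")
```

Because each case is independent of the others, the wall-clock time of such a sweep is governed by how many cases can run at once, which is why elastic, on-demand capacity suits this kind of study.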

3. UberCloud Team 171’s Dynamic Study on Frontal Crash of a Car

Physical crash testing destroys a number of test vehicles, which is time-consuming and uneconomical. An efficient alternative is virtual crash testing, in which the tests are performed by computer simulation using finite element (FE) methods.

One study by an UberCloud team focused on the frontal crash simulation of a representative car model against a rigid plane wall. The computational models were solved using the FE software LS-DYNA, which simulates the vehicle dynamics during the crash test. The cloud computing environment was accessed using a VNC (virtual network computing) viewer through a Web browser. The 40-core server, with 256GB of RAM, was hosted at Nephoscale, a cloud computing host. The LS-DYNA solver was installed and run on an ANSYS platform with multi-CPU allocation settings.

The representative car model was traveling at a speed of 80 km/hr (49.7 mph). The LS-DYNA simulation evaluated the damage caused to the car assembly when it struck the rigid wall at that velocity. Component-to-component interactions were analyzed, and the car’s impact behavior was visualized. Plots from the study highlight the damage caused to the car.

The impact study was carried out in an HPC environment built on a 40-core cloud server running the CentOS operating system, with the LS-DYNA simulation package running on an ANSYS platform. HPC performance was evaluated by building simulation models at different mesh densities, starting with a coarse mesh and refining it to a fine mesh with 1.5 million cells.

The advantage of the HPC cloud resource is that it lets LS-DYNA solve the simulation model in a shorter run time. The use of the HPC cloud has enabled simulations that include complex physics and geometrical interactions. Problems of this kind require computing hardware and resources beyond what a normal workstation can provide.

4. Farr Yacht Design

One of the top racing-yacht teams in the world, Farr Yacht Design collaborated with Penguin Computing and NUMECA on the company’s extensive simulation needs.

“Because of the huge number of data points and the hundreds of runs required, if we had been running the NUMECA FINE/Marine CFD software exclusively on our in-house HPC cluster, it would have taken us over six months,” says Britton Ward, vice president and senior naval architect at Farr. “By being able to access POD (Penguin on Demand), we got our results in six weeks,” he comments.

POD provides a persistent and secure compute environment designed specifically for HPC. Jobs are executed directly on physical systems and all systems are connected through a low latency QDR InfiniBand fabric for optimal application scalability. POD provides Farr with access to needed computational resources when the company’s internal cluster, consisting of a number of standard x86-based servers, cannot provide the compute power needed to run complex simulations often involving computational fluid dynamics (CFD) and finite element analysis (FEA).
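The turnaround Ward describes can be expressed as a rough speedup figure. The short Python sketch below does the arithmetic; it assumes six months is about 26 weeks (half of a 52-week year), and because the in-house estimate was “over six months,” the result should be read as a lower bound rather than a measured value.

```python
def speedup(baseline_weeks, accelerated_weeks):
    """Ratio of the baseline turnaround to the accelerated turnaround."""
    if accelerated_weeks <= 0:
        raise ValueError("accelerated_weeks must be positive")
    return baseline_weeks / accelerated_weeks


# Assumption: "six months" is taken as 26 weeks (half of a 52-week year).
SIX_MONTHS_IN_WEEKS = 26
POD_WEEKS = 6  # "we got our results in six weeks"

farr_speedup = speedup(SIX_MONTHS_IN_WEEKS, POD_WEEKS)
weeks_saved = SIX_MONTHS_IN_WEEKS - POD_WEEKS

print(f"Turnaround improved by at least {farr_speedup:.1f}x")
print(f"Schedule shortened by at least {weeks_saved} weeks")
```

Under these assumptions the on-demand capacity cut the turnaround by a factor of a little over four.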

5. Stanley Black & Decker Optimizes Designs

Known for its tools, Stanley Black & Decker (SBD) is a diversified global provider of hand tools, power tools and related accessories, mechanical access and electronic security solutions, healthcare solutions, engineered fastening systems, and more. When it came to optimizing a hammer mechanism design for their top-selling rotary hammers, SBD engineers knew they needed a computer-aided engineering (CAE)-based approach.

To meet time-to-market requirements, Stanley Black & Decker turned to HPC-powered CAE for design exploration. Image courtesy of Stanley Black & Decker.

“Optimization by CAE is the only realistic way to achieve this; the requirements are just too complex to rely on experience-based knowledge or pure physical testing,” says Andreas Syma, director of Global Computer Aided Engineering at SBD. Previously, SBD had been using workstations and CAE software with an optimization runtime of about three weeks, but Syma’s goal was to reduce that to a weekend.

Altair introduced SBD to HyperWorks Unlimited, a fully managed HPC appliance for CAE. This turnkey appliance includes pre-configured HPC hardware and software, with unlimited use of all HyperWorks applications plus PBS Works for HPC workload management. SBD chose a 160-core HyperWorks Unlimited system, which Altair delivered and installed in just a few days. “The system just works,” says Syma. “It’s bringing up our utilization with no performance or maintenance issues to date.”

About the Author

DE’s editors contribute news and new product announcements to Digital Engineering.
