Sorting Out the Need for Personal Supercomputers

The power on your desktop can drive the most demanding applications.

January 3, 2010

By Peter Varhol

The history of personal computing is a very short one. It was only in the latter part of the 1960s that professionals could hope to have a time-sharing terminal on their desks. In the 1980s, Unix workstations were reserved for the most demanding engineering tasks, with the X Window System letting multiple users share a single machine's computational horsepower.

The Dell Precision line is a capable platform for high-end engineering design work. It is shown here running Dassault Systèmes CATIA.

Supercomputers were an entirely different type of beast. Born in the 1960s out of Control Data Corporation, they were simply the fastest computers money could buy. They used specially designed processors and were particularly fast at floating-point math. In the 1970s and 1980s, they were designed and manufactured by the likes of Cray (two separate companies bore the name of Seymour Cray), SGI, Pyramid, Thinking Machines, and IBM.

Today supercomputers are custom-designed parallel processing machines built to the needs and specifications of individual buyers. They use industry-standard processors, but hundreds or even thousands of them, and typically cost millions of dollars. But it is possible for individual engineers to have 80 or even 90 percent of the performance of a custom-designed supercomputer right on their desks, or more likely under their desks in a standard PC tower configuration. It might cost a few thousand dollars, or at the top end a few tens of thousands, but you can have more power on your desk than was available in any research lab only a few years ago.

The Nehalem processor from Intel is capable of powering demanding applications that require Intel compatibility.

How? Mostly it’s thanks to faster industry-standard processors, the PC architecture, and the race to the bottom on prices. But there is also help from other processors that are better at certain kinds of computation, such as floating-point math, than the industry-standard Intel processors.

The Different Levels of Supercomputer

There are three levels of personal supercomputer. The first is the faster Intel-processor computer. It might be a quad-core Xeon desktop with up to 192GB of memory, or a Xeon or Itanium system with two or four processors, each perhaps with multiple cores.

By any measure, this is a fast computer. According to the popular SPEC benchmark, a Dell Precision with the Intel Xeon X5470 processor reached a peak SPECmark of 36.7, one of the top values recorded on a standard PC configuration.
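SPEC's CPU benchmarks boil a suite of programs down to one number: each program's run time is compared with a reference machine's time, and the resulting ratios are combined with a geometric mean. The sketch below shows only that arithmetic, using made-up timings; it is not SPEC's official tooling, and the function name is hypothetical.

```python
import math

def spec_style_score(reference_secs, measured_secs):
    """Geometric mean of per-benchmark ratios (reference time / measured time)."""
    if len(reference_secs) != len(measured_secs) or not reference_secs:
        raise ValueError("need matching, non-empty lists of run times")
    ratios = [ref / run for ref, run in zip(reference_secs, measured_secs)]
    # Averaging logarithms avoids overflow when many ratios are multiplied.
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))

# Hypothetical timings for three benchmarks (not real SPEC data).
reference = [9000.0, 12000.0, 6000.0]
measured = [250.0, 400.0, 150.0]
print(round(spec_style_score(reference, measured), 1))
```

The geometric mean keeps one unusually fast or slow program from dominating the overall score the way an arithmetic mean would.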

The second level starts with the same computational power as the first and adds one or more high-performance coprocessors. A coprocessor such as the NVIDIA Tesla is designed for superior floating-point performance. Because such graphics processing units (GPUs) are inexpensive, you could run several of them in parallel and still put a supercomputer on your desk. The drawback of this configuration is that you need code written to take advantage of the coprocessor and its particular architecture.

The third level includes specialized memory and processor architectures. While the processors will be off the shelf, how they are configured, and how they access memory, are likely proprietary and optimized for high performance. These systems are typically designed for specific uses, or are laboratory projects. Depending on the configuration and purpose, such a system can cost anywhere from the low five figures to well into six figures.

At the Low End

You can move into the lower end of the personal supercomputing range with high-end off-the-shelf systems for under $2,000. It starts with Intel’s Nehalem architecture, which is revolutionary in a number of ways that will benefit users such as engineers who require very high-performance systems on a budget.

One significant innovation in Nehalem (now incorporated into the Xeon chip line) is its so-called Turbo Boost Technology, which automatically delivers additional performance when needed by taking advantage of the processor’s power and thermal headroom. It does so by overclocking, a technique well known to anyone who built his or her own PCs 15 or 20 years ago. Because many Intel processors could run faster than their rated clock speeds, replacing the clock crystal with a faster one was an easy way to get more speed out of a slower, less-expensive processor.

Nehalem does it in a different way. When it detects that a processor core is running close to capacity, it raises that core's clock speed one step at a time, as long as power and thermal headroom allow, so the core can handle its workload more easily. As the workload diminishes, it clocks back down to its normal speed.
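The stepping behavior can be pictured as a simple control loop. The toy model below is a hypothetical sketch, not Intel's implementation (Turbo Boost is managed by the processor itself): the base clock, the step size, the step limit standing in for power and thermal headroom, and the 95 percent "busy" threshold are all assumed values.

```python
from dataclasses import dataclass

@dataclass
class TurboModel:
    base_mhz: float = 2930.0      # assumed rated clock speed
    step_mhz: float = 133.0       # assumed size of one frequency step
    max_steps: int = 2            # assumed limit imposed by power/thermal headroom
    busy_threshold: float = 0.95  # assumed meaning of "close to capacity"
    steps: int = 0                # current number of steps above the rated clock

    def update(self, utilization: float, has_headroom: bool) -> float:
        """Move the clock at most one step and return the new frequency in MHz."""
        if utilization >= self.busy_threshold and has_headroom and self.steps < self.max_steps:
            self.steps += 1  # busy and within limits: step up
        elif (utilization < self.busy_threshold or not has_headroom) and self.steps > 0:
            self.steps -= 1  # load dropped or limits reached: step back toward rated speed
        return self.base_mhz + self.steps * self.step_mhz

cpu = TurboModel()
for load in [0.5, 0.97, 0.99, 0.99, 0.6, 0.4]:
    print(load, cpu.update(load, has_headroom=True))
```

Moving one step per update, rather than jumping straight to the maximum, mirrors the gradual ramp up and ramp down described above.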

The processor also supports scalable shared memory: memory is distributed to each processor through integrated memory controllers, and processors communicate over high-speed point-to-point interconnects (Intel's QuickPath Interconnect). Specifically, the memory controller sits on the chip itself rather than on a separate chip across the memory bus. This lets the controller see what is happening in the processing pipeline and make fetch decisions based on that tight coupling. The approach can improve performance by making sure data and instructions are ready when they are needed, so that pipeline stalls become less common.
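Distributing memory among processors means that an access to a processor's own memory is faster than one that has to cross the interconnect to another processor's memory. A weighted average is enough to show why keeping data local pays off; the 60 ns and 100 ns latencies below are assumptions chosen for illustration, not measured Nehalem figures.

```python
def average_latency_ns(local_fraction, local_ns, remote_ns):
    """Expected memory latency when a given fraction of accesses hit local memory."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns

# Hypothetical local and remote latencies, for illustration only.
for frac in (1.0, 0.75, 0.5):
    print(frac, average_latency_ns(frac, local_ns=60.0, remote_ns=100.0))
```

Under these assumed numbers, letting half the accesses go remote raises the average latency by a third, which is why operating systems and applications try to place data in the memory attached to the core that uses it.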

HP uses the Nehalem processor primarily for servers. If engineers are willing to invest in a system configured as a server, such a configuration will be comparable to the Dell in memory capacity and bus speeds.

More Power for More Money, with Tradeoffs

The next level of personal supercomputer will typically run a standard operating system that lets you get your routine work done, but can also dispatch specific parts of an application to one or more graphics processors, such as those produced by NVIDIA and ATI (a part of AMD). NVIDIA, in particular, has gone a long way in this direction. With its Tesla processors and CUDA architecture, it has created a platform that lets you run heavy floating-point code on multiple GPUs. In September 2009, NVIDIA announced its new architecture, Fermi, which overcomes some of the limitations of its earlier GPUs, such as relatively weak double-precision performance and the lack of ECC memory support.
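In CUDA's model, the host program launches a kernel over a grid of thread blocks, and each thread computes one piece of the result from its block and thread indices. The Python sketch below emulates that decomposition serially on the CPU for SAXPY (a*x + y); it is not GPU code, and the function names are hypothetical. It only shows how the work is carved up before being dispatched to a graphics processor.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y, out):
    """Work done by one 'thread': compute a single output element."""
    # Global element index, analogous to blockIdx.x * blockDim.x + threadIdx.x in CUDA.
    i = block_idx * block_dim + thread_idx
    if i < n:  # the last block may contain more threads than remaining elements
        out[i] = a * x[i] + y[i]

def launch(n, a, x, y, block_dim=4):
    """Serially emulate a one-dimensional grid launch on the CPU."""
    out = [0.0] * n
    grid_dim = (n + block_dim - 1) // block_dim  # round up to whole blocks
    for b in range(grid_dim):
        for t in range(block_dim):
            saxpy_kernel(b, block_dim, t, n, a, x, y, out)
    return out

print(launch(6, 2.0, [1, 2, 3, 4, 5, 6], [1, 1, 1, 1, 1, 1]))
```

On a real GPU the two loops disappear: every (block, thread) pair runs concurrently, which is where the floating-point throughput comes from. The `if i < n` guard mirrors a common CUDA idiom, since the grid is rounded up to whole blocks.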

At the high end, Intel is once again getting into the picture. The company is scheduled to reveal details of its new Larrabee family, Intel’s first multicore architecture designed for high-throughput applications and featuring a programmable graphics pipeline. It uses multiple x86 processor cores rather than some exotic processor that may be better suited to the task.

The compelling thing about Larrabee is that far fewer code changes will be necessary in order to use existing applications. In fact, all existing applications should be able to use it, albeit not nearly to its potential. Rewriting code to make it more parallel where appropriate will offer occasional dramatic performance gains, but that requires additional development effort.
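Amdahl's law, a standard rule of thumb not mentioned in the article itself, helps explain why such gains are only occasionally dramatic: if a fraction p of a program's run time can be spread across n cores, the best-case speedup is 1/((1 - p) + p/n). A minimal sketch:

```python
def amdahl_speedup(parallel_fraction, workers):
    """Amdahl's law: best-case speedup = 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / workers)

# Speedup on 32 cores for programs that are 50, 90, and 99 percent parallel.
for p in (0.5, 0.9, 0.99):
    print(p, round(amdahl_speedup(p, 32), 1))
```

With 32 cores, a program that is 50 percent parallel speeds up by only about 1.9x, one that is 90 percent parallel by about 7.8x, and one that is 99 percent parallel by roughly 24.4x. The serial portion quickly dominates, which is why rewriting code for parallelism pays off mainly where most of the run time can be parallelized.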

Do Operating Systems Matter?

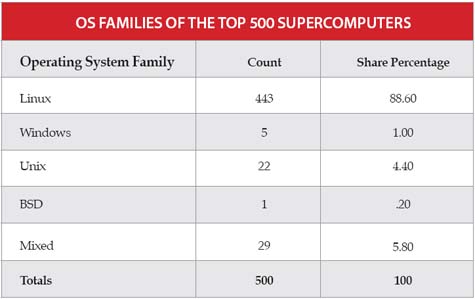

According to a list of the top 500 supercomputers on the planet today (500.org/list/2009/06/100), 443 are running Linux. Does that mean Linux is the fastest OS? Well, no. But there is no question that the operating system can have an influence, depending on your application.

Microsoft, for example, was happy to note at last year's Supercomputing Conference that Windows Server 2008 was used on one of the fastest computers on the top 500 list. But the OS likely plays such a minor role that being able to easily run the applications you need is more important. If your application consists of many threads, you may want to look at an OS that does fast context switching. Of course, you’ll also want to make sure that your applications are available on the OS you choose.
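One rough way to compare how quickly different operating systems switch between threads is a ping-pong test, in which two threads repeatedly wake each other. The Python sketch below is illustrative only and the function name is hypothetical: interpreter overhead and Python's global interpreter lock inflate the numbers, so treat the results as a relative comparison between operating systems on the same hardware, not as a true context-switch cost.

```python
import threading
import time

def handoff_latency_us(rounds=2000):
    """Average time (microseconds) for one round trip of control between two threads."""
    ping, pong = threading.Event(), threading.Event()

    def responder():
        for _ in range(rounds):
            ping.wait()   # sleep until the main thread signals
            ping.clear()  # reset before replying so the next signal is not missed
            pong.set()    # wake the main thread

    worker = threading.Thread(target=responder)
    worker.start()
    start = time.perf_counter()
    for _ in range(rounds):
        ping.set()    # wake the responder
        pong.wait()   # sleep until it replies
        pong.clear()
    elapsed = time.perf_counter() - start
    worker.join()
    return elapsed / rounds * 1e6

print(f"{handoff_latency_us():.1f} us per round trip")
```

Each round trip forces at least two thread wake-ups, so an OS that schedules and switches threads quickly should report lower numbers on the same machine.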

In the Market?

If you’re looking to buy a desktop supercomputer, you must first assess your needs. If your primary need is to run standard design applications, the lowest level of desktop power is probably fine.

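If you need more power and can rewrite and recompile your source code to take advantage of a coprocessor, a GPU-based system is worth a look. If you need still more, or if you don't have source code that can easily be rewritten and recompiled, look toward a new architecture like Intel’s Larrabee. It will take a little longer to get there and cost a bit more, but you will get the power of a true personal supercomputer.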

Contributing Editor Peter Varhol covers the HPC and Engineering IT beat for DE. His expertise is software development, systems management, and math systems. Send comments about this column to [email protected].
