AR-VR: Beyond Joysticks and Touchscreens

Voice command, hand gesture, texture mimicry and other advances bring a greater touch of naturalism to AR-VR.

RealWear lets you use voice control to operate its hands-free AR system. The company has a strategic agreement with Honeywell to cobrand and sell the RealWear AR systems. Image courtesy of RealWear.

December 19, 2018

How do you interact with 3D models of products that only exist as polygons and pixels? The current generation uses the mouse and the keyboard, a paradigm that’s anything but natural.

The emergence of augmented reality (AR) and virtual reality (VR) systems represents an opportunity to redefine human-pixel interaction, but early implementations wrestled with less-than-ideal control systems. The joysticks and game-style controllers adopted by many AR-VR systems are well designed for common game actions: a trigger-like button for shooting games, four directional buttons or a steering stick for avatar movement, or sturdy buttons to pound on during fight sequences. But they’re poorly suited to duplicating the more delicate and complex actions involved in, say, manufacturing installation, engine disassembly or operating a tractor.

The new wave of AR-VR apps, however, comes much closer to mimicking the actions they depict, bringing the technology a few steps closer to real-world fieldwork, enterprise training and plant operations.

Tech Soft 3D added AR-VR support in its HOOPS developer kit, incorporated into many standard CAD and 3D design software programs. Image courtesy of Tech Soft 3D.

Is Your AR System a Good Listener?

In April 2018, Greenlight Insights, an analyst firm tracking the AR-VR market, published a report titled “XR in Enterprise Training,” with “X” standing in for the unspecified variations of reality mimicry (mixed, virtual, augmented and extended reality).

“Industries as diverse as automotive manufacturing, consumer retail and healthcare delivery are using virtual and augmented reality technology to realize productivity gains and invent service models. Organizations that succeed in harnessing XR-enabled training will lead. Those that don’t may be at a serious disadvantage,” warns Greenlight Insights.

Last September, at the Virtual Reality Strategy (VRS) Conference hosted by Greenlight Insights, Tom Dollente, director of Product Management at RealWear, sat on a panel that explored the use of AR for frontline workers. (Editor’s note: DE Senior Editor Kenneth Wong moderated the panel.) RealWear’s products, the HMT-1 and HMT-1Z1, use a head-mounted camera and a small projector mounted before the right eye. Designed for hands-free use, they employ voice control for user input, command and selection. Users may attach them to a hard hat for deployment on construction sites and in other hazardous zones.

“We learned a lot while working to perfect our voice-recognition algorithm,” says Dollente. “We fine-tuned our system so it can detect the direction of the voice. If someone nearby happens to utter a command phrase (such as Home), the headset won’t execute it. We also learned to use commands like ‘Terminate,’ which has distinct consonants, highly unlikely to be confused with other words, and is not a phrase someone might say frequently.”

Such considerations are important, as the user will be wearing the RealWear system and going about their daily routines. Therefore, the system needs to be able to distinguish legitimate verbal commands from background noises, nearby conversations and the user’s incidental conversations with coworkers.
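The filtering Dollente describes can be pictured as a simple gate in front of the command handler. The sketch below is only an illustration, not RealWear’s implementation: the `Utterance` fields, the angle and confidence thresholds, and the two-word vocabulary are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical command vocabulary; "home" and "terminate" are the
# examples quoted in the article, not a real product's command list.
COMMANDS = {"home", "terminate"}


@dataclass
class Utterance:
    text: str          # phrase returned by the speech recognizer
    angle_deg: float   # estimated direction of the voice; 0 = the wearer
    confidence: float  # recognizer confidence in [0, 1]


def should_execute(u: Utterance,
                   max_angle_deg: float = 20.0,
                   min_confidence: float = 0.8) -> bool:
    """Return True only for a command clearly spoken by the wearer."""
    phrase = u.text.strip().lower()
    # The whole utterance must be a command, so a command word buried
    # in ordinary conversation ("let's go home") is ignored.
    if phrase not in COMMANDS:
        return False
    # Reject voices arriving from outside a narrow cone around the wearer.
    if abs(u.angle_deg) > max_angle_deg:
        return False
    # Reject low-confidence matches, such as speech masked by noise.
    return u.confidence >= min_confidence
```

With these assumed thresholds, “Home” spoken by the wearer passes the gate, while the same word from a coworker standing off to the side does not.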

At November’s Autodesk University conference in Las Vegas, John SanGiovanni, CEO and cofounder of Visual Vocal, demonstrated the latest incarnation of his company’s mobile VR app with a voice memo feature.

“Suppose you’re at a construction site, and you’d like to add a voice memo for your team, discussing the issue you have with the location of a certain door,” he says. “You can launch the Visual Vocal app, then use our unique inking method to annotate and record your message.”

Visual Vocal doesn’t provide its own means to capture an immersive environment for playback; instead, the app works with content from other reality capture apps such as Google’s Cardboard app (free; available for iOS and Android devices). The company uses Chirp, a partner technology, to bring mobile devices into the same VR collaboration session in real time, bypassing the need to email a link or transfer a file for shared viewing.

SanGiovanni refers to the cloud-hosted Visual Vocal annotations as VV, and the Visual Vocal-style multi-user collaboration sessions as Mind Merge (terms that he hopes will catch on). This approach allows users to embed floating, finger-drawn 3D markups, along with voice messages that can be played back by the collaborators they choose. Visual Vocal’s current promotional materials emphasize architecture, engineering and construction (AEC), but the app can be adapted to factory floors, manufacturing plants and other sites for the same purpose and workflow.

The Key is in Your Hand

An exhibitor at the VRS Conference, uSens, says the key to a more natural interaction in AR-VR is hand tracking. Accordingly, it uses computer vision to recognize and interpret hand gestures and movements that humans naturally use in communication (such as a raised thumb for a sign of approval) and object manipulation (for example, shaping clay with bare hands or striking nails with a hammer).

“We have two solutions for that. One uses your mobile phone’s color camera and doesn’t need any additional hardware,” says Dr. Yue Fei, cofounder and CTO at uSens. “Another is our own hardware, called Fingo [a kit that includes stereo cameras].”

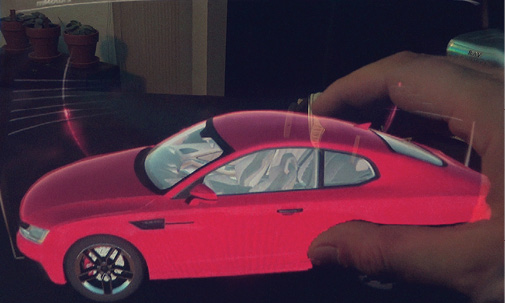

Meta lets you interact with hologram-like digital models using natural hand motions. Image courtesy of Meta.

The human hand is a soft-tissue object, which makes it difficult for a computer to recognize. uSens therefore tracks the features that are easiest to identify: the finger joints.

“Our algorithm acts like [an] X-ray; it can see through the skin and select the bone joints,” says Fei.
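Once joint positions are available, a gesture such as the raised thumb mentioned earlier can be read from simple geometry. The following is a minimal sketch under stated assumptions, not uSens’ algorithm: the joint names, the convention that +y points up, and the distance rules are all hypothetical.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float, float]
FINGERS = ("index", "middle", "ring", "pinky")


def is_thumbs_up(joints: Dict[str, Point]) -> bool:
    """Classify a thumbs-up from tracked joints (assumed +y is up)."""
    wrist = joints["wrist"]
    base, tip = joints["thumb_base"], joints["thumb_tip"]
    # Thumb extended: the tip sits above its base and farther from the wrist.
    thumb_up = (tip[1] > base[1]
                and math.dist(tip, wrist) > math.dist(base, wrist))
    # Other fingers curled: each tip is closer to the wrist than its knuckle.
    curled = all(
        math.dist(joints[f"{f}_tip"], wrist)
        < math.dist(joints[f"{f}_base"], wrist)
        for f in FINGERS
    )
    return thumb_up and curled
```

A production system would more likely learn such rules from labeled data than hand-code them, but the input is the same: a set of joint coordinates per frame.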

Pure computer vision, as it turns out, is insufficient for hand tracking, especially in instances where the angle of the hand obscures some of the fingers. By applying machine learning, uSens refines its algorithm to determine the theoretical positions of the joints, even when some of the fingers are invisible to the camera.
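To make the idea of estimating hidden joints concrete, here is a deliberately simple stand-in for a learned model: a fixed hand template is shifted to match the joints the camera can see, and the occluded joints are read off the shifted template. The template coordinates and joint names are hypothetical, and a trained model would replace the fixed template with a pose predicted from data.

```python
from typing import Dict, Optional, Tuple

Point = Tuple[float, float, float]

# Hypothetical rest-pose template (arbitrary units) for one finger.
TEMPLATE: Dict[str, Point] = {
    "wrist": (0.0, 0.0, 0.0),
    "index_base": (1.0, 4.0, 0.0),
    "index_mid": (1.0, 6.0, 0.0),
    "index_tip": (1.0, 8.0, 0.0),
}


def fill_occluded(observed: Dict[str, Optional[Point]]) -> Dict[str, Point]:
    """Estimate occluded joints (given as None) from the visible ones."""
    visible = {k: p for k, p in observed.items() if p is not None}
    if not visible:
        raise ValueError("need at least one visible joint")
    n = len(visible)
    # Average displacement between the visible joints and the template.
    offset = tuple(
        sum(visible[k][i] - TEMPLATE[k][i] for k in visible) / n
        for i in range(3)
    )
    result: Dict[str, Point] = {}
    for name, rest in TEMPLATE.items():
        if name in visible:
            result[name] = visible[name]  # trust the camera where it can see
        else:
            result[name] = tuple(rest[i] + offset[i] for i in range(3))
    return result
```

This translation-only alignment ignores rotation and finger bending, which is exactly the gap a machine-learning model is meant to close.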

Feeling Surfaces

In the exhibit area of VRS, with a mix of actuator-equipped gloves and camera tracking, Miraisens Inc. demonstrated how its technology could introduce haptic feedback to the AR-VR experience. Miraisens’ patented technology was developed in Japan by Norio Nakamura, who founded the company in 2014 and currently serves as its chief technology officer.

The technology is designed to simulate sensations when users interact with digital objects. In a published technical paper, the company explains: “If the skin nerve is properly stimulated, the stimulation signal is sent to the brain and then generates haptics illusion [....] as a method of performing this stimulation, any kind of physical quantity such as vibration can be used.”

Draw with Hand Waves

The rise of consumer tablets and powerful professional tablets prompted many CAD developers to rethink how the sketching environment works inside their modeling programs, but now a new challenge may be on the horizon: identifying the best way to sketch inside AR-VR environments.

Gravity Sketch says it has the answer. The company developed a drawing program that lets users draw inside virtual 3D spaces. Using AR-VR controllers and touch technologies, users drag and pull on colored lines and curves to create sketches, surfaces and objects.

Color, brush size and brush style adjustments are facilitated via a floating virtual palette. The system offers IGES export to bring the design into CAD programs for further refinement and parametric design. The program currently works on the HTC Vive and Oculus headsets.

The Road Ahead

The demand for better human-pixel interaction has spawned many innovative solutions that duplicate how people work in the real world, but limitations remain. Voice command has gotten much better at coping with background noise, but when it comes to operating instructions (opening a file, extruding a model or adjusting the length of a line), few companies have managed to implement natural language support that mimics the way users can talk to Apple’s Siri or Amazon’s Alexa.

AR-VR systems with hand-gesture recognition let users perform more natural actions, such as gripping a virtual steering wheel to navigate a car or lifting a digital object with their palm. But many lack proper haptic feedback. That means users can’t feel the weight of the virtual engine they’re learning to disassemble or the texture of a virtual car’s interior. These sensations may not be important for sales presentations, but in training applications that require building muscle memory and adjusting force and pressure based on feedback, they are indispensable. Overcoming these hurdles will make AR-VR a much stronger rival to costly physical training facilities.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
