Digitalization Reveals Product Data Management Gaps

Vendors are redefining data management capabilities to address the diversity and large-scale data requirements of the digital thread.

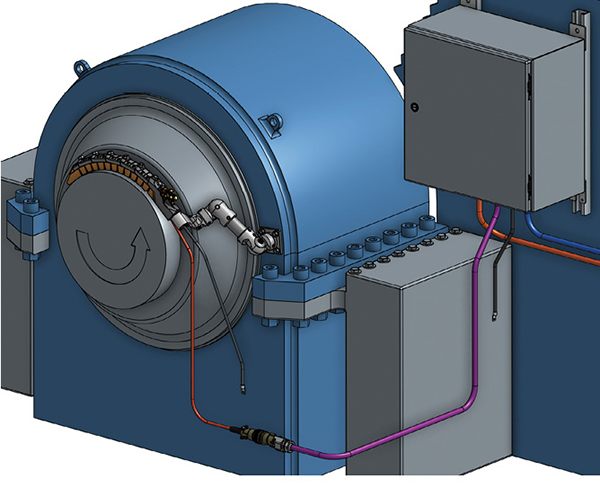

Onshape’s built-in data management capabilities allow Cutsforth to accelerate its product development cycle, including for its Shaft Grounding System. Image courtesy of Cutsforth.

July 1, 2019

At Cutsforth Inc., a maker of power industry parts and systems, product development used to be a game of hurry up and wait. Using traditional CAD and product data management (PDM) systems, engineers typically were constrained by frequent system crashes and a burdensome check-in and check-out process that took too much time to manage while hampering design collaboration.

Of greater concern was the ripple effect that stops and starts had on the overall development process. Accelerating time to delivery of its brush holders and shaft grounding solutions is crucial for the Cutsforth engineering team as it strives to help power generation customers steer clear of any risk of downtime.

“Every time we’d open up a part, we’d have to interface with some completely different [PDM] program, which pulls you out of the workflow,” explains Spencer Cutsforth, an R&D engineering technician at the company. “If you can reduce the amount of time spent pausing and breaking the workflow, you can move forward much more quickly.”

Cutsforth eventually swapped out its traditional CAD and PDM system for Onshape, a cloud-based CAD platform that has integrated data management capabilities. Onshape is built on a new big data-styled database model that supports myriad forms of data and flexible schema, unlike many old-school PDM platforms, which use structured relational database architectures as their core foundation.

As manufacturers continue down the path of digitization, traditional PDM systems are buckling under the weight of stitching together larger CAD models, high-fidelity simulations, electronic CAD files and digital twin data models. These larger, more complex systems models need to be managed in the context of linkages to multiple design, document and simulation applications; have intricate relationships and interdependencies; and require highly sophisticated search capabilities beyond what’s been widely available in conventional PDM and CAD management solutions.

“The gradual digitization of more and more pieces of what we would like to store, manage and keep safe has grown,” notes Stew Bresler, senior product manager for NX and Teamcenter integration at Siemens PLM Software. “The number of references and relationships that we have to track so we can pull in the right pieces of data has become far more complex.”

The Evolution of PDM

The conventional paradigm for PDM systems as well as other CAD data management techniques, including shared network files or Excel spreadsheets, has been a file-based approach. However, 3D models and related data stored in a single file share or Excel spreadsheet can be easily lost, corrupted or overwritten and are highly insecure.

PDM systems were designed to tackle many of these issues, but the architecture still maintains models and product data in a file-based format, albeit with metadata to help track where files are kept along with a summation of what’s inside to provide a level of visibility.

With this approach, granular pieces of information become harder to find as the volume and variety of product-related materials scale and more of the requisite data is managed outside of PDM software. Moreover, PDM systems rely on a check-in and check-out model for version control. That single process obstructs an efficient engineering workflow because only one user can work on a given file at a time while everyone else is locked out.

This user restriction promotes a serial, not parallel, design workflow, which often causes bottlenecks and delays.
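The locking behavior described above can be illustrated with a minimal sketch. It is a toy model, not the API of any real PDM product: the `FileVault` class, its method names and the file name are all hypothetical.

```python
class FileVault:
    """Toy file-based vault: only one user may hold a file's check-out at a time."""

    def __init__(self):
        self._locks = {}  # file name -> user currently holding the check-out

    def check_out(self, name, user):
        holder = self._locks.get(name)
        if holder is not None and holder != user:
            return False  # someone else has the file, so this user must wait
        self._locks[name] = user
        return True

    def check_in(self, name, user):
        if self._locks.get(name) != user:
            raise PermissionError(f"{user} does not hold {name}")
        del self._locks[name]  # release the file for the next user


vault = FileVault()
first = vault.check_out("bracket.prt", "alice")        # alice gets the file
blocked = vault.check_out("bracket.prt", "bob")        # bob is locked out
vault.check_in("bracket.prt", "alice")                 # alice releases it
after_release = vault.check_out("bracket.prt", "bob")  # only now can bob proceed
```

Because the lock covers the whole file, the second engineer waits even if the two planned changes would never have touched the same geometry.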

“Everything doesn’t have to be in a monolithic part file,” notes Bresler. “It bloats everything, makes it difficult to work and makes scalability hard.”

To address the issue, Siemens’ Teamcenter PLM platform supports a common data model and linked data framework that spans cross-domain engineering functions such as ECAD, software and wire harness integration. The company’s 4th Generation Design (4GD) software delivers a more flexible component-based design paradigm for working with large-scale product data.

Although 4GD is currently targeted at the shipbuilding industry where designs encompass millions of parts, Siemens is evaluating how the technology can be applied to handle large-scale data management problems for other industry-specific use cases, Bresler says.

At Autodesk, interoperability between Vault, its on-premises PDM system, and Fusion 360 is designed to mitigate some of the data management challenges associated with traditional PDM. The Desktop Connector for Fusion allows design teams to integrate an Autodesk data source like Vault with the cloud platform for streamlined collaboration without the constraints of traditional check-in and check-out processes.

At the same time, Autodesk AnyCAD, which allows any type of CAD data to be integrated into Inventor and Fusion 360 without the need for file translation, facilitates a data pipeline that promotes collaboration and aids in data management.

“We’re not looking at products as independent, but rather as a collaboration story,” notes Martin Gasevski, senior product manager for Autodesk Fusion 360. “Instead of the classic point-to-point integrations, we are trying to automate [the process] with a digital pipeline.”

Newcomer Onshape is turning the traditional paradigm on its head with its cloud-based approach to CAD and integrated data management capabilities. A typical file-based system stores data in a single “lump,” making it hard to change any one individual piece of data. With a database-driven design, data is not stored in big lumps, but in small chunks. That allows engineers to work on a design simultaneously because what is changed on one area of the design does not affect the rest of the model.
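As a rough illustration of the "small chunks" idea, the sketch below stores each design feature as its own record with a revision number, so edits to different features never collide. This is an assumed, simplified model and not a description of Onshape's actual database. The `ChunkedDesign` class and the feature names are hypothetical.

```python
class ChunkedDesign:
    """Toy design store: each feature is a separate record with its own revision."""

    def __init__(self, features):
        # feature name -> (revision number, value)
        self._chunks = {key: (0, value) for key, value in features.items()}

    def read(self, key):
        return self._chunks[key]  # returns (revision, value)

    def write(self, key, base_rev, value):
        rev, _ = self._chunks[key]
        if rev != base_rev:
            return False  # conflict: this same chunk changed since it was read
        self._chunks[key] = (rev + 1, value)
        return True


design = ChunkedDesign({"hole_diameter_mm": 6.0, "plate_thickness_mm": 3.0})

# Two engineers read different features of the same design at the same time.
rev_hole, _ = design.read("hole_diameter_mm")
rev_plate, _ = design.read("plate_thickness_mm")

alice_ok = design.write("hole_diameter_mm", rev_hole, 8.0)    # accepted
bob_ok = design.write("plate_thickness_mm", rev_plate, 4.0)   # accepted, different chunk
stale = design.write("hole_diameter_mm", rev_hole, 10.0)      # rejected, stale revision
```

Only a second edit to the same chunk based on an outdated revision is rejected. Work on other parts of the design proceeds without any lock.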

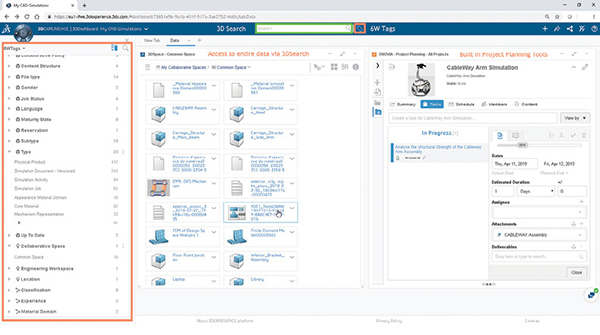

Advancing a model-based and platform approach is part of how Dassault Systèmes is addressing many long-standing data management limitations. A common data model accessed by a range of apps as opposed to point solutions accommodates different data types, including structured (CAD or simulation models) and non-structured data (social collaboration feedback).

“Everything is model-based—there is no file check-in or check-out and no multiple versions or copies of data,” notes Srinivas Tadepalli, SIMULIA Strategic Initiatives and director of strategic initiatives for cloud at Dassault Systèmes. “Everyone is working on the same data model so there is no discontinuity in the digital thread.”

Simulation Raises the Stakes—Again

Data management gets even trickier when new data types like simulation models get thrown into the digital thread. Typically, simulation has been handled as a separate process, in part because it has been governed by a different user base and because it involves a wide assortment of physics applications and file types, often coming from different vendors. As a result, the unique needs of simulation tax traditional data management capabilities.

“A single engineering workflow can involve tools from multiple vendors, each with its own data format that must be transferred from one application to the next and with full traceability from the simulation results back to the upstream requirements and CAD designs,” says Andy Walters, director, software development at ANSYS. “Workflows are often automated and replayed in optimization loops that greatly amplify any inefficiencies in file storage, retrieval and translation, and large files exacerbate what is already a complex process management problem.”

One way ANSYS is avoiding storage waste is by promoting reuse of existing CAD and CAE models through sophisticated search capabilities. For example, the ANSYS Simulation PDM (SPDM) system supports flexible keyword and property search, with filtering based on model and project type, user or team ownership or additional parameters.

“Search is only possible if the system automatically extracts simulation properties and other metadata from archived files, if it allows for custom fields and if it works equally well for all commercial and in-house file types,” Walters says.
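The kind of search Walters describes, keyword matching combined with filters on extracted or custom properties, might look something like the following sketch. It does not reflect the ANSYS SPDM interface. The `SimRecord` type, the `search` function and every field name are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SimRecord:
    """Metadata assumed to have been extracted from one archived simulation file."""
    name: str
    model_type: str
    owner: str
    properties: dict = field(default_factory=dict)  # custom fields, any file type


def search(records, keyword="", **filters):
    """Return names of records matching the keyword and every property filter."""
    keyword = keyword.lower()
    hits = []
    for rec in records:
        if keyword and keyword not in rec.name.lower():
            continue
        # Built-in and custom fields are searched the same way.
        fields = {"model_type": rec.model_type, "owner": rec.owner, **rec.properties}
        if all(fields.get(key) == value for key, value in filters.items()):
            hits.append(rec.name)
    return hits


archive = [
    SimRecord("bracket_modal", "structural", "team-a", {"solver": "in-house"}),
    SimRecord("bracket_thermal", "thermal", "team-b"),
    SimRecord("housing_modal", "structural", "team-a"),
]

by_keyword_and_type = search(archive, keyword="bracket", model_type="structural")
by_owner = search(archive, owner="team-a")
by_custom_field = search(archive, solver="in-house")
```

The search works uniformly only because every record carries the same metadata envelope regardless of which tool produced the underlying file, which is the precondition the quote points to.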

Aras is addressing the simulation data management wrinkle with its acquisition of Comet, a vendor-neutral SPDM process automation platform. Aras is incorporating the Comet data model as an integral part of Aras Innovator. In addition, Innovator’s federated services approach allows the Aras database to connect with external data sources via a link or file connection without extracting specific data from them.

“There are a number of areas that require data to be distributed and managed in multiple pools and this ends up being big data,” explains Malcolm Panthaki, Aras’ vice president of analysis solutions. “You need a way to seamlessly and securely deal with information distributed within multiple data stores … because we don’t want the digital thread to be broken.”

In the end, successful data management in the age of the digital thread may have less to do with technology and more to do with getting engineering organizations to change the way they work.

“The biggest challenge is not the technology—it’s the fundamental process change for how customers have typically done their business,” says Mark Fischer, director, product management and partner strategy at PTC.

About the Author

Beth Stackpole is a contributing editor to Digital Engineering. Send e-mail about this article to [email protected].
