Making Digital Thread Work for You

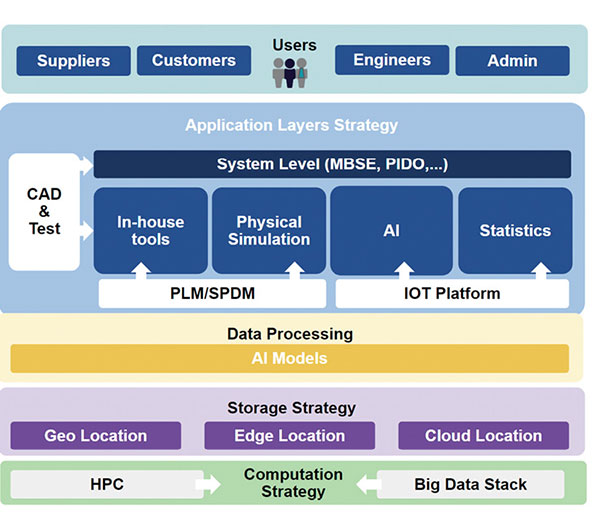

Digital thread initiatives may require a mix of on-premise and cloud-based compute resources.

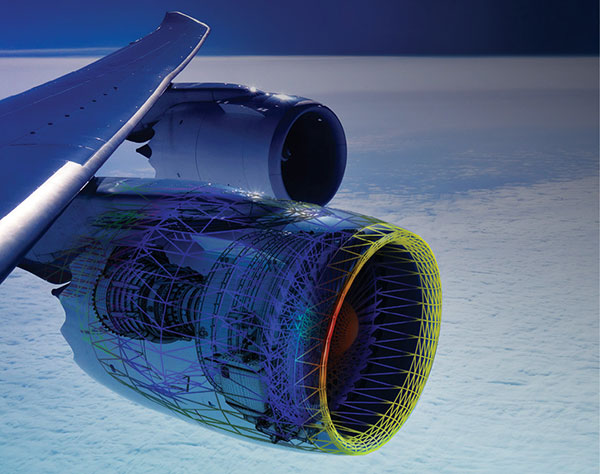

The magic of digital thread is that it sews together data from the design, manufacture and maintenance spheres, providing a single version of the truth that promises to mitigate the challenges posed by the complexity of today’s product development processes. Image courtesy of Rescale.

March 1, 2020

The evolution of digital thread is still very much in flux. A number of essential components have yet to be clearly defined, and industry leaders and technology providers still have to agree on the best way to implement the concept to enable broad adoption. As a result, engineers are often left with as many questions as answers.

Some big issues that challenge companies interested in implementing digital thread include questions like: What is the best scale for the initial stages of a digital thread initiative? Is it practical to expect most companies to use on-premises resources to collect the relevant data and extract knowledge via big data techniques, or must they turn to cloud-based systems and services? What type of compute resources must companies bring to bear to handle the data that comes into play when implementing a digital thread, and what role does high-performance computing (HPC) play?

The answers certainly are not etched in stone. A look at today’s practices and tools, however, does give engineers an idea of the current state of the technology and offer a glimpse of what may come tomorrow.

The Concept

Just one of several buzzwords to emerge from the convergence of big data and the drive toward digitization, “digital thread” refers to a communication framework that connects data flows to create a holistic view of an asset’s data throughout its lifecycle (Fig. 1). The overall goal is to eliminate silos of information that so far have hindered data exchange between disparate software systems.

By aggregating all design, manufacturing, performance, supply chain and maintenance data of an asset, the digital thread aims to provide the means to deliver the right information, to the right place, at the right time. This translates into providing engineers and companies with the ability to access, integrate, analyze and transform data from all relevant systems into actionable information.

This digital mechanism promises to revolutionize design, manufacturing and supply chain processes by supporting more effective assessment of an asset’s current and future capabilities; enabling early discovery of performance deficiencies; opening the door for continuous refinement of designs and models; and optimizing manufacturability.

Bottom line? Advocates contend that the digital thread will mitigate the challenges posed by the complexity of today’s product development processes.

Where Does the Data Come From?

To construct a digital thread, engineers tap two groups of data. The first consists of “digital definitions data,” provided by domain platforms like product lifecycle management (PLM), application lifecycle management, manufacturing operations management and enterprise resource planning (ERP) systems.

“PLM systems source most of these digital definitions,” says Dave Duncan, vice president, product management, at PTC. “ERP/CRM orders, product clouds and other sources provide the lighter weight product properties, like model numbers and options. PLM retrieves configuration-specific detail from these product properties by decoding ‘recipes’ of overloaded design definitions, such as CAD, BOM [bill of materials] and process plans.”

The second data group contains information pertaining to the physical experiences of products and processes. This data is often sourced by Internet of Things (IoT) platforms, which provide sensor-based activity data on business system activity, such as manufacturing histories, field dispatches, maintenance work orders and warranty claims.

Basic Implementation Steps

There are several phases to building a digital twin. The first step calls for the engineer to create a model, or digital representation, of one or more of the physics domains that govern the product or system.

The development team then gathers a set of data to feed the model, using different sources, which range from IoT-device sensor data to traditional data sources like comma-separated value (CSV) files. Everything that will enrich the model and add value is welcome.

The challenge then becomes stitching the disparate types of data together using a digital thread. This represents the glue that allows the engineer to connect the right data with the right model or models.
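As a rough illustration of this stitching step, the sketch below joins design records from a CSV export with IoT sensor readings that share a serial number. The field names (`serial`, `model`, `max_temp_c`, `temp_c`), the sample data and the `stitch` helper are all hypothetical, chosen only for illustration, and do not reflect any vendor's schema or API.

```python
import csv
import io
from collections import defaultdict

# Hypothetical CSV export of as-designed records (e.g., from a PLM system).
DESIGN_CSV = """serial,model,max_temp_c
A100,pump-x,80
A101,pump-x,80
"""

# Hypothetical IoT sensor readings keyed by the same serial number.
SENSOR_READINGS = [
    {"serial": "A100", "temp_c": 72.5},
    {"serial": "A100", "temp_c": 85.0},
    {"serial": "A101", "temp_c": 60.0},
]


def stitch(design_csv, readings):
    """Link each design record to the sensor readings that share its serial."""
    by_serial = defaultdict(list)
    for reading in readings:
        by_serial[reading["serial"]].append(reading["temp_c"])

    thread = {}
    for row in csv.DictReader(io.StringIO(design_csv)):
        temps = by_serial.get(row["serial"], [])
        limit = float(row["max_temp_c"])
        thread[row["serial"]] = {
            "model": row["model"],
            "max_temp_c": limit,
            "temps_c": temps,
            # Flag units whose field data exceeds the design limit.
            "over_limit": any(t > limit for t in temps),
        }
    return thread


thread = stitch(DESIGN_CSV, SENSOR_READINGS)
```

The shared key does the work here: without an identifier common to both sources, the design data and the field data cannot be connected, which is why the choice of key tends to be an early design decision.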

All of the aforementioned data must be supported by a set of tools that combines CAE, IoT and advanced analytics that run on top of the infrastructure. This, in turn, calls for a transversal layer of security for the whole chain.

These, however, are only the first steps toward reaping the full value of digital thread.

“So far, the industry has been moving in one direction, getting everything in a single digital environment,” says Alvaro Everlet, senior vice president, IoT platform, Altair Engineering. “Now it’s time to use that privileged point of view to turn it into value that translates into insights or actions that the analytics tools can provide.”

Start Small

In general, all digital thread implementations follow these standard steps. That said, every implementation is shaped by application-specific requirements.

Often, the foremost of these requirements are process constraints rather than technology limitations. To avoid problems later in the development process, address these obstacles holistically, early in the initiative.

A chief challenge involves determining the ideal scale of the initiative. This requires identifying priority use cases and determining what data is required to support them. It’s best to start with a subset of potential digital twins, and stitch together only what you really need.

“We’ve worked with customers that initially tried to extract every piece of product and process data they could find in their systems,” says Duncan. “They then link them together and tell their app developers, ‘Here’s everything we have. So go get whatever you need.’ Not only were these budget-busting implementations, but the end result was too complex for the organization to utilize. If it requires data scientists to query a digital thread, it’s a miss.”

Starting small allows development teams to deploy the digital thread quickly and to achieve value early, but engineers should also avoid boxing themselves into a corner. Early on, they should plan how they are going to iterate.

This requires considering two dimensions. The first is use case coverage, which involves expanding the data and query paths. Ensure these anticipated data and paths will gracefully layer onto the digital thread foundation.

The second dimension is integrating capability within the existing use cases, from descriptive to predictive to prescriptive value. Descriptive results return data fields specific to a product or process, in the context of the user and task. A lightweight digital thread that keeps most of the data in place serves descriptive value well. Keeping data in place inherently secures it, too: Users can access only the data that they can reach directly via the source applications.
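One way to picture a lightweight, data-in-place thread is as a set of links rather than copies. In the minimal sketch below, the thread stores only (system, record ID) pairs, and each lookup is filtered by the access rights the source application would enforce. The systems, records, users and the `describe` function are hypothetical stand-ins, not a real product interface.

```python
# Hypothetical source systems; in practice these would be PLM, ERP, etc.
SOURCES = {
    "plm": {"P-1": {"cad_rev": "C"}},
    "erp": {"O-9": {"order_qty": 40}},
}

# Hypothetical per-user access rights, as enforced by each source application.
ACCESS = {"alice": {"plm", "erp"}, "bob": {"plm"}}

# The thread holds only links (system, record id), not copies of the data.
THREAD = {"A100": [("plm", "P-1"), ("erp", "O-9")]}


def describe(user, serial):
    """Return the fields the user may see for one asset, fetched in place."""
    result = {}
    for system, record_id in THREAD.get(serial, []):
        if system not in ACCESS.get(user, set()):
            continue  # the source application would deny this read
        result.update(SOURCES[system][record_id])
    return result
```

Under these assumptions, `describe("bob", "A100")` returns only the PLM fields, because the thread never holds a copy of the ERP record that could bypass the source system's permissions.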

Predictive and prescriptive results involve heavier data lifting and more robust security structures to calculate similarities, analyze outcomes and apply machine learning.

Value of Local Computing Resources

Perhaps one of the biggest digital thread considerations revolves around the compute resources required to ensure a successful initiative. The question becomes: Can most companies succeed using on-premises resources to collect all the relevant data and extract knowledge, or must companies turn to cloud-based systems and services to achieve digital thread’s full value?

Like most other digitalization initiatives, no single answer fits all situations. For development teams to make the right choice, they have to weigh a number of issues.

For example, the local, or on-premises, approach has great appeal when issues like regulatory compliance and latency play key roles.

“Many digital thread initiatives incorporate some level of field sensor data or OT [operational technology] factory data, so it will be necessary to ensure that investments are made in extending the enterprise IT capabilities to the edge and to recognize the cost and effort required to link IT and OT systems,” says Rick Arthur, senior director of digital engineering at GE Research. “Real-time and latency-sensitive data and processing will likely reside on edge/IoT and in dedicated [on-premises] systems.”

Opting for the Cloud

Although arguments can be made for the on-premises approach, the scale, dynamic nature and workloads of digital thread initiatives increasingly draw engineers and companies to the cloud. In general, most processing-intensive and highly variable workloads benefit from the elastic qualities of a cloud infrastructure.

“[Digital thread] workload demands are almost always nonlinear, and having the ability to burst capacity—while incurring a temporary, incremental cost—will meet tight business requirements with a significantly lower [total cost of ownership],” says Taylor Newill, senior manager of product management at Oracle.

“User-friendly deployment automation options, along with on-demand burst infrastructure capacity, allow for fast, easy and low-cost options for testing new software versions and performing additional process iterations on large data sets. In the cloud, it’s a trivial exercise to snapshot terabytes of data on-demand, replicate a complete production compute environment, test version changes, play back logged activities during an investigation, or perform additional analytics without impacting production activities,” he adds.

Cloud infrastructures also perform well in supporting digital thread initiatives that call for agile enterprise-scale access to specialized compute resources. Rather than restricting or prioritizing access to on-premises resources based on limited hardware capacity, the cloud promises to make it possible to address demand in a timelier manner.

“When it comes to ease of scalability for the digital thread, we think it is more realistic for companies to turn to cloud-based systems and services,” says Duncan. “Engaging with a cloud-based system or service allows for a more flexible and agile process. The information making up the digital thread is not fixed, and the means by which it is used should not be either. Additionally, as a digital thread iterates, suppliers and other partners often participate directly, which is much easier and [more] secure to broker with cloud approaches than corporate intranets.”

This type of flexibility becomes particularly important when the digital thread’s development process takes on a global scale.

“Today’s organizations have engineering centers and customers distributed across the world, and the complexity of the supply chain is increasing,” says Fanny Treheux, director of industry solution marketing at Rescale.

A Little Bit of Both

Digital thread developers don’t have to limit themselves to either on-premises computing infrastructures or cloud-based systems and services. Today, a growing number of companies are choosing to have it both ways (Fig. 2).

“Increasingly, organizations are adopting hybrid infrastructures that can leverage either local or cloud resources through technology like cloud bursting, depending on the workload requirements,” says Everlet. “For example, an organization using digital thread physics-based models may work with data-driven models in the cloud to define an algorithm that can be run in a local environment for low-latency decisions.”
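The bursting idea can be sketched as a simple placement rule: fill local capacity first, giving latency-sensitive work priority, and send whatever does not fit to the cloud. The `Job` class, the `place_jobs` function, the job names and the core counts below are hypothetical, and real schedulers weigh many more factors (data location, cost, licensing).

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    cores: int
    latency_sensitive: bool = False


def place_jobs(jobs, local_cores):
    """Assign each job to 'local' or 'cloud' using a simple bursting rule."""
    placement = {}
    free = local_cores
    # Latency-sensitive jobs get first claim on local capacity.
    for job in sorted(jobs, key=lambda j: not j.latency_sensitive):
        if job.cores <= free:
            placement[job.name] = "local"
            free -= job.cores
        else:
            # Burst to the cloud when local capacity runs out. (Simplification:
            # a latency-sensitive job that does not fit is also sent here.)
            placement[job.name] = "cloud"
    return placement


jobs = [
    Job("batch-sim", cores=64),
    Job("edge-control", cores=8, latency_sensitive=True),
    Job("nightly-analytics", cores=32),
]
placement = place_jobs(jobs, local_cores=48)
```

With 48 local cores, the small latency-sensitive job and the 32-core analytics job stay on premises, while the 64-core simulation bursts to the cloud.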

This approach allows companies to maximize the benefits they derive from IoT assets while meeting their need to craft digital threads that operate well on a global scale.

“Depending on regulatory, security and process needs, we see companies deploying cloud and non-cloud solutions for different domains,” says Johan Grape, senior director of technology and innovation at Siemens Digital Industries Software. “IoT platforms and edge solutions are being adopted by companies for gathering data from their operating assets for digitalizing their service processes. Cloud-based solutions are being created to address the global reach and scalability challenges.

“From an engineering data perspective, companies could consider leaving the work-in-progress data on-premises and storing released data in the cloud to the level that they need to support their business scenarios,” Grape adds.

Is HPC Necessary?

After deciding whether cloud services are required for a digital thread implementation, the next question development teams should address is the need for high-performance computing (HPC). As with many other aspects of digital thread development, application requirements play a big role in helping engineers answer this question. Often the approach pursued includes a variety of computing platforms.

“What we see with our customers is that they often run many types of simulations, many of which are best served by different types of infrastructure,” says Edward Hsu, vice president of product at Rescale. “This can include general-purpose CPUs, GPU-enabled, high-memory or high-interconnect systems—what people often think of as HPC.”

In addition to the type of simulations being run, the type of compute resource required also depends on the active phase of the digital thread’s lifecycle. “Typically, while authoring the digital thread, engineers operate within a working context,” says Grape.

“There is not a lot of need for additional computing power to support these tasks. The issues of massive data do not really come into play here,” Grape adds. “Consuming a digital thread across disciplines and domains can, however, be more challenging, depending upon the scope of the digital thread required for a business need.”

For example, when the digital thread is being used to improve product design and performance, it may be necessary to gather as-operated field data from customers, as-validated performance data from test labs, as-built yield and manufacturability data from factories and suppliers, and as-designed and design trade-off options from engineering.

“Consolidating and cross-referencing these data will require significant investments in big data systems to capture and unify field, physical test, design simulation, manufacturing and supply chain data,” says Eric Tucker, senior director of HPC and machine learning products at GE Research. “Depending on the complexity of your product and maturity of your design practice, HPC resources may be required.”

When cost becomes the determining factor, market research increasingly indicates that the use of HPC resources quickly provides a good return on investment. A 2018 study conducted by Hyperion Research (hpcuserforum.com/ROI) on economic models linking HPC with return on investment shows that a dollar invested in HPC resources returns approximately 100 times as much as a dollar invested in traditional product design practices.