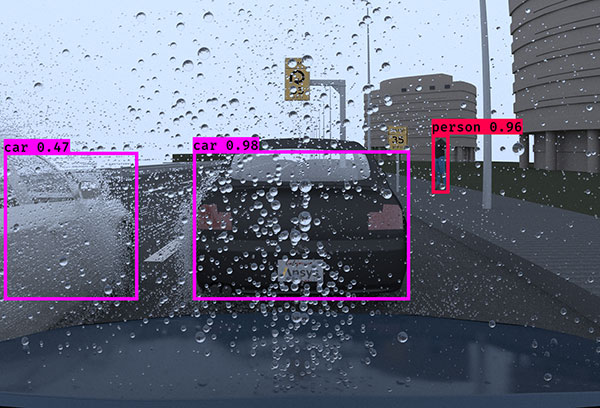

Nature can run major interference when engineers test autonomous driving in bad weather. Image courtesy of Getty.

Latest News

September 2, 2022

Nature presents a major obstacle when engineers test autonomous driving in bad weather. You cannot invoke a snowy, rainy or sunny day on demand; nor can you summon up a thunderstorm at your engineering team’s convenience—at least you can’t in the real world. But you can in the virtual world where you control the pixels. This has now become a growing business segment for simulation software makers.

“Autonomous vehicle makers need to be able to parametrically vary the weather conditions so that they test the full range. It’s not adequate to just test the most extreme cases. That’s where simulation plays a critical role,” says John Zinn, director of global autonomous solutions, Ansys.
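To make the idea of parametric variation concrete, here is a minimal sketch of a scenario sweep. The parameter names and values are hypothetical and do not come from Ansys or any other vendor; the point is only that every combination gets generated, not just the extremes.

```python
from itertools import product

# Hypothetical parameter grid; names and values are illustrative assumptions.
RAIN_RATES_MM_H = [0.0, 2.0, 10.0, 50.0]
FOG_VISIBILITY_M = [50, 200, 1000]
TIMES_OF_DAY = ["day", "dusk", "night"]


def weather_scenarios():
    """Yield one scenario dict per combination of the parameter values."""
    for rain, visibility, time_of_day in product(
        RAIN_RATES_MM_H, FOG_VISIBILITY_M, TIMES_OF_DAY
    ):
        yield {
            "rain_rate_mm_h": rain,
            "fog_visibility_m": visibility,
            "time_of_day": time_of_day,
        }


scenarios = list(weather_scenarios())
print(len(scenarios))  # 4 * 3 * 3 = 36 combinations
```

A full-factorial grid like this grows quickly as parameters are added, so a real test plan would likely sample the space rather than enumerate it.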

Zvi Greenstein, GM of automotive at NVIDIA, points out that collecting data on a hazardous road in bad weather is not only time-consuming but dangerous. “This is where synthetic data generation [SDG] helps fill in the gaps,” he says.

Simulated Sight for the Car

For autonomous cars, sensor-enabled perception is critical to navigation.

“Driving in the rain is challenging, but rain at night is even more challenging. For example, light sources interact with droplets on the lens or windshield to create specular artifacts in your camera system, which can obscure important details, creating challenges for perception systems,” says Zinn.

Ansys’ simulation products for autonomous vehicles (AV) and advanced driver assistance systems (ADAS) include AVxcelerate Headlamp, AVxcelerate Sensors and Ansys Speos for optical simulation.

“In our solution, we have physics-based virtual sensors. When we simulate the camera, we’re measuring the light propagation throughout the scene, how that light is then returned to the camera system and how it’s modified by the rain and bad weather. This is what’s fed into the AV’s perception system,” Zinn explains.

Long known as a graphics processing unit (GPU) maker, NVIDIA has in the last decade redefined itself as a leading supplier of artificial intelligence (AI) hardware and software. For the AV sector, it offers NVIDIA DRIVE Sim, a multisensor simulation platform connected to NVIDIA Omniverse, the company’s virtual world.

“NVIDIA DRIVE Sim’s sensor pipeline incorporates various aspects of real-world sensor subcomponents, including lenses, image sensors, postprocessing and image signal processor (ISP) workflows to replicate real-world behavior,” says Greenstein. “This pipeline allows users to incorporate certain kinds of noise or aberrations at each stage of the process to generate datasets that might be difficult for a perception algorithm.”
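The staged approach Greenstein describes can be pictured with a toy example. The sketch below is not the DRIVE Sim API; the stage functions, their parameters and the one-dimensional "scanline" are all assumptions made for illustration. It shows how a different degradation can be injected at the lens, the image sensor and the ISP stage.

```python
import random


def lens_stage(pixels, rng, blur=0.2):
    """Blend each pixel with its neighbors to mimic a soft lens.

    rng is unused here; it is accepted so every stage has the same signature.
    """
    out = []
    for i, p in enumerate(pixels):
        left = pixels[max(i - 1, 0)]
        right = pixels[min(i + 1, len(pixels) - 1)]
        out.append((1 - blur) * p + blur * 0.5 * (left + right))
    return out


def sensor_stage(pixels, rng, noise_sigma=0.02):
    """Add zero-mean Gaussian noise to mimic image-sensor read noise."""
    return [p + rng.gauss(0.0, noise_sigma) for p in pixels]


def isp_stage(pixels, rng, gain=1.1):
    """Apply a gain, then clamp to the valid [0, 1] intensity range."""
    return [min(max(p * gain, 0.0), 1.0) for p in pixels]


# Hypothetical stage order: lens, then sensor, then ISP.
PIPELINE = [lens_stage, sensor_stage, isp_stage]


def run_pipeline(pixels, seed=0):
    """Run the pixels through every stage with a seeded random generator."""
    rng = random.Random(seed)
    for stage in PIPELINE:
        pixels = stage(pixels, rng)
    return pixels


scanline = [0.0, 0.0, 1.0, 1.0, 0.0, 0.0]
degraded = run_pipeline(scanline)
print(degraded)
```

Seeding the generator keeps each degraded dataset reproducible, which matters when the same scene has to be replayed against a revised perception algorithm.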

According to Nathir Rawashdeh, professor, departments of Applied Computing and Electrical and Computer Engineering at Michigan Technological University (MTU), one of the difficulties to overcome is working with fast-evolving devices.

“Sensor and computing technologies are rapidly evolving and changing in an engineering sense, which requires continuous updating of noise simulation and sensor degradation models to serve the ADAS community of engineers and researchers,” Rawashdeh says.

Synthetic Weather

With deep roots in games and film, NVIDIA integrates physically accurate raytraced visuals into its AV simulation solutions.

“The first step in modeling wet weather is introducing wetness on road surfaces and accurately understanding the physics of light reflection from puddles or wet patches on a road,” Greenstein says. “Modeling multiple sensor modalities, real-world materials, introducing particulate weather effects, including rain and snow, are complex engineering problems that need expertise in rendering, sensing and modeling physical behavior.”

Greenstein adds, “DRIVE Sim makes it possible for AV developers to take a scene and expand to address what-if situations that may be rare or too dangerous to capture in real-life testing. New data generated from these tests can help train and iterate upon deep neural networks [DNNs] and test the software stack.”

Since it’s impractical to collect real-world data to cover the breadth of hazardous conditions the AV will likely encounter, synthetic datasets play an important role.

“Synthetic datasets are programmable, allowing developers to zero in on the edge cases on which neural networks must be trained to operate safely. Specifically, NVIDIA DRIVE Replicator provides hard or impossible ground truth that humans can’t label, including severe weather conditions, edge cases, or even velocity, depth and occlusion,” says Greenstein.

Greenstein cautions that synthetic data is not a replacement for real-world data; rather, it’s a good way to augment it.

“The method can greatly accelerate the development process when used to target specific scenarios,” he points out.

Fidelity to the Real World

Jeremy Bos, associate professor of Electrical and Computer Engineering and faculty advisor to Robotic Systems Enterprise at MTU, believes it’s important to understand the degree of accuracy required for different purposes in simulation-based AV training.

To achieve full realism, “the physics of the interaction between the weather and the sensing system must be modeled,” Bos says.

This includes digitally recreating the characteristics of the rain, dust and snow, down to the particle size, droplet shape and distribution; the cameras or antennas detecting them; and how the two interact. Other items to simulate are cloud coverage, time of day, season and the surface properties of wet, snowy, icy and dusty roads.

“Rainfall and snowfall are generally not uniform. Blowing snow can form coherent structures,” Bos says. This is what the researchers call the “bulk weather medium effect.”

On muddy roads, the AV will likely have to deal with the splashes and kickups from the vehicles ahead. Whereas humans have learned to interpret mud sprays and droplets as particles, the AV could have trouble distinguishing them from solid objects.

“The big mushroom cloud around the back of a truck or an SUV makes the vehicle look bigger than it is for the lidar,” Zinn says. “We can model the full dense cloud and the road spray, including the tire track patterns and the shapes of the sprays they produce, using [computational fluid dynamics (CFD)].”

You can technically simulate all the likely effects on the road, but it might not be practical or even necessary, Bos says. In most cases, depending on what you want to evaluate, representative models with approximations are sufficient. The strategies may include using heuristic rules to characterize the interaction for the sensing systems; ignoring the ambient light; using a lightbox or simplified lighting models; and using uniform weather instead of intermittent weather.

You Say Downpour, I Say Drizzle

Hazardous weather appears in different forms in different regions. Therefore, Bos cautions against overgeneralization.

“For example, winter conditions in Lake Tahoe are not the same as in Michigan; a thunderstorm in Miami is not like a tropical storm in Hawaii or evening rain on Kauai. Local climatology can produce important variations. Simulating a worst case may cover all other conditions. However, the second you start making assumptions and approximations, you’ve lost something,” Bos explains.

Regional differences introduce a classification problem for programming, Zinn points out. “What’s heavy rain in Arizona is a light or medium shower in the Pacific Northwest. So we break these down into absolute metrics, such as inches of falling rain per hour to define the density,” he says.
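A classification keyed to an absolute rate, rather than to a regional label, might look like the sketch below. The threshold values are illustrative assumptions, not figures supplied by Zinn or Ansys, and the function names are hypothetical.

```python
MM_PER_INCH = 25.4

# Assumed thresholds in inches of rain per hour; illustrative only.
LIGHT_MAX_IN_PER_H = 0.10
MODERATE_MAX_IN_PER_H = 0.30


def mm_per_h_to_in_per_h(rate_mm_per_h):
    """Convert a rain rate from millimeters per hour to inches per hour."""
    return rate_mm_per_h / MM_PER_INCH


def classify_rain(rate_in_per_h):
    """Map an absolute rain rate to a label that means the same everywhere."""
    if rate_in_per_h < 0:
        raise ValueError("rain rate cannot be negative")
    if rate_in_per_h == 0:
        return "none"
    if rate_in_per_h <= LIGHT_MAX_IN_PER_H:
        return "light"
    if rate_in_per_h <= MODERATE_MAX_IN_PER_H:
        return "moderate"
    return "heavy"


print(classify_rain(0.05))
print(classify_rain(mm_per_h_to_in_per_h(25.4)))
```

With a rate as the input, a test in Arizona and a test in the Pacific Northwest that both report "heavy" refer to the same physical condition.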

AV makers also need to clearly identify the operational design domain (ODD) of their system, according to Bos. “It can be enough to design and test for one environment, say, Arizona. However, if the weather changes outside of what has been characterized, modeled and tested, the autonomous vehicle cannot operate,” he warns.
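One way to picture Bos’ warning is as an envelope check: the vehicle compares current conditions against the range that was characterized and tested, and declines to operate outside it. The class below is a minimal sketch; its fields, limits and the "Arizona-like" values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalDesignDomain:
    """Weather envelope that has been characterized, modeled and tested."""

    max_rain_in_per_h: float
    min_visibility_m: float
    min_temperature_c: float
    max_temperature_c: float

    def violations(self, rain_in_per_h, visibility_m, temperature_c):
        """Return the reasons the current conditions fall outside the envelope."""
        problems = []
        if rain_in_per_h > self.max_rain_in_per_h:
            problems.append("rain rate above tested range")
        if visibility_m < self.min_visibility_m:
            problems.append("visibility below tested range")
        if not self.min_temperature_c <= temperature_c <= self.max_temperature_c:
            problems.append("temperature outside tested range")
        return problems


# Hypothetical envelope for a system tested only in a hot, dry climate.
ARIZONA_LIKE_ODD = OperationalDesignDomain(
    max_rain_in_per_h=0.3,
    min_visibility_m=200.0,
    min_temperature_c=0.0,
    max_temperature_c=50.0,
)

print(ARIZONA_LIKE_ODD.violations(0.1, 1000.0, 30.0))
print(ARIZONA_LIKE_ODD.violations(0.1, 1000.0, -10.0))
```

An empty list means the conditions sit inside the tested envelope; any entry is a reason for the system to hand over control or stop.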

Antennas in Simulated Rain and Fog

For Cadence, a computational software developer, the focus is on the AV’s antenna.

“Rain, mist and fog are all weather phenomena with different densities and scattering properties that impact signal propagation and thus the performance of these systems. Adding weather effects into the system simulation would be a natural extension to offer to the AV design engineers developing an end-to-end link budget,” says David Vye, senior product marketing manager for RF/microwave products at Cadence.

The company offers Cadence AWR Visual System Simulator (VSS), a software package for RF/wireless communications and radar system design and analysis.

“There are well-known models for rain attenuation, such as ITU-R, Crane and DAH, as well as open-source implementations for them. Furthermore, manufacturers may use models based on their own measurements,” Vye says.
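As a rough illustration of how such a model enters a link budget, the ITU-R approach expresses specific attenuation as a power law of rain rate: attenuation in dB/km equals k times the rain rate in mm/h raised to the power alpha, where k and alpha depend on frequency and polarization. The sketch below is not VSS code. It assumes uniform rain along the path, and the coefficients in the example call are placeholders, not values from the ITU-R tables.

```python
def specific_attenuation_db_per_km(rain_rate_mm_h, k, alpha):
    """Power-law rain attenuation: gamma = k * R ** alpha, in dB/km."""
    if rain_rate_mm_h < 0:
        raise ValueError("rain rate cannot be negative")
    return k * rain_rate_mm_h ** alpha


def two_way_rain_loss_db(rain_rate_mm_h, target_range_m, k, alpha):
    """Radar signals cross the rain twice: out to the target and back.

    Assumes the same rain rate along the whole path (a simplification).
    """
    gamma = specific_attenuation_db_per_km(rain_rate_mm_h, k, alpha)
    return 2.0 * gamma * (target_range_m / 1000.0)


# Placeholder coefficients for illustration only. Real values must be looked
# up for the radar's frequency and polarization.
K_PLACEHOLDER = 1.0
ALPHA_PLACEHOLDER = 0.7

loss_db = two_way_rain_loss_db(25.0, 150.0, K_PLACEHOLDER, ALPHA_PLACEHOLDER)
print(f"two-way rain loss: {loss_db:.2f} dB")
```

The resulting loss is one more term to subtract in the link budget, alongside free-space path loss and any other propagation effects the engineer chooses to model.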

VSS software can incorporate open-source models as well as MATLAB’s model (“Modeling the Propagation of Radar Signals”). The simulation becomes far more complex when AV developers couple the simulated sensor data with other electrical systems and vehicle dynamics, to verify if the input triggers the appropriate deceleration, acceleration or stoppage.

“Cadence AWR Design Environment platform incorporates circuit simulation and system simulation as well as electromagnetic (EM) analysis, such as method of moments (MoM), finite element methods (FEM), and electrothermal analysis from finite element analysis (FEA) and CFD programs,” says Vye.

In less-than-ideal weather, the ADAS needs to analyze the degraded input from the cameras, antennas and microphones to make educated guesses, just as human drivers would. This is where machine learning plays an important role.

“AI can learn patterns in large amounts of perception data and can also be continuously updated via cloud computing using data actively collected from other vehicles on the same road,” Rawashdeh points out.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
