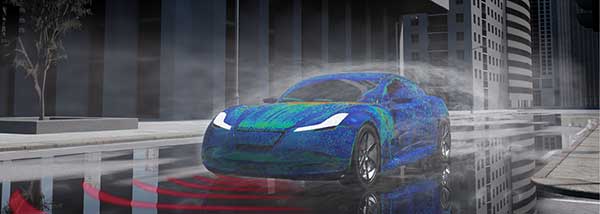

Autonomous vehicle simulations should also be performed in nighttime and other low-light conditions. Image courtesy of Dassault Systèmes.


September 15, 2020

Hats off to those riding shotgun in the self-driving test vehicles operated by Lyft, Uber, Argo AI and other autonomous vehicle (AV) adoption pioneers. With their eyes on the road and hands at the ready, these brave souls are paving the way toward a more efficient future—one where commuters can avoid wasting time behind the wheel each day, where accidents and traffic jams are ancient history and where individual car ownership will likely become obsolete.

Unfortunately, these manual testing efforts are doomed to failure, according to Sandeep Sovani, director of advanced driver assistance systems (ADAS) and autonomy at Ansys.

Sovani suggests that without comprehensive simulation tools like those found in Ansys Autonomy, this glorious future is 26,400 years away.

“That’s how long it will take for traditional road testing to log the 8.8 billion miles needed to make self-driving cars a reality,” he says. “Simulation offers tremendous advantages over the current testing methods and can reduce that by a factor of a hundred or maybe a thousand, which would mean two and a half years to get where we need to be.”
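The quoted figures can be checked with simple arithmetic. The sketch below takes the 8.8 billion miles and 26,400 years at face value and works out the industry-wide road-testing rate they imply, along with the timeline at several speedup factors. The function name is illustrative only. On these numbers, a 1,000x speedup still leaves about 26 years. Reaching the roughly two-and-a-half-year timeline requires a speedup closer to 10,000x.

```python
# Figures from the quote; everything else is plain arithmetic on them.
REQUIRED_MILES = 8.8e9    # miles of testing cited in the quote
ROAD_TEST_YEARS = 26_400  # years of traditional road testing cited

# Aggregate road-testing rate implied by the two quoted figures.
miles_per_year = REQUIRED_MILES / ROAD_TEST_YEARS

def years_with_speedup(factor: float) -> float:
    """Years needed if simulation accelerates testing by `factor`."""
    return ROAD_TEST_YEARS / factor

if __name__ == "__main__":
    print(f"Implied road-testing rate: {miles_per_year:,.0f} miles/year")
    for factor in (100, 1_000, 10_000):
        print(f"{factor:>6,}x speedup -> {years_with_speedup(factor):.2f} years")
```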

This screenshot illustrates the nearly infinite variety of lighting and road conditions with which a fully autonomous vehicle must grapple. Image courtesy of Ansys.

Another and perhaps more important advantage is simulation’s ability to test the scenarios that drivers most want to avoid, such as icy road conditions or collisions with pedestrians and other vehicles. These are dangerous, potentially life-threatening events in the real world; in the virtual one, they’re no big deal. Sovani refers to this as one of simulation software’s superpowers, in that it can go places and do things that no human driver would dare attempt, as well as see things invisible to the human eye.

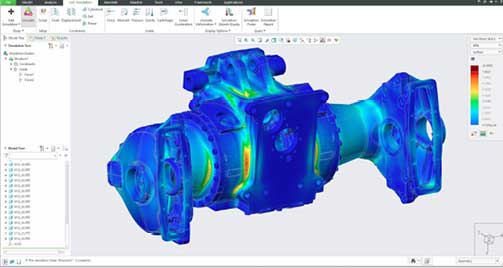

“Simulation allows an engineer to visualize temperature, vibration and mechanical forces, or virtually cut an internal combustion engine in half while it’s operating for a peek inside the combustion chamber,” Sovani says. “None of this is possible in the real world, but in the simulated one, you can go deep inside any object and look at what’s happening. With those insights, it becomes much faster and easier to improve product designs.”

AVs, he explains, must be able to sense, think and act. Somewhat surprisingly, the Uber and Lyft test vehicles described earlier already do a reasonably good job on each of these counts. However, they fall well short of the level needed to shield automakers from legal, and possibly moral, responsibility should their systems fail. Hence the need for simulation software.

“Some AV manufacturers are targeting hundreds of millions of kilometers of driving simulation every two weeks,” says Sovani. “That’s what’s needed to subject these systems to the universe of scenarios they are likely to encounter.”
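To put that target in perspective, the back-of-the-envelope sketch below estimates how many simulated vehicles would have to drive around the clock to log that distance. Two assumptions are not in the article. "Hundreds of millions" is taken as 200 million km, and simulated traffic is assumed to average 50 km/h. The optional `speedup` parameter is a hypothetical factor for simulations that run faster than real time.

```python
# Assumptions (not from the article): "hundreds of millions" is taken as
# 200 million km, and simulated traffic averages 50 km/h.
TARGET_KM = 200e6
PERIOD_DAYS = 14
AVG_SPEED_KMH = 50.0

def concurrent_vehicles(total_km: float, days: float, avg_speed_kmh: float,
                        speedup: float = 1.0) -> float:
    """Simulated vehicles that must run nonstop to cover `total_km` in `days`,
    with each simulation running `speedup` times faster than real time."""
    km_per_vehicle = avg_speed_kmh * speedup * days * 24
    return total_km / km_per_vehicle

if __name__ == "__main__":
    for speedup in (1, 10):
        n = concurrent_vehicles(TARGET_KM, PERIOD_DAYS, AVG_SPEED_KMH, speedup)
        print(f"{speedup:>2}x real time: ~{n:,.0f} vehicles running continuously")
```

Under these assumptions, roughly 11,900 simulated vehicles would have to drive nonstop in real time. At ten times real time, the number drops to about 1,200.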

Running Interference

Automakers face several challenges with sensing, the first of Sovani’s AV requirements, notes Rachel Fu, technical director for R&D strategy for SIMULIA at Dassault Systèmes. She says the sensors used in autonomous driving systems must deliver high electromagnetic performance; fit within the available installation space; be either invisible or able to improve the vehicle’s aesthetics; and comply with electromagnetic compatibility and interference (EMC/EMI) requirements.

“When radar sensors are placed behind bumpers, for instance, the beam can become distorted, resulting in ambiguous object detection,” Fu says. “Electromagnetic simulation helps predict these effects, providing additional optimization opportunities or recommendations for system tuning. Such simulation can also predict sensor behavior in a realistic virtual environment that includes car geometry, roads and highways, accounting for the detection of so-called ghost targets that sometimes occur due to reflections.”
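A simplified geometric model illustrates why reflections produce ghost targets. In the sketch below, the radar sits at the origin and a flat, perfectly reflecting surface such as a guardrail runs parallel to the boresight. For the double-bounce path (radar to wall to target and back the same way), the echo appears to come from the target's mirror image across the wall. This is a textbook single-surface approximation, not how any commercial electromagnetic solver works. The coordinates and function names are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Point:
    x: float  # metres ahead of the radar
    y: float  # metres to the left of the radar boresight

def ghost_from_wall(target: Point, wall_y: float) -> Point:
    """Mirror the target across a flat wall lying along the line y = wall_y.
    For the radar-wall-target-wall-radar path, this is where the echo
    appears to originate."""
    return Point(target.x, 2.0 * wall_y - target.y)

def range_and_bearing(p: Point) -> tuple[float, float]:
    """Range (m) and bearing (degrees, positive = left) from a radar at the origin."""
    return math.hypot(p.x, p.y), math.degrees(math.atan2(p.y, p.x))

if __name__ == "__main__":
    car_ahead = Point(40.0, 0.0)             # real target straight ahead
    ghost = ghost_from_wall(car_ahead, 3.0)  # guardrail 3 m to the left
    r, b = range_and_bearing(ghost)
    print(f"ghost at {r:.1f} m, {b:.1f} deg left")
```

In this example the ghost appears about 40.4 m away and 8.5 degrees to the left, where no object actually exists.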

In addition to electromagnetic performance, soiling (the buildup of dirt, water and debris on sensor surfaces) and wind or road noise are other factors to consider when choosing sensor locations. Designers can either replicate these conditions through extensive road testing or use software with advanced fluid simulation capabilities. Whichever method they choose, they must optimize the number and placement of sensors to minimize both soiling and noise. Fu adds that the company’s SIMULIA simulation software is well equipped to handle this task.

“Another factor affecting the adoption of self-driving vehicles is the ability of passengers to perform activities such as reading or socializing without experiencing motion sickness,” she says. “Because they’re no longer focused on the road, drivers are more sensitive to the quality of the vehicle dynamics and overall riding experience. SIMULIA’s simulation capabilities provide fast, accurate and stable solutions, including flexible chassis and real-time tire modeling, all of which are critical for passenger comfort.”

Simulation Necessity

But is all this simulation really necessary? Waymo recently announced that its self-driving vehicles have logged 20 million miles on public roads in 25 cities. Many new cars offer automated steering, braking and other “super cruise” technology. Does this mean the industry is nearing AV nirvana without investing in these advanced software systems?

We’re not even close, according to Matthieu Worm, director of autonomous vehicles at Siemens Digital Industries Software. Adaptive cruise control systems must pass roughly 150 proving ground tests before approval. AVs, by contrast, must handle a seemingly endless list of scenarios before they can be considered better drivers than their flesh-and-blood counterparts.

“Unlike computers, humans are very capable of making decisions in unexpected, unseen situations,” he says. “Consider an AV in Australia. It’s certainly possible to teach it to brake when a kangaroo jumps in front of the car, but what happens when you take that same car to the United States and it encounters a cow? Humans have no problem differentiating between the two, but it’s still quite easy to fool the relatively crude neural network found in today’s self-driving cars.”

Further, you might get the car to react appropriately in each of these situations, at least until it starts to rain, or a person is walking alongside the cow, or it’s late afternoon and the sunlight hits the camera at just the wrong angle. An AV must learn to behave properly regardless of the circumstances, and it must do so flawlessly, or at least far more reliably than humans.
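The difficulty Worm describes is combinatorial: each new condition multiplies the number of cases to test. The sketch below enumerates every combination of a few scenario dimensions drawn from his examples. The dimension names and values are hypothetical placeholders and do not come from Simcenter Prescan or any other tool.

```python
from collections.abc import Iterator
from itertools import product

# Hypothetical scenario dimensions, loosely based on the examples above.
SCENARIO_SPACE: dict[str, list] = {
    "region": ["Australia", "United States"],
    "obstacle": ["kangaroo", "cow"],
    "weather": ["clear", "rain"],
    "pedestrian_nearby": [False, True],
    "sun_elevation_deg": [10, 45, 90],  # low late-afternoon sun up to overhead
}

def enumerate_scenarios(space: dict[str, list]) -> Iterator[dict]:
    """Yield one dict per combination of values (a full-factorial grid)."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))

scenarios = list(enumerate_scenarios(SCENARIO_SPACE))

if __name__ == "__main__":
    print(len(scenarios), "scenarios")
    print(scenarios[0])
```

Even these five small dimensions produce 48 combinations. With 20 parameters of five values each, a full grid would contain 5**20, or about 9.5 × 10^13, cases. This is why practical tools typically sample or prioritize scenarios rather than test them exhaustively.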

“Simply put, there’s no practical way to develop fully autonomous vehicles without advanced simulation, such as what we offer with Simcenter Prescan, part of our Simcenter portfolio for simulation and testing,” Worm explains.

Smart Cars, Smart Roads

AV development extends well beyond the bumpers. Worm points to the Cooperative Vehicle Infrastructure System (CVIS), a European project that “was funded a couple of years ago with many initial players and academia partners working together on determining the role of infrastructure sensing and vehicle-to-vehicle communication.”

The role of infrastructure has thus far been underestimated, Worm adds, but the automotive design community continues to gain awareness about the added value of infrastructure simulation.

“Simcenter Prescan therefore not only brings on-board sensor simulation capabilities for radar, camera and lidar, but also for 4G and 5G communication and [global navigation satellite system] positioning, to virtually test vehicle to infrastructure communication in challenging conditions,” Worm says.

Bernhard Mueller-Bessler is the managing director of VIRES Virtual Test Drive (VTD) at MSC Software, part of Hexagon Manufacturing Intelligence. He agrees on the need for a testing environment that is both realistic and comprehensive, noting that VTD is a “toolkit for the creation, configuration, presentation and evaluation of virtual environments in the scope of road and rail-based simulation.”

“Think of it as a virtual proving ground, one that includes not just the streets but any number of infrastructure components, other vehicles, traffic conditions and so on,” Mueller-Bessler says. The suite can be combined with SimManager for process and data management and with MSC Software’s Adams Real Time, which he described as a hardware-in-the-loop solution. Used together, these tools are designed to let automakers simulate and test “thousands of vehicles simultaneously.”

What About the Hardware?

Such broad-scale testing requires an immense amount of computing power, however, which is why MSC Software works closely with the likes of Amazon and Microsoft, leveraging their cloud-based systems to provide the necessary hardware.

Despite these capabilities, Mueller-Bessler warns that there’s a delicate balance between acceptable simulation performance and what’s actually needed to make the correct decisions.

“You can throw a lot of details into the car and the surrounding area and therefore visualize things better, but this might not make sense from a cost perspective,” he says.

NVIDIA is a well-known hardware and software pioneer, although its participation in automobile development—autonomous or otherwise—might surprise you. “We’ve been an innovator in the transportation industry for several decades and have numerous partners who are building applications on our [graphics processing units (GPUs)], including all types of simulation,” says Danny Shapiro, senior director of automotive at NVIDIA. “Examples include crash test and wind tunnel simulations, where you’re essentially modeling physics directly on the GPU. Doing so allows designers to iterate faster, make better products and reduce costs by not having to physically build models and place them in a wind tunnel or crash test facility.”

The company is also developing the artificial intelligence needed to operate AVs safely. It does this through NVIDIA DRIVE AGX, which Shapiro described as an end-to-end platform able to efficiently process all of the sensor data the car needs to make split-second decisions. Computer vision systems once required tedious programming and endless lines of static code to recognize something as simple as a stop sign. Modern systems such as DRIVE AGX use deep learning instead.

NVIDIA is enabling the industry to drive billions of qualified miles in virtual reality with powerful NVIDIA DRIVE Constellation. Image courtesy of NVIDIA.

“For instance, we feed the system pictures of stop signs taken from different distances, different angles [and] different weather conditions, and we then repeat this exercise with all kinds of objects,” Shapiro points out. “Over time, the system’s neural nets learn to recognize cars and trucks and pedestrians and bikes and motorcycles—everything that it might encounter in the real world. We then incorporate this same intelligence into DRIVE Constellation, our hardware-in-the-loop simulator, and use it to validate the actual hardware and software used by AV manufacturers. The DRIVE AGX processes the data, plans a safe route and sends acceleration, braking and steering commands, not to an actual vehicle, but back to the simulator. The system thinks it’s on the road even though it’s actually in a data center. This is a much more efficient and safer way to augment on-road testing.”
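The closed loop Shapiro describes works as follows. The simulator produces sensor data, and the system under test returns driving commands. The simulator then applies those commands instead of a real vehicle. The toy sketch below shows that structure in pure Python. `ToySimulator` and `toy_stack` are hypothetical stand-ins for the simulator and the AV computer. They are not NVIDIA DRIVE APIs, and the braking rule (a fixed 6 m/s² deceleration with a 10 m margin) is an assumption chosen for illustration.

```python
from dataclasses import dataclass

BRAKE_DECEL = 6.0  # m/s^2, assumed braking capability
MARGIN_M = 10.0    # assumed safety buffer in front of the obstacle

@dataclass
class SensorFrame:
    time_s: float
    ego_speed_mps: float
    obstacle_distance_m: float  # distance to the stationary obstacle ahead

@dataclass
class Command:
    accel_mps2: float  # positive = throttle, negative = braking
    steer_rad: float   # ignored by this one-dimensional toy simulator

class ToySimulator:
    """Stand-in for the simulator: produces sensor frames and applies commands."""
    def __init__(self, speed_mps: float = 20.0, obstacle_m: float = 80.0, dt: float = 0.1):
        self.t, self.v, self.gap, self.dt = 0.0, speed_mps, obstacle_m, dt

    def sense(self) -> SensorFrame:
        return SensorFrame(self.t, self.v, self.gap)

    def step(self, cmd: Command) -> None:
        self.v = max(0.0, self.v + cmd.accel_mps2 * self.dt)
        self.gap -= self.v * self.dt
        self.t += self.dt

def toy_stack(frame: SensorFrame) -> Command:
    """Stand-in for the AV computer: brake once the obstacle is inside
    the stopping distance plus a safety margin."""
    stopping_m = frame.ego_speed_mps ** 2 / (2 * BRAKE_DECEL)
    brake = frame.obstacle_distance_m < stopping_m + MARGIN_M
    return Command(accel_mps2=-BRAKE_DECEL if brake else 0.0, steer_rad=0.0)

def run_closed_loop(sim: ToySimulator, stack, max_steps: int = 1000) -> ToySimulator:
    for _ in range(max_steps):
        frame = sim.sense()
        if frame.ego_speed_mps == 0.0 or frame.obstacle_distance_m <= 0.0:
            break
        sim.step(stack(frame))  # commands go back to the simulator, not a real car
    return sim

if __name__ == "__main__":
    sim = run_closed_loop(ToySimulator(), toy_stack)
    print(f"stopped at t={sim.t:.1f} s with {sim.gap:.2f} m to spare")
```

In a real hardware-in-the-loop rig, `toy_stack` would be replaced by the production computer and software. The rendered sensor data would then be far richer than a single distance value.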

Time to Market

Jesse Blankenship, senior vice president for advanced development at PTC, views it differently. He says the tools needed for AV design—and even the hardware and software within the vehicles—are nothing new. Companies have designed and manufactured increasingly smart, sensor-equipped, self-driving airplanes, mining equipment, spacecraft, machinery and more for decades; the call for Level 5 (full driving automation) is just forcing automakers to up their game.

Beyond simulation tools, competing in the AV market will also require the next generation of design and manufacturing software.

“Setting aside the role of a sensor and the data it will collect, think for a minute about the mechanics behind that sensor’s placement, and how it’s attached to the vehicle,” he says. “If you mount it in a zone of plastic deformation, it’s quite likely that the data you receive from that sensor will never be accurate. You need to be able to simulate conditions like these in your products before you manufacture 10,000 of them.”
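Simple geometry shows why a shifted mount matters. If a deformed bracket rotates a forward-facing sensor by a small angle, every detection is displaced sideways by the range multiplied by the tangent of that angle. The function below is a hypothetical illustration that assumes a rigid angular misalignment and ignores every other error source.

```python
import math

def lateral_error_m(range_m: float, misalignment_deg: float) -> float:
    """Sideways displacement of a detection at `range_m` when the sensor's
    boresight is rotated `misalignment_deg` away from its calibrated direction."""
    return range_m * math.tan(math.radians(misalignment_deg))

if __name__ == "__main__":
    for deg in (0.5, 1.0, 2.0):
        print(f"{deg} deg at 100 m -> {lateral_error_m(100.0, deg):.2f} m")
```

A 1-degree error at 100 m shifts an object by about 1.75 m. That is roughly half the width of a typical 3.7 m highway lane, enough to place a vehicle in the wrong lane.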

Finite element analysis continues to be an integral part of the design process, for autonomous and human-operated vehicles alike. Image courtesy of PTC.

Mark Fisher, senior director in PTC’s CAD product management group, also notes the need for more automated software tools, ones with generative design capabilities that serve to accelerate the design process, and topology optimization to help reduce vehicle weight and streamline manufacturing. Both will be required in this age of continuous product development, where the traditional years-long design cycles are compressed into months or even weeks.

“Whoever gets to market first wins, something that’s true for autonomous and traditional cars alike,” Fisher says. “We [at PTC have] introduced a number of different technologies to support this need, real-time simulation among them. This allows designers to speed up and improve the iterative process, and also reduces some of the downstream costs, such as sending a model out for design analysis and evaluation. This is typically an expensive [process] using very expensive software, so you don’t want to learn a few days into it that you put the wrong screw somewhere. That’s just one small example, but the point is that advanced CAD capabilities like those in Creo save time, whatever it is you’re working on.”


About the Author

Kip Hanson writes about all things manufacturing.
