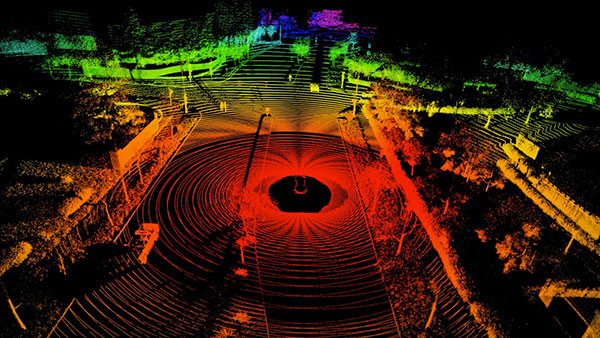

NVIDIA DRIVE Sim Replicator allows you to generate synthetic data based on real-world data for autonomous vehicle training. Image courtesy of NVIDIA.

June 2, 2023

For autonomous vehicle (AV) development, the gold standard is real-world data, collected from actual cars on the road. But there are practical constraints to how much real data can be collected, due to the time and cost involved. This is especially true with accidents and collisions, which cannot be repeated without endangering the vehicle or the human subjects.

“Safety critical situations, like accidents, are a low probability in real life, so if you test your autonomous vehicle in the real world, the car doesn’t have enough chances to experience these situations,” says Henry Liu, University of Michigan’s professor of civil engineering and director of Mcity, a public-private transportation and mobility research partnership. (For more on Liu’s work and Mcity, read “Seeking Misbehaving Virtual Drivers” in this issue.)

One solution to the dilemma is to use synthetic data, spawned from real-world data using artificial intelligence (AI) programs. But the data is valuable only if it matches probable scenarios in reality. We spoke to the experts to understand the rules behind creating synthetic data for AV training.

Realistic Fake

AI hardware and software maker NVIDIA’s offerings to the automotive sector includes DRIVE Sim, a simulation platform for running large-scale, physically accurate multi-sensor simulations. The software runs in Omniverse, NVIDIA’s immersive 3D simulation environment. Part of the Omniverse platform is Omniverse Replicator, which generates synthetic data.

“For synthetic data to be useful, it has to be realistic enough that when the AV system is learning, it can transfer its learning to the real world,” says Matt Cragun, senior manager, automotive vehicle simulation at NVIDIA. “To train AI systems that run in AVs, data is presented together with the ground-truth information so the software can ‘learn.’ This data can be from real-world sources or synthetic from a simulator, such as NVIDIA DRIVE Sim.”

Cragun outlined the three main areas where AV developers tend to rely on synthetic data:

- Data has not been collected, but is known to exist (a phenomenon Cragun refers to as “the long tail of perception”);

- Data has been collected, but is not labeled due to factors such as occlusions or blur;

- Data cannot be collected by sensors (data such as velocity and direction).

The challenge in generating synthetic data, Cragun points out, comes from the difference in how a pixel appears on a real camera versus a simulated camera.

“The renderer, sensor model, fidelity of 3D assets and material properties can all contribute to this,” says Cragun. “To narrow the appearance gap, NVIDIA DRIVE Sim uses a path-tracing renderer to generate physically accurate sensor data for cameras, radars and lidars, and ultrasonics sensors. These details even include high-fidelity vehicle dynamics, which are important because, for example, the movement of a vehicle during a lidar scan impacts the resulting point cloud. Additionally, materials are simulated in DRIVE Sim for accurate beam reflections.”

Realistic Complexity

In its blog post titled “Simulating Reality: The Importance of Synthetic Data in AI/ML Systems for Radar Applications,” Ansys pointed out, “For the purposes of training and testing AI/machine learning (ML) models, synthetic data has many potential benefits over physical data. Firstly, you need to label and sanitize real-world data before use, but this is inherent with synthetic data and simulation.”

Ansys’ recommended approach is not to rely entirely on synthetic data but to use it to augment the real-world data so that AI/ML algorithms have a dataset large enough to analyze and draw inferences from.

“With simulation, you can easily recreate dangerous or rarely occurring situations to fill the gaps of physical testing. In this way, synthetic data both complements and augments real-world testing by increasing sample size and providing unique training opportunities that are not possible with real-world data alone,” Ansys authors wrote.

Simulation programs running on algorithms will always be limited by what can pragmatically be computed with the resources available. This often leads to a reduction or simplification of reality.

“For example, the real world contains dirty roads, dented cars, and emergency vehicles on roadsides, which all must be reproduced in simulation. Another important factor is the behavior of actors, such as traffic and pedestrians,” says Cragun. “We can rapidly manipulate the scene using a capability called domain randomization and place assets in a context-aware manner and then change the appearance, lighting [and] weather to match real-world conditions.”

Similarly, Ansys suggested using simulation to increase the variety of scenarios based on captured real-world data.

“The number of variables from driving conditions to roadways are nearly limitless. Simulation offers a major advantage here by not only making it possible to virtually test countless conditions but by generating enough synthetic data to complement and maximize physical data,” the company said. Ansys offers, among others, AVxcelerate, a simulation program to virtually experience sensors and their performance.

Fidelity Rules

If you’re going to generate synthetic data, it’s also important to understand the gaps and weaknesses in your source data. “If most of the data was collected in daytime conditions, an algorithm trained on that dataset may not perform as well during nighttime or low-light conditions,” says Cragun.

The mix of synthetic to real data is an important factor. Too much synthetic data could result in diluting the reliability of the real-world data.

“We performed a study comparing different ratios of real and synthetic data in the training dataset for a pedestrian-detection task. In this case, a ratio of 20:80 or 30:70 of synthetic to real data consistently showed performance improvements across various dataset sizes when compared to datasets made up entirely of real data. However, this ratio differs for each algorithm and dataset of interest; thus, fine-tuning is necessary,” says Cragun.

More Ansys Coverage

More NVIDIA Coverage

Subscribe to our FREE magazine, FREE email newsletters or both!

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.

Follow DE