When Ansys developed Discovery Live for real-time early design exploration, it brought together three separate technologies. The first was SpaceClaim, a direct-editing CAD tool used for faster 3D modeling. Next, it borrowed heavily from its portfolio of finite element analysis (FEA) and computational fluid dynamics (CFD) solutions. Finally, it wrote new code to capitalize on the observation that graphics processing unit (GPU)-based computation was increasing in speed at a much faster rate than CPU computation.

“Five years ago we were noticing Moore’s law was declining on CPUs but accelerating on GPUs,” says Mark Hindsbo, vice president and general manager for the Design Business Unit at Ansys. “We got a speed boost of up to 1000x on our algorithms,” when they integrated GPU support into the Ansys Discovery Live prototype. “It allowed a real-time paradigm” for simulation as part of the initial design exploration.

“Whenever customers experience a real-time simulation for the first time they scarcely believe it is real,” adds Greg Brown, product management fellow at PTC. “But they dig in and see it has actually solved the problem they are used to waiting minutes or more for.”

Ansys Discovery Live was a breakthrough for the industry when it first shipped in 2017. Today, simulation and analysis products from several vendors support GPU technology. PTC, for example, sells a version of Discovery Live and has also adapted parts of its existing CAE portfolio to take advantage of GPU acceleration. The switch is breaking down the traditional workflow of analyzing one model at a time.

“Now we have an iterative approach, where one question leads to another,” notes Hindsbo.

The GPU was originally designed to provide a performance boost for technical software. In the early days of the PC, there was a wide variety of graphics cards, some of which only worked in monochrome. Each graphics card required a unique software driver for each software product it worked with.

When the industry standardized on Windows as the de facto operating system, hardware companies were free to focus on performance. NVIDIA and AMD became the two main survivors of an industry shakeout.

As the market consolidated, GPU vendors started adding end-user programmability. When NVIDIA held its first developers conference, there were presentations from a wide range of scientific, technical and entertainment domains, all focused on the ability to run graphics-intensive applications faster. But for the past three years, NVIDIA developer conferences have been dominated by applications for artificial intelligence (AI), machine learning use cases and advanced simulation and analysis, where fast graphics are only one part of the benefit.

Today, GPUs contribute far more than visualization and rendering capabilities. Though CAD engines are based on single-threaded serial processing, most CAD vendors are finding ways to use GPU power to improve overall performance.

“Naturally, graphics and visualization is the area where users will benefit from having a GPU—large model rendering is greatly enhanced,” says PTC’s Brown. “Today it is safe to say GPU-enabled solvers have opened the door to real-time simulation.”

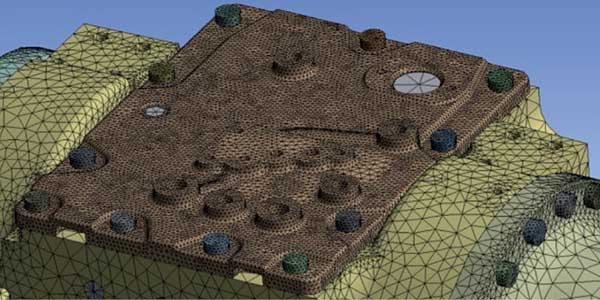

Not all simulations can be formulated to fully leverage a GPU-based process, Brown notes. But with the rise of voxel-based solvers, “suddenly real-time, or at least ‘interactive time’ is within reach.”
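To see why a voxel-style grid suits a GPU, consider a minimal sketch of a Jacobi relaxation on a small regular grid. This is a generic textbook stencil, not the algorithm used by any product named here; the function names and the hot-top-edge test plate are assumptions made for illustration. Each cell is updated only from the previous sweep's values, so every cell in a sweep could in principle be computed at the same time.

```python
def jacobi_step(grid):
    """One Jacobi sweep over a 2D grid of temperatures.

    Every interior cell becomes the mean of its four neighbours, read
    from the OLD grid only. No cell depends on another cell's new value,
    which is what makes the sweep easy to spread across many cores.
    """
    rows, cols = len(grid), len(grid[0])
    new = [row[:] for row in grid]  # boundary cells are copied unchanged
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            new[i][j] = 0.25 * (
                grid[i - 1][j] + grid[i + 1][j] + grid[i][j - 1] + grid[i][j + 1]
            )
    return new


def solve(grid, sweeps):
    """Apply a fixed number of Jacobi sweeps (no convergence check)."""
    for _ in range(sweeps):
        grid = jacobi_step(grid)
    return grid


def hot_top_plate(n, hot=100.0):
    """Hypothetical test case: n x n plate, top edge held at `hot`, rest at 0."""
    grid = [[0.0] * n for _ in range(n)]
    grid[0] = [hot] * n
    return grid


if __name__ == "__main__":
    for row in solve(hot_top_plate(5), sweeps=50):
        print(" ".join(f"{value:6.2f}" for value in row))
```

On a GPU the two inner loops would typically be replaced by one thread per cell. The pure-Python loops here only show the dependency pattern, not the performance.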

Brown says his work laptop, equipped with an NVIDIA RTX 2080, is able to “power generative designs that are basically interactive. I can easily watch the progression and make necessary adjustments to get the desired outcome. Compared to the hardware and software of just 10 years ago this is a quantum leap forward.”

GPUs provide an outlet for algorithms to run orders of magnitude faster in some cases, but they can only do this with the right kinds of problems.

“[These are] things that are ‘data parallel’ as opposed to ‘task parallel,’” Brown says. “If things are inherently sequential then GPUs offer little or no benefit over a CPU.” He says a “few specific operations” in PTC Creo are currently multi-threaded, “but this typically leverages a relatively small number of threads, which can be executed using multi-core CPUs rather than the massive parallel capabilities of a GPU.”
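The distinction Brown draws can be illustrated with a small sketch. The first function below is data parallel: the same calculation is applied to every element, and no element needs another's result. The second is inherently sequential: each step needs the previous one. The spring-energy formula, the function names and the sample numbers are assumptions chosen for illustration, not code from any CAD product.

```python
from concurrent.futures import ThreadPoolExecutor


def strain_energy(stiffness, displacement):
    """Energy stored in one linear spring element: 0.5 * k * u^2."""
    return 0.5 * stiffness * displacement ** 2


def energies_data_parallel(stiffnesses, displacements, workers=4):
    """Data parallel: one independent calculation per element.

    The work can be handed to any number of workers in any order and
    the answer is the same. A GPU applies this idea with thousands of
    threads; the thread pool here only demonstrates the independence.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(strain_energy, stiffnesses, displacements))


def time_march(initial, steps, growth):
    """Inherently sequential: step n cannot start until step n-1 is done."""
    value = initial
    history = []
    for _ in range(steps):
        value = value + growth * value  # depends on the previous value
        history.append(value)
    return history


if __name__ == "__main__":
    print(energies_data_parallel([2.0, 4.0], [1.0, 3.0]))
    print(time_march(1.0, steps=3, growth=1.0))
```

Adding workers can shorten the first function's run time on suitable hardware, but no number of workers can shorten the dependency chain in the second.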

PTC’s Onshape, the first pure-cloud CAD solution, renders the screen using WebGL, which can use the GPU.

“Certainly there are other areas of research we are actively pursuing, and we do expect there will be more areas of our products that get the GPU treatment,” Brown adds.

Today’s GPUs offer fast parallel calculation and an abundance of memory. Sometimes the memory boost is as important as the thousands of small processor cores. This one-two punch will serve GPUs well as computation becomes even more distributed.

“I’m a big believer [that] computing will be on the edge and in the cloud,” notes Ansys’ Hindsbo. To not be perceived as slow, distributed systems must have response times of 50 ms or less. “It is hard to go back and forth to a data center in less than 50 ms,” says Hindsbo. “A high-speed train or a self-driving car needs to answer the bell to ‘Shall I brake?’ in real time. To make decisions faster than 200 ms becomes tough with a cloud-based approach.”
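A back-of-the-envelope calculation shows why a round trip to a distant data center eats into such a budget. The sketch below assumes light travels through optical fiber at roughly 200 km per millisecond (about two-thirds of its speed in a vacuum) and treats that as a lower bound; the distances, compute times and network overhead figures are hypothetical, and real networks add routing and queuing delays on top.

```python
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber


def round_trip_floor_ms(distance_km):
    """Best-case propagation delay to a data center and back."""
    return 2.0 * distance_km / FIBER_KM_PER_MS


def fits_budget(distance_km, compute_ms, overhead_ms, budget_ms=50.0):
    """Return (total latency in ms, whether it fits the response budget).

    compute_ms and overhead_ms are illustrative inputs, not measurements.
    """
    total = round_trip_floor_ms(distance_km) + compute_ms + overhead_ms
    return total, total <= budget_ms


if __name__ == "__main__":
    # Hypothetical cases: a regional and a far-away data center.
    print(fits_budget(1000, compute_ms=20, overhead_ms=15))
    print(fits_budget(3000, compute_ms=20, overhead_ms=15))
```

Under these assumed numbers the nearer site stays inside a 50 ms budget and the farther one does not, which is the kind of trade-off that pushes time-critical decisions toward the edge.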

CAD vendors are increasingly taking advantage of artificial intelligence code alongside traditional deterministic code, Hindsbo notes.

“An AI algorithm will use the CPU to set up a specific simulation,” Hindsbo says, and the GPU then runs the problem.

One unexpected benefit of using GPUs for engineering analysis is a lower cost of running the software. CAE products commonly charge for usage based on how many CPU cores are running the software.

“They charge by the CPU core but not on the GPU, so from a licensing perspective it is a free speed boost,” notes Rod Mach, principal at TotalCAE, an IT consulting service that specializes in engineering applications. “So our customers use GPU for speed-up without a licensing penalty on their very large models.”

“Not every model or application will benefit” from moving the computation to GPUs, adds Mach. “But it is definitely becoming more common in the implicit realm.” Dassault Systèmes Abaqus, for example, “needs a teraflop and several million degrees of freedom before it is worthwhile to pack up the problem for the GPU.”

TotalCAE runs high-performance computing (HPC) clusters for some clients, and also uses cloud services as needed. But even single-workstation simulation runs can benefit from the GPU boost. “If you only have a few cores—eight or less—adding GPU is a turbo boost,” notes Mach. “It will make a difference on smaller jobs. Every hour counts.”

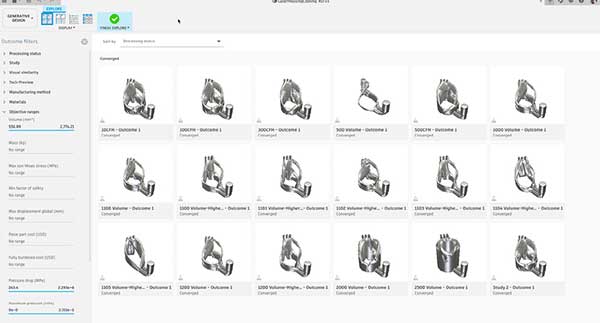

Autodesk is using GPU compute in the cloud for its generative design functionality in Fusion 360. “Generative design is a high-compute workload,” says Brian Frank, a senior product manager at Autodesk. “To make it efficient—for both the customer and for Autodesk—we use a large-scale computer.”

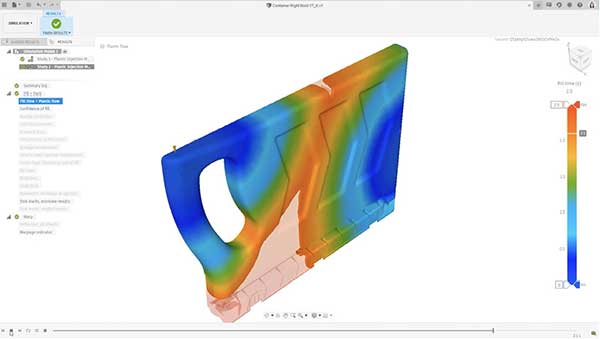

The use of both local and cloud-based GPUs helps Autodesk Fusion 360 run common simulations with near real-time results. Image courtesy of Autodesk.

When Fusion 360 sends a job to the cloud it can be either CPU-only or take advantage of GPUs. “When using GPUs, we are talking about a 30 percent to 50 percent speed-up per outcome,” says Frank. “Scale that across hundreds of outcomes, and it is a really significant difference. It is the difference between a couple of hours per outcome versus days” for non-GPU compute outcomes.

Frank says Autodesk customers appreciate the democratization effect GPU use has on simulation, which provides access to large-scale compute resources in affordable small segments.

“Leveraging the power of the GPU is really freeing,” Frank says. “We get to match the right technology to the job. We don’t have to wait for our customers to purchase the latest hardware. Instead of only 5 percent of our customers having high-end resources, they all have access to it.” Whether the user is on an older Mac or Windows computer, a tablet or the latest workstation, “they still get the benefit” of GPUs in the cloud.

Frank says Autodesk is working to extend the value of GPU-based analysis beyond generative design, which is an FEA problem.

“We are working on a new electronics cooling module leveraging the GPU,” as well as other analysis problems that lend themselves to the kind of heavy parallel computation best suited to GPUs, Frank explains.

Looking ahead, software vendors know demand for GPU-based analysis will continue to grow. The question becomes where to focus development efforts.

“Some apps need it more than others,” says Hindsbo. “It might be in some cases that we write for both GPU and CPU. A fluid algorithm might work better in the GPU, while a related set of algorithms for turbulence work better on a CPU. One can accelerate the other.”


Randall S. Newton is principal analyst at Consilia Vektor, covering engineering technology. He has been part of the computer graphics industry in a variety of roles since 1985.
