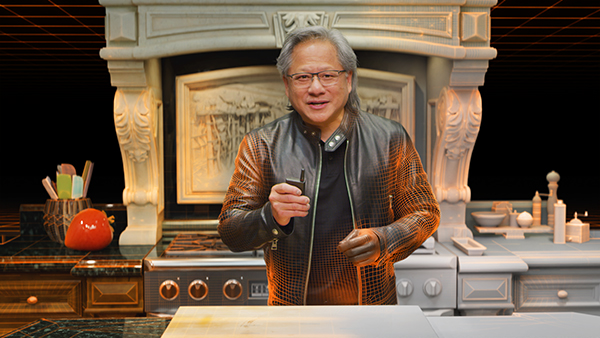

The NVIDIA Creative Team digitally replicated CEO Jensen Huang's kitchen in Omniverse for the GTC keynote presentation. Image courtesy of NVIDIA.

SIGGRAPH 2021: NVIDIA Reveals The Secret Behind Its Keynote

GPU Maker NVIDIA Built Virtual Models and Worlds in Omniverse to Create Keynote

August 11, 2021

When the pandemic struck last year, SIGGRAPH, like many other trade shows, made the painful but necessary decision to host a virtual event instead. This year, with the emerging Delta variant raising alarms and forcing many to postpone their business trips once more, SIGGRAPH is again hosting the event virtually.

The 48th annual graphics and animation event kicked off this week (August 9-13), featuring keynote talks by Dr. Kate Darling, research specialist at the MIT Media Lab, on the nature of human-robot interactions; Hany Farid, a professor at the University of California, Berkeley, on the danger of deepfake technology; and more.

As people minimized social contact and retreated indoors, digital worlds emerged to fill not only the emotional void but also some practical functions. "Building the Open Metaverse," a session jointly hosted by Marc Petit, VP and General Manager of Unreal Engine at Epic Games, and Patrick Cozzi, creator of 3D Tiles and CEO of Cesium, anticipates the need for an open immersive network for gaming, social interaction, and enterprise collaboration.

In "Connecting in the Metaverse: The Making of the GTC Keynote," GPU maker NVIDIA reveals how it used its Omniverse collaboration software to build digital twins of the NVIDIA DGX Station, CEO Jensen Huang's kitchen, and Huang himself.

From PowerPoint to Omniverse

During GTC 2020 in May of last year, as he appeared in a recorded keynote for the virtual event, NVIDIA CEO Jensen Huang quipped, "Our first kitchen keynote." Unbeknownst to him, it wouldn't be the last. This April, the GPU computing event once again went virtual. But this time, Huang's kitchen and the CEO himself also appeared as richly detailed 3D models.

"In the past we used PowerPoint to create the slides. This year, our slides are virtual worlds, completely created and rendered in Omniverse," said Rev of NVIDIA Engineering. Rob of NVIDIA Creative described the effort to create more than 100 slides as "a beast."

Featuring a skeleton hand picking up a cup of red liquid, water sloshing in a tank, cloth dropping onto a kettle, and waves crashing into a boat, the presentation took full advantage of the simulation tools in PhysX 5, NVIDIA's physics engine and part of Omniverse. According to the presentation, some assets were prepared in Maya, then assembled in Omniverse. For the animated sequence of the DGX Station, the creative team had three weeks to transform the CAD model into a cinematic.

“We had only so much time to build, refine, and iterate, so we set up the entire scene in Omniverse,” said Jason, NVIDIA Creative.

How to Animate a CEO

For parts of the cinematic, the team used photogrammetry as the basis for a coarse model of NVIDIA CEO Jensen Huang's kitchen. Modelers then added more detail for realism, down to the screws holding the sockets on the wall and the graphics on the oil cans. Kevin of NVIDIA Creative estimated that the scene includes 6,000 to 8,000 objects, depicted in hundreds of millions of polygons.

The AI-based human model of Jensen was built with minimal data from the busy CEO himself. Jensen was scanned inside a portable truck equipped with 3D cameras. The resulting digital model's facial performance was driven by NVIDIA software that can animate a 3D human model from an audio clip. To add realism to the footage, the NVIDIA video team used a technique that maps real photographs of Jensen onto the 3D model's facial movements. The virtual Jensen's body movements were driven by motion-capture data.

For the product shots, the goal was to faithfully represent the industrial design team's aesthetic vision. GPU surfaces that have diffuse finishes in real life were rendered with the same diffuse quality in the virtual model, and chips with reflective materials were rendered to show the same reflective effect. The project exemplifies the tight collaboration among NVIDIA's Creative, Engineering, and Research teams.

NVIDIA has also announced a significant expansion of Omniverse features. For details, read the article from the Advanced Product Development Resource Center (sponsored by Dell and NVIDIA).

Intel Highlights CPU-Based Rendering

SIGGRAPH 2021 is sponsored by Intel, NVIDIA, and Unity, among others. Intel led the session titled "Get Powerful CPU Rendering with Arnold, Optimized by Intel." In it, Fred Servant and Thiago Ize from Autodesk Arnold shared recent code optimization efforts to improve CPU rendering, including a sneak peek at the AI-based Intel denoiser.

AI-driven denoisers that speed up rendering are also available for GPUs. For more, read about NVIDIA's version of the tool here.

In “Advantages of Powerful CPU Rendering with Intel,” another Intel-led session, Marc Potocnik, designer and founder of studio renderbaron, discussed the use of CPU-based rendering for professional workloads.

SIGGRAPH organizers announced that SIGGRAPH Asia 2021 will be held at the Tokyo International Forum as a hybrid event, set for December 14-17.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.